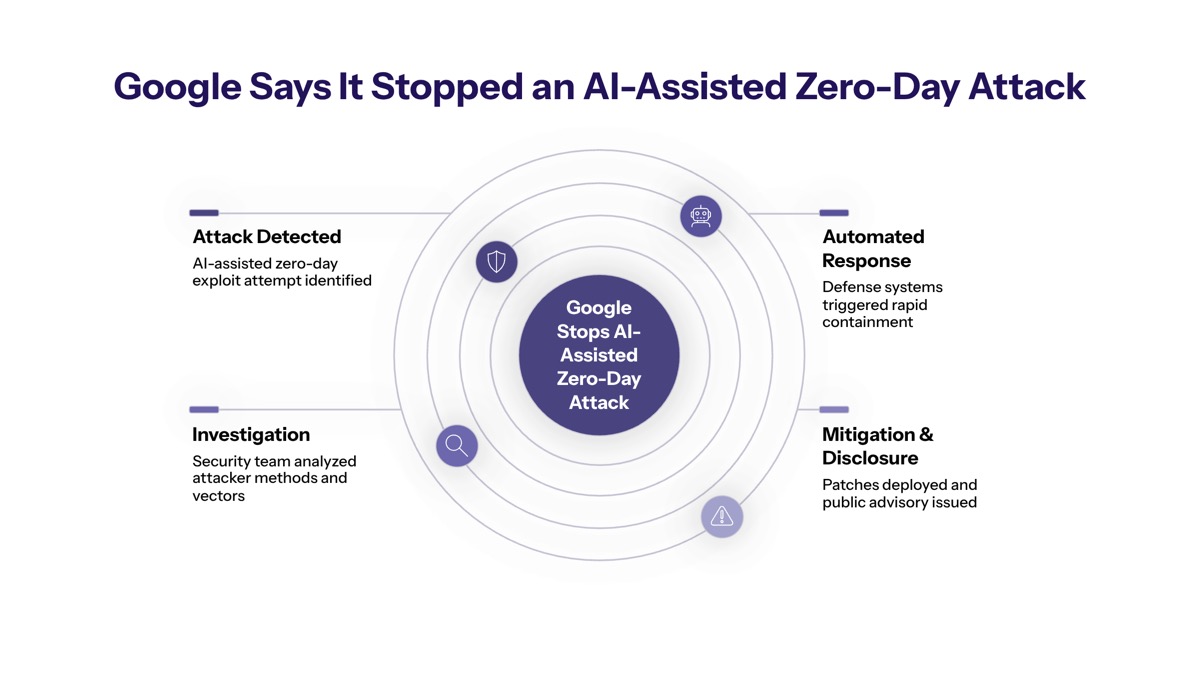

Google Says It Stopped an AI-Assisted Zero-Day Attack

In May 2026, the Google Threat Intelligence Group (GTIG) announced what it described as the first real-world zero-day exploit developed with the help of artificial intelligence, a turning point for cybersecurity. Google found the exploit before attackers could use it in a mass exploitation event, which underscores the value of proactive security measures as threat actors increasingly use AI to automate reconnaissance and vulnerability discovery.

This article covers the incident details surrounding Google’s detection, the technical implications of AI-assisted exploitation, and defensive strategies for enterprise security teams. CISOs, security architects, and technology leaders responsible for protecting enterprise systems will find actionable intelligence on how this incident reshapes threat modeling and defensive priorities. The shift from experimental AI concepts to live, AI-generated zero-days marks a significant change in the cyber threat landscape that demands immediate attention.

Google’s Threat Intelligence Group identified and stopped a criminal threat actor’s planned operation, which relied on a zero-day exploit believed to bypass two-factor authentication on a popular open source system administration tool. Google describes it as the first documented case in which the evidence indicates AI assistance in both vulnerability discovery and exploit development.

Key outcomes readers will gain from this article:

Understanding the timeline and technical specifics of the first AI-assisted zero-day detection

Insight into how AI large language model capabilities accelerate exploit development

Knowledge of Google’s detection framework and behavioral analysis techniques

Actionable enterprise defense strategies against AI-enhanced threat activity

Practical implementation steps for security assessment and modernization planning

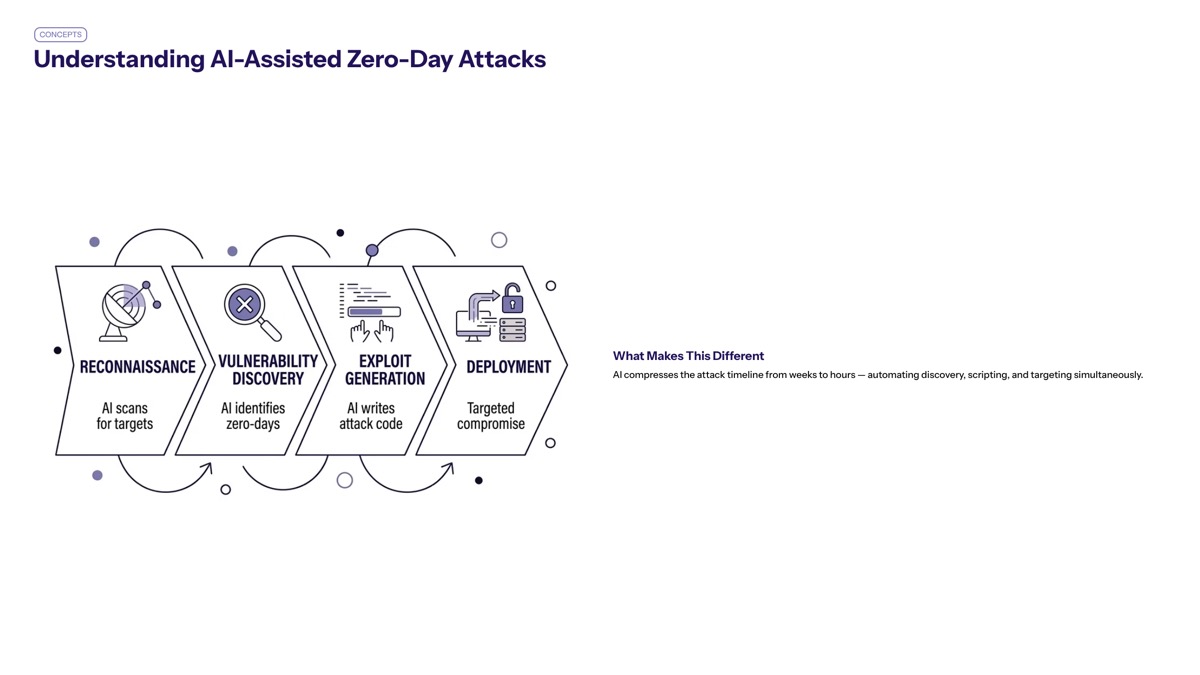

Understanding AI-Assisted Zero-Day Attacks

A zero-day attack exploits a software or hardware vulnerability that is unknown to developers, meaning no patch exists when attackers strike. Historically, discovering zero-day vulnerabilities required elite human engineering and months of research, making such exploits rare and valuable commodities in the threat landscape. The integration of AI tools into this process fundamentally alters both the economics and velocity of cyber threats.

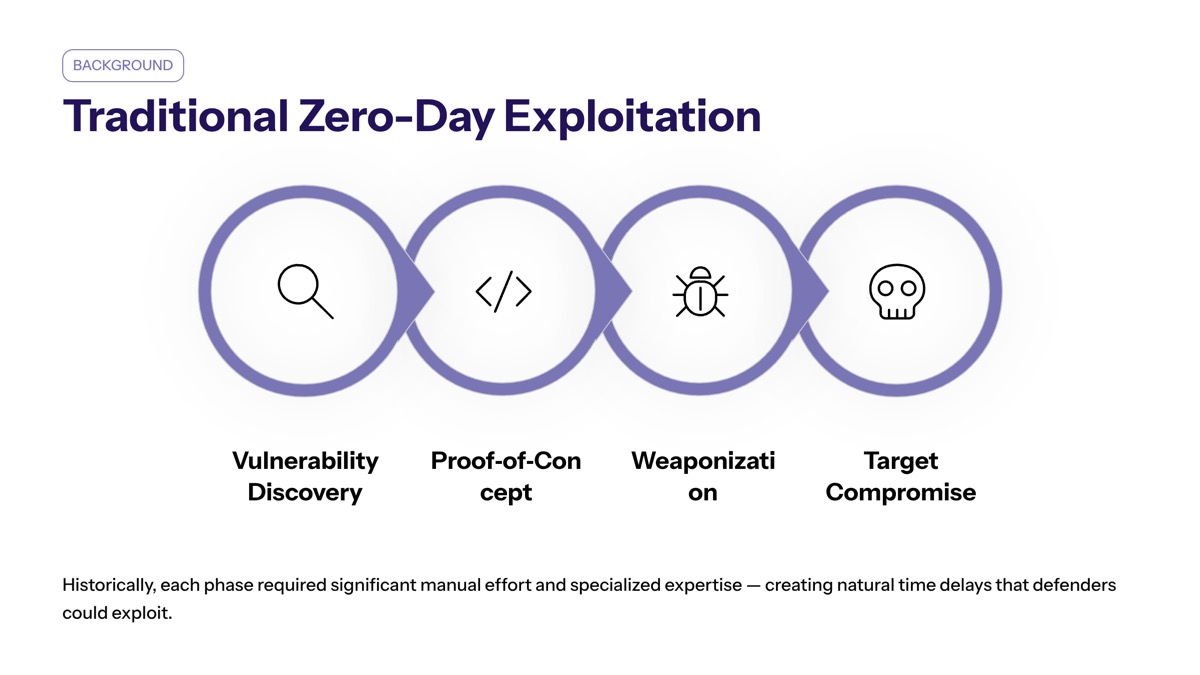

Traditional Zero-Day Exploitation

Zero-day vulnerability research traditionally demanded specialized expertise in reverse engineering, code auditing, fuzzing techniques, and manual trial-and-error analysis. Security researchers would spend months examining target codebases, identifying potential weaknesses, and crafting reliable exploits. This resource intensity naturally limited the volume of zero-day exploits deployed in the wild, creating a barrier that only well-funded threat actors or state-sponsored groups could consistently overcome.

The manual process involved understanding specific runtime environments, debugging edge cases, and iteratively refining attack code until achieving reliable exploitation. This investment of time and specialized knowledge meant that zero-day attacks remained primarily the domain of advanced persistent threats and sophisticated criminal organizations.

AI-Enhanced Threat Capabilities

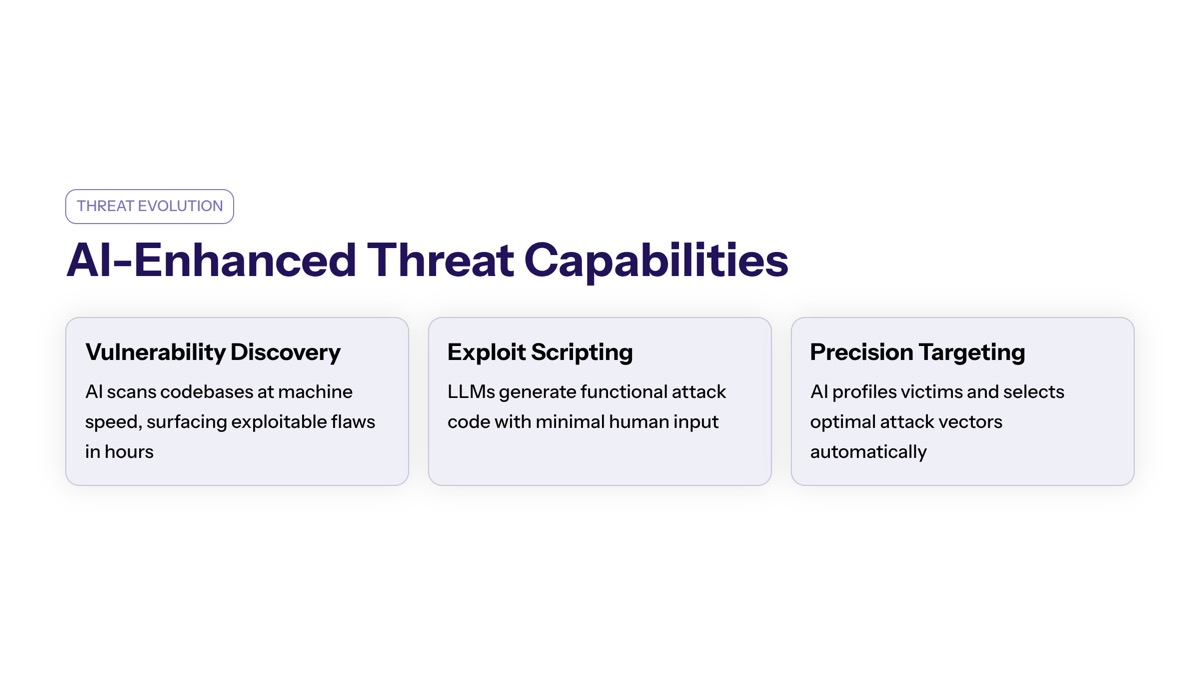

AI models can analyze vast amounts of code to find complex vulnerabilities much faster than human researchers. Large language models demonstrate particular effectiveness at identifying logic-level flaws—such as authentication bypass routes or exception handling errors—that traditional static analysis tools often miss. Threat actors are increasingly using AI to discover and exploit zero-day vulnerabilities, significantly speeding up their operations compared to traditional methods.

Attackers can use AI to scan for, analyze, and exploit vulnerabilities faster than defenders can patch them. Cybercrime threat actors are using AI models to automate the discovery and weaponization of vulnerabilities, which significantly lowers the barrier to developing sophisticated exploits. AI also lets threat actors conduct reconnaissance and craft targeted phishing lures with greater precision, increasing the likelihood of a successful breach.

Additionally, adversaries are utilizing AI to enhance their malware capabilities, including the development of polymorphic malware that can dynamically modify its code to evade detection by traditional security measures. The emergence of agentic tools—autonomous malware operations orchestrated through multiple AI prompts and toolchains—enables threat actors to evaluate thousands of vulnerability hypotheses rapidly, creating a more robust arsenal for attacks.

Understanding these enhanced capabilities becomes essential when examining how Google detected the specific incident and what it reveals about future threat activity.

The Google Threat Intelligence Detection

Building on the foundational understanding of AI-assisted exploitation, the specific incident detected by Google provides concrete evidence of how these capabilities manifest in real-world attacks and what indicators security teams should monitor.

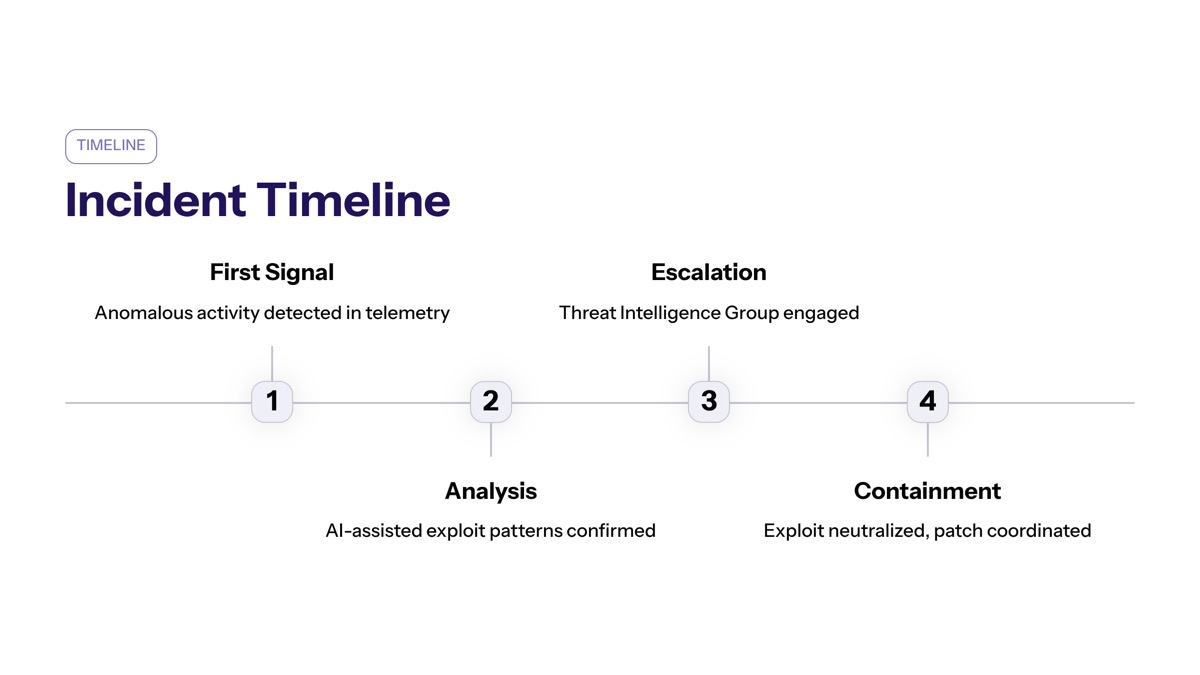

Timeline and Discovery

Google’s Threat Intelligence Group identified the AI-assisted zero-day exploit attempt between late 2025 and early 2026 as part of its ongoing AI Threat Tracker monitoring. The discovery came from proactive counter-discovery efforts rather than reactive incident response, which allowed Google to intervene before the criminal threat actor could execute the planned mass exploitation campaign.

When Google first observed the group of threat actors planning a significant operation that relied on a zero-day exploit, it noted indicators suggesting that AI assistance had been used throughout the development of the attack chain. After confirming this, Google responsibly disclosed the vulnerability to the affected vendor and coordinated a patch release before publicly announcing the discovery on May 11-12, 2026.

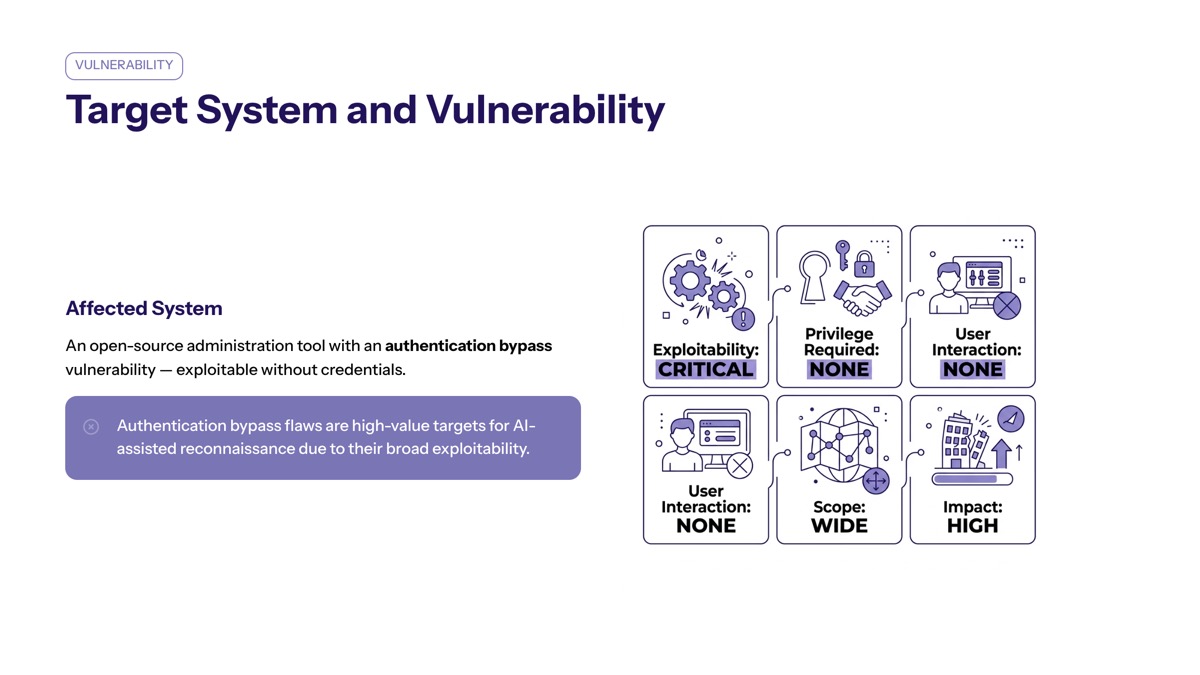

Target System and Vulnerability

The target was a popular open source, web-based system administration tool widely deployed across enterprise environments. The zero-day exploit at the center of the planned operation allowed the threat actors to bypass the tool’s two-factor authentication, and Google’s analysis of the exploit indicated that AI had been used to discover the underlying vulnerability.

Rather than exploiting a traditional memory corruption bug or buffer overflow, the vulnerability represented an authentication logic bypass—a subtle flaw in how the system handled exception paths within its two-factor authentication implementation. This type of logic-level vulnerability proves particularly amenable to AI discovery, as large language models excel at analyzing intended versus implemented behavior in complex codebases.
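The source does not disclose the actual flaw, so the following is only a generic sketch of what an exception-path bypass in a second-factor check can look like, and how a fail-closed version differs. Every name here (`TokenBackendError`, `check_code`, `verify_fail_open`, `verify_fail_closed`) is hypothetical and not taken from the affected tool.

```python
import hmac


class TokenBackendError(Exception):
    """Hypothetical error raised when a second-factor code cannot be checked."""


def check_code(expected: str, submitted: str) -> bool:
    """Compare codes in constant time; raise if the submission is malformed."""
    if not (submitted.isascii() and submitted.isdigit()):
        raise TokenBackendError("malformed second-factor code")
    return hmac.compare_digest(expected, submitted)


def verify_fail_open(expected: str, submitted: str) -> bool:
    """Flawed pattern: an error during verification is treated as success."""
    try:
        return check_code(expected, submitted)
    except TokenBackendError:
        return True  # BUG: the exception path skips the second factor


def verify_fail_closed(expected: str, submitted: str) -> bool:
    """Safer pattern: any error during verification denies access."""
    try:
        return check_code(expected, submitted)
    except TokenBackendError:
        return False
```

In this sketch a malformed code such as `"not-a-code"` is accepted by `verify_fail_open` and rejected by `verify_fail_closed`. The intended behavior (deny unless the code matches) and the implemented behavior diverge only on the error branch, which is why this class of flaw is easy to miss in review.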

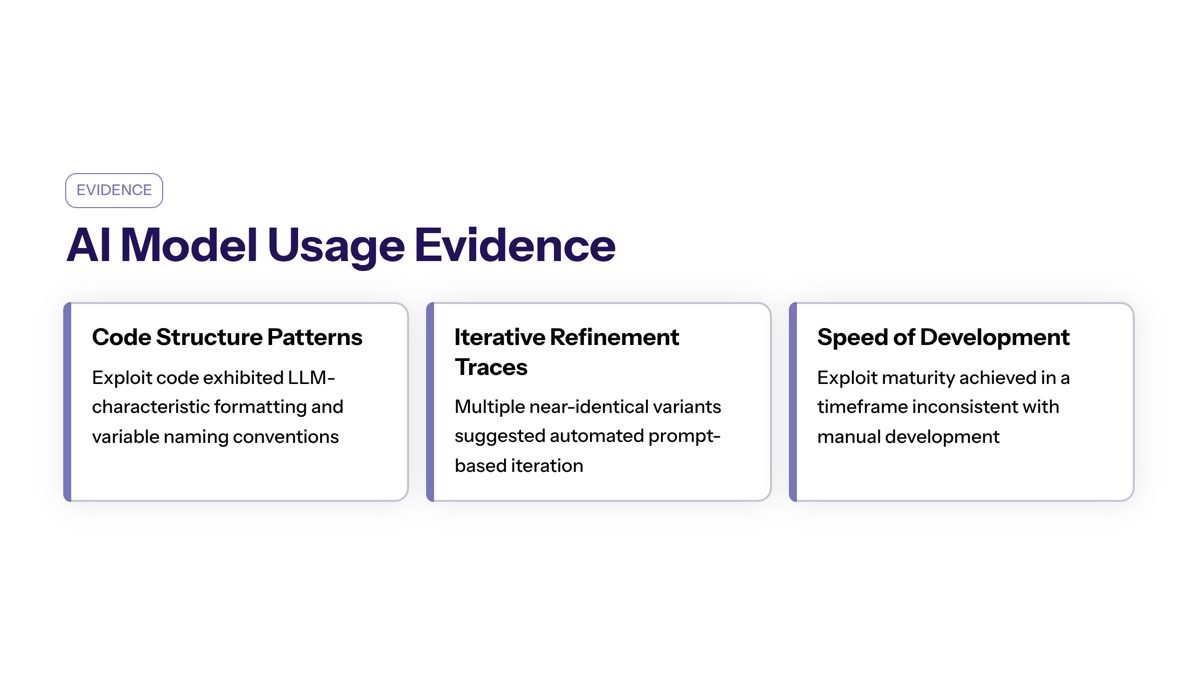

AI Model Usage Evidence

Google’s analysis of the exploit, which was built to bypass two-factor authentication on the web-based system administration tool, revealed multiple technical indicators of AI assistance:

The Python script contained a hallucinated CVSS score, a severity metric that appeared fabricated rather than derived from the actual characteristics of the vulnerability. This kind of confident but incorrect output is characteristic of frontier models operating without ground-truth validation.

Educational docstrings and structured help menus embedded in the exploit code were another telltale sign. Hand-crafted exploits typically prioritize functionality over documentation, while AI-generated code often includes explanatory comments consistent with patterns found in LLM training data.

The code also contained a "C" ANSI color class and formatting patterns that matched LLM output characteristics: overly consistent naming conventions, clean Pythonic structure, and organizational features that operational exploit code does not need. These artifacts served as detection signals that distinguished AI assistance from traditional development practices.
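As a rough illustration of how such artifacts could be checked mechanically, the sketch below extracts a few stylistic signals from a Python script using only the standard library. It is not Google’s detection method; the function name `llm_style_signals`, the regular expressions, and the choice of signals are assumptions made for this example, and each signal is also common in ordinary human-written code.

```python
import ast
import re

# Hypothetical patterns: a CVSS score mentioned in the script text, and
# ANSI escape sequences written as string literals (\033[ or \x1b[).
CVSS_PATTERN = re.compile(r"CVSS[^\n]{0,20}?\d{1,2}\.\d", re.IGNORECASE)
ANSI_PATTERN = re.compile(r"\\(?:033|x1b)\[", re.IGNORECASE)


def llm_style_signals(source: str) -> dict:
    """Return weak stylistic signals; none of them proves AI authorship."""
    tree = ast.parse(source)
    definitions = [
        node
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    documented = [node for node in definitions if ast.get_docstring(node)]
    return {
        # Share of functions and classes that carry a docstring.
        "docstring_ratio": len(documented) / len(definitions) if definitions else 0.0,
        "mentions_cvss_score": bool(CVSS_PATTERN.search(source)),
        "ansi_color_codes": bool(ANSI_PATTERN.search(source)),
        "argparse_help_menu": "argparse" in source and "help=" in source,
    }
```

Signals like these are better treated as inputs to analyst triage than as a verdict, since a tidy, well-documented script is not evidence of AI use on its own.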

Google stated with high confidence that its own Gemini models were not used to create the exploit. Separately, threat activity from groups linked to North Korea and China has shown significant interest in using AI for vulnerability research and exploit development at scale.

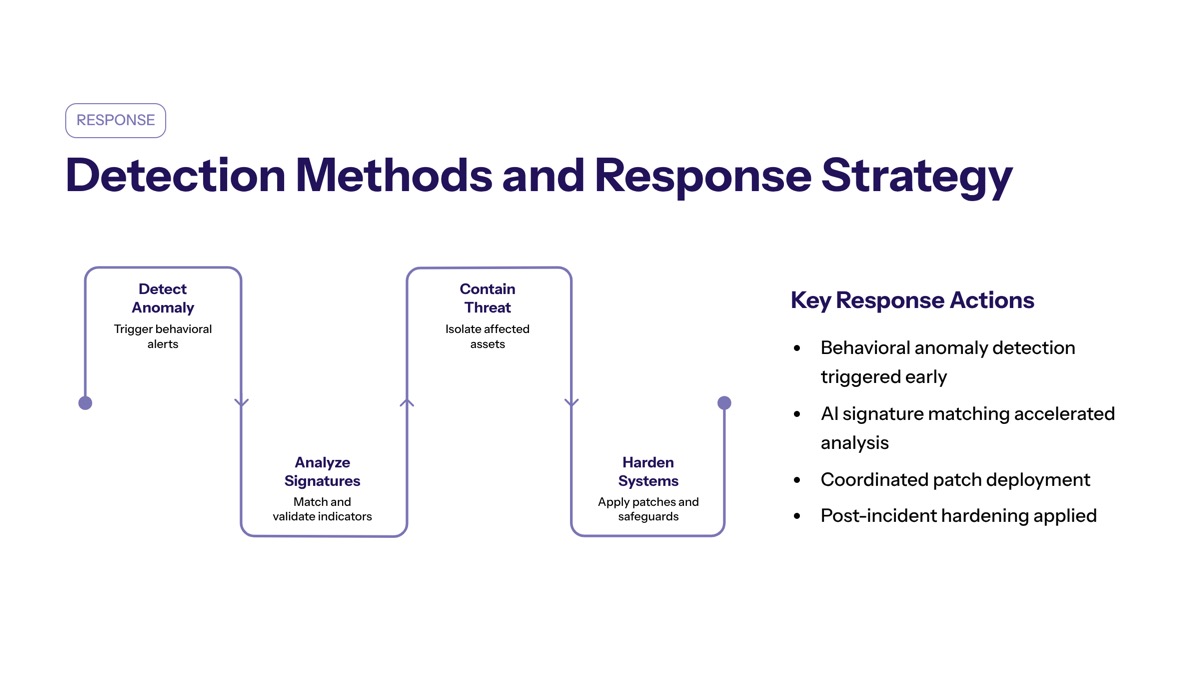

Detection Methods and Response Strategy

With the technical details of the incident established, understanding how Google achieved detection—and how enterprise security teams compare—provides crucial context for defensive planning against AI-enhanced threats.

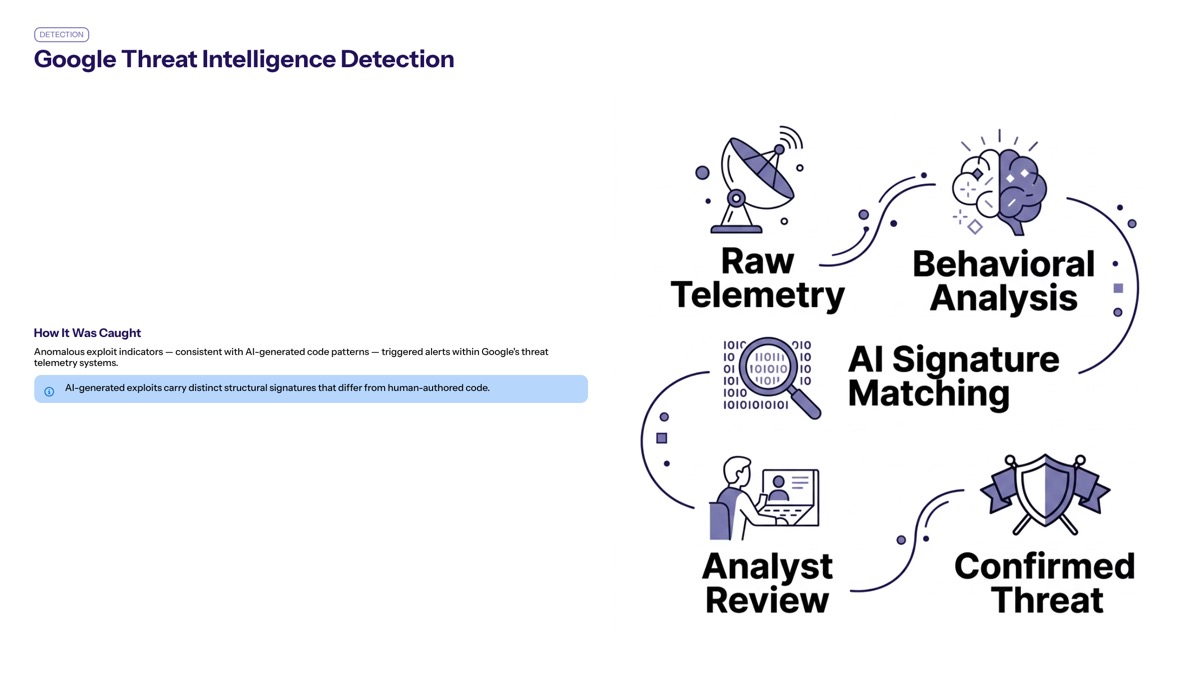

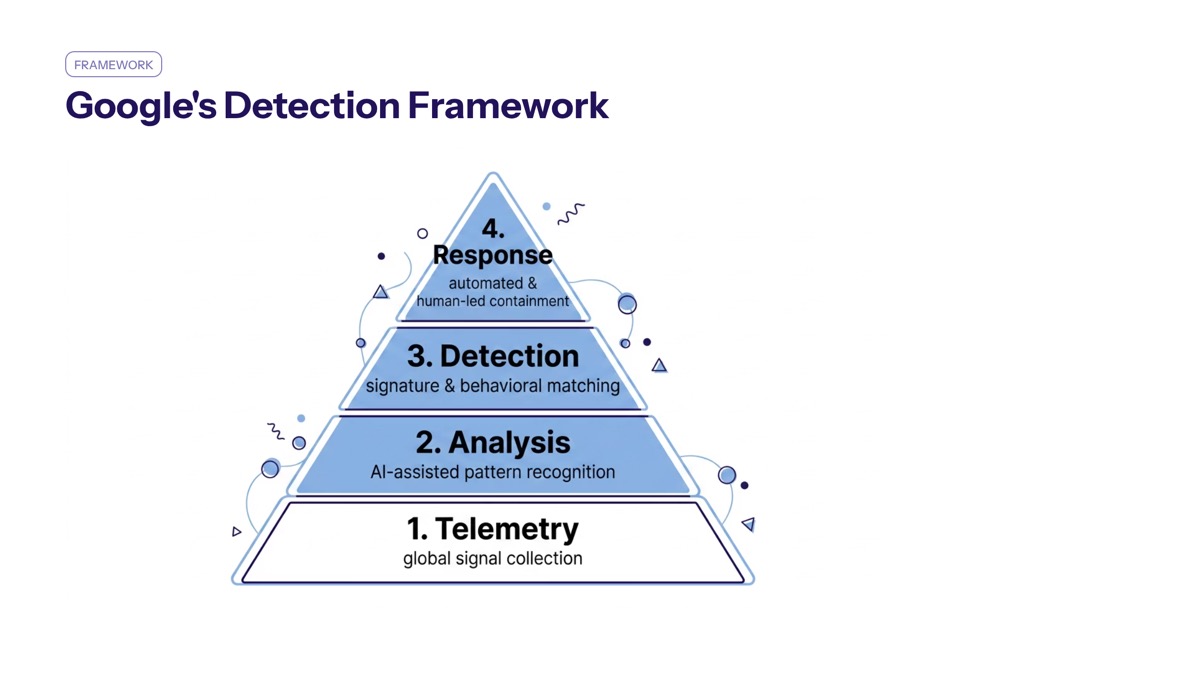

Google’s Detection Framework

Google has implemented proactive measures to counter AI-driven threats, including enhancing product safeguards and employing AI agents to identify and fix software vulnerabilities before they can be exploited. The detection of this AI-assisted zero-day relied on several complementary capabilities:

AI-enhanced behavioral monitoring: Tracking code metadata, structural anomalies, and formatting patterns that deviate from known human-authored exploit characteristics

Threat intelligence correlation: Maintaining visibility into open-source software ecosystems, exploit code marketplaces, and dark web activity to identify emerging attack patterns

Logic flaw analysis: Using AI model capabilities to reason about intended versus implemented authentication behavior, detecting bypass routes in complex codebases

Rapid vendor coordination: Establishing relationships with software vendors to enable swift responsible disclosure and patch development before public announcement

Proactive AI-driven security can still identify and mitigate sophisticated threats despite the advances in AI-powered hacking. AI plays a dual role, acting as both a tool for attackers to find flaws and a shield for defenders to detect and fix them.

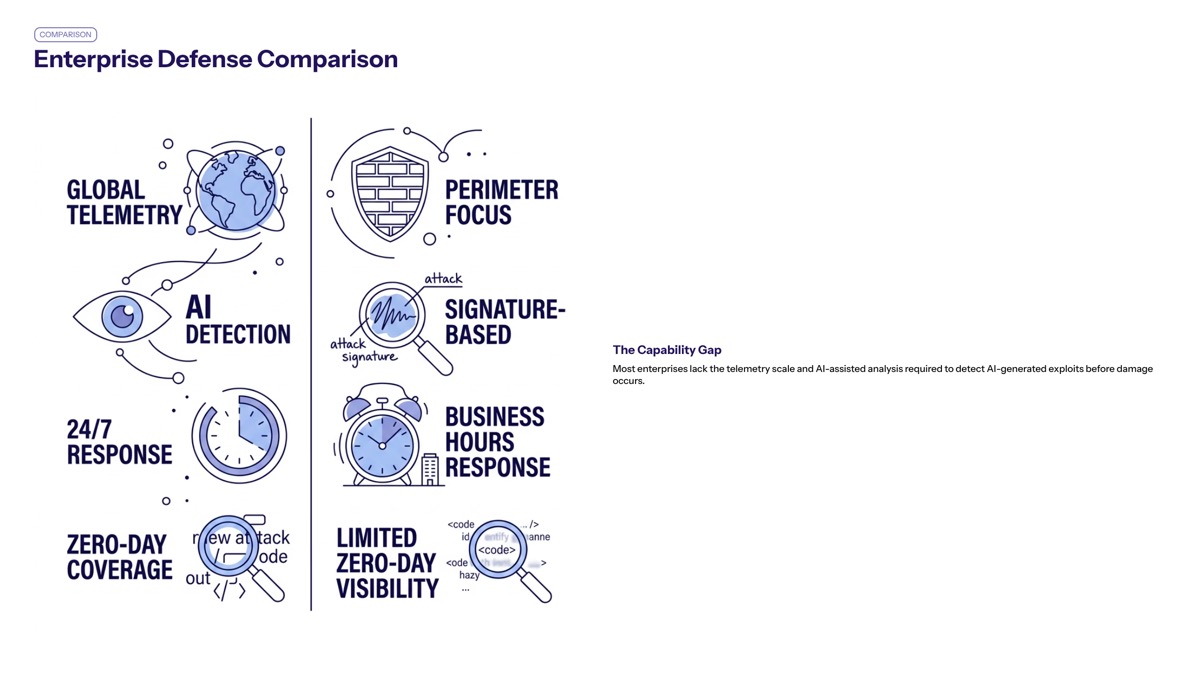

Enterprise Defense Comparison

Understanding detection capability gaps helps organizations prioritize security investments:

| Detection Capability | Enterprise Security Teams | Advanced Threat Intelligence (GTIG) |
|---|---|---|
| AI fingerprint detection | Limited or absent | Specialized models trained on AI-generated code patterns |
| Logic flaw analysis | Manual code review, periodic audits | Continuous AI-assisted behavioral analysis |
| Threat intelligence scope | Vendor feeds, public indicators | Dark web monitoring, exploit marketplace visibility |
| Disclosure coordination | Ad-hoc vendor relationships | Established responsible disclosure pipelines |
| Response velocity | Days to weeks for patch deployment | Hours to days for coordinated disclosure |

Most enterprise organizations lack the data volume, specialized AI detection tools, and threat intelligence infrastructure that enabled Google’s detection. This gap highlights the importance of external intelligence partnerships and investment in AI-aware security capabilities.

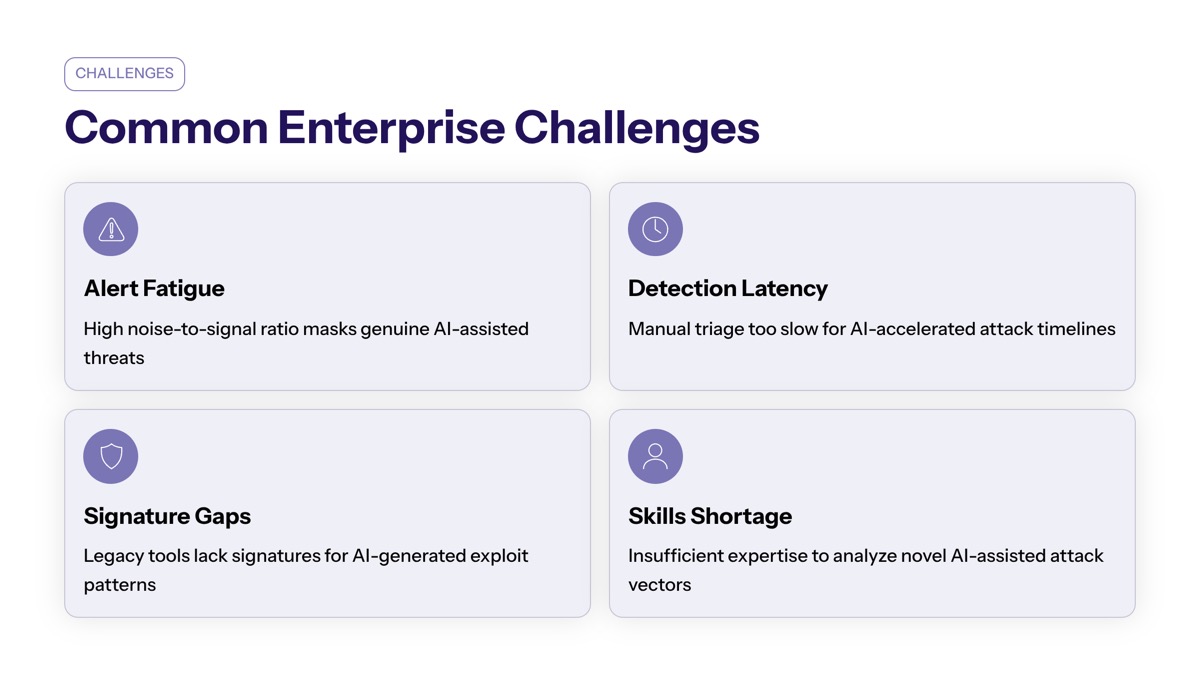

Common Challenges and Enterprise Solutions

Enterprise security teams face distinct challenges when defending against AI-accelerated threat activity. Each problem requires targeted solutions that account for the speed and sophistication of AI-assisted attacks.

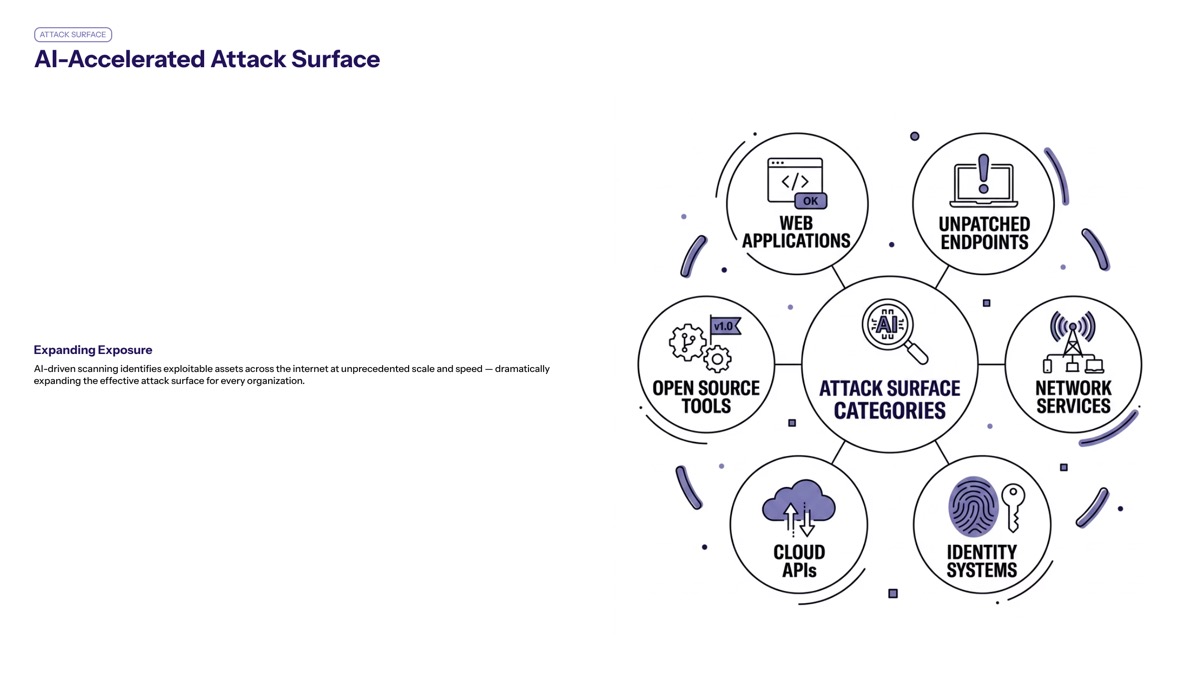

AI-Accelerated Attack Surface

Cybercriminals are using AI models to automate the development of sophisticated malware, improving its ability to evade detection and execute attacks autonomously. The window between a vulnerability existing and an exploit being deployed shrinks dramatically when threat actors can generate and validate proof-of-concept (PoC) exploits with AI assistance rather than through manual research.

Solution: Organizations must compress patch management timelines and implement compensating controls for critical systems. Deploy web application firewalls with behavioral detection, strengthen two-factor authentication implementations to eliminate exception paths, and architect systems for rapid rollback and recovery. Prioritize high-risk components—authentication systems, embedded devices, legacy software—for accelerated patching workflows.
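One way to make that prioritization concrete is a simple scoring pass over an asset inventory. The sketch below is illustrative only: the `Asset` fields, the category names, the weights, and the 30-day threshold are assumptions for this example, not values from Google’s report or any standard.

```python
from dataclasses import dataclass

# Assumed labels for the high-risk component types named in the text.
HIGH_RISK_CATEGORIES = {"authentication", "embedded", "legacy"}


@dataclass(frozen=True)
class Asset:
    name: str
    category: str
    internet_facing: bool
    days_since_patch: int


def patch_priority(asset: Asset) -> int:
    """Score an asset; higher scores should be patched sooner."""
    score = 0
    if asset.category in HIGH_RISK_CATEGORIES:
        score += 3  # assumed weight for high-risk component types
    if asset.internet_facing:
        score += 2  # assumed weight for external exposure
    if asset.days_since_patch > 30:
        score += 1  # assumed staleness threshold
    return score


def patch_queue(assets):
    """Return assets ordered from highest to lowest patch priority."""
    return sorted(assets, key=patch_priority, reverse=True)
```

A real program would draw these inputs from an asset inventory and vulnerability scanner and tune the weights to its own risk model.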

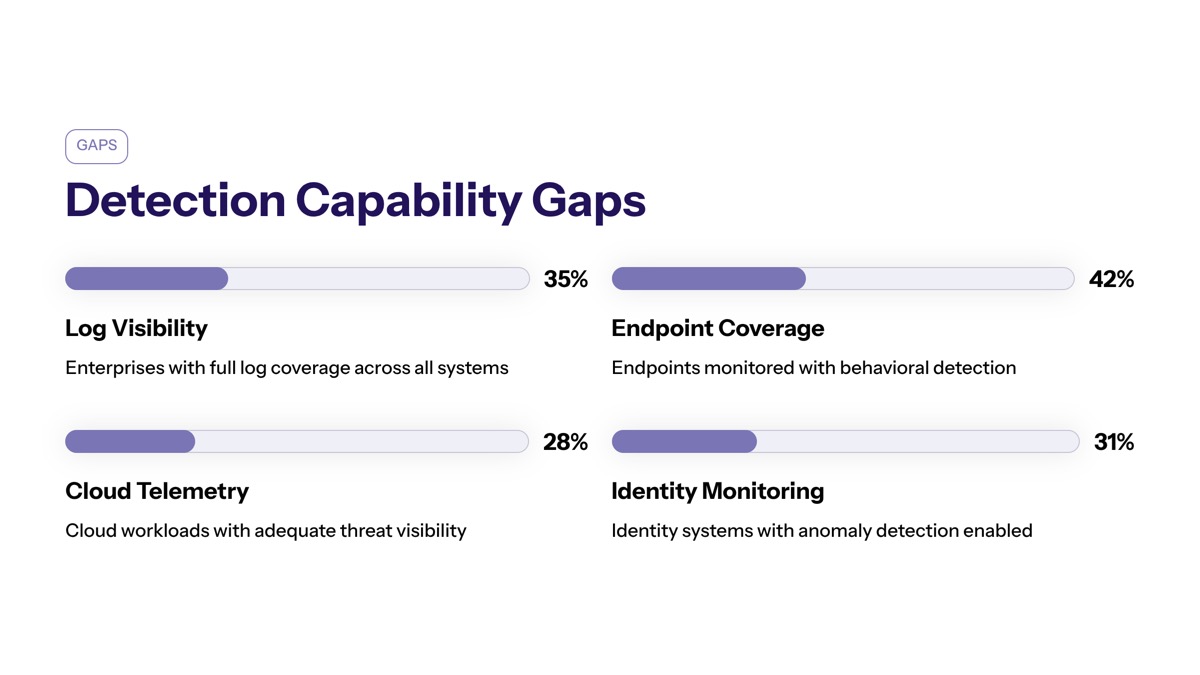

Detection Capability Gaps

Distinguishing AI-generated versus human-created exploit code presents technical challenges that most security tools cannot address. Traditional signature-based detection misses the subtle formatting and metadata anomalies that indicate AI assistance, while behavioral analysis requires training data that many organizations lack.

Solution: Integrate threat intelligence published by the Google Threat Intelligence Group and analysts such as John Hultquist to enrich internal detection capabilities. Implement anomaly detection focused on authentication system behavior rather than code signatures. Partner with managed detection providers that maintain AI-aware detection models and can identify patterns associated with large language models.
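A behavioral check of this kind can be quite small. The sketch below flags sessions that were issued without a recorded second-factor success, regardless of what code produced them. The `AuthEvent` structure and the step names are hypothetical; real authentication logs will need their own parsing and field mapping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthEvent:
    session_id: str
    step: str  # assumed values: "password_ok", "second_factor_ok", "session_issued"


def sessions_missing_second_factor(events):
    """Return IDs of sessions issued without a prior second-factor success."""
    verified = set()
    flagged = []
    for event in events:  # events are assumed to be in chronological order
        if event.step == "second_factor_ok":
            verified.add(event.session_id)
        elif event.step == "session_issued" and event.session_id not in verified:
            flagged.append(event.session_id)
    return flagged
```

Because the check looks at the outcome (a session exists without a verified second factor) rather than at the exploit itself, it does not depend on knowing the specific bypass in advance.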

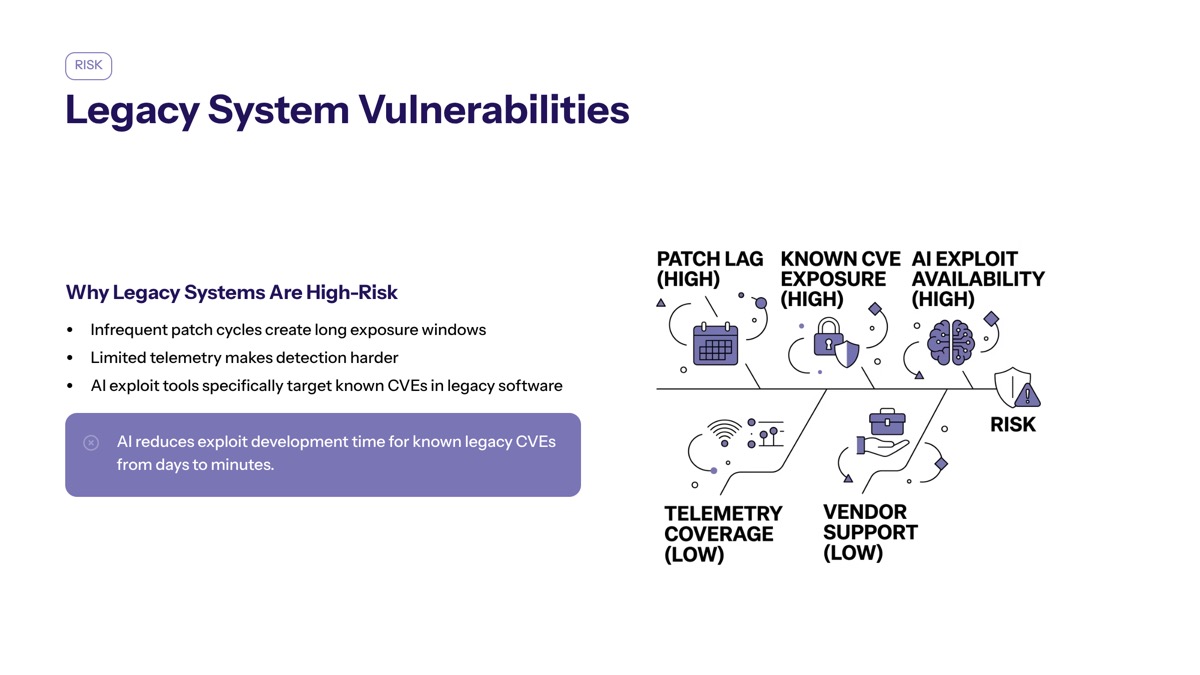

Legacy System Vulnerabilities

Older system administration tools and embedded devices often contain complex authorization logic, weak enforcement of security controls, and minimal telemetry. These characteristics make them prime targets for AI-assisted vulnerability discovery, which excels at identifying logic flaws in poorly documented codebases.

Solution: Prioritize secure modernization of legacy systems, particularly those handling authentication or access control. Conduct focused code audits on exception paths, input validation, and authorization logic branches. Where modernization proves impractical, implement strict network segmentation and enhanced monitoring to detect exploitation attempts. Consider AI-first architecture principles for new deployments, building systems with clear security policies and comprehensive logging from inception.
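For Python codebases, part of an exception-path audit can be automated with the standard `ast` module. The sketch below lists `except` blocks whose only statement is `pass` or a return of a truthy constant, two patterns that can turn an error into silent success. The function names are hypothetical, and the check is deliberately narrow: it is a starting point for manual review, not a complete audit.

```python
import ast


def _is_permissive(handler: ast.ExceptHandler) -> bool:
    """True if the handler body only passes or returns a truthy constant."""
    if len(handler.body) != 1:
        return False
    statement = handler.body[0]
    if isinstance(statement, ast.Pass):
        return True
    return (
        isinstance(statement, ast.Return)
        and isinstance(statement.value, ast.Constant)
        and bool(statement.value.value)
    )


def find_permissive_handlers(source: str):
    """Return line numbers of except blocks that swallow errors or return success."""
    tree = ast.parse(source)
    return sorted(
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.ExceptHandler) and _is_permissive(node)
    )
```

Each reported line still needs a human decision, since swallowing an exception is sometimes intentional outside authentication and authorization code.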

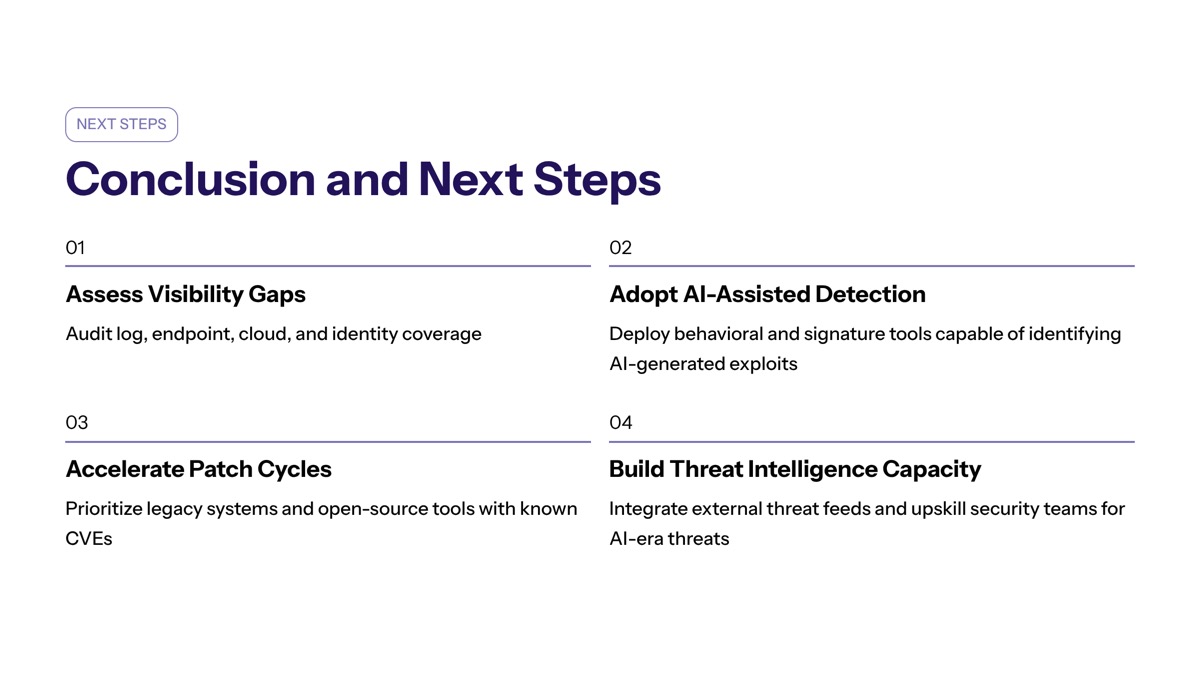

Conclusion and Next Steps

Google’s detection of the first AI-assisted zero-day exploit signals a fundamental shift in the threat landscape. Governments are pressing for stricter vetting, controlled releases, and mandatory safety guardrails on highly capable AI models to prevent misuse, but defensive capabilities must evolve independently of regulatory timelines.

The incident demonstrates that proactive threat intelligence, AI-enhanced detection, and rapid response coordination can neutralize sophisticated AI-developed attacks before mass exploitation. Enterprise security teams must assume that threat actors now possess AI-augmented exploit capabilities and adjust defensive investments accordingly.

Immediate actionable steps:

Conduct a security maturity assessment focused on detection capabilities for logic-level vulnerabilities and AI-generated code patterns

Integrate external threat intelligence feeds from sources monitoring AI-assisted threat activity

Audit authentication systems for exception path vulnerabilities, particularly two-factor authentication implementations

Establish or strengthen vendor relationships to enable rapid patch deployment for critical systems

Evaluate AI-aware security tools that can identify behavioral anomalies indicative of novel exploitation techniques

Related topics worth exploring include AI-first security architecture design, secure software development practices that minimize logic flaw introduction, and supply chain attacks affecting TP-Link firmware and other embedded devices where AI-assisted vulnerability discovery poses elevated risk.

Additional Resources

Google Threat Intelligence Group reporting guidelines and published threat indicators for AI-assisted exploitation

Enterprise security frameworks for behavioral analysis and anomaly detection integration

Secure development practices focused on authentication logic validation and exception path elimination

Senior security auditor guidelines for evaluating AI-resistance in system administration tools

Regulatory developments from the Trump administration and international bodies regarding AI model safety requirements