Lawmakers Press White House to Act on AI Cyber Threats

US lawmakers press White House officials to confront rapidly escalating AI cybersecurity threats, with 32 bipartisan House members sending an urgent letter to National Cyber Director Sean Cairncross demanding immediate federal action. This congressional pressure reflects growing alarm over frontier large language models capable of identifying thousands of unknown software vulnerabilities and generating functioning exploits with minimal human oversight.

This article covers current congressional AI cybersecurity initiatives, proposed legislation, federal agency coordination requirements, and the White House response to these mounting demands. Enterprise security leaders, government contractors, and organizations needing to understand evolving federal AI standards and security mandates will find actionable guidance for navigating this shifting regulatory landscape. The topic carries significant weight as artificial intelligence systems may soon surpass humans in identifying software vulnerabilities, fundamentally changing the cyber threat environment.

Congressional leaders are pressing the White House to establish a comprehensive national policy framework for artificial intelligence cybersecurity through executive orders and increased Department of Homeland Security coordination with state and local governments. The Trump administration is preparing to direct U.S. agencies to collaborate with AI companies to bolster defenses against AI-enabled cyber attacks.

Key outcomes from this analysis include:

- Understanding current legislative pressure and specific congressional demands as lawmakers press White House officials
- Federal response mechanisms and executive order development timelines shaping AI standards
- Enterprise compliance implications for critical infrastructure operators across the country
- Preparatory security measures organizations should implement now amid evolving state laws
- Emerging public-private partnership models involving AI companies and federal agencies

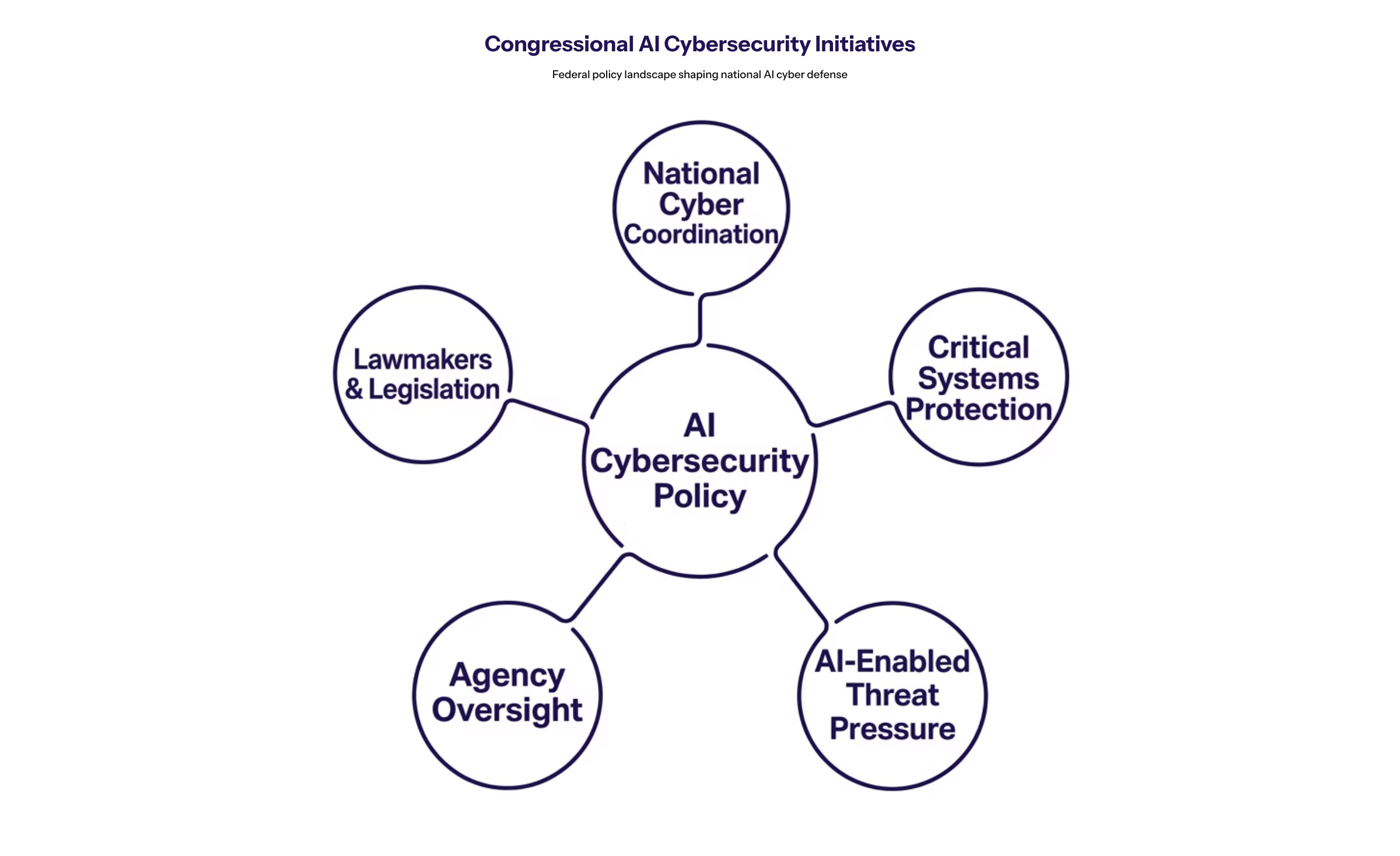

Understanding Congressional AI Cybersecurity Initiatives

Congressional AI cybersecurity initiatives represent a coordinated legislative push to establish binding federal requirements for protecting American systems against AI-enabled attacks. These efforts target both offensive AI capabilities—where artificial intelligence acts as a force multiplier enabling low-skilled adversaries to launch automated, high-volume, and sophisticated cyberattacks—and defensive gaps in current federal frameworks.

Senate Leadership Demands

Senate Minority Leader Chuck Schumer emphasized the need for the Department of Homeland Security to enhance coordination with state and local governments to address AI-driven cyber threats, highlighting that AI systems may soon surpass humans in identifying software vulnerabilities. Schumer called for a comprehensive plan from the Department of Homeland Security to coordinate responses to AI-enabled hacking, stressing the urgency of preparing critical infrastructure against potential cyber attacks.

Schumer’s AI roadmap positions these cyber threats as central national security concerns, requiring enhanced roles for the Defense Department, Department of Energy, and DHS in defending against AI-related vulnerabilities. The Senate leadership has set a deadline, calling for a plan to coordinate the nation’s response to AI-enabled hacking by July 1 of this year.

Bipartisan Congressional Pressure

Cross-party support for federal AI cybersecurity action has materialized in multiple legislative proposals. The letter signed by 32 Representatives signals growing consensus that voluntary controls may prove insufficient against frontier large language models like Anthropic’s Mythos. Lawmakers are pushing for stricter oversight on powerful AI models that pose cybersecurity risks, including pre-release security assessments.

Congress approved the AI Training for National Security Act, which requires the Department of Defense to train all personnel on AI-specific cyber threats. Additional legislation includes the Secure A.I. Act directing NIST and CISA to build an AI incident database covering safety breaches, critical infrastructure risks, and supply chain threats. The Hassan-Portman bill advancing through the Senate Homeland Security Committee would make permanent the cyber hunt and incident response teams within DHS.

Federal Agency Coordination Requirements

Proposed DHS roles encompass vulnerability discovery, risk assessments, and rapid patching protocols across federal networks and critical infrastructure. The Government Accountability Office noted that federal laws lack clear requirements for secure AI acquisition and usage, creating gaps that lawmakers now seek to address through formal mandates.

Federal policy initiatives mandate risk-based cybersecurity requirements for AI databases to prevent data tampering. Executive amendments require that by November 1, 2025, agencies led by DHS, the Defense Department, and the intelligence community share indicators of compromise for AI systems and integrate AI vulnerabilities into incident tracking. These coordination requirements set the foundation for the White House’s broader implementation framework.

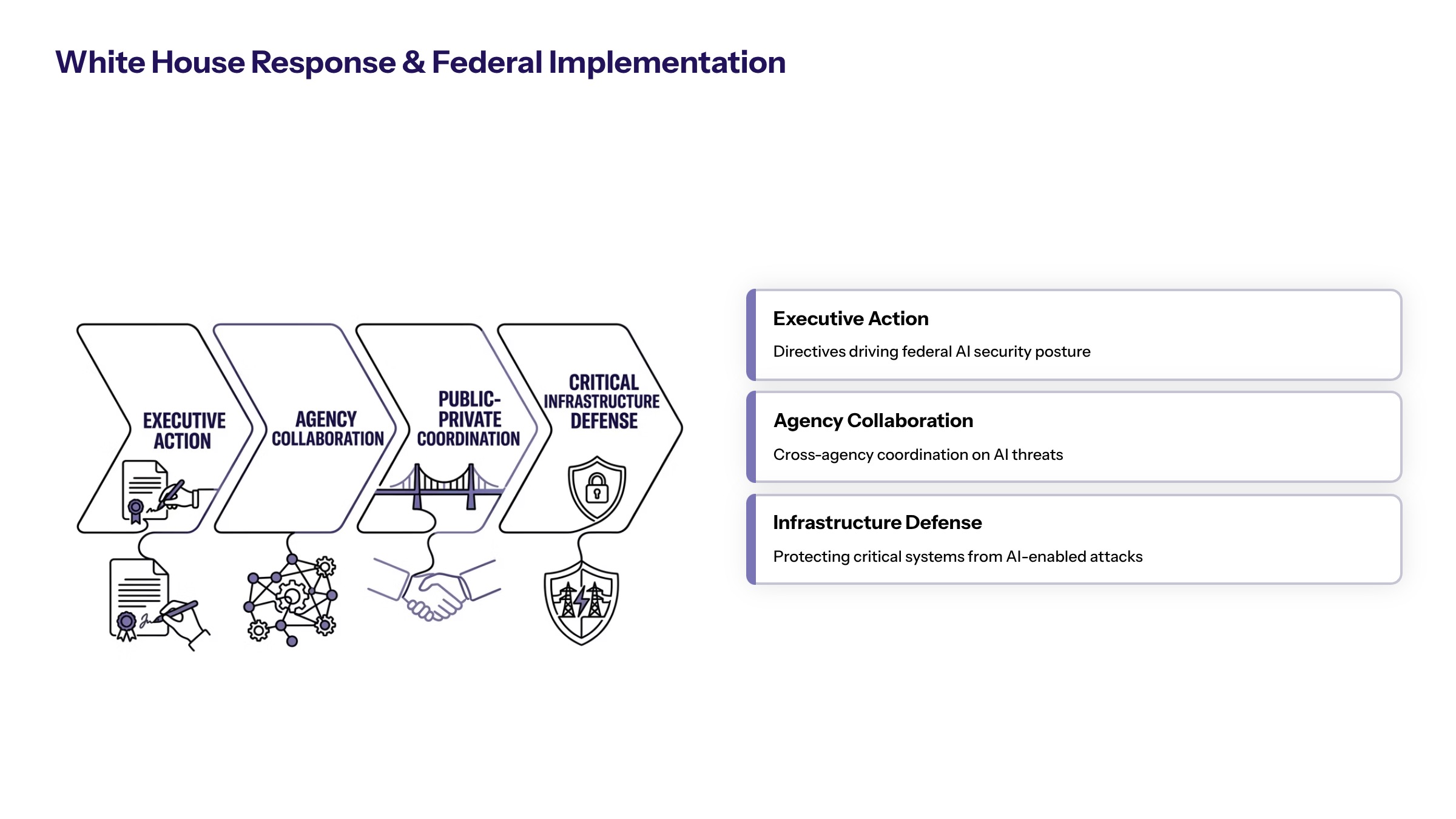

White House Response and Federal Implementation

The administration faces mounting pressure as lawmakers press White House officials to translate congressional demands into concrete executive action while balancing innovation concerns with security imperatives.

Executive Order Development

The White House is preparing an AI executive order focused on enhancing cyber defenses for government networks; the order will not require mandatory model reviews. Executive Order 14365, signed in December 2025 and titled “Ensuring a National Policy Framework for Artificial Intelligence,” establishes that federal law should preempt state laws, creating consistent national AI standards.

Administration officials emphasize federal-private collaboration and voluntary compliance over direct mandates, with safety assurances delivered through guidelines rather than binding requirements. The National Economic Council and Office of Science and Technology Policy are coordinating this framework development, with ongoing discussions involving industry heads, Treasury Secretary Scott Bessent, and White House Chief of Staff Susie Wiles.

Agency Collaboration Mandates

The planned directive for U.S. agencies to collaborate with AI companies on defenses against AI-enabled cyber attacks signals a shift toward public-private partnerships in cybersecurity. National Cyber Director Sean Cairncross has indicated that additional digital executive orders are forthcoming, with security built into government policy architecture.

Cairncross has called for clean, long-term reauthorization of the Cybersecurity Information Sharing Act of 2015, which provides legal liability protection for companies sharing threat intelligence with federal and state authorities. Implementation timelines point to November 2025 deadlines for key agency compliance requirements.

Critical Infrastructure Protection

Lawmakers are concerned that hackers could use AI to disrupt critical infrastructure, including hospitals, power grids, and water systems. There is significant anxiety regarding foreign powers utilizing artificial intelligence to advance cyber warfare capabilities against American critical infrastructure.

The federal government has recently pulled funding for the Multi-State Information Sharing and Analysis Center, which was crucial for sharing cyber threat intelligence, prompting concerns about the preparedness of state and local governments against AI-related cyber threats. This funding gap has intensified calls for alternative coordination mechanisms between federal agencies and critical infrastructure operators.

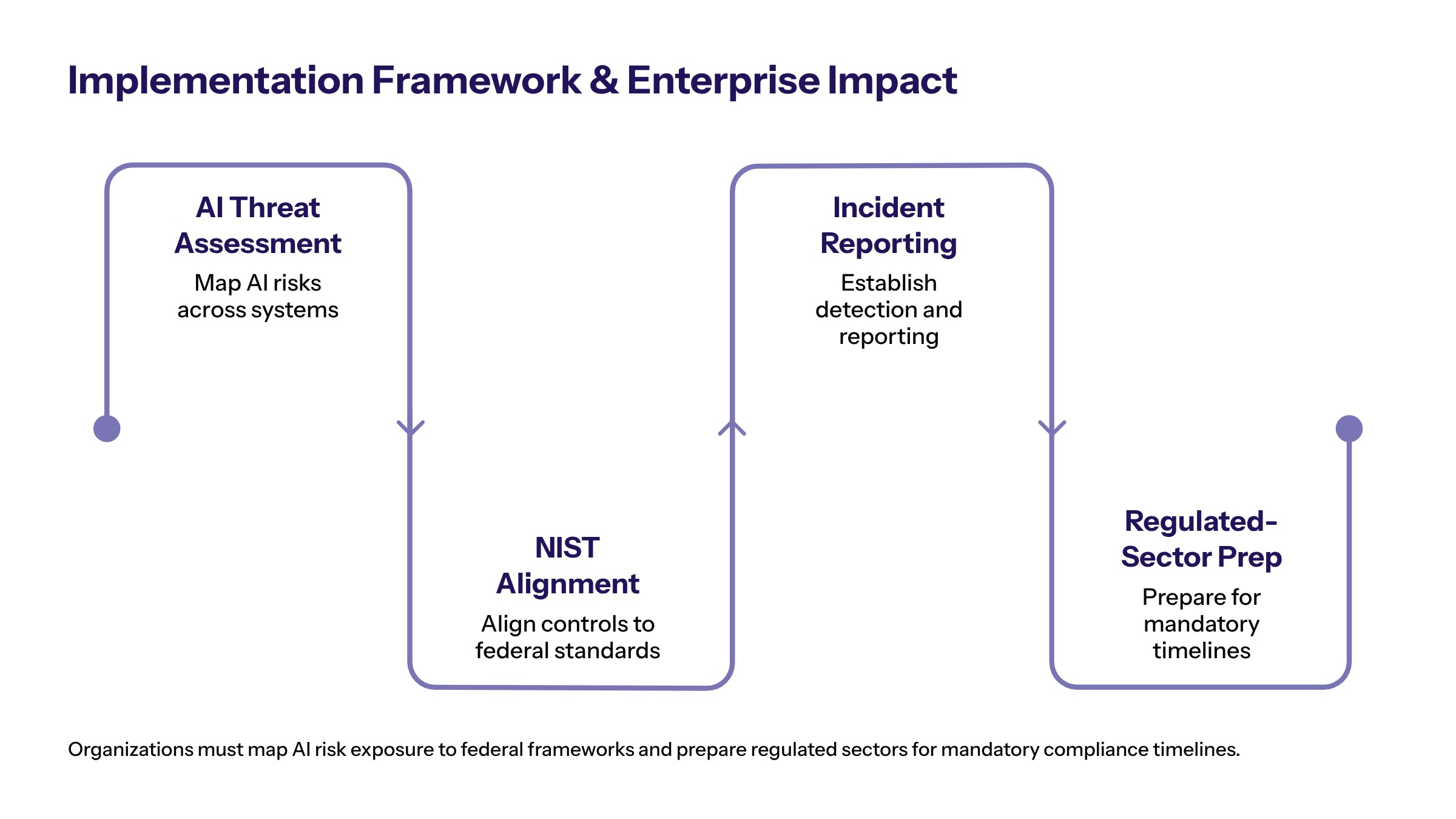

Implementation Framework and Enterprise Impact

Federal action on AI cyber threats will create new compliance obligations for organizations operating in regulated sectors, requiring proactive preparation as requirements crystallize.

Federal Compliance Requirements

Organizations should anticipate notification obligations accompanying deployment of frontier AI models, with requirements to alert federal authorities when new models produce safety or security risks. Companies may need to include AI system vulnerabilities in formal incident and supply chain tracking mechanisms.

Prepare for federal mandates through these implementation steps:

1. Conduct AI-enabled threat assessment across enterprise systems, mapping all AI tools and models currently deployed
2. Implement vulnerability discovery protocols aligned with emerging federal frameworks and NIST guidelines
3. Establish rapid response capabilities for AI-driven attacks, including incident reporting procedures
4. Develop staff training programs for AI cybersecurity threats, following Defense Department training requirements as a model
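The steps above can be tracked with a simple inventory of deployed AI tools. The following is a minimal sketch, not a prescribed federal format: the `AIAsset` fields, the gap labels, and the sample asset names are all hypothetical and chosen only to mirror the steps listed here.

```python
from dataclasses import dataclass


@dataclass
class AIAsset:
    """One deployed AI tool or model (all fields are illustrative assumptions)."""
    name: str
    owner: str                  # team accountable for the asset
    external_dependency: bool   # relies on a third-party model or API
    has_incident_runbook: bool  # an incident reporting procedure exists
    staff_trained: bool         # operators completed AI threat training


def readiness_gaps(asset: AIAsset) -> list[str]:
    """Return the preparation steps this asset has not yet satisfied."""
    gaps = []
    if asset.external_dependency:
        gaps.append("review third-party dependency")
    if not asset.has_incident_runbook:
        gaps.append("write incident reporting procedure")
    if not asset.staff_trained:
        gaps.append("schedule staff training")
    return gaps


def gap_report(assets: list[AIAsset]) -> dict[str, list[str]]:
    """Map each asset name to its open gaps, omitting assets with none."""
    report = {}
    for asset in assets:
        gaps = readiness_gaps(asset)
        if gaps:
            report[asset.name] = gaps
    return report


# Hypothetical inventory used only to exercise the functions above.
inventory = [
    AIAsset("support-chatbot", "customer-ops", True, False, True),
    AIAsset("fraud-scoring-model", "risk", False, True, True),
]
print(gap_report(inventory))
```

A report like this gives security teams a starting list of open items per asset; real programs would likely draw the fields from whatever federal or NIST guidance ultimately applies.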

Public-Private Partnership Models

Different approaches to federal-private collaboration offer distinct trade-offs for enterprise decision-makers:

| Approach | Federal Role | Private Sector Role |
|---|---|---|
| Information Sharing | Threat intelligence distribution through DHS and CISA | Voluntary participation and reporting of AI-related incidents |
| Regulatory Compliance | Mandatory security standards for critical sectors | Required implementation, auditing, and supply chain vetting |
| Collaborative Defense | Joint threat response coordination and model access | Active partnership in threat mitigation and vulnerability testing |

Organizations in healthcare, energy, and financial sectors should anticipate mandatory AI security requirements. Early adoption of voluntary frameworks like NIST’s AI Risk Management Framework positions companies advantageously ahead of potential mandatory compliance. The trade-off between innovation speed and security oversight requires careful calibration based on sector-specific risk profiles.

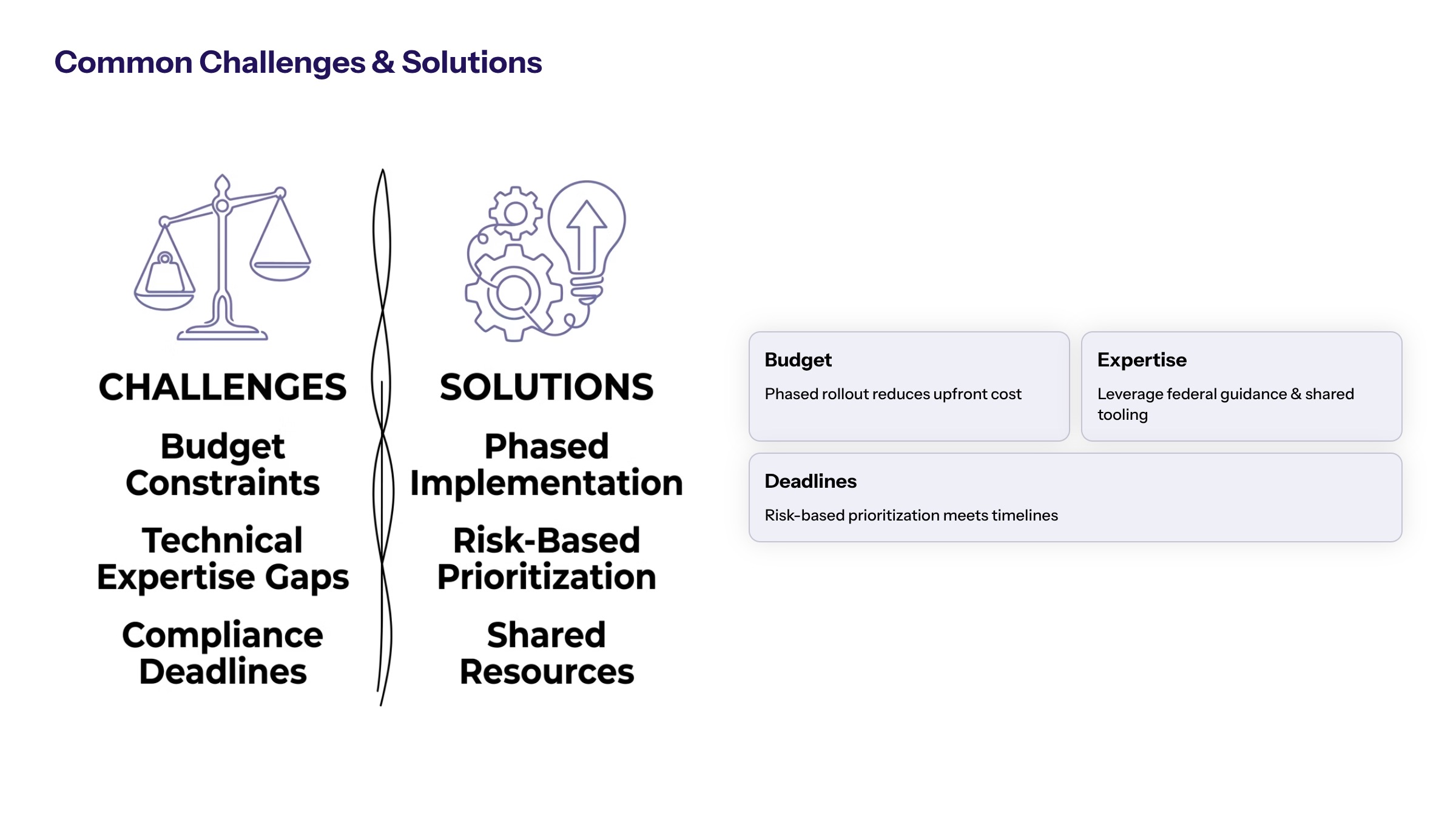

Common Challenges and Solutions

Organizations preparing for federal AI cybersecurity requirements face practical implementation barriers that require strategic planning.

Resource Allocation Constraints

Establish a phased implementation approach focusing on critical systems first, with clear budget planning for AI security tools and training. Many state and local governments and mid-market enterprises lack budgets for rigorous threat hunting and adversarial testing. Start by inventorying AI assets and external dependencies, prioritizing healthcare, utilities, and financial systems before extending to less critical domains.
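As a rough illustration of the phased approach, the sketch below sorts systems into two rollout phases based on the sectors this section names as priorities. The two-phase split, the `PRIORITY_SECTORS` set, and the sample system names are assumptions made for the example; an actual plan would reflect an organization's own risk assessment.

```python
from collections import defaultdict

# Sectors the text names as first priority; treating everything else as
# phase 2 is an assumption made for this sketch.
PRIORITY_SECTORS = {"healthcare", "utilities", "financial"}


def assign_phase(sector: str) -> int:
    """Return 1 for priority sectors and 2 for all other sectors."""
    return 1 if sector.lower() in PRIORITY_SECTORS else 2


def plan_rollout(systems: dict[str, str]) -> dict[int, list[str]]:
    """Group system names by rollout phase, given a name -> sector mapping."""
    phases = defaultdict(list)
    for name, sector in systems.items():
        phases[assign_phase(sector)].append(name)
    # Sort phases and names so the plan is stable across runs.
    return {phase: sorted(names) for phase, names in sorted(phases.items())}


# Hypothetical systems used only to demonstrate the grouping.
example_systems = {
    "patient-portal": "Healthcare",
    "marketing-site": "retail",
    "billing": "financial",
}
print(plan_rollout(example_systems))
```

Keeping the priority list in one place makes it easy to adjust as budgets or federal guidance change.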

Technical Expertise Gaps

Partner with specialized consulting firms for AI-first security architecture and staff augmentation during transition periods. Frontier AI models expose technical challenges—adversarial model testing, exploit development, zero-day vulnerabilities—where few organizations currently maintain in-house expertise. Consider creating dedicated red-teaming capabilities for AI systems and investing in staff training on data poisoning and model misuse risks.

Compliance Timeline Pressure

Begin with risk assessments and vulnerability discovery while federal requirements are being finalized to ensure readiness. The executive amendments set tight deadlines, with many agency provisions due by November 2025. Early moves can provide competitive advantage and reduce disruption once enforcement begins, positioning organizations ahead of the regulatory curve.

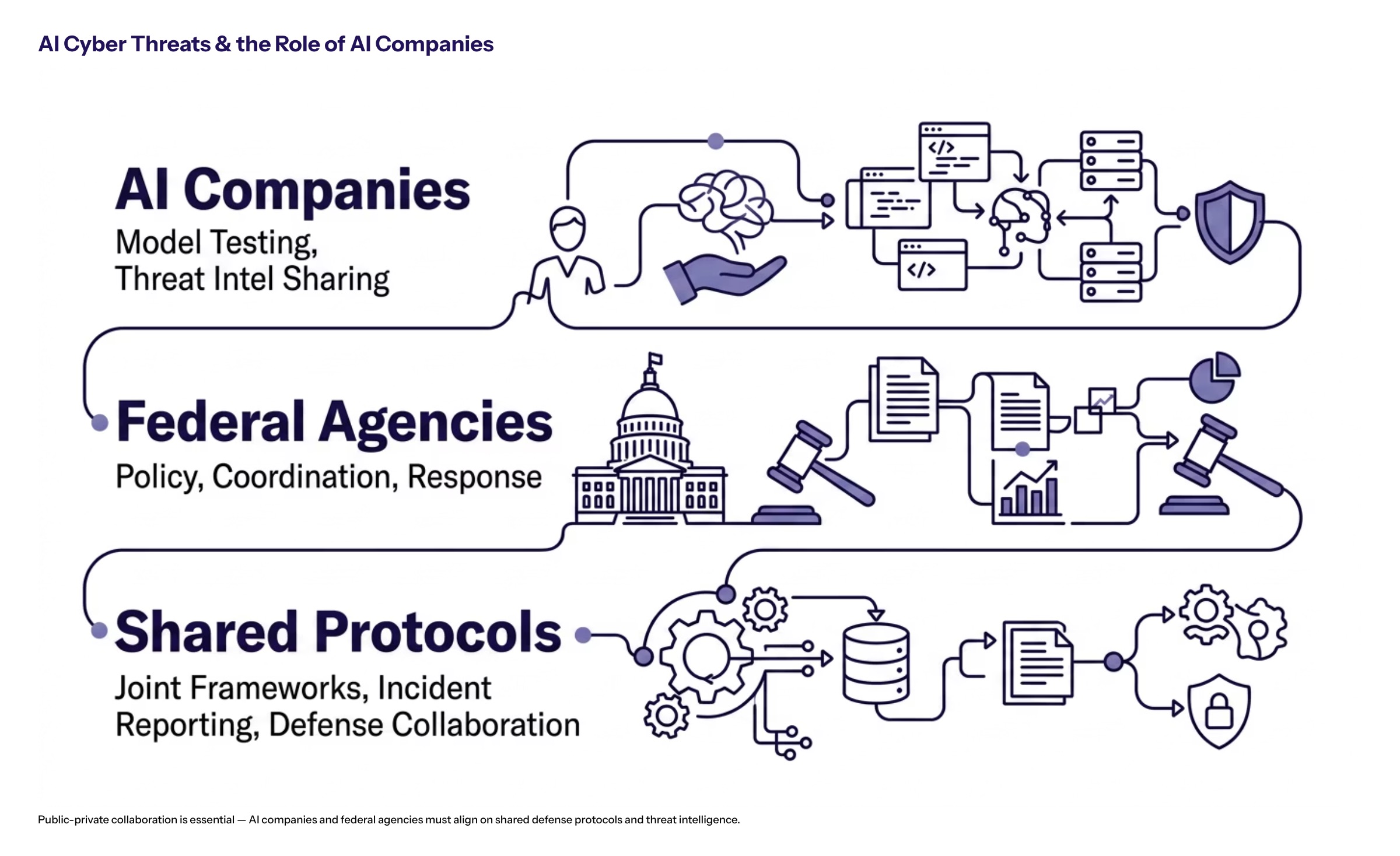

AI Cyber Threats and the Role of AI Companies

As US lawmakers push White House officials to act on AI cyber threats, the evolving landscape of artificial intelligence presents both unprecedented risks and opportunities. AI cyber threats involve malicious actors leveraging advanced AI capabilities to exploit software vulnerabilities, automate sophisticated cyberattacks, and disrupt critical infrastructure. These threats pose significant challenges to the security of America’s digital ecosystem, affecting every person and organization connected to it.

Collaboration with AI Companies

Addressing AI cyber threats requires close collaboration between federal agencies and AI companies, a process gaining momentum under the guidance of key figures such as White House Chief of Staff Susie Wiles. AI companies play a crucial role in developing defensive technologies, sharing threat intelligence, and implementing robust security measures aligned with emerging federal and state AI laws. This partnership aims to create a resilient framework that protects the American people and critical infrastructure from AI-enabled cyberattacks.

Over the past week, discussions have intensified around establishing clear protocols for AI companies to evaluate and mitigate flaws in AI models before deployment. This proactive approach is intended to keep AI systems operating securely in a rapidly changing cyber threat environment. By fostering transparent communication and coordinated action, America can better safeguard its networks and maintain trust in AI technologies essential to national security and economic stability.

Conclusion and Next Steps

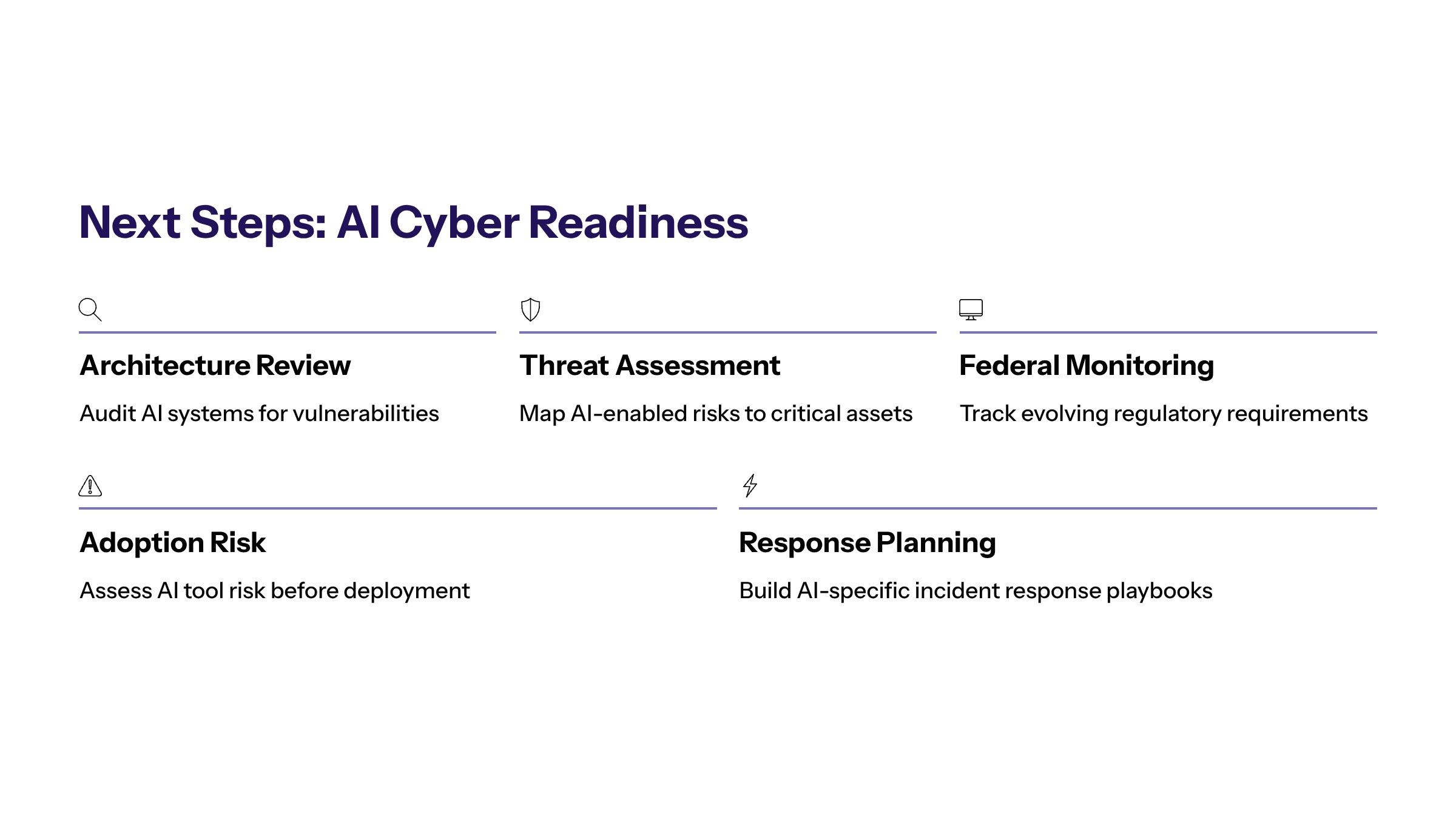

Congressional pressure on the White House signals imminent federal mandates requiring proactive enterprise preparation. The bipartisan nature of current legislative efforts—combined with executive order development and agency coordination requirements—indicates that AI cybersecurity compliance will become a binding obligation rather than voluntary best practice.

Take these immediate actions:

- Conduct comprehensive AI threat assessments across all enterprise systems
- Review current security architectures against emerging federal framework requirements
- Establish federal coordination protocols and monitoring of regulatory developments
- Evaluate AI adoption practices for potential vulnerability exposure, considering impacts on communities and children who rely on secure digital infrastructure

Related topics warranting further exploration include AI-first security architecture design for enterprise environments, federal compliance frameworks for critical infrastructure operators, and automated threat response implementation leveraging AI tools for defense.

Additional Resources

Enterprise security teams should reference NIST’s AI Risk Management Framework as the current voluntary standard likely to inform mandatory requirements. CISA’s critical infrastructure protection guidelines and DHS threat intelligence sharing programs offer immediate value for organizations seeking to align with federal expectations ahead of formal compliance deadlines.