Nebius Revenue Surges as AI Cloud Infrastructure Demand Explodes

Nebius Group delivered a stunning 684% year-over-year revenue surge in the first quarter of 2026, reaching $399 million compared to $50.9 million a year earlier. This explosive growth reflects the unprecedented demand for artificial intelligence infrastructure as enterprises transition from experimental AI projects to production-scale deployments across their operations.

This analysis covers Nebius’s financial performance breakdown, the strategic partnerships driving growth, and practical implications for technology executives, investors, and enterprise decision-makers evaluating AI infrastructure partnerships. We examine the market dynamics creating these conditions and assess both opportunities and risks in the AI cloud infrastructure sector.

Direct answer: Nebius achieved $399 million in first-quarter revenue (up 684% year-over-year), driven by multi-billion-dollar partnerships with Meta and Microsoft, enterprise AI workload migration, and capacity constraints tight enough that multiple customers compete for every GPU the company brings online.

Key outcomes readers will gain from this analysis:

Understanding of the specific revenue drivers behind Nebius’s growth trajectory

Assessment of strategic partnership structures with Meta, Microsoft, and Nvidia

Framework for evaluating enterprise AI infrastructure investment decisions

Risk analysis including capital spending requirements and margin pressure concerns

Timeline considerations for enterprise AI deployment planning

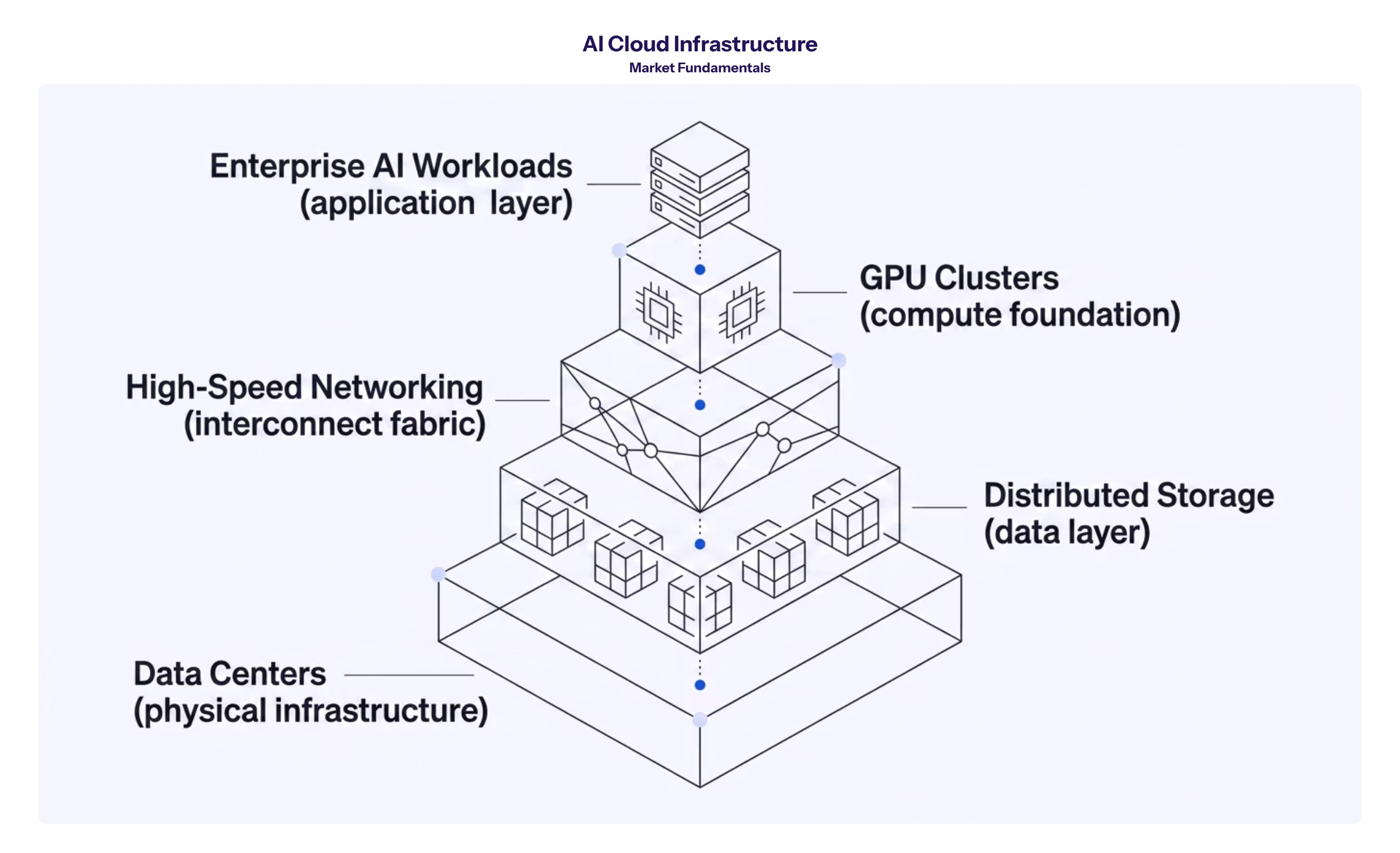

Understanding AI Cloud Infrastructure Market Fundamentals

AI cloud infrastructure encompasses the specialized computing capacity, data center facilities, networking, and software tools required to train and deploy artificial intelligence models at scale. Unlike traditional cloud computing services, this infrastructure is purpose-built for the intensive parallel processing demands of AI workloads.

For enterprise technology leaders and investors, understanding this market is essential because it represents both a significant capital allocation decision and a competitive differentiator. Companies that secure reliable access to AI computing capacity can accelerate their AI initiatives, while those facing infrastructure constraints may fall behind in deploying production AI systems.

GPU-Powered Compute Infrastructure

Graphics processing units form the core of modern AI computing infrastructure. Unlike general-purpose CPUs, GPUs can execute thousands of parallel operations simultaneously, making them ideal for the matrix calculations central to neural network training and inference.

Enterprise AI workloads—from training large language models to running real-time inference at scale—require massive clusters of these specialized chips. Nebius describes its core business as a “neocloud” optimized for AI workloads, offering high-performance GPU compute, storage, networking, and software tools through offerings including Nebius AI Cloud and Token Factory for inference scenarios.

Hyperscale Data Center Operations

Hyperscale data centers operate at gigawatt-scale power consumption, requiring substantial land, power contracts, and specialized facility design. These operations enable the massive computing capacity necessary for training foundation models and running enterprise AI applications.

For enterprises evaluating AI infrastructure partnerships, data center expansion decisions directly impact latency, regulatory compliance, power costs, and availability. Nebius has secured more than 3.5 gigawatts of contracted power as of Q1 2026, with connected capacity (facilities actively operational) targeted to reach 800 MW to 1 GW by year-end 2026.

This infrastructure foundation explains why companies like Nebius can command premium pricing and attract multi-billion dollar partnerships—and why understanding these dynamics matters for evaluating Nebius stock and the broader AI infrastructure market.

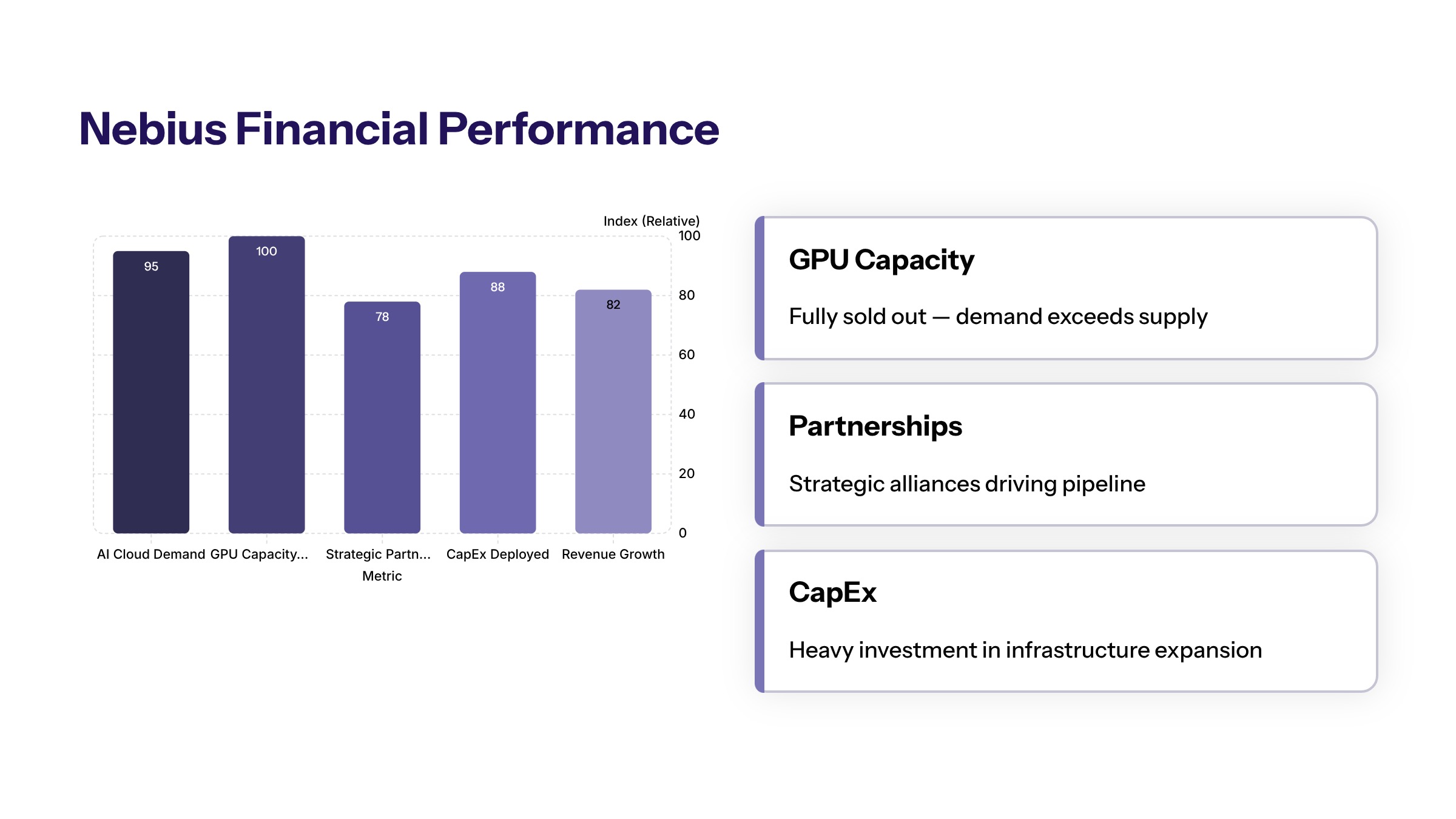

Nebius Financial Performance Breakdown

The first quarter 2026 results demonstrate how infrastructure scarcity and enterprise demand translate into financial outcomes. These metrics reveal both the growth opportunity and the capital intensity required to capture it.

Q1 2026 Revenue Acceleration

Nebius reported quarterly revenue of $399 million in Q1 2026, representing a 684% increase compared to the same period last year when revenue reached $50.9 million. This growth rate exceeded analyst expectations and signals the acceleration phase of enterprise AI infrastructure adoption.
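The growth rate follows directly from the two revenue figures. A minimal Python sketch of that arithmetic, using the rounded figures quoted above (the function and variable names are illustrative and do not come from Nebius's reporting):

```python
def yoy_growth_pct(current: float, prior: float) -> float:
    """Percentage change from the prior-period value to the current one."""
    if prior <= 0:
        raise ValueError("prior-period value must be positive")
    return (current - prior) / prior * 100.0


# Rounded figures from the article, in millions of USD.
q1_2026_revenue_musd = 399.0
q1_2025_revenue_musd = 50.9

growth = yoy_growth_pct(q1_2026_revenue_musd, q1_2025_revenue_musd)
multiple = q1_2026_revenue_musd / q1_2025_revenue_musd

print(f"Growth: {growth:.1f}% (revenue is {multiple:.2f}x the prior-year quarter)")
```

With the rounded inputs the result lands within a fraction of a point of the reported 684%. Note that a 684% increase means revenue is roughly 7.8 times the prior-year level, not 6.8 times.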

The company’s capacity is typically sold out, with multiple customers competing for every GPU it brings online. This demand-supply imbalance has given Nebius pricing power: the company noted that average selling prices have increased more than 50% in recent deals. The annualized run-rate revenue for the AI cloud business reached $1.9 billion as of Q1 2026, up from $1.25 billion in Q4 2025.

Many customers have transitioned from pilot AI workloads to longer-term reserved capacity contracts extending beyond 12 months, creating greater revenue predictability and reducing the company’s exposure to spot pricing volatility.

Strategic Partnership Impact

Nebius has signed multi-billion dollar agreements with major tech companies that transform its revenue visibility and growth trajectory.

The company signed a five-year strategic partnership agreement with Meta valued at up to $27 billion—the largest single contract in the company’s history. This agreement comprises $12 billion of dedicated capacity starting early 2027, plus up to $15 billion of optional additional capacity Meta may purchase over the same period. The partnership with Meta is expected to support Nebius’s long-term AI infrastructure goals, significantly increasing its contracted power guidance for the end of 2026.

Microsoft entered a multi-year capacity contract valued at $17.4 billion through 2031, potentially increasing to $19.4 billion if additional capacity or services are added. This arrangement covers compute capacity from data centers including the Vineland, New Jersey facility.
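To see how much of the headline contract value is committed versus contingent, the Meta and Microsoft figures can be split and summed. The sketch below is one way to do that; the `Contract` type and its field names are hypothetical, and treating the $12 billion and $17.4 billion amounts as firm commitments is an assumption based on how the deals are described here, not on the contract terms themselves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    customer: str
    firm_busd: float      # assumed committed value, in billions of USD
    optional_busd: float  # additional value that may or may not materialize


contracts = [
    Contract("Meta", firm_busd=12.0, optional_busd=15.0),
    # Optional portion is the $19.4B ceiling minus the $17.4B base.
    Contract("Microsoft", firm_busd=17.4, optional_busd=2.0),
]

firm_total = sum(c.firm_busd for c in contracts)
ceiling_total = sum(c.firm_busd + c.optional_busd for c in contracts)
optional_share = (ceiling_total - firm_total) / ceiling_total * 100.0

print(f"Firm: ${firm_total:.1f}B, ceiling: ${ceiling_total:.1f}B")
print(f"Share of ceiling that is optional: {optional_share:.0f}%")
```

Under these assumptions, more than a third of the combined ceiling depends on customers exercising options, which is worth keeping in mind when reading headline contract values.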

Nvidia invested $2 billion into Nebius, providing strategic access to upcoming GPU hardware including Vera Rubin and GB300 architectures, engineering collaboration, and early access to new rack and server designs. Nebius is targeting more than 5 gigawatts of deployed capacity globally by end of 2030 under this partnership.

Operational Metrics and Guidance

Adjusted EBITDA swung to a profit of $129.5 million from a loss of $53.7 million in the prior-year period, exceeding market expectations of $87.2 million. The core AI cloud business achieved 45% adjusted EBITDA margin in Q1 2026, while group adjusted EBITDA margin reached 32%.
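The group margin and the size of the year-over-year swing can be checked from the figures in this paragraph. A short sketch, with illustrative variable names and the article's rounded numbers as inputs:

```python
def margin_pct(profit_musd: float, revenue_musd: float) -> float:
    """Profit as a percentage of revenue."""
    return profit_musd / revenue_musd * 100.0


# Figures from the article, in millions of USD.
group_revenue_musd = 399.0
adj_ebitda_musd = 129.5
prior_year_adj_ebitda_musd = -53.7  # a loss in the prior-year period
market_expectation_musd = 87.2

group_margin = margin_pct(adj_ebitda_musd, group_revenue_musd)
swing_musd = adj_ebitda_musd - prior_year_adj_ebitda_musd
beat_musd = adj_ebitda_musd - market_expectation_musd

print(f"Group adjusted EBITDA margin: {group_margin:.1f}%")
print(f"Year-over-year swing: ${swing_musd:.1f}M")
print(f"Beat versus expectations: ${beat_musd:.1f}M")
```

The implied group margin from the rounded inputs is consistent with the reported 32%.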

Capital spending surged to approximately $2.5 billion in Q1 2026, compared with about $544 million a year earlier—a more than four-fold increase reflecting accelerated build-out of AI computing infrastructure to meet rising demand. The company has raised full-year CapEx guidance to $20-25 billion, up from previous guidance of $16-20 billion.
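A quick way to gauge the capital intensity described here is to compare capital spending with the prior year, with quarterly revenue, and across the two guidance ranges. The sketch below uses the approximate figures from the article; the variable names are illustrative.

```python
# Approximate figures from the article.
capex_q1_2026_musd = 2500.0
capex_q1_2025_musd = 544.0
revenue_q1_2026_musd = 399.0

capex_multiple = capex_q1_2026_musd / capex_q1_2025_musd
capex_increase_pct = (capex_multiple - 1.0) * 100.0
capex_to_revenue = capex_q1_2026_musd / revenue_q1_2026_musd

# Full-year CapEx guidance ranges, in billions of USD.
old_guidance_busd = (16.0, 20.0)
new_guidance_busd = (20.0, 25.0)
old_mid = sum(old_guidance_busd) / 2
new_mid = sum(new_guidance_busd) / 2
midpoint_raise_pct = (new_mid - old_mid) / old_mid * 100.0

print(f"CapEx is {capex_multiple:.1f}x the prior-year quarter (+{capex_increase_pct:.0f}%)")
print(f"CapEx is {capex_to_revenue:.1f}x quarterly revenue")
print(f"Guidance midpoint: ${old_mid:.1f}B -> ${new_mid:.1f}B (+{midpoint_raise_pct:.0f}%)")
```

Because both capital spending inputs are approximations, the computed increase of roughly 360% differs slightly from the 355% cited in the risk discussion; the gap presumably reflects rounding in the inputs.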

Nebius raised its full-year revenue guidance for 2026, projecting annual revenue between $3 billion and $3.4 billion, and annual recurring revenue of $7 billion to $9 billion. The company targets approximately 40% group adjusted EBITDA margin for the full year.
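The full-year guidance implies a steep ramp over the remaining three quarters. A small sketch of that arithmetic, assuming the Q1 figure of $399 million counts toward the full-year total (variable names are illustrative):

```python
# Figures from the article, in millions of USD.
q1_revenue_musd = 399.0
guidance_low_musd = 3000.0
guidance_high_musd = 3400.0
remaining_quarters = 3

remaining_low = guidance_low_musd - q1_revenue_musd
remaining_high = guidance_high_musd - q1_revenue_musd
avg_quarter_low = remaining_low / remaining_quarters
avg_quarter_high = remaining_high / remaining_quarters

print(f"Revenue still needed in Q2-Q4: ${remaining_low:.0f}M to ${remaining_high:.0f}M")
print(f"Average per remaining quarter: ${avg_quarter_low:.0f}M to ${avg_quarter_high:.0f}M")
print(f"Versus Q1: {avg_quarter_low / q1_revenue_musd:.1f}x to {avg_quarter_high / q1_revenue_musd:.1f}x")
```

In other words, hitting guidance requires the average remaining quarter to be more than double Q1, which depends on new capacity coming online on schedule.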

The company’s cash and cash equivalents reached a record high of $9.3 billion at the end of the first quarter, providing substantial resources for future investments. This liquidity is backed by approximately $4.34 billion in convertible notes and $2.0 billion raised via warrants.

However, the adjusted net loss (non-GAAP) widened to $100.3 million in Q1 2026 from $83.6 million a year earlier, reflecting ongoing depreciation and interest costs from infrastructure investments. Continuing operations showed GAAP net income of $621.2 million, but this was driven largely by a $780.6 million non-cash gain from equity investment revaluations, a non-recurring item.

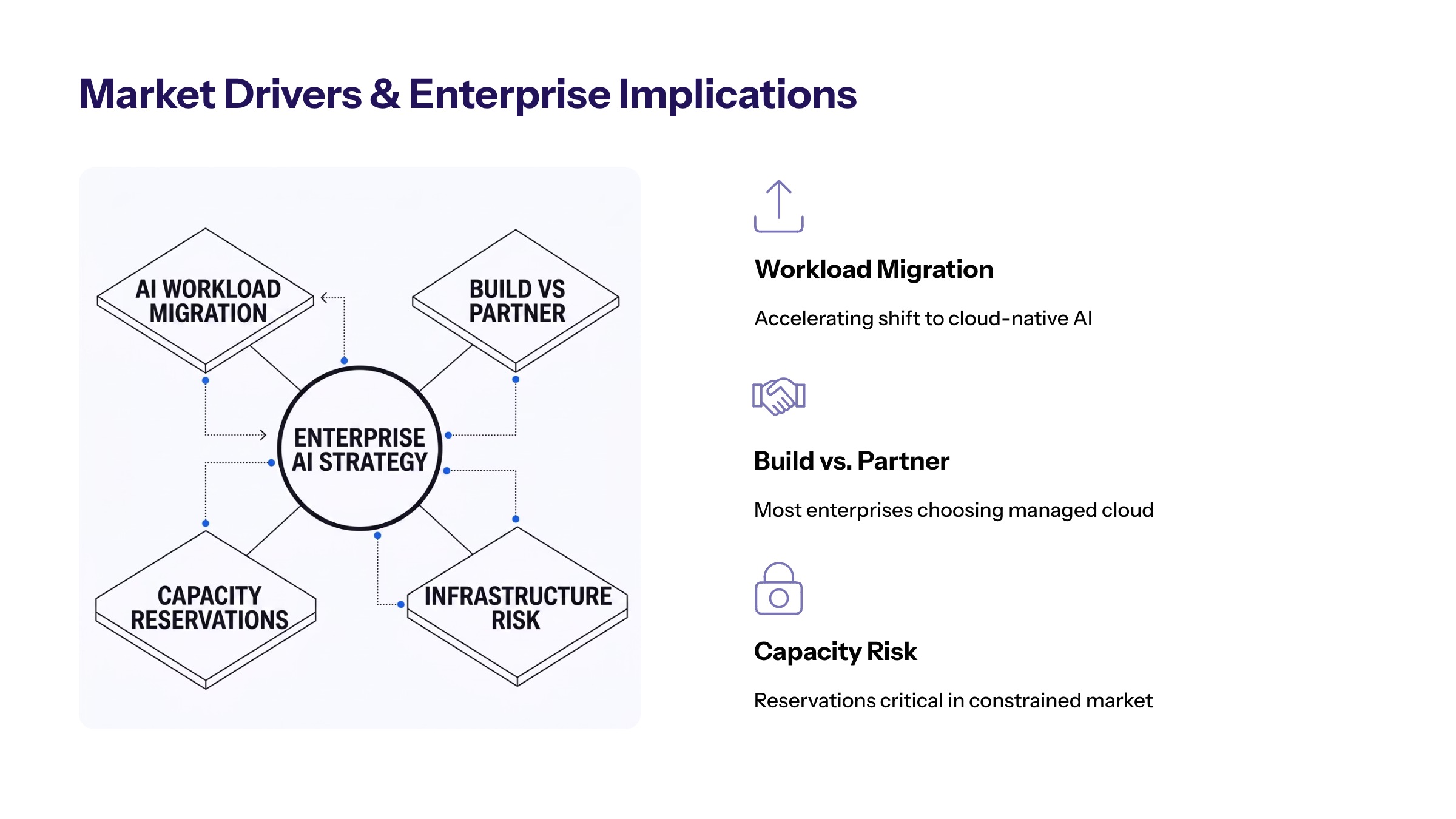

Market Drivers and Enterprise Implications

The AI infrastructure market is experiencing unprecedented demand as enterprises transition from experimental phases to production-scale deployments. Nebius’s financial results signal broader trends affecting enterprise AI strategy and investment planning.

Enterprise AI Workload Migration Timeline

Understanding deployment timelines helps enterprises plan infrastructure partnerships and budget allocations effectively.

Q2-Q3 2026: Pilot AI projects requiring specialized compute resources transition to production. Enterprises evaluate infrastructure partners and negotiate capacity reservations.

Q4 2026 - Q1 2027: Production AI workload deployment and scaling accelerates. Major capacity from partnerships like the Meta agreement begins coming online early 2027.

2027-2028: Full-scale AI integration across enterprise operations. Companies that secured infrastructure access gain competitive differentiation while capacity-constrained competitors face delays.

2028 and beyond: Advanced AI capabilities become table stakes. Infrastructure decisions made in 2026 determine competitive positioning.

Nebius announced a 1.2 GW AI factory in Pennsylvania while breaking ground on a gigawatt-scale site in Missouri to get ahead of 2027 server demand. These sites will take time to become operational: connected capacity often lags contracted or planned power by 12-24 months.

Build vs Partner Decision Framework

Enterprise decision-makers face fundamental choices about AI infrastructure sourcing. The following comparison outlines key evaluation criteria:

| Criterion | Build In-House | Partner with Provider (e.g., Nebius) |
|---|---|---|
| Capital Requirements | Very high ($500M+ for meaningful scale) | Lower upfront; operational expense model |
| Time to Deployment | 18-36 months for new facilities | Weeks to months depending on availability |
| Scalability | Limited by owned capacity | Flexible scaling with contract terms |
| Technology Currency | Risk of hardware obsolescence | Provider manages hardware refresh cycles |
| Operational Expertise | Requires specialized talent | Provider handles infrastructure management |
| Contract Flexibility | Full control | Lock-in considerations; exit terms matter |
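The capital-requirements row can be turned into a rough break-even comparison. The sketch below is deliberately simplified: only the $500M upfront figure comes from the table, while the annual operating cost and the annual provider fee are hypothetical placeholders. It also ignores the 18-36 month build time, hardware refresh, and discounting, all of which would push the break-even point later.

```python
from typing import Optional


def breakeven_year(
    build_capex_musd: float,
    build_annual_opex_musd: float,
    partner_annual_fee_musd: float,
    horizon_years: int,
) -> Optional[int]:
    """First year in which cumulative build cost is no higher than partnering.

    Returns None if building never catches up within the horizon.
    """
    for year in range(1, horizon_years + 1):
        build_total = build_capex_musd + build_annual_opex_musd * year
        partner_total = partner_annual_fee_musd * year
        if build_total <= partner_total:
            return year
    return None


# $500M capex is the table's lower bound; the other two inputs are hypothetical.
year = breakeven_year(
    build_capex_musd=500.0,
    build_annual_opex_musd=60.0,
    partner_annual_fee_musd=180.0,
    horizon_years=10,
)
print(f"Build breaks even in year: {year}")
```

With these placeholder inputs, building only pays off in year 5, which illustrates why contract length and expected utilization matter as much as headline pricing.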

Nebius’s strategic partnerships with hyperscale customers like Meta and Microsoft are transitioning the company from an electricity arbitrage model to a performance validation phase, indicating a shift in business strategy that enterprises should monitor.

Investment and Risk Considerations

Analysts have flagged high capital expenditures, potential margin pressure, and customer concentration risks as key challenges for Nebius as it scales its infrastructure. Several factors warrant attention:

Capital intensity: The 355% year-over-year increase in capital expenditures reflects massive infrastructure investment. While this builds capacity, it creates depreciation burdens and interest costs that will pressure margins.

Customer concentration: Significant revenue from Meta and Microsoft creates dependency. Contract renegotiation, reduced demand, or partner financial difficulties could materially impact Nebius.

Competitive dynamics: Nebius competes with AWS, Google Cloud, and Azure, which are investing heavily in GPU infrastructure with vertical integration advantages. CoreWeave and other neoclouds also target the same market segment.

Execution risk: Deploying gigawatt-scale capacity requires securing power connections, zoning, permits, labor, and GPU supply chain. Delays defer revenue realization and can reduce margin flow-through.

For investors evaluating Nebius shares, the proprietary cloud architecture and demand signal are compelling, but underlying operations still generate adjusted net losses despite operational improvements.

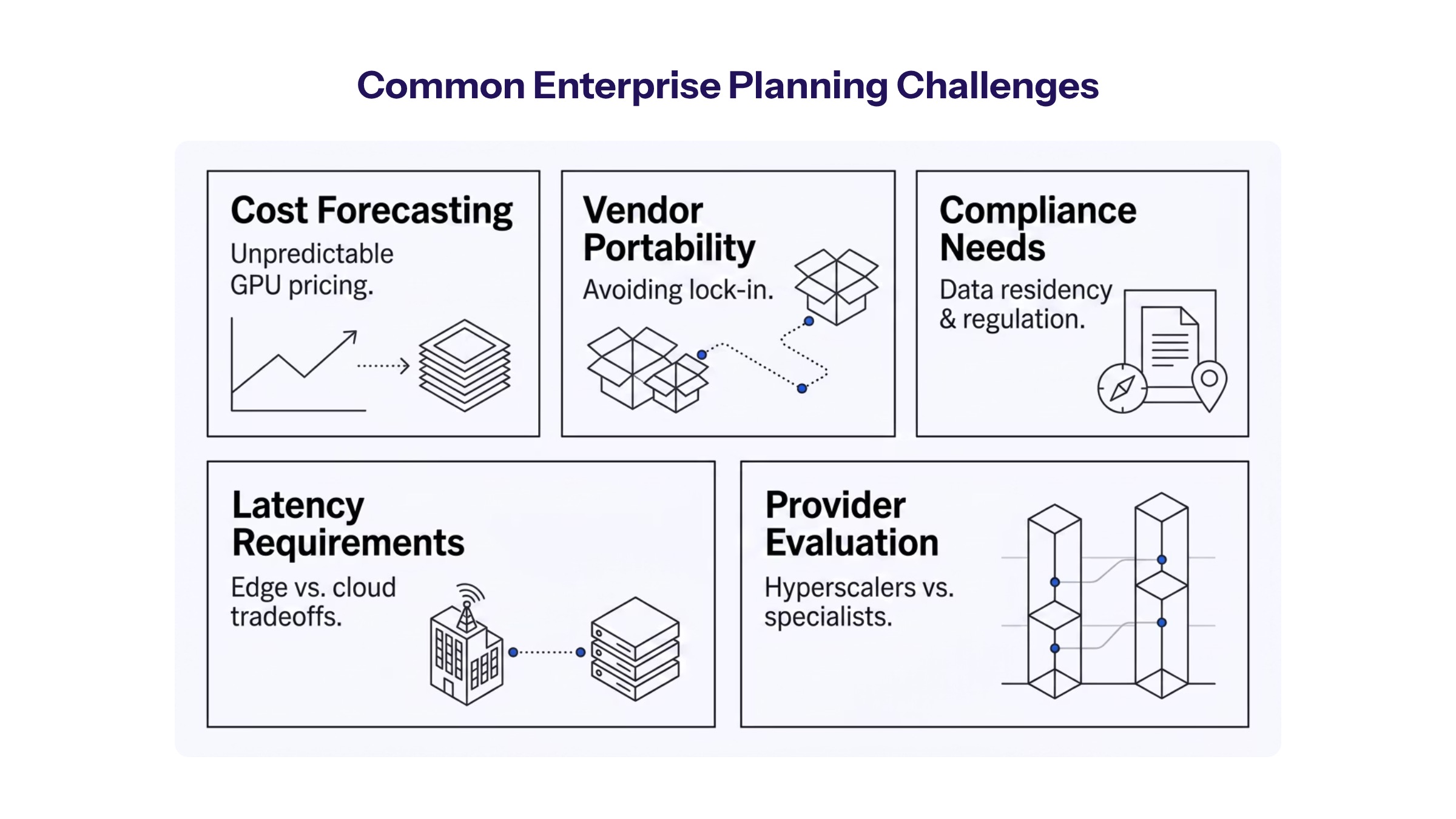

Common Enterprise Planning Challenges

Enterprises evaluating AI infrastructure partnerships encounter several recurring challenges. Addressing these proactively improves decision quality and reduces implementation risk.

AI Infrastructure Cost Forecasting

Estimating AI compute requirements involves significant uncertainty given rapidly evolving model architectures and scaling patterns. Enterprises should:

Build cost models based on training and inference workload projections, incorporating power consumption, hardware depreciation, and labor costs.

Compare total cost of ownership for reserved capacity versus on-demand pricing across multiple providers.

Plan for 50-100% annual growth in AI compute requirements during initial deployment phases.
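The growth assumption and the reserved-versus-on-demand comparison can be combined into a simple planning model. This is a minimal sketch: the 50% and 100% growth rates come from the guidance above, while the baseline GPU-hours, the hourly rates, and the reservation size are hypothetical values chosen only to make the arithmetic easy to follow.

```python
def project_demand(base_gpu_hours: float, annual_growth: float, years: int) -> list:
    """Compound annual demand, starting with the baseline year."""
    return [base_gpu_hours * (1.0 + annual_growth) ** y for y in range(years + 1)]


def blended_cost(demand: float, reserved_hours: float,
                 reserved_rate: float, on_demand_rate: float) -> float:
    """Reserved hours are paid for in full; overflow is bought on demand."""
    overflow = max(0.0, demand - reserved_hours)
    return reserved_hours * reserved_rate + overflow * on_demand_rate


BASE_GPU_HOURS = 1_000_000.0  # hypothetical baseline annual usage
RESERVED_RATE = 2.0           # hypothetical USD per reserved GPU-hour
ON_DEMAND_RATE = 3.0          # hypothetical USD per on-demand GPU-hour

low_case = project_demand(BASE_GPU_HOURS, 0.50, years=3)
high_case = project_demand(BASE_GPU_HOURS, 1.00, years=3)

# Cost in year 2 of the low case if 1.5M GPU-hours were reserved up front.
year2_cost = blended_cost(low_case[2], 1_500_000.0, RESERVED_RATE, ON_DEMAND_RATE)

print("Low case (50%/yr): ", [f"{h:,.0f}" for h in low_case])
print("High case (100%/yr):", [f"{h:,.0f}" for h in high_case])
print(f"Year-2 blended cost, low case: ${year2_cost:,.0f}")
```

The spread between the two cases after three years (about 3.4 million versus 8 million GPU-hours under these inputs) shows why reservation sizing is sensitive to the growth assumption.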

Vendor Lock-in and Portability Concerns

Dependence on a single AI infrastructure provider creates strategic risk. Mitigation strategies include:

Negotiating contract terms that permit workload portability without excessive exit costs.

Adopting containerization and orchestration tools that abstract infrastructure dependencies.

Maintaining relationships with multiple providers even if primary capacity comes from one partner.

Performance and Compliance Requirements

Enterprise AI workloads often involve sensitive data and regulatory constraints. Evaluation criteria should include:

Data residency requirements and geographic footprint of provider facilities.

Security certifications and audit capabilities.

Inference reliability and latency guarantees. Nebius’s Token Factory platform addresses enterprise concerns about trust, control, and simplicity in production AI deployments.

Monitoring and observability tools for model performance tracking.

Nebius’s acquisitions of Eigen AI (inference capabilities, ~$643 million) and Tavily (agentic search, ~$400 million) expand its stack beyond raw hardware into value-add software and model management—differentiators that may reduce enterprise implementation complexity.

Conclusion and Next Steps

Nebius’s 684% revenue growth in Q1 2026 confirms that AI infrastructure demand has moved from speculation to measurable market reality. The company’s strategic partnerships with Meta, Microsoft, and Nvidia, combined with aggressive capital spending and capacity expansion, position it as a significant player in the artificial intelligence infrastructure market.

However, the transition from revenue growth to sustainable profitability remains incomplete. High capital spending, depreciation burdens, and customer concentration create execution risk that investors and enterprise partners should monitor.

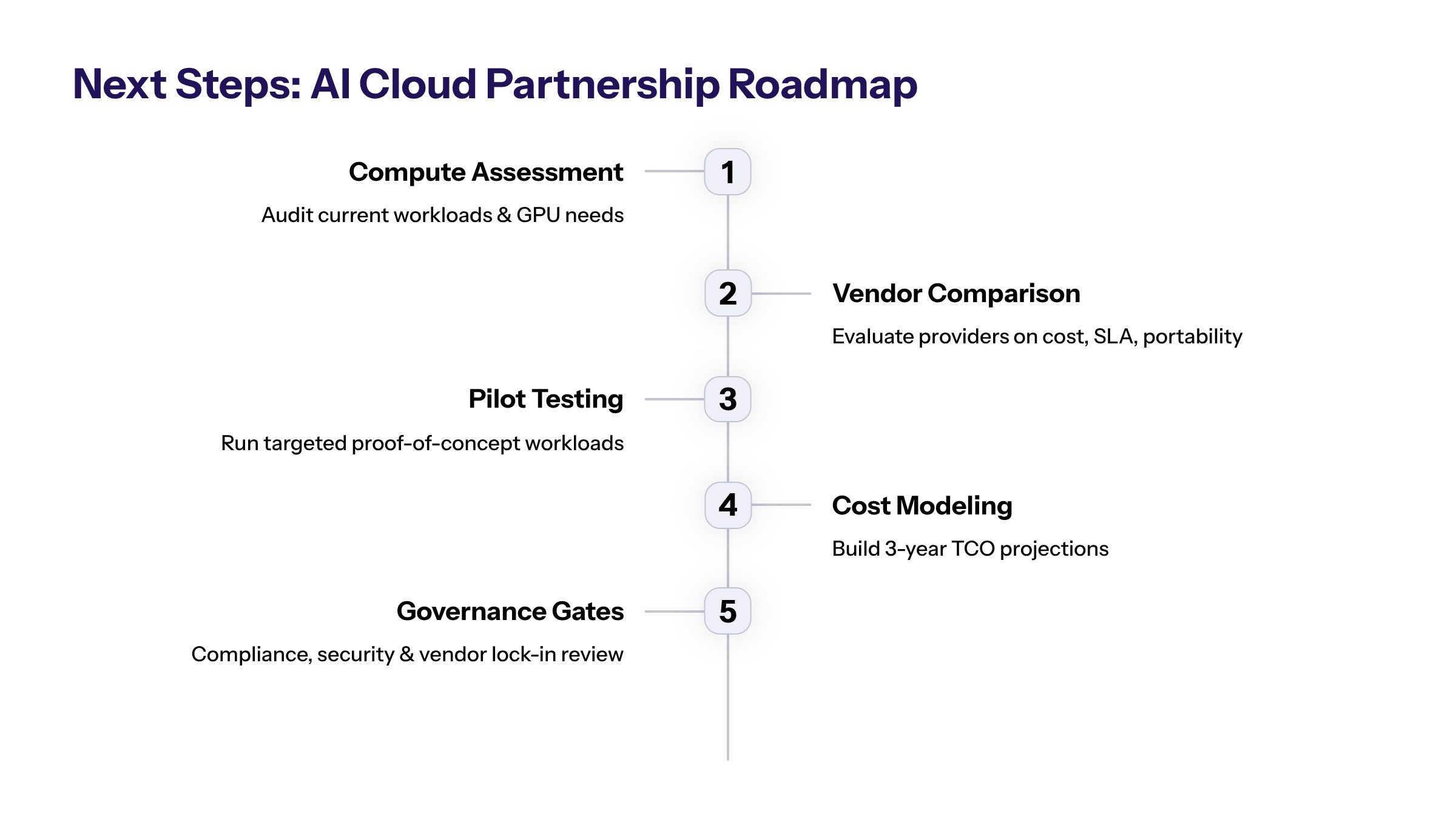

Immediate actionable steps for enterprise decision-makers:

Assess current and projected AI compute requirements across training and inference workloads

Evaluate infrastructure partnership options comparing Nebius, hyperscalers, and other neoclouds

Plan pilot projects that test infrastructure performance before committing to multi-year contracts

Model total cost of ownership scenarios across build, buy, and hybrid approaches

Establish governance frameworks for AI infrastructure vendor selection and risk management

Related topics to explore: AI-first architecture design principles, enterprise AI governance frameworks, cloud infrastructure cost optimization strategies, and data center sustainability requirements.

Additional Resources

Nebius Q1 2026 earnings release and investor presentation (SEC Form 6-K filing)

Enterprise AI infrastructure assessment frameworks comparing GPU cloud providers

Industry analysis reports on AI cloud market trends from major research firms

Power and capacity tracking resources for monitoring infrastructure buildout progress