OpenAI and Microsoft Reportedly Cap Revenue Sharing at $38B: What This Means for AI Partnerships

OpenAI and Microsoft have reportedly agreed to cap their revenue-sharing arrangement at $38 billion, a change that could reshape the competitive landscape of the artificial intelligence industry. If confirmed, the agreement marks a shift in one of technology’s most consequential partnerships, with implications extending well beyond the two companies.

This article examines the enterprise AI implications, partnership dynamics, and competitive landscape changes resulting from this reported agreement. The analysis targets enterprise decision-makers, technology leaders, and AI investment strategists who need to understand how this deal affects vendor relationships, procurement strategies, and long-term technology planning. Understanding these dynamics matters because strategic alliances in the AI industry are becoming central to the future of technology development, as companies seek to share infrastructure and technical expertise.

The $38 billion revenue-sharing cap may allow OpenAI to pursue additional strategic partnerships with major technology companies, including Amazon and Google, while maintaining its foundational relationship with Microsoft. This expanded flexibility could fundamentally alter how enterprises access and deploy AI services.

Key outcomes from this analysis include:

- Understanding the financial mechanics and strategic purpose of the revenue sharing cap
- Recognizing how this agreement creates room for new partnerships with cloud providers
- Evaluating competitive impacts across Microsoft Azure, AWS, and Google Cloud
- Identifying enterprise procurement implications and vendor selection changes
- Assessing security, compliance, and integration considerations for multi-cloud AI deployments

Understanding the Revenue Sharing Agreement

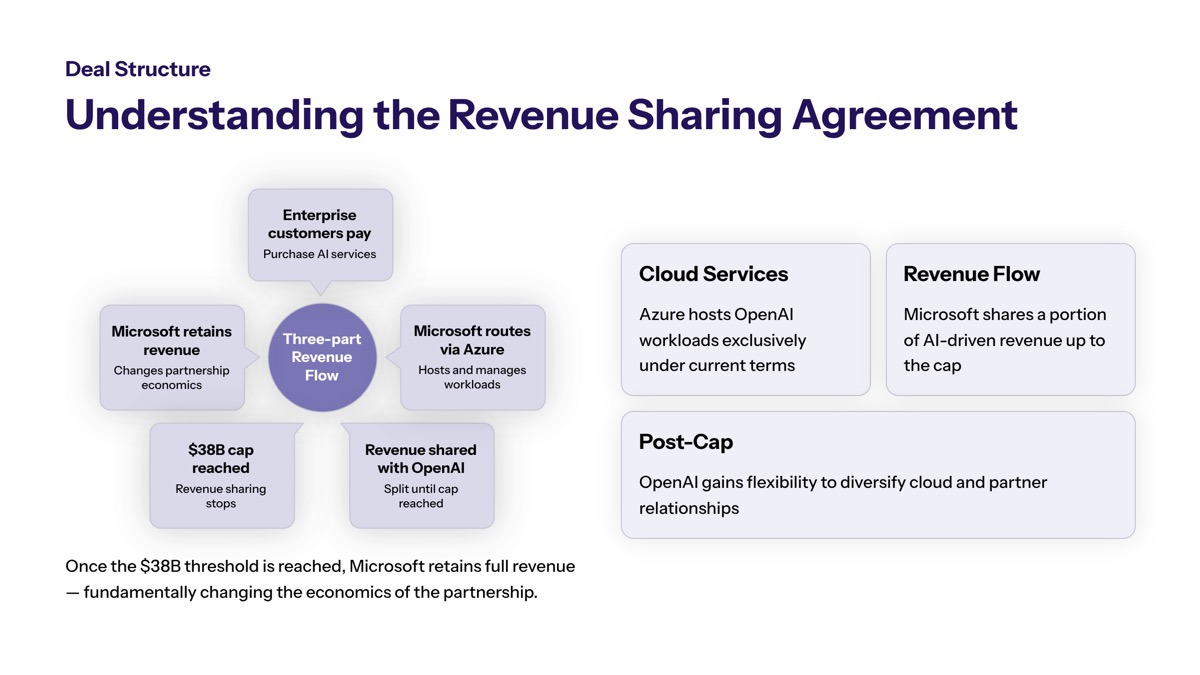

Revenue sharing caps in AI partnerships establish a ceiling on the total payments one company makes to another from generated revenue, creating financial predictability for both parties. In this context, the $38 billion cap limits OpenAI’s total future outflows to Microsoft under their revenue sharing arrangement, regardless of how much revenue OpenAI generates beyond a certain point.

The reported $38 billion figure relates directly to OpenAI’s commercial growth trajectory. With OpenAI reportedly generating approximately $2 billion per month in revenue—with roughly 40% from enterprise customers—the company’s rapid expansion makes this payment cap increasingly significant. As revenue continues to grow, the cap provides OpenAI with more flexibility to retain earnings and invest in development rather than face open-ended obligations to Microsoft.

The Microsoft-OpenAI Partnership Background

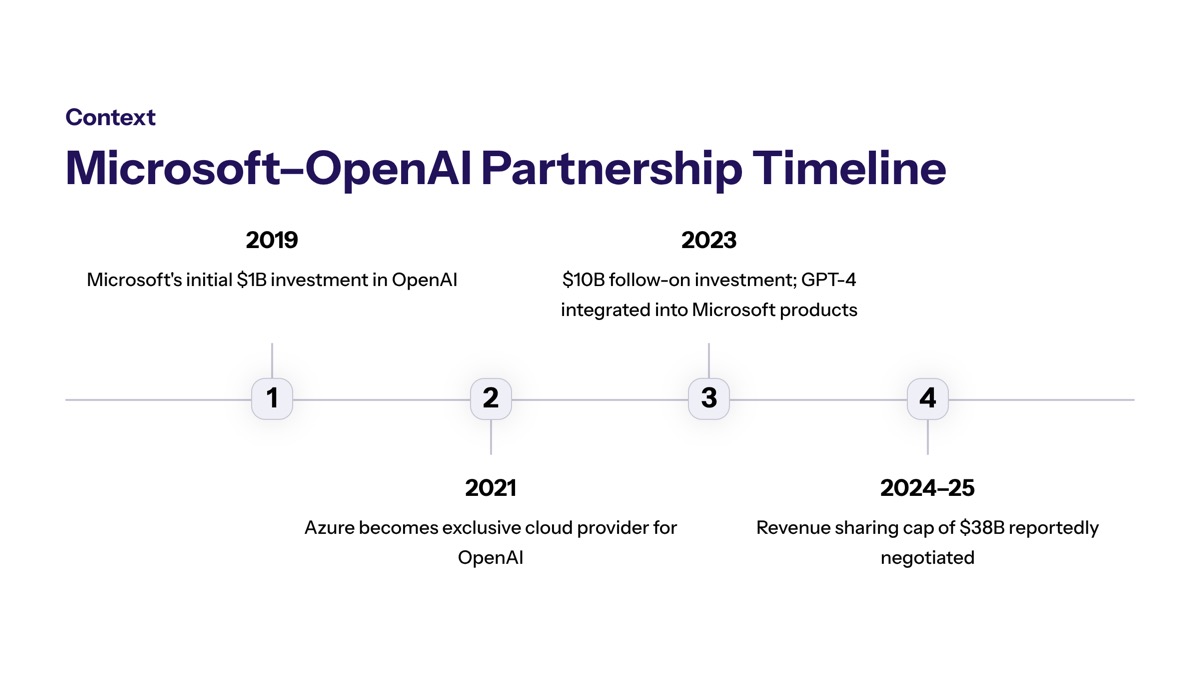

Microsoft first invested in OpenAI in 2019, providing not only capital but critical cloud infrastructure through Azure. This investment eventually grew to over $13 billion, establishing Microsoft as OpenAI’s primary technology partner and cloud provider. The collaboration included exclusivity obligations that granted Microsoft preferred access to license and commercialize OpenAI technologies.

The previous revenue sharing arrangements tied payments to various milestones, including advances toward Artificial General Intelligence (AGI). Under the new terms, revenue sharing payments continue at the same percentage as previously agreed but are now decoupled from performance milestones. Microsoft’s payments to OpenAI for reselling OpenAI’s services will cease entirely, so revenue-sharing payments now flow in one direction only, from OpenAI to Microsoft.

What the Cap Means Financially

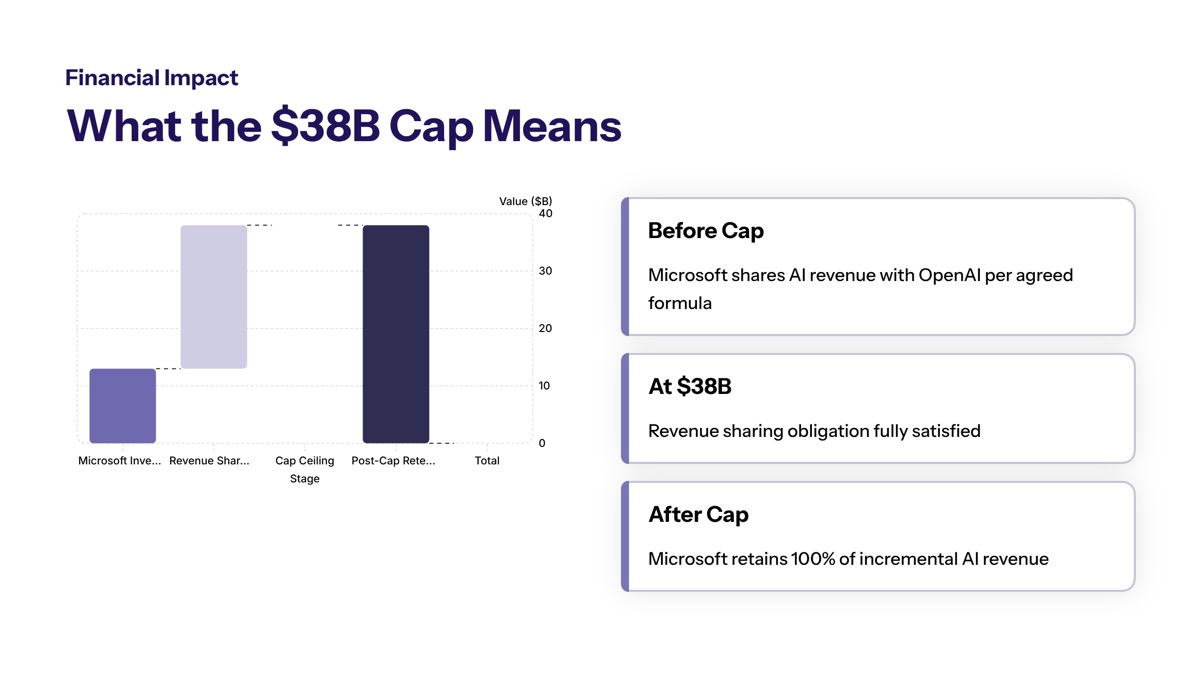

The cap of $38 billion limits OpenAI’s total revenue sharing payments to Microsoft through 2030. Once total payments reach this ceiling, they stop—even if OpenAI’s revenue continues its growth trajectory. Some projections suggest the revised agreement could yield up to $97 billion in savings for OpenAI compared to open-ended obligations under older terms.
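The cap mechanics can be sketched in a few lines of Python. This is a minimal illustration, not the actual contract terms: the $38 billion cap and the roughly $2 billion in monthly revenue are the figures reported in this article, while the 20% share rate, the flat-revenue assumption, and the function name `simulate_capped_share` are hypothetical placeholders chosen only to make the arithmetic concrete. Amounts are integers in millions of USD and the rate is in basis points so the arithmetic stays exact.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CapResult:
    months_to_cap: Optional[int]  # month in which the cap is hit, or None if never
    total_paid: int               # cumulative payments, in millions of USD


def simulate_capped_share(monthly_revenue: int, share_bps: int,
                          cap: int, months: int) -> CapResult:
    """Accumulate monthly revenue-share payments, never exceeding the cap.

    Amounts are integers in millions of USD; share_bps is the share rate
    in basis points (2000 = 20%).
    """
    total_paid = 0
    months_to_cap = None
    for month in range(1, months + 1):
        owed = monthly_revenue * share_bps // 10_000
        payment = min(owed, cap - total_paid)  # final payment is truncated at the cap
        total_paid += payment
        if months_to_cap is None and total_paid >= cap:
            months_to_cap = month
    return CapResult(months_to_cap, total_paid)


# From the article: roughly $2B monthly revenue and a $38B cap.
# ASSUMPTION: the 20% rate and flat revenue are placeholders, not contract terms.
result = simulate_capped_share(monthly_revenue=2_000, share_bps=2_000,
                               cap=38_000, months=120)
print(result.months_to_cap, result.total_paid)  # 95 38000
```

Under these placeholder inputs the cap is reached in month 95, after which payments drop to zero. Faster revenue growth or a higher rate would bring that month forward, which is why the ceiling matters more as revenue climbs.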

This financial restructuring connects directly to OpenAI’s valuation, which has reportedly exceeded $800 billion in recent funding rounds. The renegotiation strengthens OpenAI’s financial position for a potential initial public offering (IPO) as early as late 2026. A capped, predictable obligation makes financial modeling more credible to investors and simplifies the company’s path to public markets.

Strategic Implications for AI Partnerships

With its payments to Microsoft reportedly capped at $38 billion, OpenAI may have more room to pursue strategic partnerships with other major technology companies, potentially reshaping the competitive landscape of the AI industry. This shift reflects a broader market dynamic in which collaboration and diversification increasingly drive competitive advantage.

OpenAI’s Expanded Partnership Flexibility

Analysts suggest that the revenue-sharing cap could create more flexibility for OpenAI to diversify its partnerships and pursue additional revenue opportunities. Under revised terms, Microsoft’s license to OpenAI’s intellectual property becomes non-exclusive through 2032, meaning OpenAI can now license its technologies to other cloud providers and partners.

OpenAI is pursuing a multi-cloud strategy to manage its computing needs, which are projected to cost tens of billions of dollars annually. The agreement specifies that OpenAI products continue to ship first on Azure unless Microsoft is unable to support the necessary capabilities. That exception opens the door to deployment across other cloud environments when requirements demand it.
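The Azure-first provision reads like a simple fallback rule, and the sketch below makes that logic explicit. It is illustrative only: the capability names, the provider catalog, and the `choose_cloud` function are hypothetical, and the article does not spell out how the agreement defines a capability Microsoft is unable to support.

```python
from typing import Dict, Optional, Set


def choose_cloud(required: Set[str],
                 capabilities: Dict[str, Set[str]],
                 primary: str = "azure") -> Optional[str]:
    """Prefer the primary cloud; fall back only if it lacks a required capability."""
    if required <= capabilities.get(primary, set()):
        return primary
    for provider, supported in capabilities.items():
        if provider != primary and required <= supported:
            return provider  # first alternative, in catalog order, that qualifies
    return None


# ASSUMPTION: a made-up capability catalog; it does not describe real provider features.
catalog = {
    "azure": {"baseline_inference", "feature_x"},
    "aws": {"baseline_inference", "feature_x", "feature_y"},
    "gcp": {"baseline_inference", "feature_z"},
}

print(choose_cloud({"baseline_inference"}, catalog))  # azure
print(choose_cloud({"feature_y"}, catalog))           # aws
print(choose_cloud({"feature_q"}, catalog))           # None
```

The primary cloud wins whenever it qualifies, even if an alternative also does, which mirrors the "ship first on Azure" wording.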

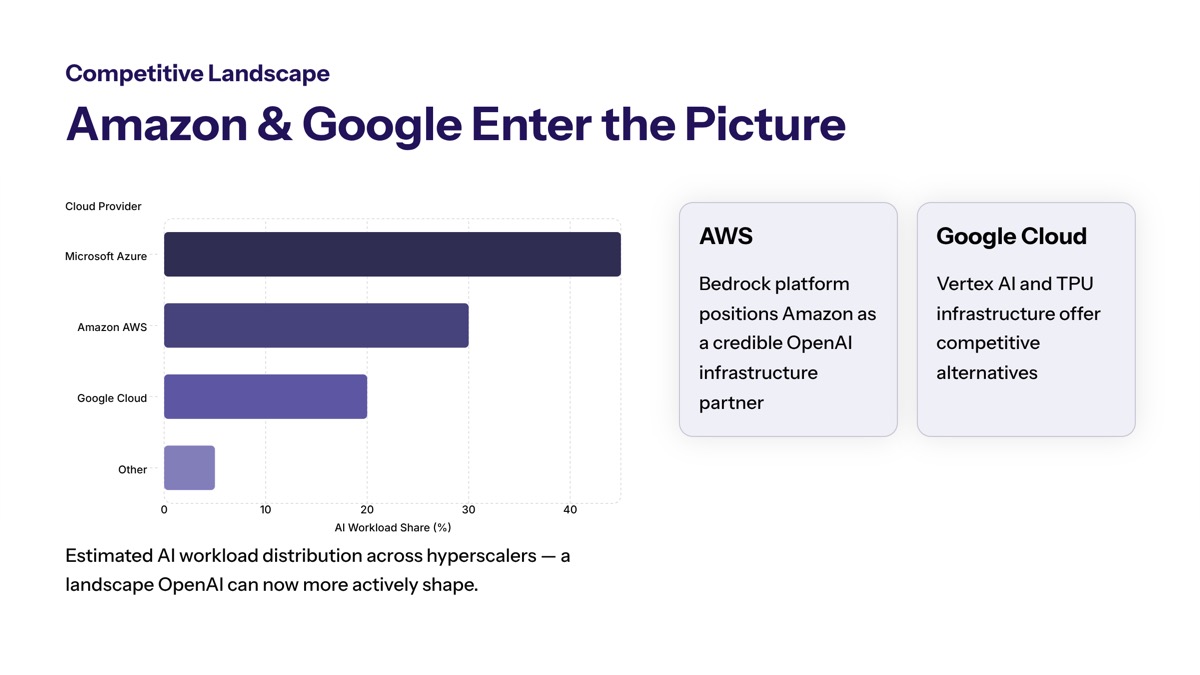

Amazon and Google Partnership Opportunities

The potential for OpenAI to engage more directly with Amazon and Google could increase competition within the AI industry, particularly among cloud providers. Amazon Web Services (AWS) has already reported “frankly staggering” inbound demand after opening up distribution channels with OpenAI, suggesting significant enterprise appetite for alternatives to Azure-exclusive deployment.

Google Cloud offers its own advantages for AI workloads, including custom TPU hardware optimized for machine learning and extensive experience with large-scale model deployment. Both AWS and Google provide enterprises with options to align AI services with existing cloud commitments, potentially improving performance through regionally optimized deployments.

Enterprise Customer Impact

Multiple partnerships could benefit enterprise AI adoption in several ways. Businesses that felt constrained by Microsoft-only deployment would gain flexibility in vendor selection, pricing negotiations, and architectural decisions.

Competition among cloud providers seeking to host OpenAI services could improve pricing, performance, and integration options. Enterprises can potentially achieve better SLAs, lower latency through regional deployment optimization, and enhanced redundancy through multi-cloud strategies. Companies worried about vendor lock-in gain room to optimize cost, compliance, and technical performance at the same time.

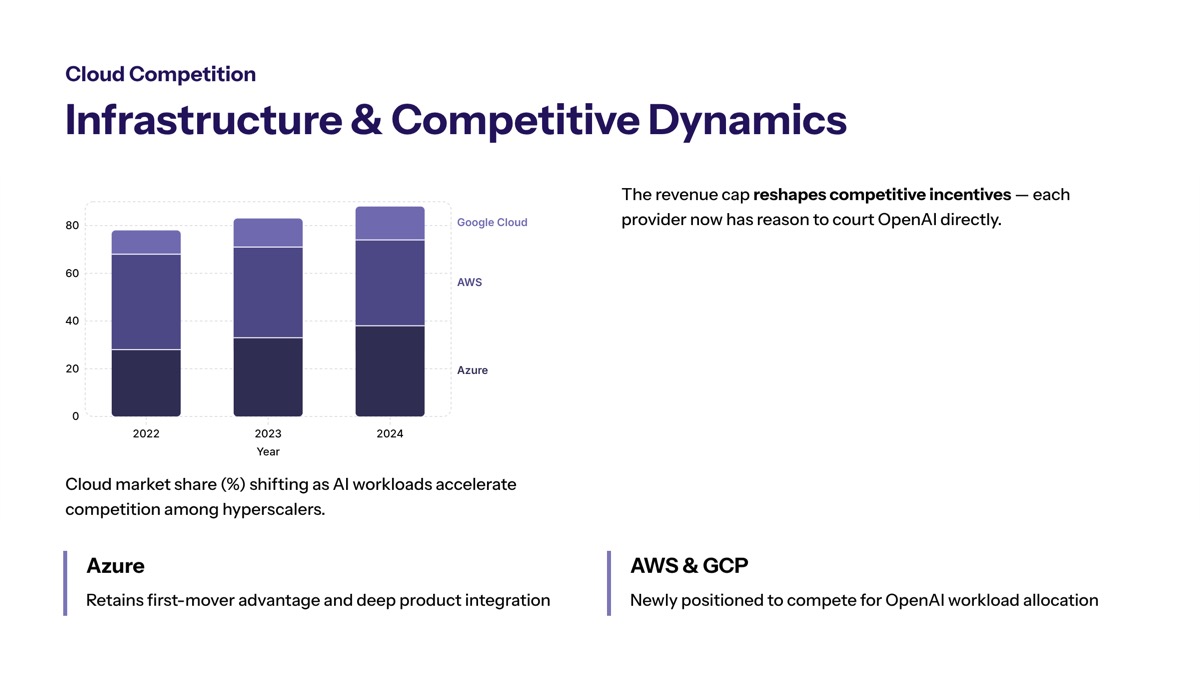

Impact on Cloud Infrastructure and Competition

Cloud computing has emerged as one of the most critical battlegrounds in artificial intelligence, as training and deploying advanced AI models requires enormous computational capacity. The AI industry is experiencing intense competition as major technology companies invest billions into AI infrastructure, cloud computing, and advanced machine learning systems.

Cloud Provider Competitive Dynamics

Microsoft, Amazon, and Google are all competing aggressively to dominate the rapidly expanding market for AI infrastructure and cloud computing services. Each platform brings distinct strengths to AI workload deployment:

| Criterion | Microsoft Azure | Amazon AWS | Google Cloud |
|---|---|---|---|
| AI Integration | Native OpenAI integration, Copilot ecosystem | Bedrock, SageMaker, custom silicon | Vertex AI, TPU infrastructure |
| Data Center Scale | Global footprint, enterprise focus | Largest global presence | Strong international coverage |
| Enterprise Features | Deep Microsoft 365 integration | Broadest service portfolio | Advanced analytics, BigQuery |
| Compliance Options | Extensive certifications | Industry-leading compliance | Strong regulatory coverage |
| Pricing Models | Enterprise agreements, hybrid options | Flexible consumption, savings plans | Sustained use discounts |

To support multi-cloud distribution of large language models, OpenAI must ensure consistency, reliability, and model performance across heterogeneous infrastructure—different GPUs, chips, networking architectures, and data center configurations.

Enterprise AI Procurement Changes

Enterprise procurement decisions may evolve through several stages as the new partnership terms take effect:

- Assess current Azure-exclusive OpenAI deployments for multi-cloud compatibility requirements
- Evaluate alternative cloud provider capabilities against specific AI workload needs
- Develop vendor selection criteria that account for new partnership flexibility
- Negotiate pricing and service terms that leverage increased competition among providers
- Implement governance frameworks supporting multi-cloud AI service management
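The vendor-selection step in the list above is often carried out with a weighted scoring matrix. The sketch below shows one way that could look. The criteria weights, the 1-to-5 scores, the vendor names, and the `rank_vendors` function are all invented for illustration and say nothing about how any real provider would score.

```python
from typing import Dict, List, Tuple


def rank_vendors(weights: Dict[str, int],
                 scores: Dict[str, Dict[str, int]]) -> List[Tuple[str, int]]:
    """Return (vendor, weighted total) pairs, highest total first."""
    totals = {
        vendor: sum(weights[criterion] * vendor_scores[criterion]
                    for criterion in weights)
        for vendor, vendor_scores in scores.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# ASSUMPTION: hypothetical weights (summing to 100) and hypothetical 1-5 scores.
weights = {"ai_integration": 30, "compliance": 30, "pricing": 20, "scale": 20}
scores = {
    "vendor_a": {"ai_integration": 4, "compliance": 5, "pricing": 3, "scale": 4},
    "vendor_b": {"ai_integration": 5, "compliance": 3, "pricing": 4, "scale": 4},
    "vendor_c": {"ai_integration": 3, "compliance": 4, "pricing": 5, "scale": 3},
}

print(rank_vendors(weights, scores))
# [('vendor_a', 410), ('vendor_b', 400), ('vendor_c', 370)]
```

Keeping the weights explicit makes the trade-off visible: here a strong compliance score outweighs a stronger AI integration score, and a different weighting would reorder the list.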

The AI sector has become one of the strongest drivers of investor interest, with companies associated with AI infrastructure and software development experiencing significant valuation growth over the past year. This market context influences how enterprises prioritize AI vendor relationships and infrastructure investments.

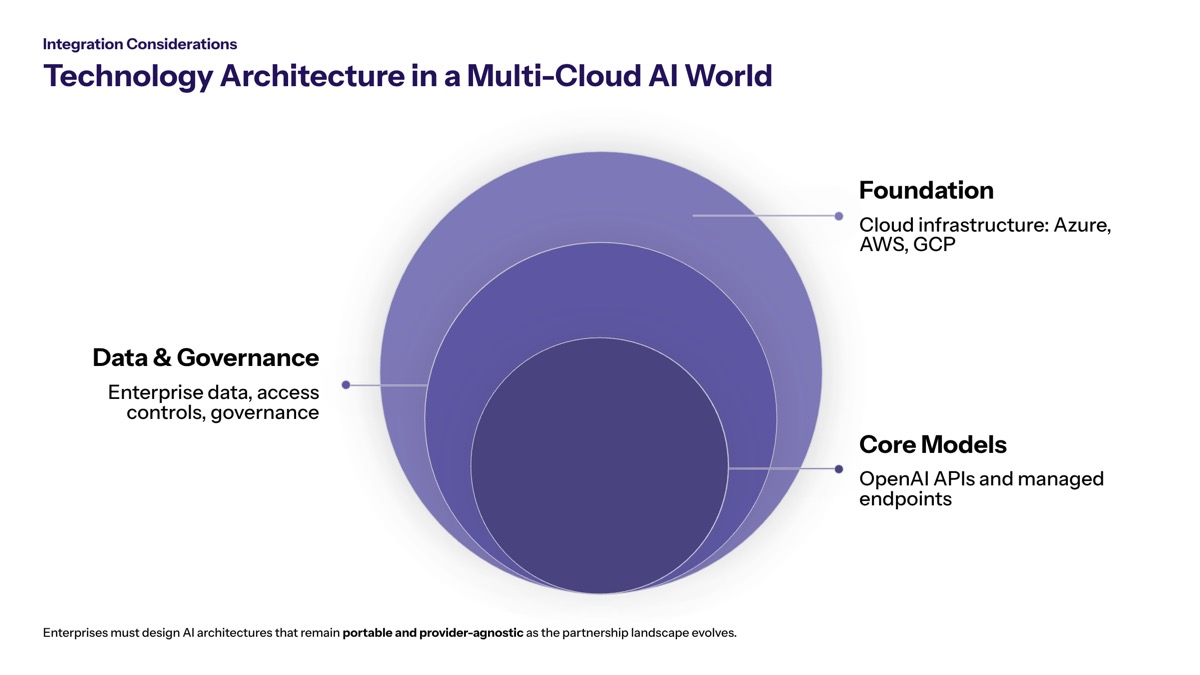

Technology Integration Considerations

Multi-cloud integration presents both challenges and opportunities for enterprise implementations. Differences in cloud provider infrastructure—GPU types versus TPUs, custom silicon options, networking capabilities—become more material when deploying AI services across multiple environments.

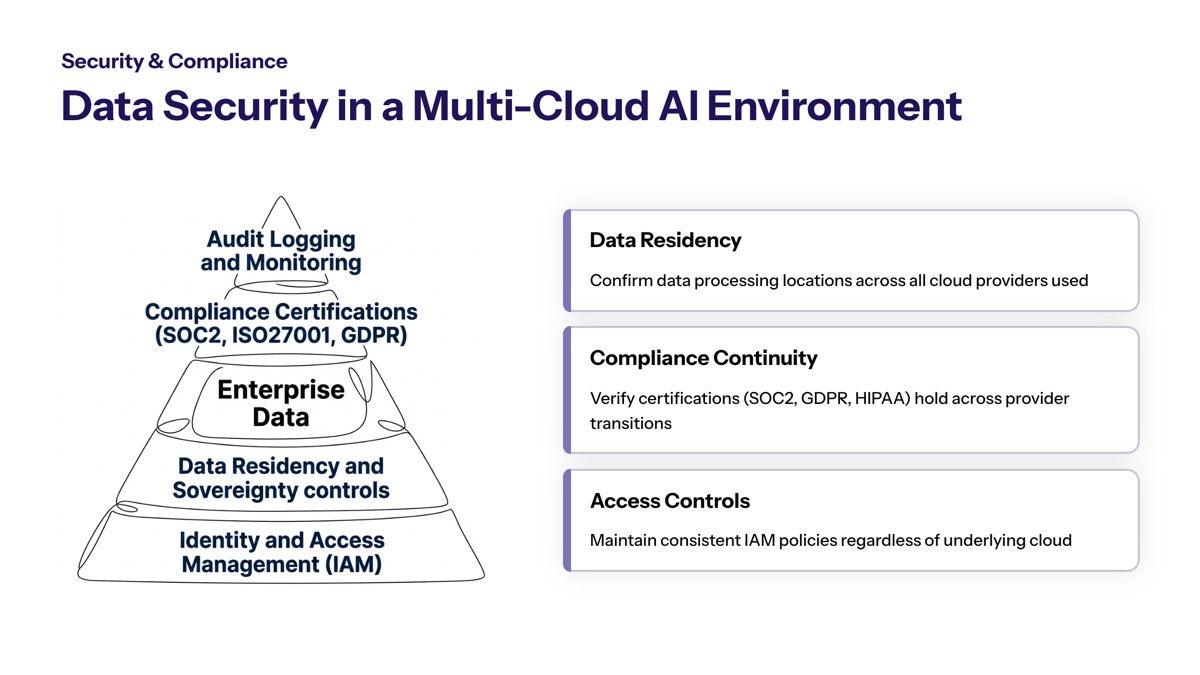

Security and compliance require careful attention. Data security, privacy, and compliance grow more complicated when models are deployed across multiple clouds. Each provider maintains different certifications, operates in different jurisdictions, and offers varying data sovereignty and audit capabilities. Enterprises in regulated industries such as healthcare, finance, and education must maintain a consistent security posture across whichever clouds host OpenAI services.

Common Questions and Concerns

As AI companies expand their partnerships and market influence, they are increasingly attracting scrutiny from regulators concerned about competition, data privacy, and antitrust issues. Enterprise stakeholders raise several important questions about how these partnership changes affect their operations.

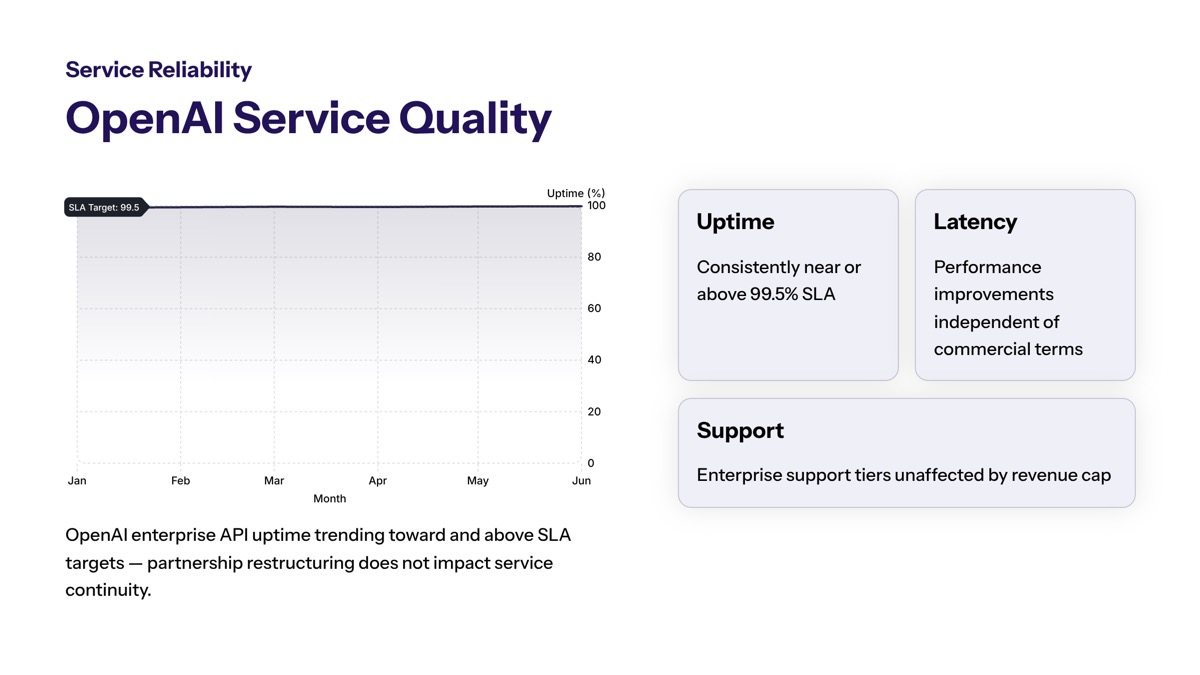

Will This Affect OpenAI Service Quality?

Service continuity and performance implications remain central concerns for enterprise customers dependent on OpenAI technologies. Microsoft retains “primary cloud partner” status, meaning OpenAI products continue to ship first on Azure unless Microsoft cannot support required capabilities. This arrangement suggests continued investment in Azure-based OpenAI services while potentially enabling performance improvements through competition.

The multi-cloud approach may actually improve service quality through redundancy and regional optimization. Enterprises unable to achieve desired latency or compliance through Azure-only deployment may find alternatives that better meet their specific requirements.

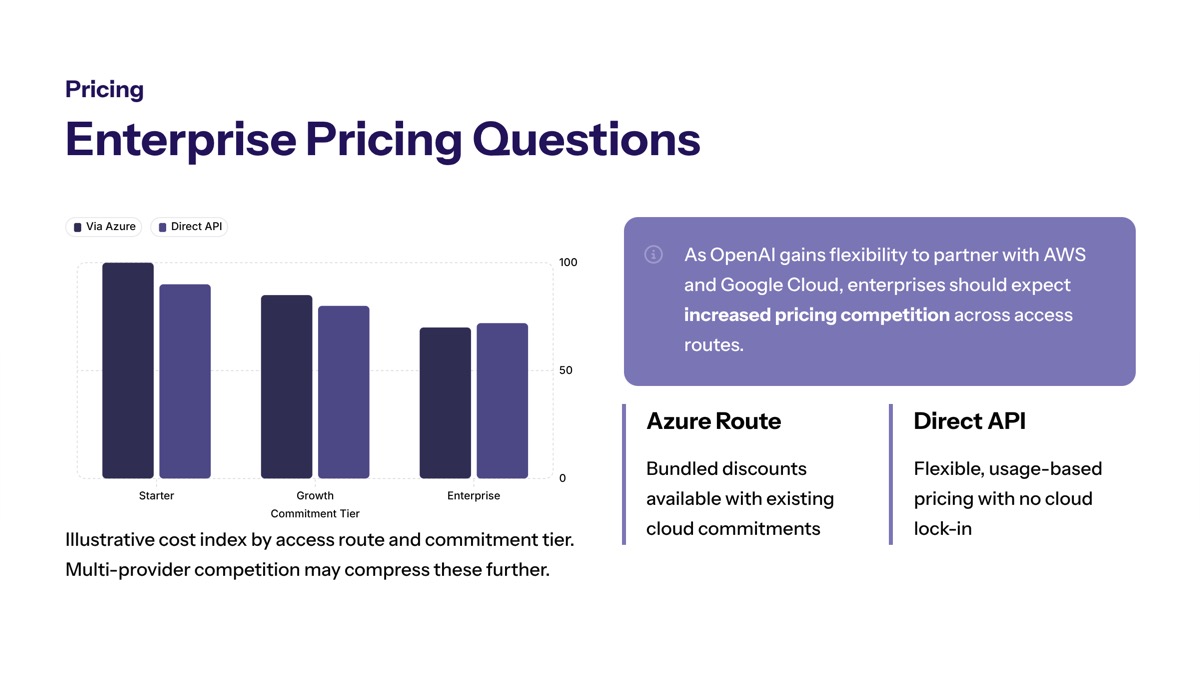

How Will Pricing Change for Enterprise Customers?

Potential pricing impacts stem from increased competitive dynamics among cloud providers. When multiple hyperscalers compete to host OpenAI services, enterprises gain negotiating leverage and pricing options that were previously unavailable.

However, support costs, integration complexity, and licensing fees may offset some savings. Enterprises should promptly verify how existing Azure-based OpenAI contracts accommodate potential multi-cloud deployment and what new terms might apply. Increased competition typically benefits customers, but implementation costs and transition complexity require careful analysis.
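A quick way to test whether negotiated savings survive the added costs is a net-savings calculation like the one sketched here. Every number is a made-up example in USD, and `net_annual_savings` is a hypothetical helper, not a pricing model from any provider.

```python
from typing import Dict


def net_annual_savings(annual_spend: int, discount_pct: int,
                       added_costs: Dict[str, int]) -> int:
    """Gross savings from a negotiated discount minus new multi-cloud costs."""
    gross_savings = annual_spend * discount_pct // 100
    return gross_savings - sum(added_costs.values())


# ASSUMPTION: illustrative figures only.
added = {"integration": 60_000, "support": 30_000, "licensing": 20_000}
print(net_annual_savings(1_000_000, 15, added))  # 40000
print(net_annual_savings(1_000_000, 10, added))  # -10000
```

In this example a 15% discount still leaves a net gain, while a 10% discount is more than consumed by integration, support, and licensing costs.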

What About Data Security and Compliance?

Multi-provider security considerations multiply when AI services span multiple cloud environments. Each cloud provider maintains different security architectures, compliance certifications, and data handling practices. Enterprises must ensure that data governance policies account for potential model deployment across Azure, AWS, or Google Cloud.

Regulatory compliance requires consistent controls regardless of where AI models execute. Organizations in heavily regulated industries should evaluate how multi-cloud AI deployment affects existing compliance frameworks and whether additional controls or certifications become necessary.
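The idea of consistent controls regardless of where models execute can be expressed as a simple gap check. The sketch below assumes a hypothetical set of required controls and hypothetical deployments named `cloud_a` and `cloud_b`; the control names and the `find_control_gaps` function are illustrative and do not correspond to any specific certification or provider.

```python
from typing import Dict, Set


def find_control_gaps(required: Set[str],
                      deployments: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Map each deployment to the required controls it is missing.

    Deployments that satisfy every required control are omitted.
    """
    gaps = {}
    for name, controls in deployments.items():
        missing = required - controls
        if missing:
            gaps[name] = missing
    return gaps


# ASSUMPTION: hypothetical controls and deployments, for illustration only.
required = {"encryption_at_rest", "audit_logging", "regional_data_residency"}
deployments = {
    "cloud_a": {"encryption_at_rest", "audit_logging", "regional_data_residency"},
    "cloud_b": {"encryption_at_rest", "audit_logging"},
}

print(find_control_gaps(required, deployments))
# {'cloud_b': {'regional_data_residency'}}
```

An empty result means every deployment meets the same baseline; any entry identifies where additional controls or certifications would be needed before workloads move.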

Conclusion and Next Steps

The reported $38 billion revenue sharing cap represents a significant shift in the AI industry’s most important partnership. OpenAI gains expanded flexibility to pursue new partnerships with Amazon and Google while Microsoft retains substantial value through its equity stake, non-exclusive licensing rights, and continued payments through 2030. For enterprise customers, this change opens the door to improved competition, pricing flexibility, and deployment options.

Immediate action items for enterprise technology leaders include:

- Review existing OpenAI service contracts for multi-cloud deployment implications
- Assess current cloud infrastructure against emerging AI workload requirements
- Develop vendor evaluation criteria that account for new partnership dynamics
- Engage procurement and legal teams on potential contract renegotiations
- Establish governance frameworks supporting multi-cloud AI service management

Related topics worth exploring include AI vendor management strategies that account for rapidly evolving partnerships, cloud strategy optimization for machine learning workloads, and partnership risk assessment frameworks that help enterprises navigate the changing competitive landscape.

Additional Resources

Enterprise AI partnership evaluation frameworks should address vendor stability, partnership flexibility, and long-term technology roadmaps. Organizations evaluating AI infrastructure commitments benefit from structured assessment tools that compare cloud provider capabilities across relevant dimensions.

Cloud infrastructure comparison tools for AI workloads help enterprises match specific requirements—model size, inference latency, training compute, geographic distribution—to provider capabilities. These tools increasingly incorporate multi-cloud deployment scenarios as partnership terms evolve.

Regulatory compliance guides for multi-cloud AI deployments address the complex intersection of AI governance, cloud security, and industry-specific requirements. As AI services potentially span multiple cloud environments, comprehensive compliance frameworks become essential for risk management and regulatory confidence.