Palo Alto Says New AI Models Found Seven Times More Vulnerabilities: A Security Breakthrough

Palo Alto Networks announced in May 2026 that frontier AI models discovered 75 vulnerabilities across its portfolio of more than 130 products in a single month, which the company reports as a 7x increase over its typical monthly findings of approximately 5 vulnerabilities. The company used Anthropic’s Claude Mythos and Claude Opus 4.7 alongside OpenAI’s GPT-5.5-Cyber to scan both SaaS-delivered and customer-operated products, marking a pivotal shift in how organizations identify and fix vulnerabilities.

This analysis covers AI-driven vulnerability scanning methodologies, implementation requirements for enterprise security operations, and strategic implications for teams managing complex software environments. Security professionals, development leaders, and risk managers will find actionable guidance for adapting their programs to the AI era of vulnerability discovery.

Palo Alto Networks used multiple models including Claude Mythos and GPT-5.5-Cyber under Project Glasswing and the Trusted Access for Cyber program, producing 26 CVEs from the 75 identified flaws. This represents the first time AI-driven scans produced the majority of vulnerabilities found in their security advisories.

Key outcomes from this analysis:

- Understanding why frontier AI models’ scanning capabilities exceed traditional static analysis tools
- Implementation requirements for enterprise AI scanning programs
- Timeline considerations for defensive action before AI-driven exploits become the new norm
- Strategic approaches for integrating AI into the software development lifecycle

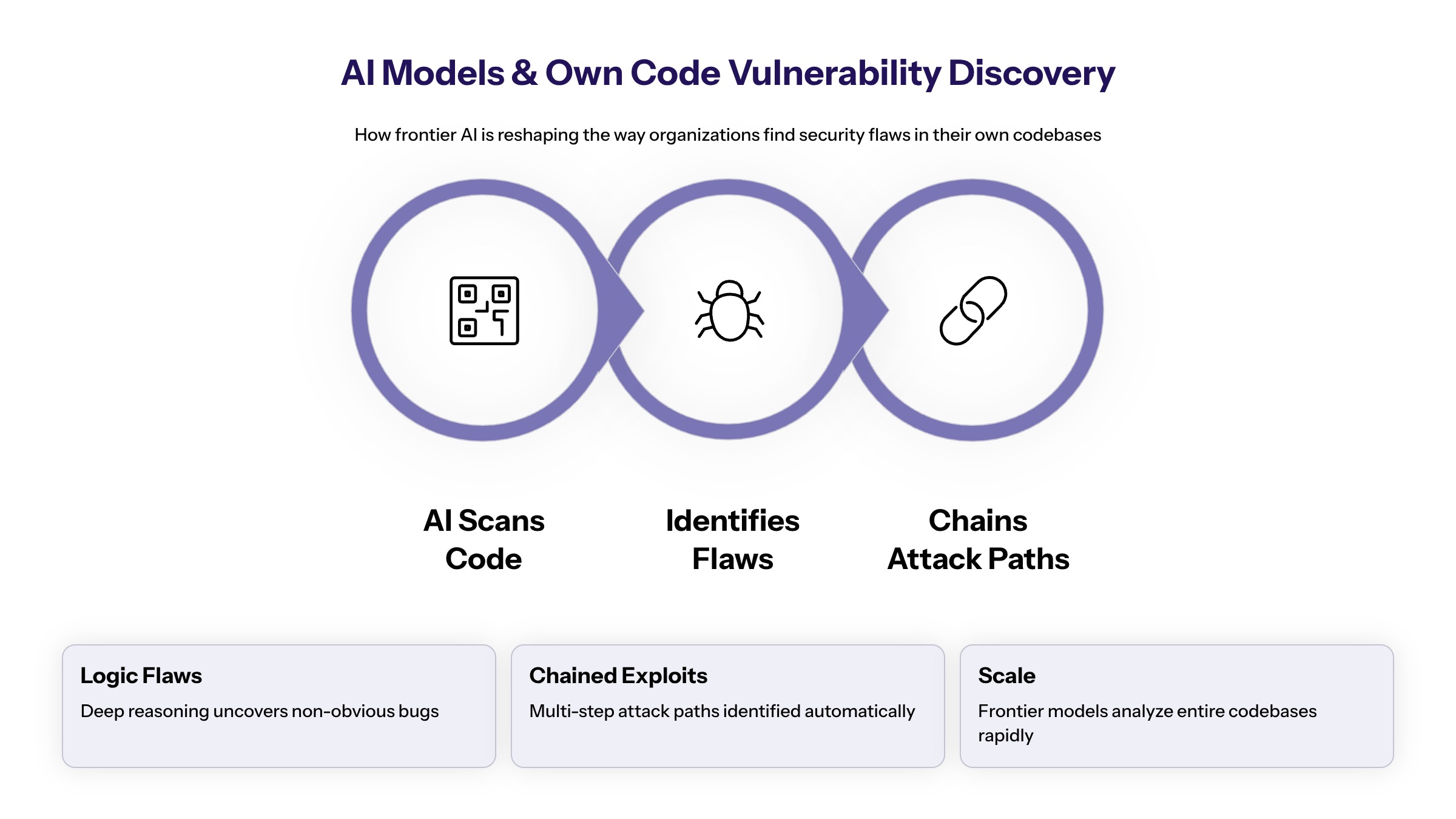

Understanding New AI Models and Their Impact on First-Party Code Vulnerability Discovery

Frontier AI models represent highly advanced large language models capable of deep code fluency, multi-step reasoning, and autonomous vulnerability chaining. Unlike traditional static application security testing tools, these new AI models can reason about context, logic flow, and emerging code paths to find vulnerabilities that conventional scanners miss. AI models have reached a level of coding capability where they can surpass most skilled humans at finding and exploiting software vulnerabilities, as demonstrated by the capabilities of models like Claude Mythos.

Frontier AI Security Models Including Claude Mythos

Palo Alto Networks employed three primary frontier AI models in their scanning initiative. Anthropic’s Claude Mythos achieves approximately 50% coding efficiency improvement over Claude Opus 4.6 in certain code fluency tasks. GPT-5.5-Cyber, released through OpenAI’s Trusted Access for Cyber program, demonstrates comparable performance in cyber security benchmarks. Claude Opus 4.7 rounds out the multi-model approach, each bringing distinct capabilities to vulnerability identification.

Microsoft’s MDASH system provides an industry comparison, orchestrating over 100 specialized AI agents including both frontier and distilled models. In a record Patch Tuesday, MDASH helped Microsoft identify 17 new vulnerabilities, though Palo Alto’s results demonstrate even greater scale through their multi-model approach.

Multi-Model Scanning Approach to Detect Vulnerabilities in First-Party Code

Palo Alto Networks employed multiple models rather than relying on a single system to maximize detection coverage. Each model brings different strengths to code analysis: some excel at identifying logic flaws, while others are better at spotting configuration vulnerabilities. Traditional Static Application Security Testing (SAST) tools and dependency scanners alone are insufficient, because they fail to identify the full-stack logic flaws that advanced AI exposes.
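One way to picture the multi-model approach is as a union of per-model findings, deduplicated so that reviewers can see where models agree. The following is a minimal sketch under stated assumptions: the model names come from this article, but the `Finding` fields, the `merge_findings` helper, and the sample data are hypothetical and do not describe Palo Alto's actual pipeline.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A single model-reported issue (hypothetical schema for illustration)."""
    model: str      # which model reported it
    file: str       # affected source file
    weakness: str   # short weakness label, e.g. "auth-bypass"


def merge_findings(findings):
    """Group findings by (file, weakness) and record which models reported each."""
    merged = defaultdict(set)
    for f in findings:
        merged[(f.file, f.weakness)].add(f.model)
    return dict(merged)


# Illustrative data only; not real scan output.
reports = [
    Finding("claude-mythos", "api/session.py", "auth-bypass"),
    Finding("gpt-5.5-cyber", "api/session.py", "auth-bypass"),
    Finding("claude-opus-4.7", "deploy/config.yaml", "weak-default"),
]

merged = merge_findings(reports)
for (file, weakness), models in sorted(merged.items()):
    print(f"{file}: {weakness} (reported by {len(models)} model(s))")
```

Issues reported by more than one model could be validated first, while single-model findings still stay in the queue, since the point of using several models is that each may catch what the others miss.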

Effective AI scanning requires robust context requirements and threat intelligence integration. Models perform optimally when provided with architecture documentation, deployment configurations, and historical vulnerability data. This contextual awareness enables AI to identify not just individual bugs but complete attack paths that chain multiple weaknesses.

Scale and Coverage Expansion Enabled by New AI Models

AI scanning enabled Palo Alto to analyze their entire portfolio, including recent acquisitions that might otherwise take months to assess manually. AI can identify software vulnerabilities dramatically faster, accomplishing in three weeks what would typically take a human penetration tester a year. This expanded capability represents a fundamental shift in how organizations can approach first-party code security.

The breadth of coverage demonstrates that advanced AI models can autonomously expose, chain, and weaponize software flaws at unprecedented speeds. Analysis at machine speed creates both opportunities for defenders and urgent timelines for action.

Palo Alto Networks’ Implementation Strategy Using Claude Mythos and Other Models

Palo Alto’s May 2026 results emerged from a systematic approach to AI-driven vulnerability discovery. The collaboration among companies in Project Glasswing allows participants to leverage the capabilities of AI models like Claude Mythos to proactively identify and remediate vulnerabilities, thereby enhancing the overall security posture of their software.

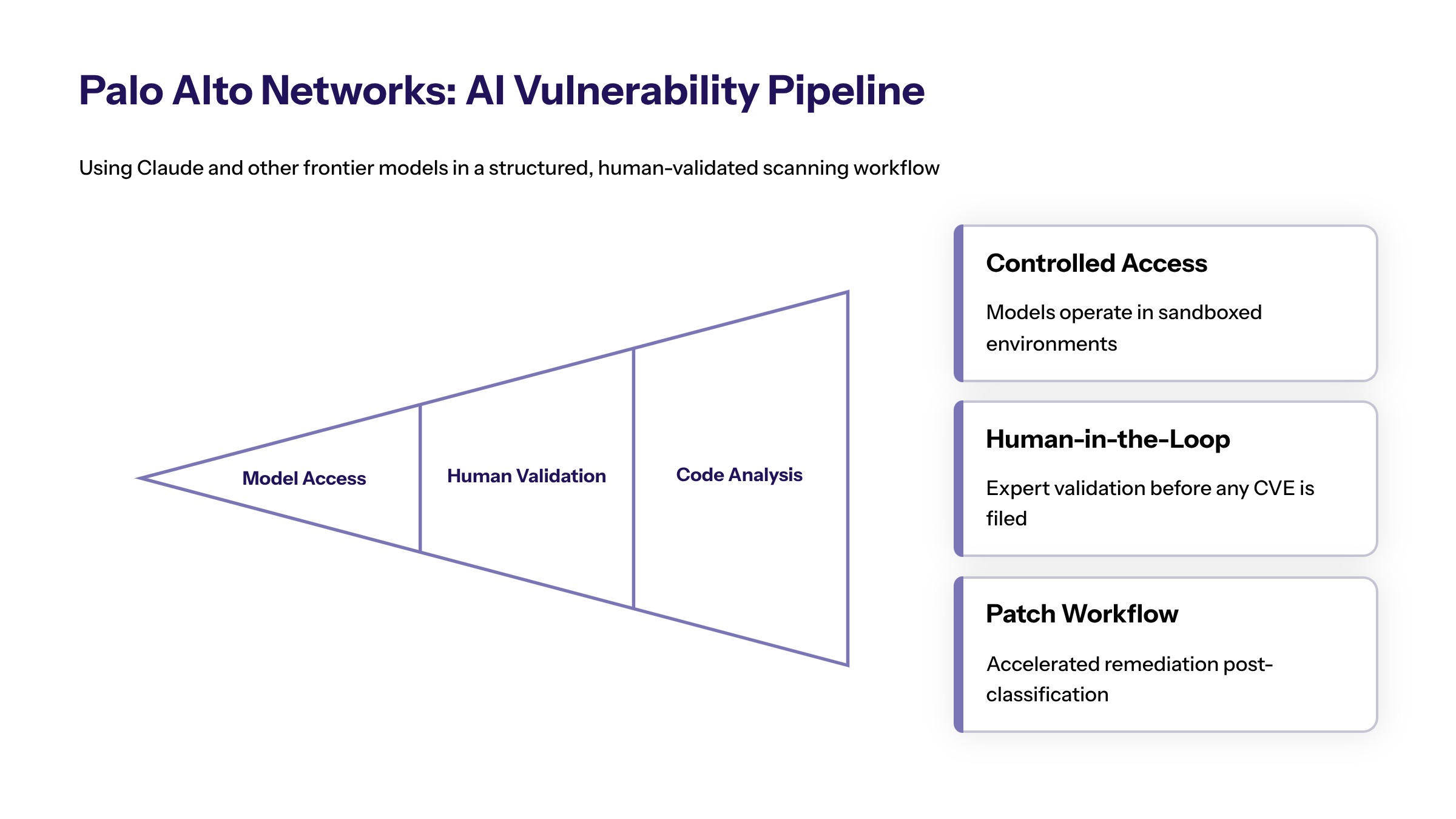

Scanning Pipeline and Methodology for First-Party Code

The multi-stage process began with early access under Project Glasswing (Anthropic) and Trusted Access for Cyber (OpenAI). AI models analyzed various layers—code base, logic flows, configuration, and dependencies—to detect vulnerabilities across the entire stack. Project Glasswing is a collaborative initiative involving major tech companies like Amazon Web Services, Microsoft, and Google, aimed at enhancing cybersecurity through the use of advanced AI models to identify and fix vulnerabilities in critical software systems.

After initial detection, human experts validated findings against their own code repositories, mapped issues into CVE classifications, and categorized severity levels. This integration with existing security development lifecycle practices ensures that AI-discovered vulnerabilities feed directly into established remediation workflows.
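The hand-off described above (AI detection, human validation, CVE classification, remediation) can be sketched as a small forward-only state machine. This is an illustrative sketch only: the `Stage` names and the `advance` helper are assumptions made for this example, not terminology from Palo Alto's process.

```python
from enum import Enum


class Stage(Enum):
    DETECTED = 1      # reported by an AI scan
    VALIDATED = 2     # confirmed by a human against the repository
    CLASSIFIED = 3    # mapped to a CVE and given a severity
    REMEDIATION = 4   # handed to the existing fix workflow


# Allowed forward transitions; anything else is rejected.
NEXT_STAGE = {
    Stage.DETECTED: Stage.VALIDATED,
    Stage.VALIDATED: Stage.CLASSIFIED,
    Stage.CLASSIFIED: Stage.REMEDIATION,
}


def advance(stage):
    """Return the next stage, or raise if the finding is already in remediation."""
    if stage not in NEXT_STAGE:
        raise ValueError(f"no stage after {stage.name}")
    return NEXT_STAGE[stage]


stage = Stage.DETECTED
path = [stage.name]
while stage in NEXT_STAGE:
    stage = advance(stage)
    path.append(stage.name)
print(" -> ".join(path))
```

Keeping the transitions explicit means an AI-reported finding cannot reach the remediation queue without passing through human validation first.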

Product Portfolio Coverage and Vulnerabilities Fixed

The scan encompassed over 130 product lines, including both SaaS-delivered and customer-operated products. Prioritization focused on products with larger customer bases, externally exposed interfaces, and sensitive security functions. This approach reflects the reality that organizations must prioritize faster detection and remediation of vulnerabilities as AI technologies change the threat landscape.
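The three prioritization criteria named above can be turned into a simple ranking. The sketch below is hypothetical: the equal weighting, the `Product` fields, and the sample portfolio are assumptions chosen for illustration, since the article does not describe how Palo Alto actually weighted these factors.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    customers: int            # size of the installed base
    externally_exposed: bool  # has internet-facing interfaces
    security_critical: bool   # handles sensitive security functions


def scan_priority(p, max_customers):
    """Score in [0, 3]: one point per criterion, customer base scaled to [0, 1]."""
    base = p.customers / max_customers if max_customers else 0.0
    return base + float(p.externally_exposed) + float(p.security_critical)


# Hypothetical portfolio; names and numbers are made up.
portfolio = [
    Product("internal-reporting", 200, False, False),
    Product("edge-gateway", 5000, True, True),
    Product("admin-console", 1200, True, False),
]

largest = max(p.customers for p in portfolio)
ranked = sorted(portfolio, key=lambda p: scan_priority(p, largest), reverse=True)
print([p.name for p in ranked])
```

A real program would likely tune the weights, but even an equal-weight score makes the scan order explicit and reviewable.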

Products gained through recent acquisitions received particular attention, as these often contain code bases that have not yet been held to the acquiring company’s security standards. An AI scanning harness enabled rapid assessment without requiring reviewers to be fully familiar with the code.

The 75 vulnerabilities consolidated into 26 CVEs through validation and classification processes. Palo Alto confirmed that none of these issues were being exploited in the wild at announcement time, providing a window for remediation before attackers could act. AI models can generate functional exploits from multiple combined flaws with a success rate of over 70%, making rapid patching critical.

For SaaS products, patches could be deployed immediately without customer action. Customer-operated products required standard development, testing, and deployment cycles, though Palo Alto accelerated these timelines given the volume of high-severity vulnerabilities discovered.

Technical Implementation and Industry Comparison of New AI Models

Building on Palo Alto’s results, enterprise security teams require clear performance benchmarks and implementation frameworks to evaluate AI scanning adoption.

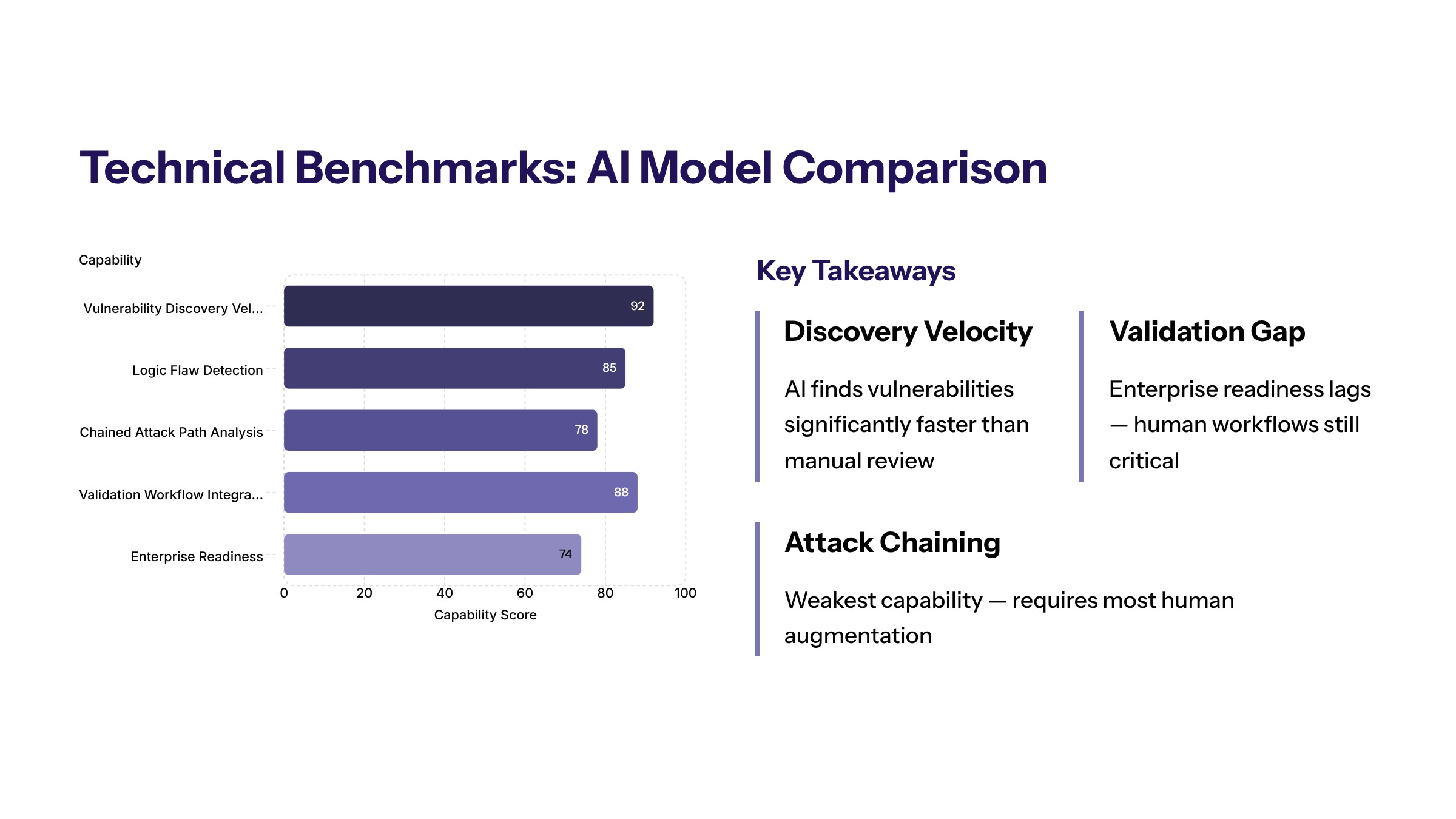

AI Model Performance Metrics Highlighting Claude Mythos

The quantitative impact is significant: Palo Alto reports that vulnerability discovery rose from approximately 5 per month to 75 in a single scan cycle, the jump behind the 7x figure that captured industry attention. Chief Product Officer Lee Klarich emphasized that many findings involved vulnerability chaining, in which multiple lower-severity bugs combine into a critical attack path. Because AI can assemble such chains, scoring systems that rate each flaw in isolation become ineffective for risk prioritization.
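Vulnerability chaining can be modeled, for defensive analysis, as path-finding over a graph in which each finding moves an attacker from one position to another. The sketch below assumes a made-up set of findings and state names; it only illustrates why individually low-rated issues can add up to a critical path, and is not drawn from the actual advisories.

```python
from collections import deque

# Each finding moves an attacker from one position to another.
# Tuple layout: (from_state, to_state, finding_id, individual_severity).
# All entries are hypothetical.
FINDINGS = [
    ("internet", "web-session", "F1 info leak", "low"),
    ("web-session", "service-account", "F2 weak token check", "medium"),
    ("service-account", "admin", "F3 permissive config", "low"),
    ("internet", "dmz-host", "F4 verbose error", "low"),
]


def find_chain(findings, start, goal):
    """Breadth-first search for the shortest sequence of findings from start to goal."""
    edges = {}
    for src, dst, fid, _sev in findings:
        edges.setdefault(src, []).append((dst, fid))
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, chain = queue.popleft()
        if state == goal:
            return chain
        for nxt, fid in edges.get(state, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, chain + [fid]))
    return None  # no chain reaches the goal


chain = find_chain(FINDINGS, "internet", "admin")
print(chain)
```

In this toy data, no single finding is rated above medium, yet three of them together lead from the internet to admin access, which is the kind of path a per-finding severity score would not surface.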

In benchmark testing, Mythos completed a 32-step simulated network attack (“The Last Ones”) end-to-end in 3 out of 10 attempts, while Claude Opus 4.6 averaged fewer completed steps. This demonstrates the ability of frontier AI to not just find flaws but to chain them into working exploits.

AI findings still include false positives and require human validation, though AI significantly improves the signal-to-noise ratio compared to traditional scanners. AI-based vulnerability detection is expected to lead to a surge in discovery and patching as organizations adopt AI scanning to identify flaws before they can be exploited.

Mozilla provides an industry comparison: in April 2026, they fixed 423 Firefox bugs—more than 5x March’s 76 fixes—reflecting how vendors are accelerating patch efforts under this new vulnerability flood. Organizations must adapt to an increased volume of security advisories, with many experiencing spikes in alerts due to vulnerabilities discovered by AI.

Enterprise Implementation Requirements for AI-Driven Exploits Defense

Infrastructure requirements include access to frontier-capable AI models through programs like Trusted Access for Cyber (TAC) or Project Glasswing, adequate compute resources, and secure environments with governance to prevent misuse. These AI capabilities call for a shift in how organizations approach security: integrating AI models directly into the software development lifecycle so that vulnerabilities are caught before they reach production.

Team expertise needs extend beyond traditional configuration scanning. Security personnel must understand code-level vulnerabilities, logic analysis, and proof-of-concept chaining to effectively validate AI outputs. The increase in development velocity using AI introduces a notable rise in software vulnerabilities, necessitating stronger secure coding practices.

Cost considerations include licensing for restricted models, validation engineering time, and patch development resources. To effectively counter AI-enabled attacks, companies must adopt extended detection and response systems combined with automated security operations center solutions.

Strategic Timeline Analysis for the New Norm of AI-Driven Exploits

| Phase | Defender Actions | Adversary Capabilities |
|---|---|---|
| Current (May 2026) | Frontier AI scanning by security vendors | Limited access to advanced models |
| 3-5 Months | Enterprise adoption of AI scanning | Increased access to AI exploitation tools |
| 6-12 Months | Integrated development lifecycle security | AI-driven exploits become standard |

Organizations have a narrow three-to-five-month window to outpace adversaries before AI-driven exploits become the new norm, highlighting the urgency for enhanced security measures. The emergence of advanced AI capabilities has significantly reduced the time between vulnerability discovery and exploitation, making it critical for organizations to implement robust security measures quickly.

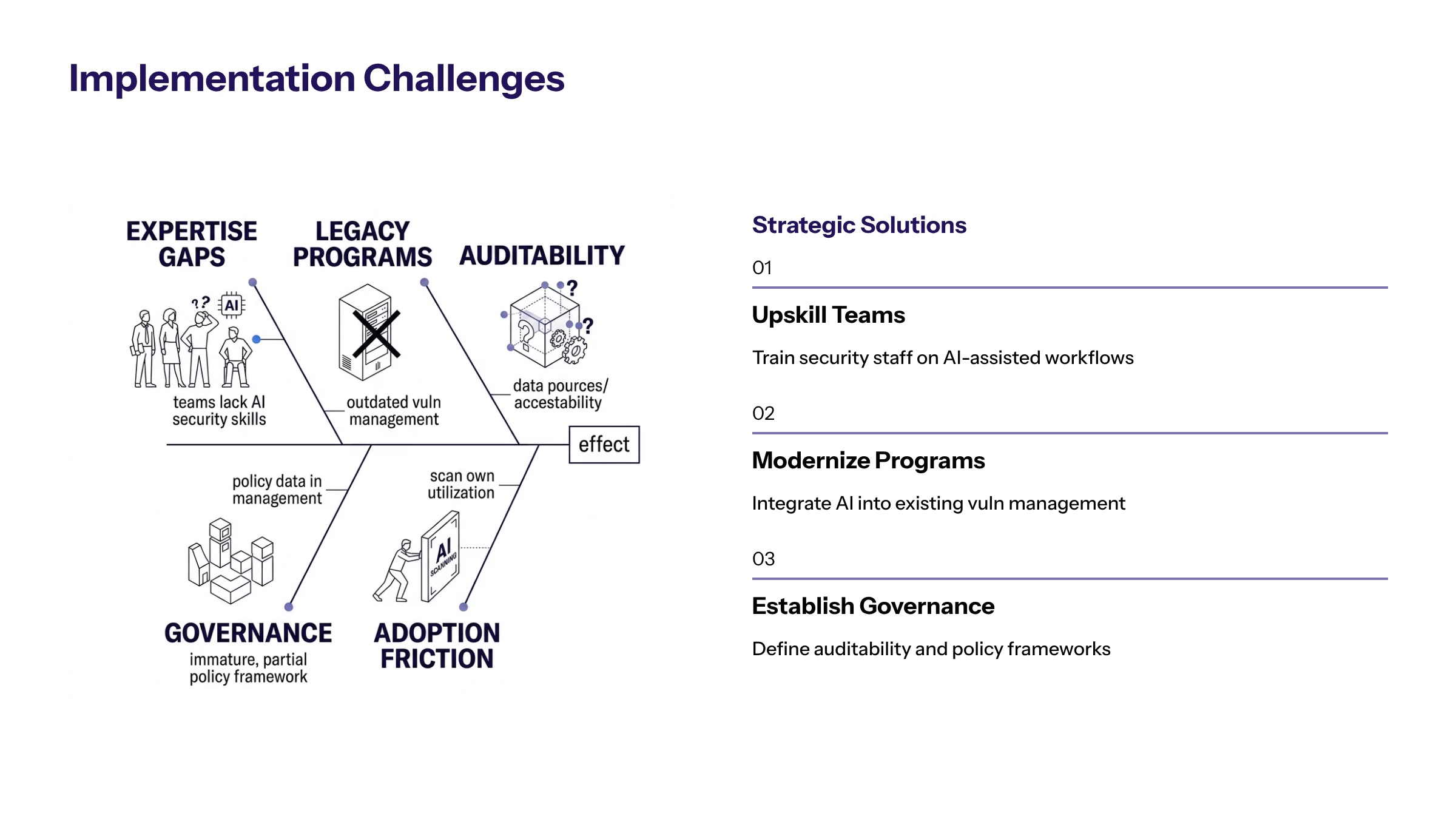

Implementation Challenges and Strategic Solutions for AI-Driven Exploits

Enterprise teams face practical barriers when adopting AI vulnerability scanning at scale. Addressing these challenges requires strategic planning and resource allocation as an immediate priority.

Resource and Expertise Constraints in Responding to New AI Models

Building internal capabilities for frontier AI model access requires vetting processes, secure infrastructure, and governance frameworks. Many organizations lack the internal expertise to interpret AI findings effectively. Cybersecurity requires a shift from traditional manual processes to real-time, automated defenses to manage the volume of AI-driven threats.

Training security teams involves developing skills in vulnerability validation, exploit chain analysis, and severity assessment. The shift toward AI-driven security measures requires organizations to implement autonomous defenses to match the speed of emerging threats. Teams must learn to identify when AI findings represent genuine risk versus false positives requiring dismissal.

Integration with Legacy Security Programs to Solve Vulnerability Challenges

Incorporating AI scanning results into existing vulnerability management programs requires workflow adaptation. Traditional programs operate on slower cadences—monthly patches, quarterly scans—that cannot accommodate the volume frontier AI produces. Virtual patching involves deploying AI-driven shields at the network layer as a temporary defense while permanent patches are developed.
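The cadence mismatch can be made concrete with a rough backlog projection. In the sketch below, the discovery rates of 5 and 75 per month are the figures reported in this article; the fix capacity of 20 per month and the assumption that the higher discovery rate is sustained for three months are invented for illustration.

```python
def project_backlog(discovered_per_month, fixed_per_month, months, start=0):
    """Return the open-finding backlog at the end of each month."""
    backlog = start
    history = []
    for _ in range(months):
        # Backlog cannot go negative: spare fix capacity is simply unused.
        backlog = max(0, backlog + discovered_per_month - fixed_per_month)
        history.append(backlog)
    return history


# Discovery figures come from the article; the fix capacity of 20 per month
# is a made-up assumption for illustration.
baseline = project_backlog(discovered_per_month=5, fixed_per_month=20, months=3)
ai_scale = project_backlog(discovered_per_month=75, fixed_per_month=20, months=3)
print(baseline)
print(ai_scale)
```

Under these assumed numbers, a team that comfortably clears the old volume accumulates a growing backlog at AI-scale discovery, which is the gap that interim measures such as virtual patching are meant to cover.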

Balancing automation with human expertise remains critical. While AI excels at discovery, determining business impact and prioritization requires contextual understanding that machines lack. Organizations have a narrow three-to-five-month window to upgrade software defenses before AI-driven zero-day attacks become prevalent.

Regulatory and Compliance Considerations for the Future of AI-Driven Security

For regulated industries, AI-driven scanning must be auditable and documented. CVE disclosures, proof-of-concepts, validation processes, and response timelines may have legal and regulatory implications. A Zero Trust security model, which verifies every identity and request rather than trusting network location, is essential in environments increasingly affected by AI technologies.

Governance frameworks must address model access controls, finding handling procedures, and disclosure timelines. The security of APIs and cloud-native environments has become a top priority due to a notable increase in API attacks linked to AI advancements.

Strategic Implications and Next Steps in the Era of New AI Models

Palo Alto Networks’ 7x vulnerability discovery increase signals a fundamental shift in cybersecurity posture requirements. AI is expected to play a major role in identifying security flaws that previously took significant time to uncover. As AI models become more capable of both identifying and exploiting vulnerabilities, organizations need new security strategies to defend against these evolving threats.

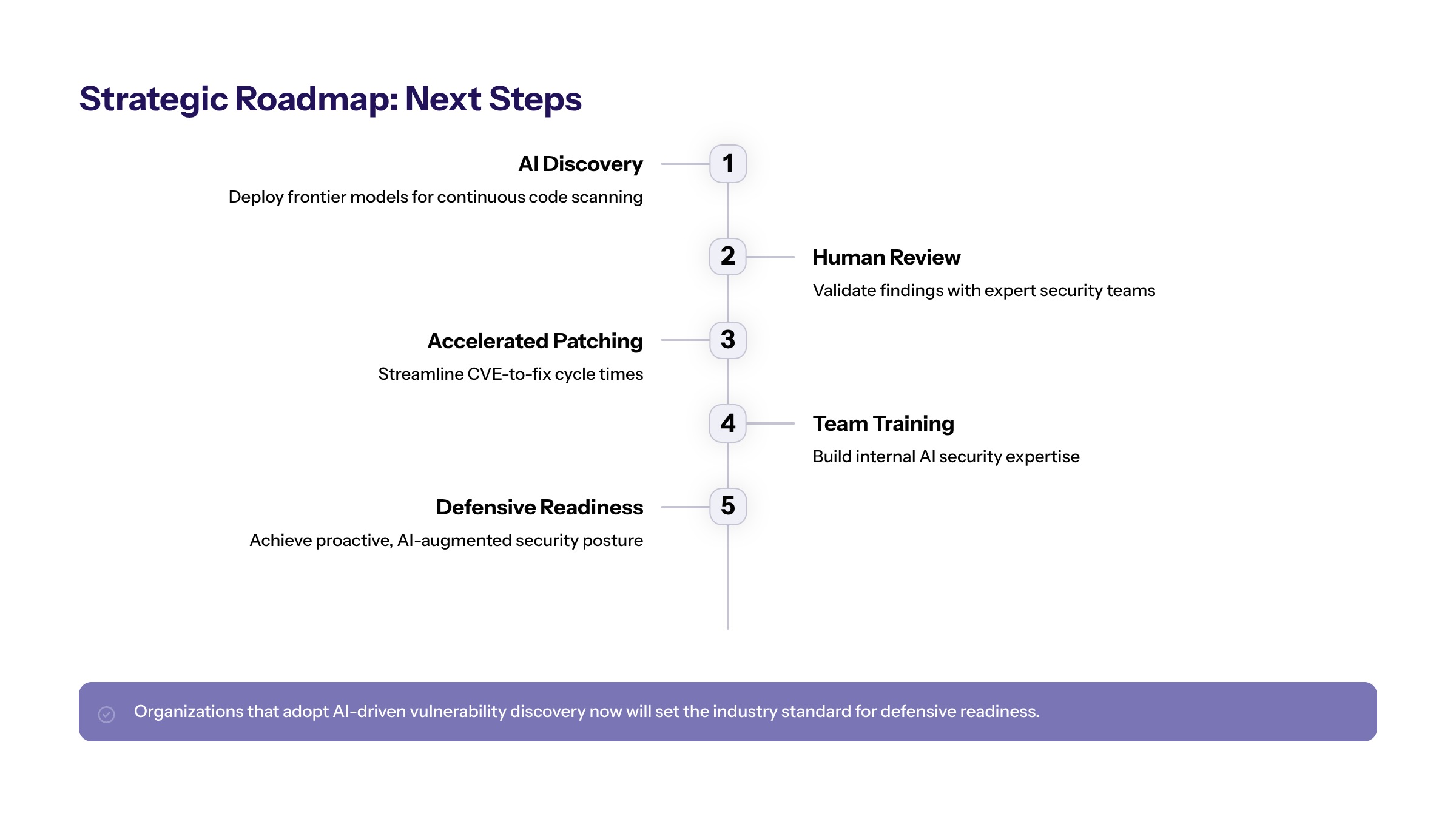

Immediate actionable steps for enterprise security teams:

- Assess current vulnerability management capacity against projected AI-discovered volume increases
- Evaluate AI scanning tool options through vendor programs or collaborative initiatives like Project Glasswing
- Develop implementation timelines that account for the three-to-five-month window before adversary AI adoption accelerates
- Begin training security personnel on AI output validation and vulnerability chain analysis
- Update patch deployment processes to accommodate increased discovery velocity

AI-driven exploits are expected to become the new norm within a narrow three-to-five-month window, which underscores the need for immediate defensive measures and for collaboration across the industry.

Related topics for further exploration include AI-first security architecture design, custom software development security integration, and modernization of legacy vulnerability management systems to operate at machine speed.

Additional Resources

Reference materials for implementation planning:

- Palo Alto Networks’ May 2026 security advisories documenting the 26 CVEs discovered through AI scanning
- Project Glasswing participation requirements for collaborative AI security initiatives
- Industry frameworks for AI security integration, including NIST and ISO guidance on automation in vulnerability management
- Enterprise assessment tools for evaluating AI scanning readiness and infrastructure requirements