US Government Pushes Pre-Release AI Model Reviews: New Federal Requirements and Implementation Framework

The US government is pushing pre-release AI model reviews for advanced AI systems, a shift from hands-off voluntary oversight toward federal evaluation before deployment. These reviews involve safety, security, and capability assessments conducted by federal agencies, particularly the Center for AI Standards and Innovation (CAISI) within the National Institute of Standards and Technology (NIST), before AI models are released to the public. As of May 2026 the underlying agreements remain voluntary, while executive action that could make certain reviews mandatory is under discussion.

This article covers the federal agencies involved in pre-release review (NIST, Department of Commerce, CAISI), the types of AI models subject to evaluation, and the implementation timeline currently unfolding. It focuses on federal-level requirements and does not address state-level regulations or international frameworks except where relevant for context. The target audience includes AI developers, enterprise software teams, compliance officers, and technology executives working with advanced AI systems who must understand how these requirements affect their development cycles and deployment strategies.

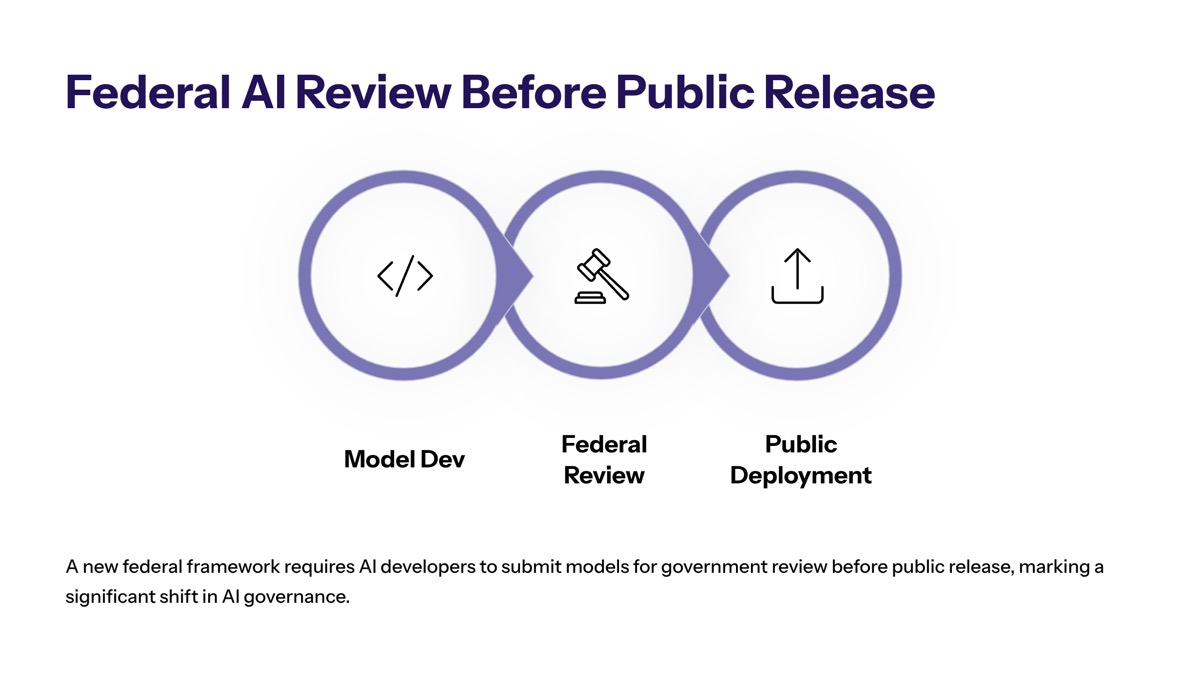

The US government is moving toward pre-release safety and security evaluations for high-risk AI models before deployment. Major tech firms have agreed to provide early access to their frontier AI models for national security testing before public release, and CAISI has completed over 40 evaluations of unreleased models as of May 2026.

Key outcomes you will gain from this article:

Understanding which AI models trigger mandatory review requirements

Clarity on compliance timeline and federal agency roles

Knowledge of the CAISI evaluation framework and testing protocols

Practical steps for preparing technical documentation

Solutions for navigating common implementation challenges

Understanding Federal AI Pre-Release Review Requirements

A pre-release AI model review under current federal guidance refers to a comprehensive evaluation conducted before an AI system is deployed to the public. These evaluations assess AI capabilities across national security domains—including cybersecurity vulnerabilities, biosecurity risks, and chemical weapons potential—often using versions of models with reduced safety guardrails to reveal underlying capabilities that might otherwise be obscured.

The shift pairs private innovation with government safety oversight. The federal government is moving from a hands-off approach to direct involvement in the AI development cycle, which could alter release timelines for cutting-edge models.

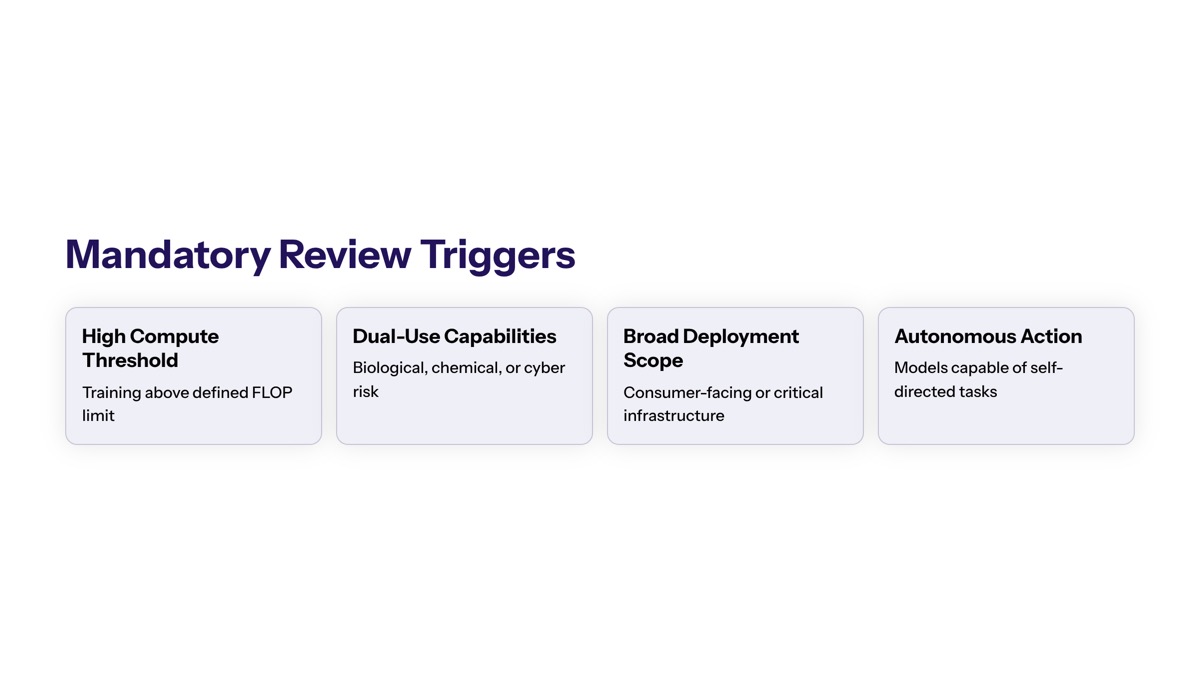

Mandatory Review Triggers

High-risk AI systems requiring pre-release evaluation are defined primarily by their capabilities and potential to pose risks in national security domains. The National Security Memorandum NSM-25, issued in October 2024, defines “frontier AI models” as general-purpose models near cutting-edge performance whose training or capabilities present elevated risk to national security.

Thresholds for model size, capabilities, and deployment context that trigger federal review are not yet codified in terms of FLOPs or parameter counts. Instead, triggering depends on capability assessment across risk domains, particularly cybersecurity, biosecurity, and chemical threats. The push for pre-release reviews has accelerated with the emergence of highly capable cybersecurity AI models and the concern that AI can identify and exploit software vulnerabilities at unprecedented speed.

The National Institute of Standards and Technology (NIST) released the Artificial Intelligence Risk Management Framework (AI RMF 1.0) in January 2023, providing voluntary guidance for organizations to identify, assess, and manage risks associated with AI systems. Current pre-release review efforts build on this framework, connecting pre-release evaluation to existing science and technology policy infrastructure.

Review Scope and Standards

Safety, security, and bias testing requirements during the pre-release phase encompass multiple evaluation dimensions. AI evaluations are designed to assess and compare how AI models perform on different tasks, providing valuable insights for developers, users, and independent evaluators. These assessments include red-team testing, adversarial inputs, supply chain risk analysis, and security verification protocols.

The primary goal of the proposed policy is to prevent the misuse of AI models for cyberattacks or by adversaries. It builds on earlier voluntary commitments: in July 2023, the Biden administration secured voluntary commitments from seven major AI companies to manage AI risks, including security testing of AI products before public release. The current push would extend oversight from those voluntary agreements toward mandatory evaluation processes.

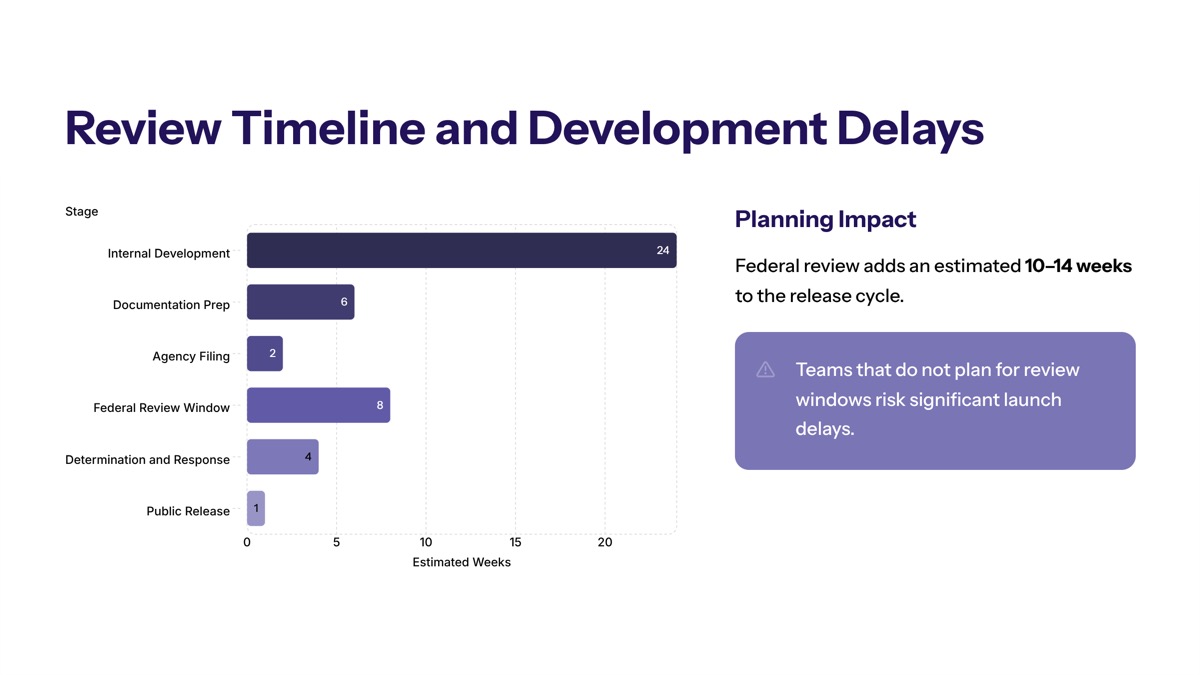

Pre-release vetting for AI models could introduce delays as models undergo evaluation by the Center for AI Standards and Innovation (CAISI) under the Commerce Department—a factor organizations must incorporate into their development planning.

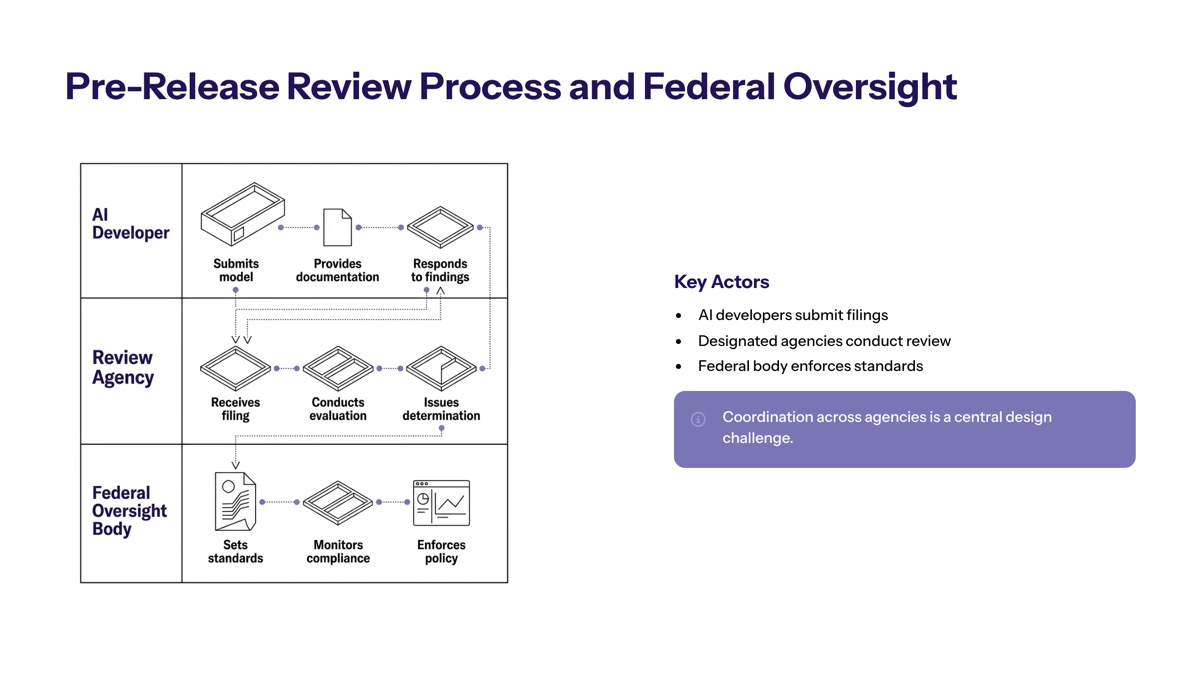

Pre-Release Review Process and Federal Oversight

Building on the foundational requirements outlined above, organizations must navigate a specific evaluation process involving multiple federal agencies and detailed documentation protocols. This section examines how CAISI conducts evaluations and what technical standards apply to pre-release assessments.

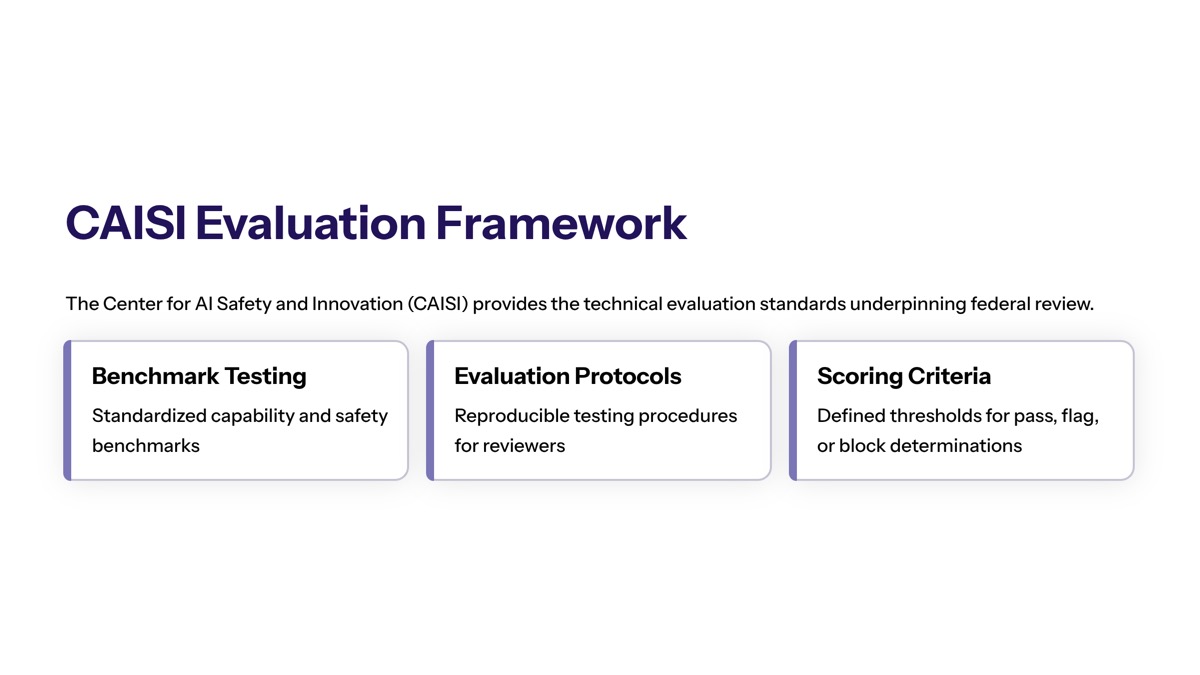

CAISI Evaluation Framework

The Center for AI Standards and Innovation, established in 2025 under President Trump, serves as the industry’s primary point of contact for frontier AI model evaluation. CAISI’s mandate emphasizes demonstrable risks such as national security concerns—cybersecurity, biosecurity, and chemical weapons—rather than broader public safety considerations alone.

In April 2026, CAISI evaluated the open-weight AI model DeepSeek V4 Pro, an example of how standardized evaluations are used to assess AI model performance. Evaluating a foreign-developed model shows that CAISI's scope extends beyond commercial AI systems from private sector developers to include adversary AI systems.

CAISI has completed over 40 pre-deployment evaluations, including unreleased state-of-the-art models. As of May 2026, Google DeepMind, Microsoft, and xAI have signed agreements with CAISI allowing US government access to their models before public release—joining OpenAI and Anthropic, which had existing partnerships. NIST staff and interagency experts conduct evaluations through the TRAINS Taskforce, with some assessments occurring in classified environments for sensitive national security risks.

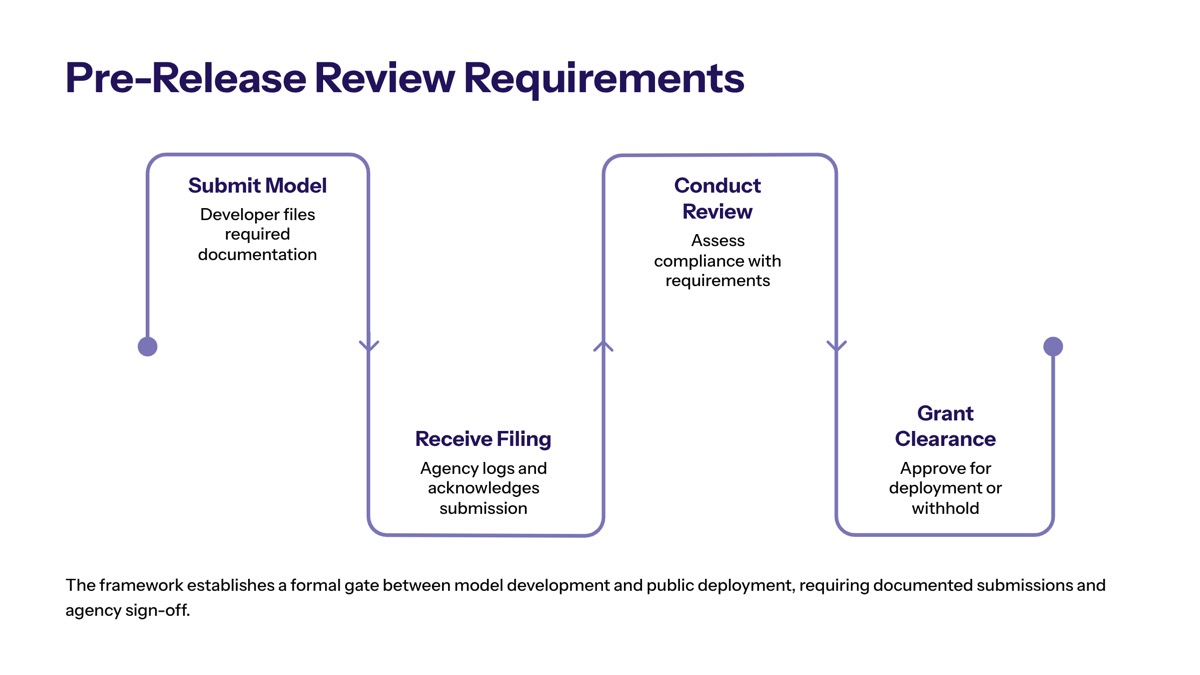

Documentation requirements include submitting detailed model architecture specifications, weights, training data provenance, and safety mitigations. On timeline, organizations should plan for extended review periods: the evaluation process is designed to be thorough rather than fast, prioritizing security over speed to deployment.

Safety and Security Testing Requirements

Specific technical evaluations required before model deployment include:

Red-team testing: Adversarial assessment protocols that probe cybersecurity vulnerabilities and potential misuse vectors

Unclassified evaluations: Standard security verification testing for public safety considerations

Capability assessments: Testing of AI capabilities with reduced guardrails to understand latent behaviors

Supply chain analysis: Evaluation of model provenance and potential vulnerabilities from training data or external components

CAISI’s evaluations often use versions of models with guardrails removed to better understand raw capabilities. This lets evaluators assess potential security vulnerabilities that safety fine-tuning might otherwise hide. Organizations must prepare infrastructure to share both standard models and reduced-guardrail versions with CAISI for comprehensive evaluation.

The focus on demonstrable risks means evaluators prioritize scenarios involving malicious behavior, malign foreign influence arising from AI capabilities, and dual-use potential. Testing protocols examine whether models could assist in developing chemical weapons, conducting cyberattacks, or enabling adversarial actors.

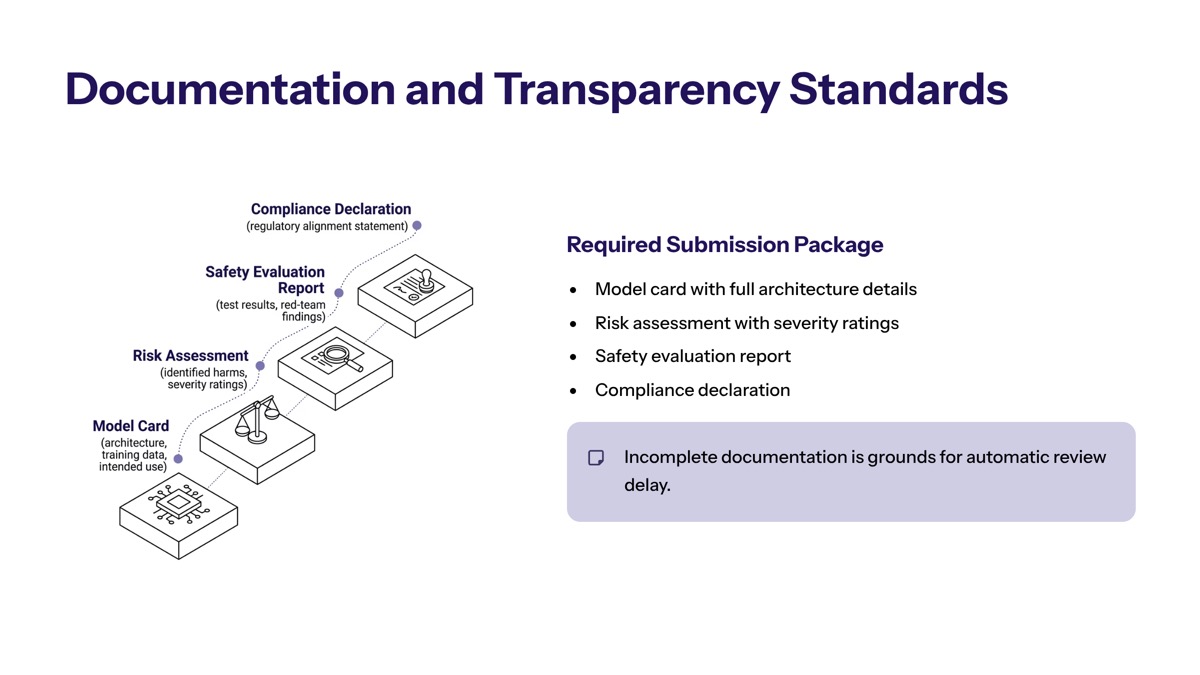

Documentation and Transparency Standards

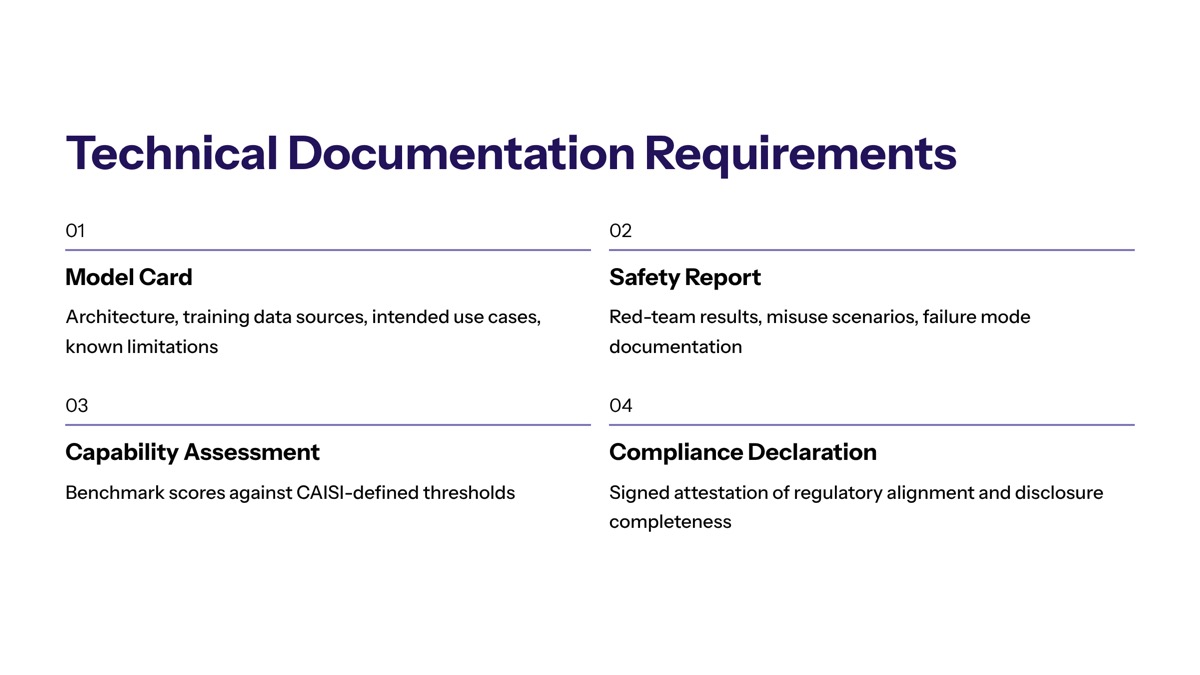

Required documentation for model capabilities, training data, and risk mitigation measures includes:

Model architecture specifications and training methodology

Training data provenance and composition

Safety mitigations implemented during development

Adversarial testing results from internal evaluation

Risk assessment across national security domains
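The checklist above can be tracked in code. The following is a minimal sketch, assuming a team keeps a simple internal manifest of the documents it has prepared. The `SubmissionPackage` class, its field names, and the `missing_items` helper are hypothetical and do not reflect any official CAISI submission format.

```python
from dataclasses import dataclass, fields


@dataclass
class SubmissionPackage:
    """Hypothetical internal manifest: each field holds a document path, or "" if not ready."""
    architecture_and_training_methodology: str = ""
    training_data_provenance: str = ""
    safety_mitigations: str = ""
    internal_adversarial_testing_results: str = ""
    national_security_risk_assessment: str = ""


def missing_items(package: SubmissionPackage) -> list[str]:
    """Return the names of checklist items that have no document attached yet."""
    return [f.name for f in fields(package) if not getattr(package, f.name).strip()]


if __name__ == "__main__":
    draft = SubmissionPackage(
        architecture_and_training_methodology="docs/architecture.md",
        safety_mitigations="docs/mitigations.md",
    )
    for name in missing_items(draft):
        print(f"missing: {name}")
```

Keeping the manifest as a plain dataclass means the list of required items lives in one place and can be revised as official guidance changes.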

CAISI collaborates with other federal agencies via interagency feedback loops, providing regular guidance to model developers. Transparency of results varies—some evaluation findings are classified, meaning enterprises depending on assurances may not have full visibility into all findings.

Regulatory focus is shifting toward active information-sharing between labs and the government, rather than just post-market enforcement of existing laws. This collaborative research approach allows CAISI to develop evaluation methods that serve both security verification and innovation priorities.

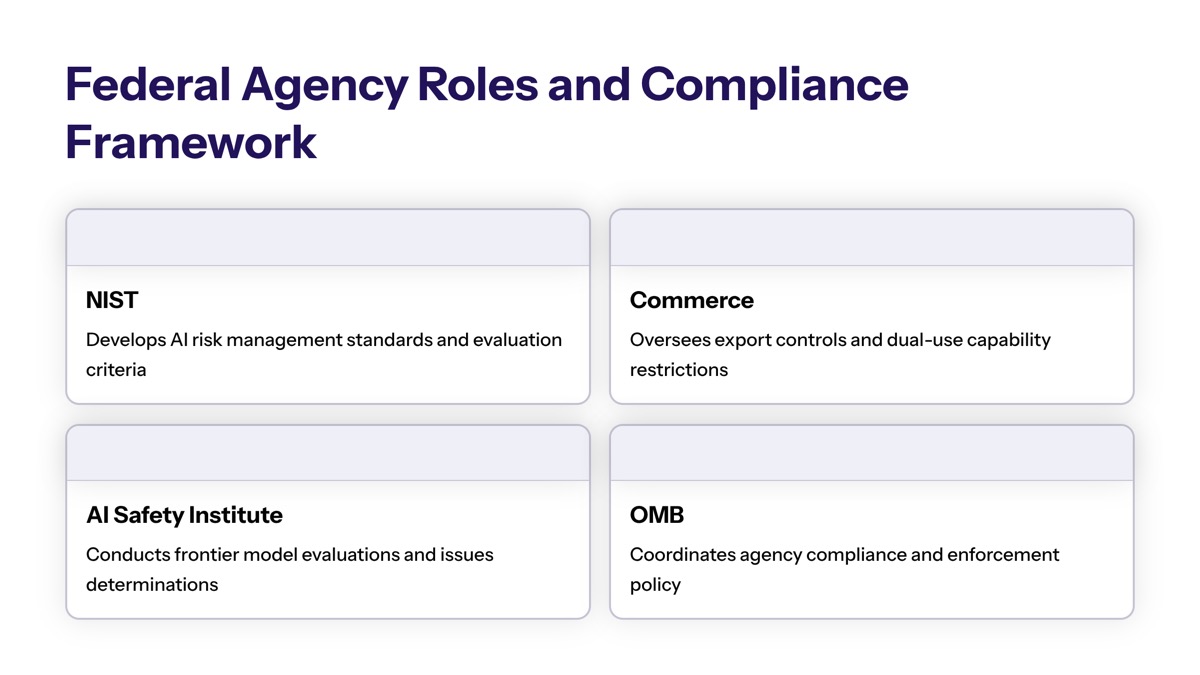

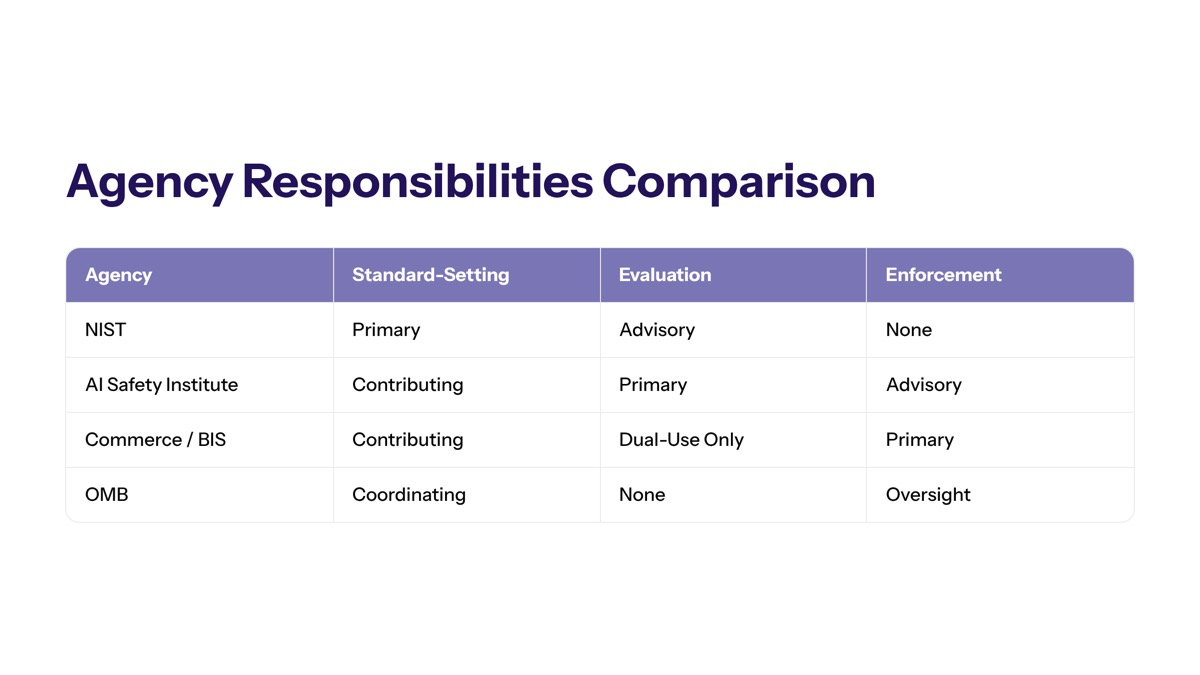

Federal Agency Roles and Compliance Framework

Multiple federal agencies share responsibility for AI oversight, each with distinct roles in the pre-release review ecosystem. Understanding which agency oversees specific use cases helps organizations navigate compliance efficiently and avoid coordination gaps.

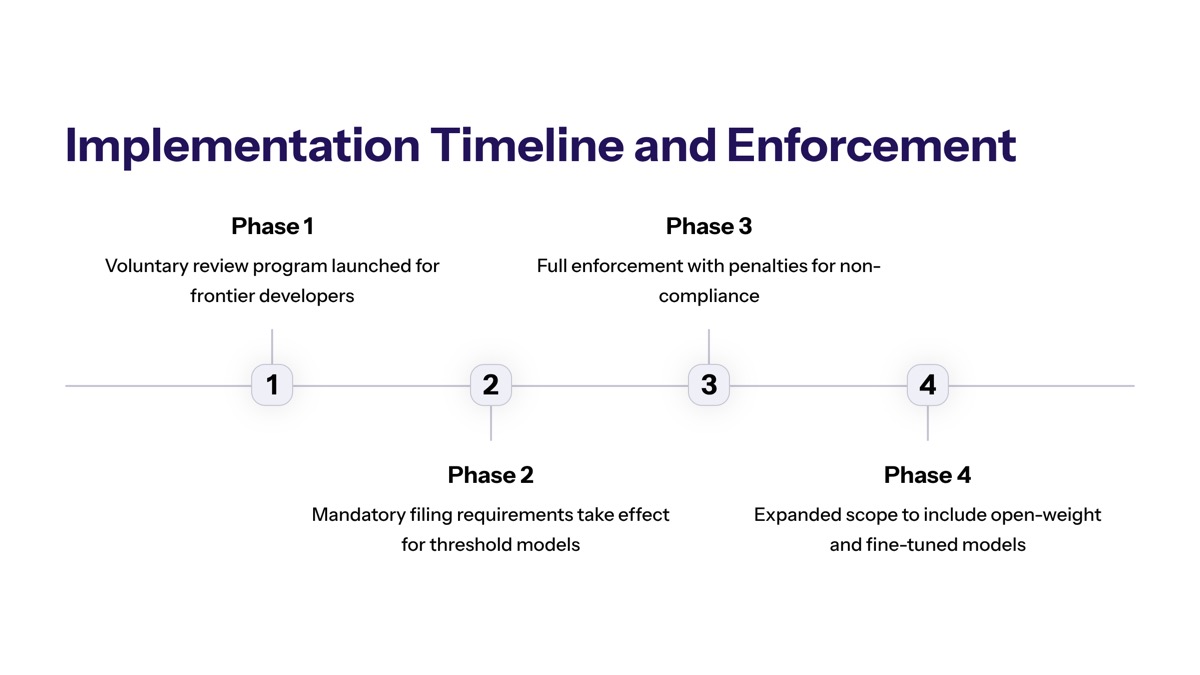

Implementation Timeline and Enforcement

Organizations working with frontier AI models should begin compliance activities immediately, as the evaluation framework is already operational.

Step 1: Initial model registration and pre-review submission. Assess whether your AI models meet frontier capability thresholds. Prepare documentation of model architecture, training data, and safety mitigations. Contact CAISI to initiate the submission process for models approaching public release.

Step 2: Technical evaluation and safety assessment phase. CAISI conducts evaluations using both unclassified and classified testing protocols. Provide access to model versions with reduced guardrails when requested. Expect engagement from interagency experts via the TRAINS Taskforce.

Step 3: Documentation review and compliance verification. Federal evaluators assess whether documentation meets standards for transparency and risk mitigation. Organizations may receive feedback requiring additional safety measures or documentation before proceeding.

Step 4: Final approval or conditional deployment authorization. Following successful evaluation, organizations receive guidance on deployment. Some approvals may include conditions or ongoing monitoring requirements.
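For internal tracking, the four steps above can be modeled as a small state machine. This is a sketch under stated assumptions: the `Stage` names and the `feedback_resolved` flag are hypothetical labels for this article's steps, not an official CAISI workflow or API.

```python
from enum import Enum


class Stage(Enum):
    SUBMISSION = 1            # Step 1: registration and pre-review submission
    TECHNICAL_EVALUATION = 2  # Step 2: technical evaluation and safety assessment
    DOCUMENTATION_REVIEW = 3  # Step 3: documentation review and compliance verification
    DEPLOYMENT_GUIDANCE = 4   # Step 4: final or conditional deployment authorization


def next_stage(current: Stage, feedback_resolved: bool = True) -> Stage:
    """Advance one step; stay at documentation review while feedback is still open."""
    if current is Stage.DOCUMENTATION_REVIEW and not feedback_resolved:
        return current
    if current is Stage.DEPLOYMENT_GUIDANCE:
        return current  # terminal stage in this sketch
    return Stage(current.value + 1)


if __name__ == "__main__":
    stage = Stage.SUBMISSION
    while stage is not Stage.DEPLOYMENT_GUIDANCE:
        stage = next_stage(stage)
        print(stage.name)
```

The only branch is at Step 3, reflecting the point that evaluators may request additional safety measures or documentation before a model proceeds.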

Key policy milestones include:

October 30, 2023: President Biden issued Executive Order 14110, which includes directives on standards for critical infrastructure and directs that AI advance equity and civil rights

October 2024: National Security Memorandum NSM-25

July 2025: AI Action Plan with 17 taskings to CAISI

May 2026: Formalization of agreements with major labs

Agency Responsibilities Comparison

| Agency | Primary Role | Oversight Domain |
|---|---|---|
| NIST / CAISI | Lead authority for pre-release evaluation and testing | Frontier model assessment, voluntary standards development, measurement science |
| Department of Commerce | Directs CAISI; interfaces with trade and security policy | Policy coordination, international AI standards |
| Bureau of Industry and Security (BIS) | Regulatory tools for model security | Reporting requirements, protection from theft |
| Intelligence Community | Domain expertise for classified evaluations | National security threat assessment |
| Homeland Security | Critical infrastructure protection | Infrastructure risk evaluation |
| Defense | Military AI applications | Defense-related AI capabilities |

Organizations should identify which federal agencies have jurisdiction over their specific use cases. For most private sector AI developers of frontier models, CAISI serves as the primary point of contact. However, companies with defense contracts or critical infrastructure applications may engage with additional agencies.
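One way to make this jurisdiction check repeatable is a small lookup. The sketch below is one reading of the comparison table and the paragraph above. The use-case tags and the `agencies_to_engage` function are hypothetical names chosen for illustration, and the mapping is an assumption, not an official jurisdiction rule.

```python
# Hypothetical use-case tags mapped to agencies named in the comparison table.
# CAISI appears in every entry because it is the primary point of contact.
AGENCIES_BY_USE_CASE = {
    "frontier_model": ["NIST / CAISI"],
    "defense_contract": ["NIST / CAISI", "Defense"],
    "critical_infrastructure": ["NIST / CAISI", "Homeland Security"],
}


def agencies_to_engage(use_cases: list[str]) -> list[str]:
    """Return the de-duplicated agencies for the given tags, in first-seen order."""
    seen: dict[str, None] = {}
    for tag in use_cases:
        for agency in AGENCIES_BY_USE_CASE.get(tag, []):
            seen.setdefault(agency, None)
    return list(seen)


if __name__ == "__main__":
    print(agencies_to_engage(["frontier_model", "critical_infrastructure"]))
```

Unknown tags return no agencies rather than raising an error, so a compliance lead can extend the mapping gradually as real guidance arrives.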

As of May 2026, the US government is considering a shift toward requiring pre-release safety reviews for advanced artificial intelligence models, driven by concerns over cybersecurity risks. While current agreements remain voluntary, discussions of executive orders that could make certain pre-release reviews mandatory are ongoing.

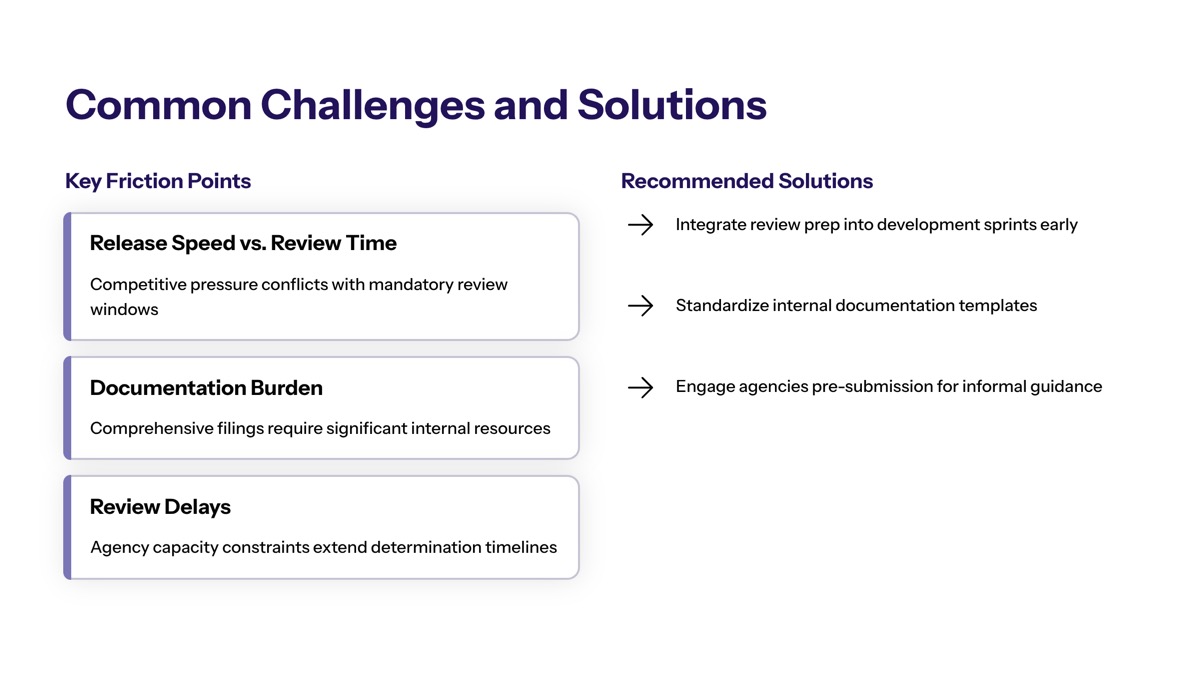

Common Challenges and Solutions

Navigating new federal requirements presents practical obstacles for organizations developing advanced AI systems. These challenges require strategic planning rather than reactive responses.

Review Timeline and Development Delays

Pre-release vetting introduces evaluation periods that may conflict with competitive product roadmaps. Organizations should integrate CAISI engagement early in the development cycle—ideally during the model design phase rather than immediately before planned release. Building buffer time into release schedules accounts for iterative feedback and additional testing requirements.
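Buffer planning can be worked backward from a target release date. This is a minimal sketch: the function name and the week counts are illustrative assumptions, since the source does not state how long a review takes.

```python
from datetime import date, timedelta


def latest_engagement_date(release: date, review_weeks: int, buffer_weeks: int) -> date:
    """Work backward from the release date to the latest day to begin engagement."""
    return release - timedelta(weeks=review_weeks + buffer_weeks)


if __name__ == "__main__":
    # Illustrative numbers only: an assumed 12-week review plus a 4-week buffer.
    start_by = latest_engagement_date(date(2026, 12, 1), review_weeks=12, buffer_weeks=4)
    print(f"Begin engagement no later than {start_by.isoformat()}")
```

Keeping the review estimate and the buffer as separate parameters makes it easy to revisit the buffer once a team has real experience with how long iterative feedback takes.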

Some fear that AI safety regulation will hinder AI progress and create roadblocks for development. However, organizations that establish ongoing relationships with CAISI and develop evaluation-ready documentation practices can reduce approval timelines for subsequent models.

Technical Documentation Requirements

Federal standards require comprehensive documentation that many development teams may not routinely produce. Prepare documentation templates covering model architecture, training data provenance, safety mitigations, and internal red-team results. Maintain version control for models and detailed logging throughout development to facilitate testing of reduced-guardrail versions.
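The version control and logging practice described above can start with a checksum-based record for each model build. This is a minimal sketch using Python's standard `hashlib`. The record fields and the "full"/"reduced" guardrail labels are assumptions for illustration, not a required format.

```python
import hashlib
import json
from datetime import datetime, timezone


def version_record(weights: bytes, version: str, guardrails: str) -> dict:
    """Build a log entry tying a version label to a SHA-256 checksum of the weights."""
    return {
        "version": version,
        "guardrails": guardrails,  # hypothetical labels, e.g. "full" or "reduced"
        "sha256": hashlib.sha256(weights).hexdigest(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for the contents of a real weights file.
    entry = version_record(b"placeholder-weights", "v1.0-eval", "reduced")
    print(json.dumps(entry, indent=2))
```

Recording a checksum alongside the guardrail setting lets a team show later exactly which build, standard or reduced-guardrail, was shared for evaluation.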

The goal is to assist industry in meeting requirements efficiently. Organizations should align internal threat models and risk taxonomies with CAISI’s priorities—cybersecurity, biosecurity, and chemical weapons risk—to ensure documentation addresses evaluation criteria directly.

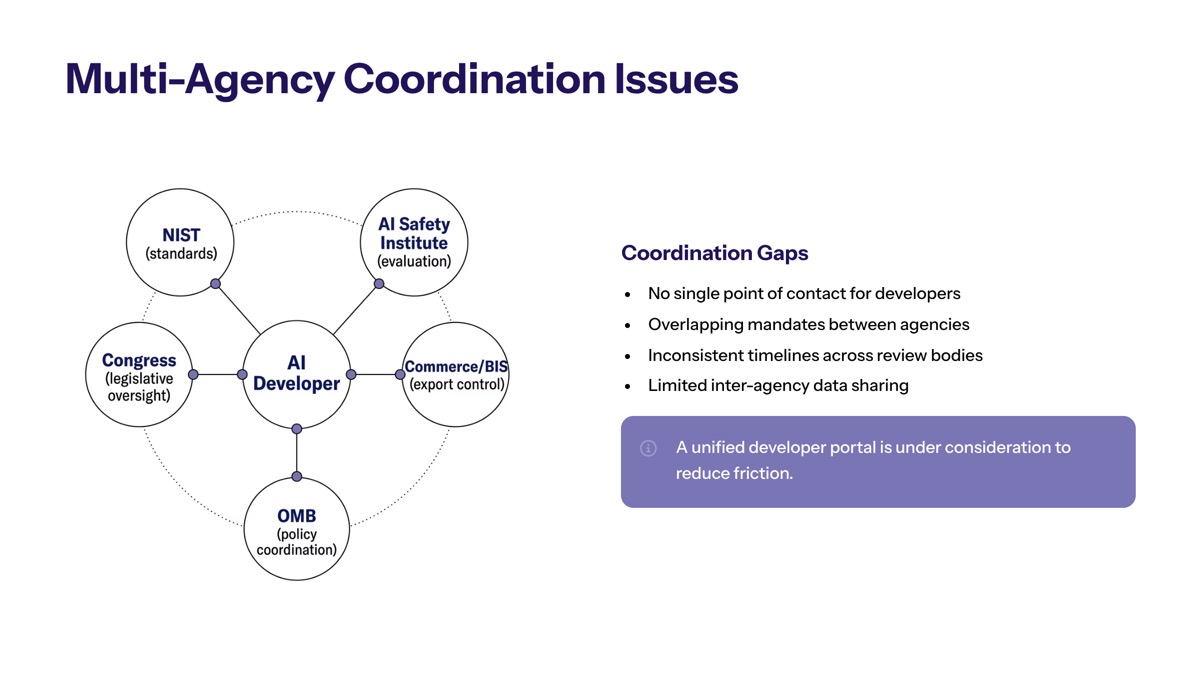

Multi-Agency Coordination Issues

Overlapping requirements from different federal agencies can create confusion about which regulations apply and which agency has primary jurisdiction. Organizations should designate a compliance point of contact responsible for coordinating with CAISI and tracking engagement with other federal agencies.

Some concerns exist that burdensome and unnecessary regulation could slow innovation. However, early engagement with CAISI helps clarify requirements and may reduce duplicative oversight. The TRAINS Taskforce provides a mechanism for interagency coordination, streamlining the process for organizations that engage proactively.

Conclusion and Next Steps

Pre-release AI model reviews bring direct federal oversight to high-risk AI systems, changing how advanced AI models reach public deployment. The US government has moved from relying on voluntary commitments alone to direct involvement in AI development cycles, with CAISI evaluating frontier models before release.

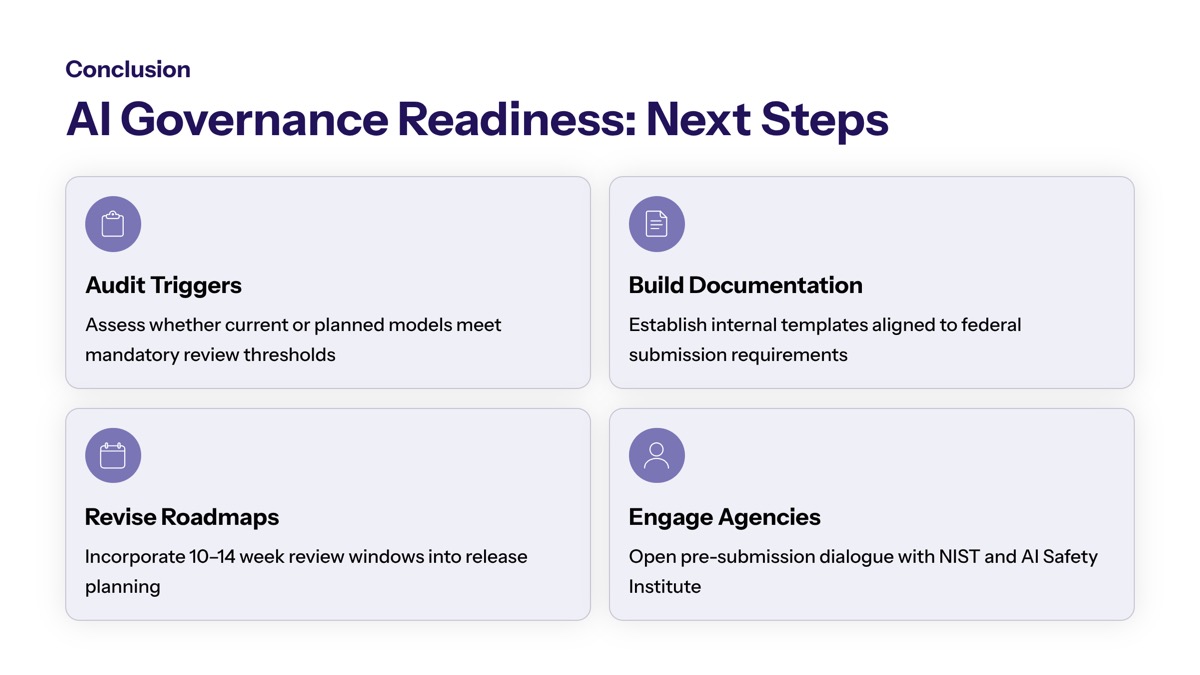

Immediate actionable next steps:

Assess current AI models against frontier capability thresholds and national security risk domains

Engage with CAISI early in development—ideally during model design rather than pre-release

Prepare technical documentation covering architecture, training data provenance, safety mitigations, and internal testing results

Build evaluation timeline buffers into product roadmaps

Designate compliance personnel to coordinate federal agency engagement

Related topics worth watching include ongoing monitoring requirements for deployed models, international AI competition dynamics affecting US policy, and evolving definitions of frontier model thresholds. In October 2022, the Biden White House released the Blueprint for an AI Bill of Rights, outlining five protections for Americans in the AI age, including safe and effective systems and algorithmic discrimination protections. These principles continue to inform federal AI governance as requirements expand.

Additional Resources

NIST AI Risk Management Framework (AI RMF 1.0): Foundational voluntary guidance for AI risk identification and management

CAISI guidance documents: Official standards for pre-release evaluation submissions

National Security Memorandum NSM-25: Defines frontier AI models and establishes federal governance structures

Executive Order 14110: Comprehensive AI policy directives including critical infrastructure standards

Organizations developing internal compliance guidelines should reference CAISI’s published evaluation methodologies and contact NIST for clarification on documentation requirements.