Private LLM Solutions for Enhanced Local AI Experiences

In an era where data privacy and AI efficiency are paramount, private large language models (LLMs) have emerged as a game-changing solution. They bring the capabilities of advanced AI models to your own hardware while keeping sensitive information secure on your device.

Key Takeaways

-

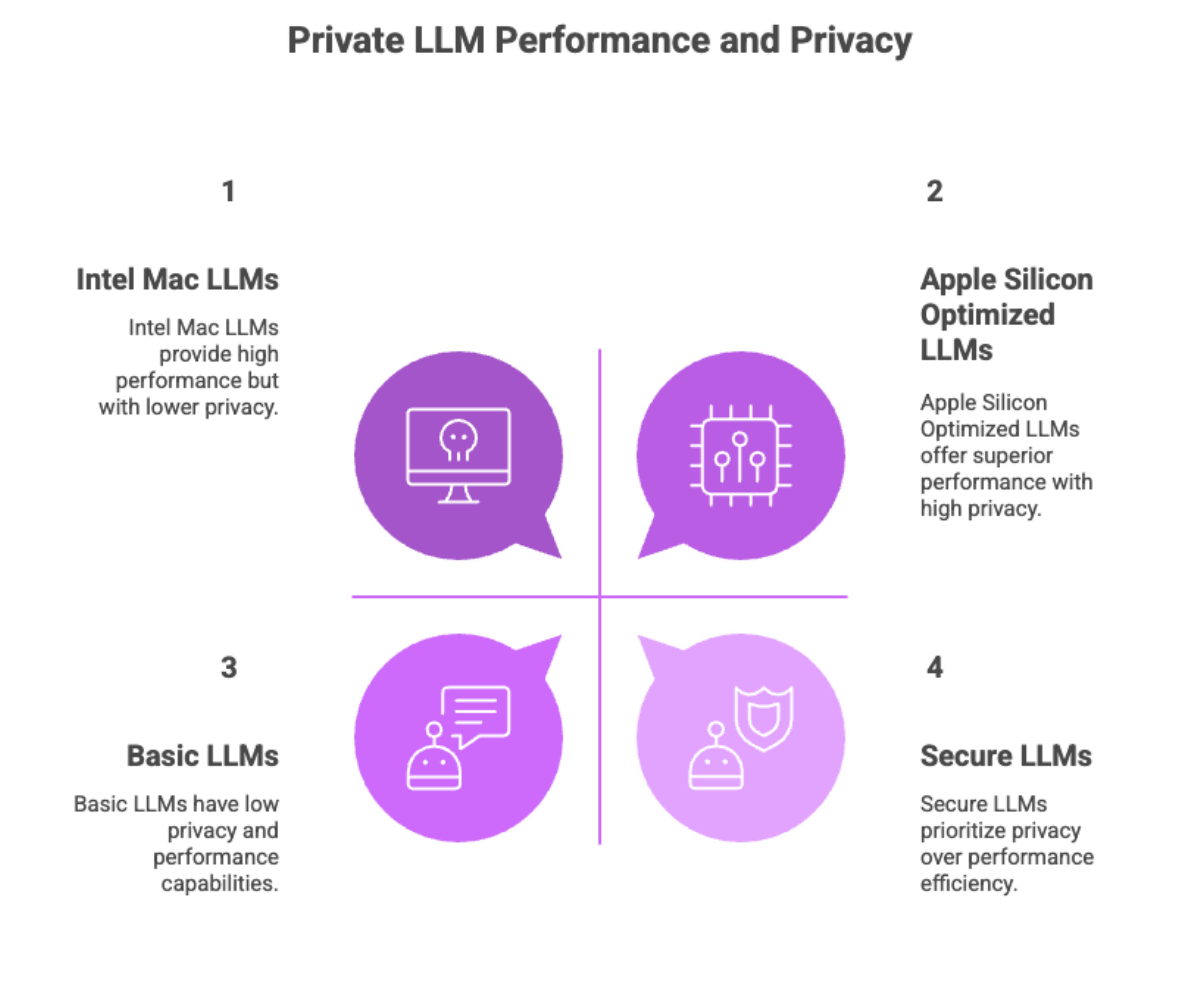

Private LLMs offer higher accuracy by leveraging domain specific data and preserving the model’s weight distribution through advanced quantization techniques. These are based models such as Llama, Google Gemma, and Qwen, which are further optimized for privacy and performance.

-

Optimized for Apple Silicon and designed for macOS applications, these models provide superior performance with minimal resource consumption, avoiding too much power usage.

-

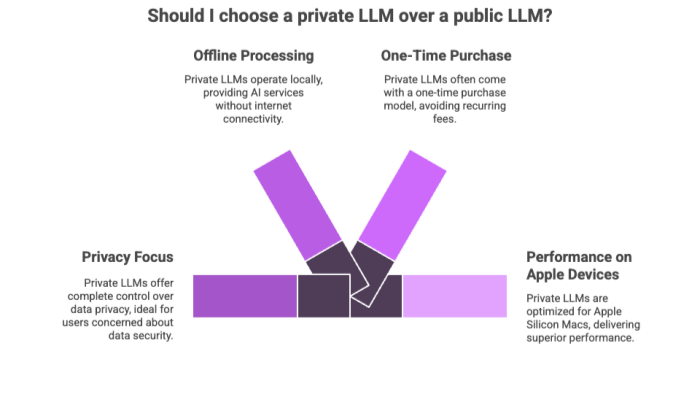

With a single purchase and family sharing support, private LLMs deliver a seamless AI experience across iPhone, iPad Pro, and Mac, making them the go-to choice for secure, efficient, and customizable AI workflows.

Introduction to Private LLMs

As AI continues to evolve, the demand for private large language models (LLMs) has surged—particularly among users who prioritize privacy, performance, and on-device processing.

Unlike public LLMs, which often require continuous internet connectivity and rely on cloud infrastructure, private LLMs operate locally, offering a secure, offline AI language service.

Private LLMs leverage advanced machine learning techniques to deliver personalized and secure AI experiences.

These tools are ideal for users who seek:

- Uncensored chat capabilities
- One-time purchase models with no recurring fees
- Complete control over data privacy
- AI-powered tools for productivity and personalization

Private LLMs support high-performing models such as Llama 3.1, Llama 3.2, Google Gemma 2, and Qwen 2.5, and they are especially optimized for Apple Silicon Macs, where they deliver strong performance and more accurate responses than standard quantization typically allows.
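For context, "standard quantization" usually refers to something like the round-to-nearest (RTN) scheme sketched below: each small group of weights is mapped to 4-bit integer codes with a per-group scale and offset, then mapped back to floats at inference time. This is a minimal plain-Python illustration, not OmniQuant and not any app's actual implementation; the function names and the group size of 4 are illustrative assumptions (real models use much larger groups). Calibrated methods such as OmniQuant learn quantization parameters to shrink the reconstruction error this baseline leaves behind.

```python
from typing import List, Tuple


def quantize_group(weights: List[float], bits: int = 4) -> Tuple[List[int], float, float]:
    """Round-to-nearest (RTN) quantization of one group of weights.

    Returns integer codes in [0, 2**bits - 1] plus the scale and offset
    needed to map them back to floats.
    """
    levels = (1 << bits) - 1  # 15 steps for 4-bit codes
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels if hi > lo else 1.0  # avoid division by zero
    codes = [min(max(round((w - lo) / scale), 0), levels) for w in weights]
    return codes, scale, lo


def dequantize_group(codes: List[int], scale: float, offset: float) -> List[float]:
    """Map integer codes back to approximate float weights."""
    return [c * scale + offset for c in codes]


def quantize_roundtrip(weights: List[float], group_size: int = 4, bits: int = 4) -> List[float]:
    """Quantize weights group by group and return the dequantized approximation."""
    approx: List[float] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        codes, scale, offset = quantize_group(group, bits)
        approx.extend(dequantize_group(codes, scale, offset))
    return approx


if __name__ == "__main__":
    weights = [0.1, -0.5, 0.3, 0.9, 2.0, -1.0, 0.0, 0.5]
    approx = quantize_roundtrip(weights)
    worst = max(abs(w - a) for w, a in zip(weights, approx))
    print(f"worst-case reconstruction error: {worst:.3f}")
```

With RTN, each weight lands within half a quantization step of its original value, so groups with a wide value range (for example, ones containing outliers) lose the most precision. That error is what accuracy-preserving methods target.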

Benefits of Private LLMs on Apple Devices

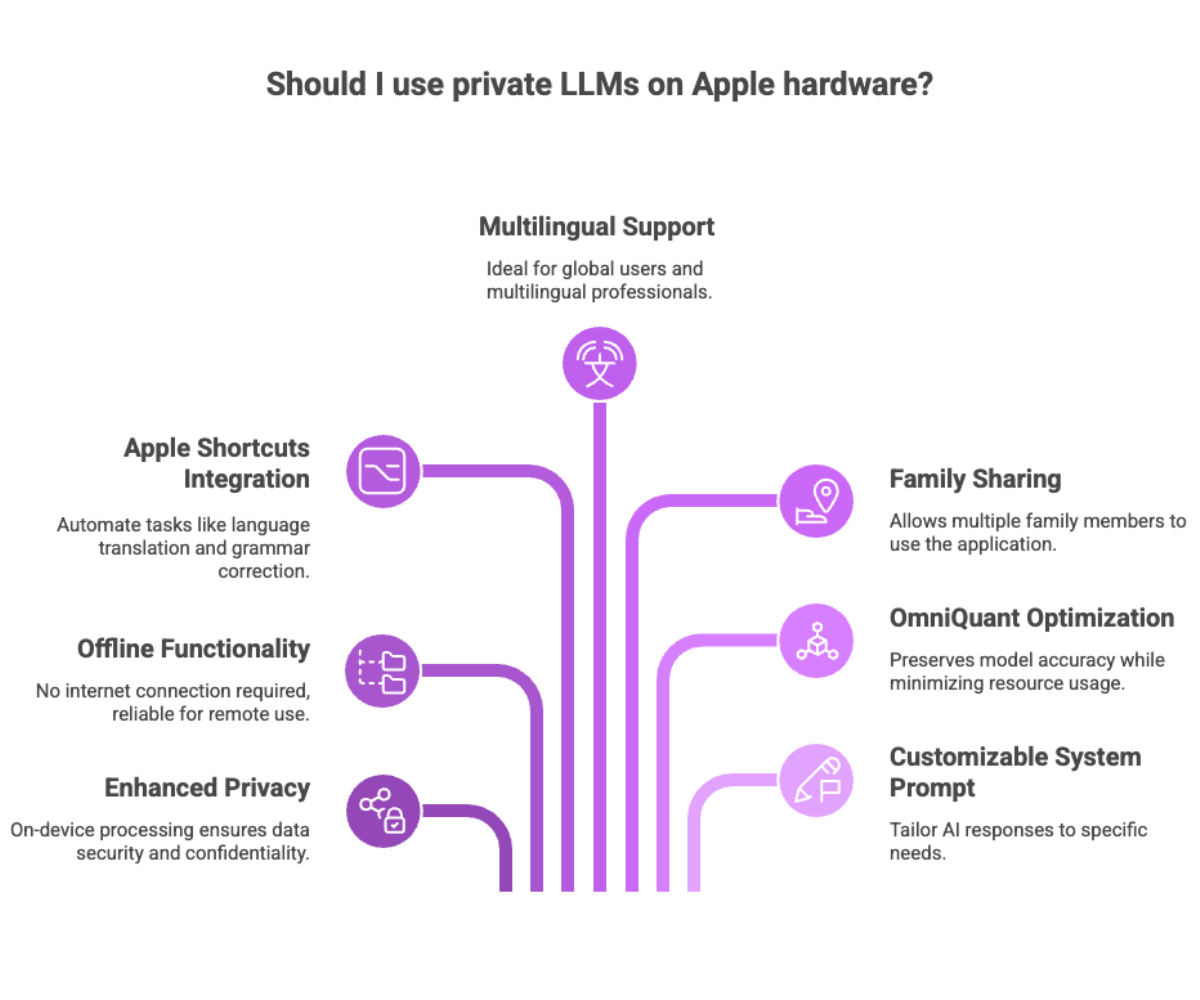

From improved latency to enhanced usability, private LLMs offer an array of benefits that redefine AI-driven workflows on Apple hardware. For users, this means genuine privacy: on-device processing keeps your data secure and confidential, free from cloud-based collection.

Key Advantages:

- Offline functionality: No internet connection required, making them reliable for remote or secure environments.
- Shortcuts integration: Automate repetitive tasks like language translation, grammar correction, or content summarization.
- AI in multiple languages: Ideal for global users and multilingual professionals.
- Family Sharing: Allows multiple family members to use the application across devices.
- OmniQuant optimization: A quantization method that preserves model accuracy while minimizing resource usage.
- Customizable system prompt: Tailor AI responses and behavior to your specific needs by adjusting the system prompt.

Whether you’re building a daily planner using Siri or auto-generating emails with Shortcuts, private LLMs empower users with on-device intelligence that feels fast, personal, and highly secure.
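A customizable system prompt generally works by placing a fixed instruction at the front of every request, ahead of the conversation history. The sketch below assumes the widely used role-based message format (`system`, `user`, `assistant`); the `build_messages` function and its `max_history` trimming rule are hypothetical illustrations, not the app's actual interface.

```python
from typing import Dict, List

Message = Dict[str, str]


def build_messages(system_prompt: str, history: List[Message], user_input: str,
                   max_history: int = 6) -> List[Message]:
    """Assemble a request: system prompt first, then recent turns, then the new input.

    The system prompt is always kept, even when older history is trimmed to
    fit a (hypothetical) budget of `max_history` messages.
    """
    recent = history[-max_history:] if max_history > 0 else []  # [-0:] would keep everything
    return ([{"role": "system", "content": system_prompt}]
            + recent
            + [{"role": "user", "content": user_input}])


if __name__ == "__main__":
    history = [
        {"role": "user", "content": "Fix the grammar: 'they was late'"},
        {"role": "assistant", "content": "They were late."},
    ]
    for msg in build_messages("You are a concise copy editor.", history, "Now summarize my notes."):
        print(f"{msg['role']}: {msg['content']}")
```

Keeping the system prompt outside the trimmed history is what lets a custom instruction persist across a long conversation.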

Streamlining Workflows with Private LLMs

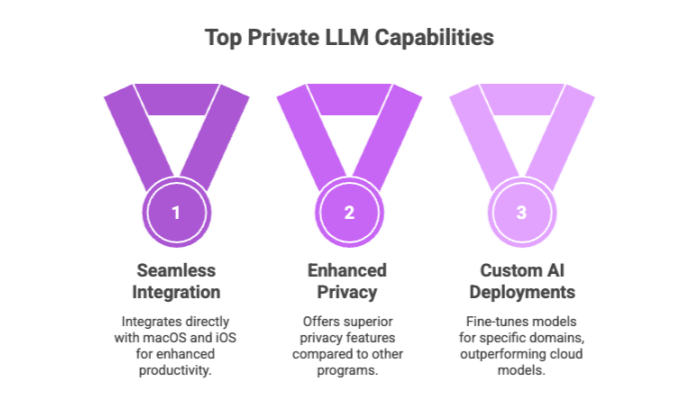

One of the biggest selling points of private LLMs is how effectively they streamline workflows. They integrate directly with macOS and iOS to automate tasks, analyze content, and boost productivity. Compared with many other AI apps, private LLMs offer tighter integration with Apple devices and stronger privacy, making them a more secure and efficient choice.

Supported Workflows:

- Text parsing and grammar correction
- Summarization of long content in real time
- Conversational interfaces powered by uncensored chat
- Support for app development and scripting tasks

Users can also fine-tune an existing model for a specific domain, such as legal writing, academic summaries, or customer service scripts. This opens up custom AI deployments that can outperform generalized cloud models on those narrower tasks.

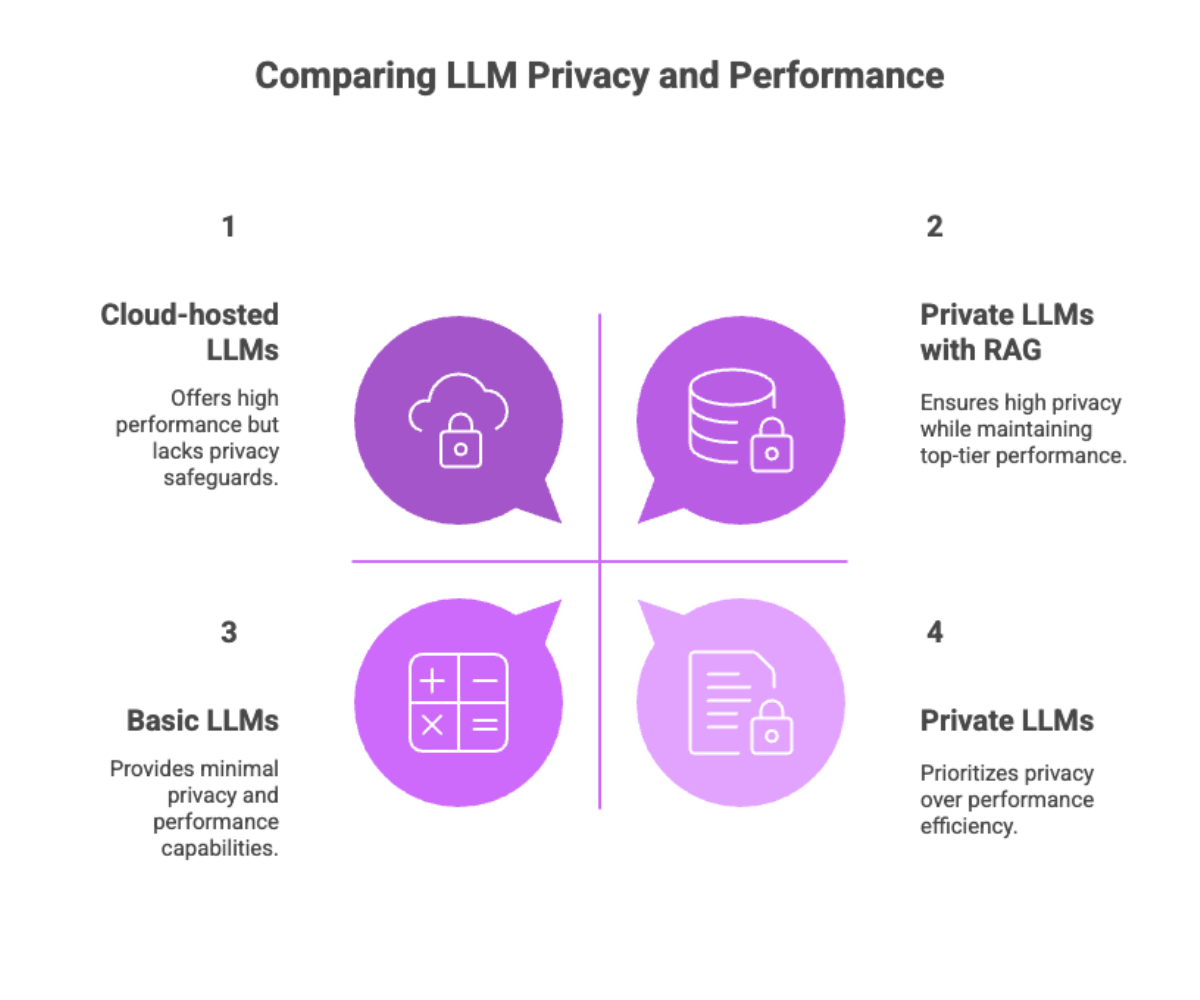

Data-Driven Private LLMs

Unlike cloud-hosted LLMs that rely on persistent internet access and third-party APIs, private LLMs are built with data privacy at their core. Private LLMs that use retrieval-augmented generation (RAG) can securely access information from web pages and knowledge bases without compromising user privacy.
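Retrieval-augmented generation works in two steps: find the passages most relevant to a question, then place them in the prompt so the model answers from them. The sketch below is a simplified stand-in that ranks local documents by bag-of-words cosine similarity; production systems typically use learned embeddings instead. The function names and sample documents are illustrative assumptions, and everything runs locally.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase and split into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query (ties keep input order)."""
    q = Counter(tokenize(query))
    return sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)[:k]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Place the retrieved passages ahead of the question for the local model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    knowledge_base = [
        "The office Wi-Fi password rotates every Monday.",
        "Quarterly reports are due on the fifth business day.",
        "Expense claims require an itemized receipt.",
    ]
    print(build_prompt("When are quarterly reports due?", knowledge_base, k=1))
```

Because both retrieval and prompt assembly happen on the device, the knowledge base never has to leave it; only the final prompt is handed to the local model.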

How they protect your data:

- On-device processing: Ensures that all prompts and responses are contained locally, never transmitted externally.
- No cloud sync or storage: Completely avoids risks related to centralized data breaches.
- Custom training data: Allows for domain-specific development, improving accuracy for niche tasks.

For users in sectors like healthcare, law, or journalism—where data sensitivity is paramount—private LLMs offer peace of mind without sacrificing performance.

Understanding Model-Based Private LLMs

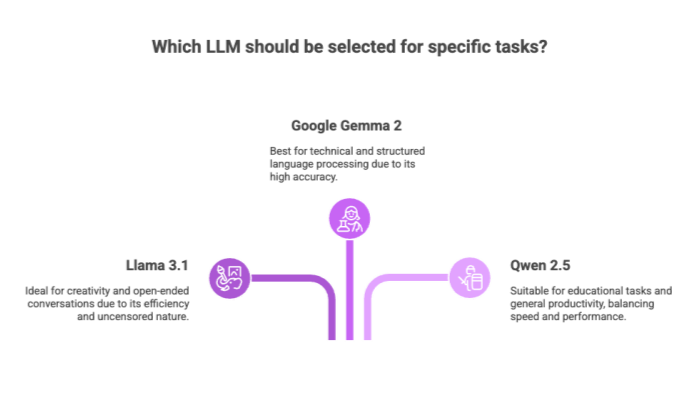

At their core, private LLMs are driven by leading large language models such as Llama 3.1, Google Gemma 2, and others. Support for new models is continually added, allowing users to benefit from the latest advancements in AI language technology. These model-based architectures provide the intelligence behind tasks like summarization, rewriting, and translation.

Why model selection matters:

- Llama 3.1: Efficient, uncensored, great for creativity and open-ended conversations.
- Google Gemma 2: Highly accurate and designed for technical and structured language processing.
- Qwen 2.5: Balanced for speed and performance, suitable for educational tasks and general productivity.

While these models are not identical to ChatGPT running GPT-3.5 Turbo, their performance is comparable for many practical applications.

With support for separate conversations, memory-based queries, and multi-threaded responses, these LLMs give users granular control over their AI interactions.

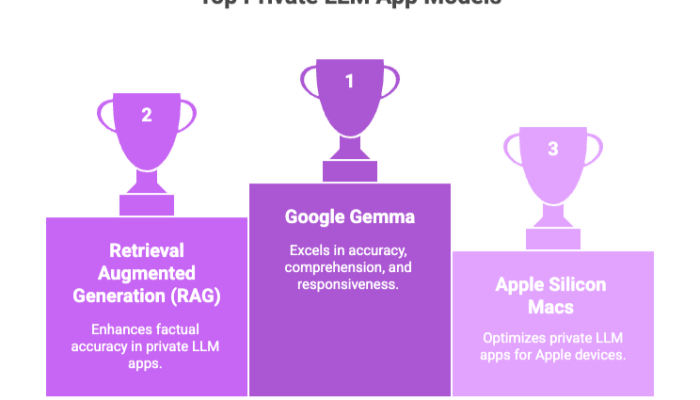

Google Gemma and Private LLMs

Among the emerging models used in private LLM apps, Google Gemma stands out for its focus on accuracy, comprehension, and responsiveness.

Key Features:

- Ideal for text parsing and summarization
- Supports retrieval-augmented generation (RAG) for factual accuracy
- Highly optimized for Apple Silicon Macs
- Works offline for sensitive tasks like contract analysis or policy reviews

By choosing models like Gemma, users gain access to enterprise-grade AI capabilities in a compact, secure form factor.

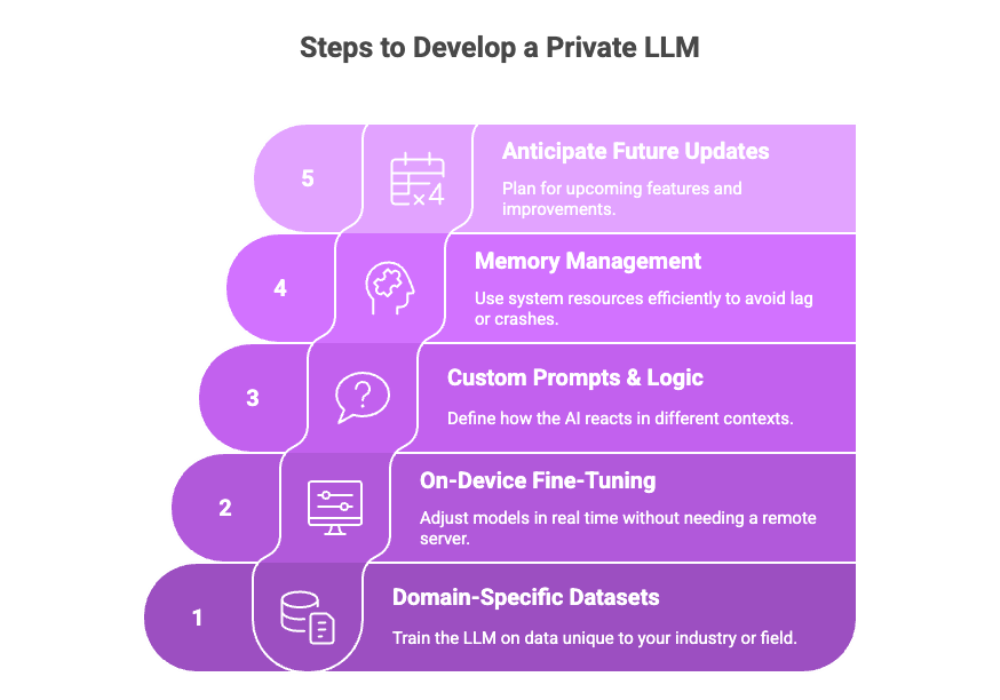

Private LLM Development: From Model to Experience

Developing and fine-tuning a private LLM requires more than just installing a pre-trained model—it involves curating data, refining parameters, and optimizing performance.

Development Considerations:

- Domain-specific datasets: Train your LLM on data unique to your industry or field.
- On-device fine-tuning: Adjust models locally without needing a remote server.
- Custom prompts and behavior logic: Define how the AI reacts in different contexts.
- Memory management: Use system resources efficiently to avoid lag or crashes.
- Anticipate future updates: Plan for upcoming features and improvements that will further enhance private LLM capabilities.
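The memory-management point is easy to quantify with back-of-the-envelope arithmetic: model weights alone occupy roughly parameters × bits per weight ÷ 8 bytes. The sketch below uses nominal parameter counts taken from model names such as Llama 3.1 8B and Llama 3.3 70B. It is an approximation under stated assumptions: it ignores the KV cache, activations, runtime overhead, and the small extra cost of per-group quantization scales, so real usage is higher.

```python
def weight_memory_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in GiB (2**30 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


if __name__ == "__main__":
    # Nominal sizes from the model names; actual parameter counts differ slightly.
    for name, params in [("8B model", 8), ("70B model", 70)]:
        for bits in (16, 4):
            print(f"{name} at {bits}-bit: ~{weight_memory_gib(params, bits):.1f} GiB")
```

Under these assumptions, an 8B model drops from about 14.9 GiB at 16-bit to about 3.7 GiB at 4-bit, while a 70B model still needs about 32.6 GiB at 4-bit, which is why the largest models are practical only on Macs with a large amount of unified memory.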

Companies and power users alike are leveraging private LLM development for use cases such as knowledge management, technical writing, and automated email responses.

Monitoring and Governance of Private LLMs

As private LLMs become increasingly integrated into daily workflows, especially on Apple Silicon Macs, iPhones, and iPads, ensuring their secure and ethical operation is essential. Monitoring and governance play a critical role in maintaining model reliability, data privacy, and compliance with regulations. This section introduces key strategies and best practices to help users and organizations responsibly manage their private LLM deployments.

Ensuring Security, Compliance, and Responsible Use

Robust monitoring and governance are what allow private LLMs to reach their full potential while safeguarding sensitive data. Unlike public LLMs, private models operate entirely on-device, using domain-specific data and quantization that preserves the model’s weight distribution to deliver strong performance and accurate responses without an internet connection. This local approach makes a significant difference in privacy and control, but it also requires organizations and users to take proactive steps to ensure responsible use.

Effective monitoring and governance frameworks help maintain the integrity and reliability of private LLMs, such as Llama 3.1 8B, Google Gemma 2, and Llama 3.3 70B. By regularly auditing data collection and training data, users can prevent biases and ensure that AI models remain aligned with organizational values and compliance requirements. This is especially critical in industries like healthcare and finance, where the consequences of data mishandling can be significant.

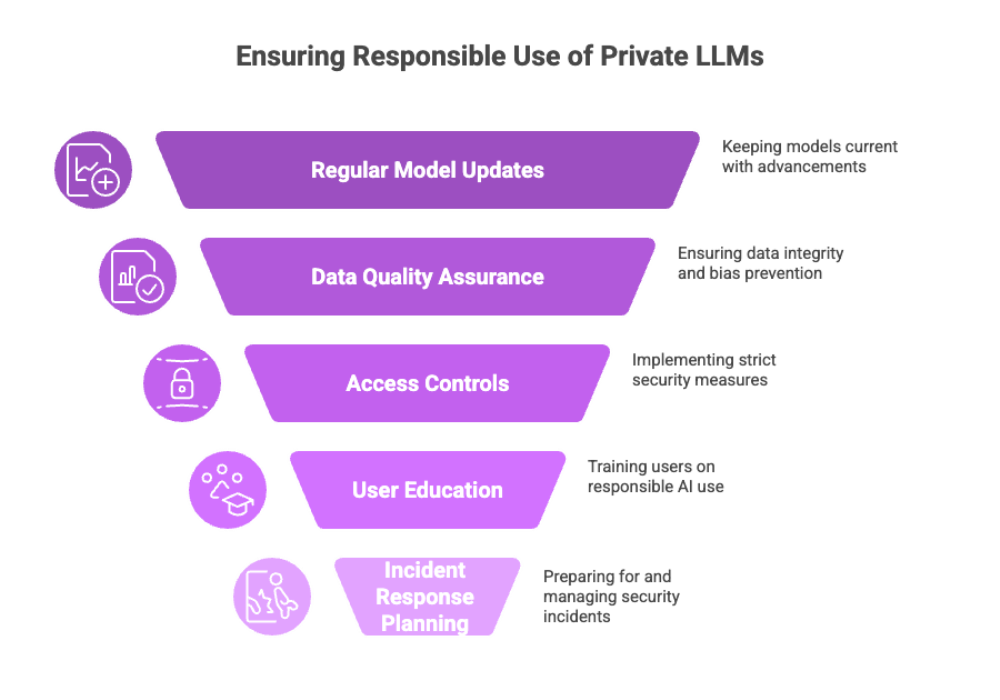

Key features of a strong monitoring and governance strategy for private LLMs include:

- Regular Model Updates and Maintenance: Keep your model current with the latest advancements, such as retrieval-augmented generation (RAG) techniques, to maintain strong performance and accuracy.
- Data Quality and Integrity: Use only verified, unbiased training data and apply preprocessing methods such as tokenization to support accurate responses and effective grammar correction.
- Access Controls and Authentication: Implement strict access controls to protect sensitive data and prevent unauthorized use, especially for resource-intensive models like Llama 3.3 70B.
- User Education and Training: Equip users with clear guidelines and best practices for AI-powered tools, including text parsing and Apple Shortcuts, to maximize productivity while minimizing risks.
- Incident Response Planning: Develop and maintain incident response protocols to address issues or breaches quickly, taking advantage of on-device processing to contain problems locally.
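The data-quality step can be sketched as a small preprocessing pass that normalizes whitespace, drops duplicates and fragments too short to be useful, and tokenizes what remains. This is an illustrative minimal pipeline: the `clean_corpus` name, the `min_words` threshold, case-insensitive duplicate detection, and the regex tokenizer are hypothetical choices. Real LLMs use subword tokenizers (such as byte-pair encoding) rather than simple word splitting.

```python
import re
from typing import List


def clean_corpus(samples: List[str], min_words: int = 3) -> List[List[str]]:
    """Normalize, deduplicate, filter, and tokenize training samples."""
    seen = set()
    tokenized: List[List[str]] = []
    for raw in samples:
        text = " ".join(raw.split())  # collapse runs of whitespace
        key = text.lower()
        if key in seen:  # drop duplicates, ignoring case
            continue
        seen.add(key)
        if len(re.findall(r"\w+", text)) < min_words:  # drop fragments
            continue
        tokenized.append(re.findall(r"\w+|[^\w\s]", text))  # words and punctuation
    return tokenized


if __name__ == "__main__":
    samples = [
        "The contract  renews annually.",
        "the contract renews annually.",
        "OK",
        "Payment is due in 30 days.",
    ]
    for tokens in clean_corpus(samples):
        print(tokens)
```

Deduplicating before tokenizing keeps repeated records from being over-weighted during training, and the length filter removes fragments that carry little signal.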

By prioritizing these governance measures, organizations and individual users can confidently deploy private LLMs across their Apple devices, knowing that their data remains secure and their AI models are used responsibly. This not only supports compliance with regulatory standards but also builds trust with users and customers, making a significant difference to both performance and reputation.

As private LLMs continue to evolve, staying up-to-date with best practices in monitoring and governance will be key to harnessing the full power of AI language services on Apple Silicon Macs, iPhones, and iPads. With the right frameworks in place, users can enjoy the benefits of uncensored chat, domain-specific intelligence, and AI-powered tools—securely and efficiently, every step of the way.

Best Practices for Private LLM Use

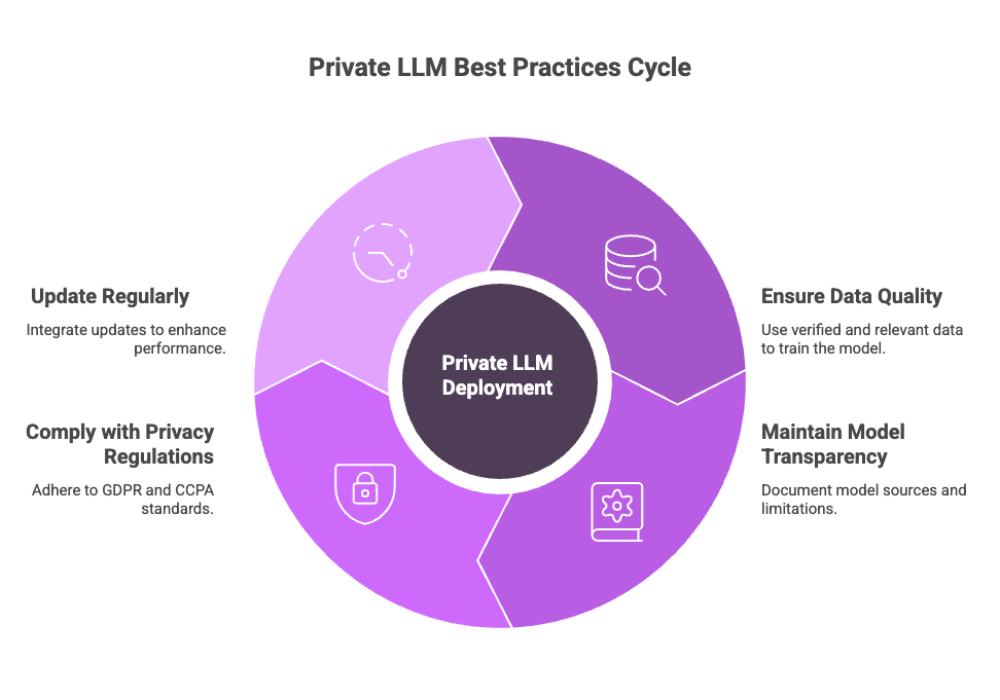

To get the most out of your private LLM investment, it’s essential to follow best practices that ensure quality, consistency, and compliance.

Staying informed about future updates is also crucial to maintaining optimal performance and security.

Private LLM Best Practices:

- Data quality assurance: Train only on verified, relevant data to avoid inaccuracies.
- Model transparency: Keep documentation of model sources, quantization methods, and limitations.
- User privacy compliance: Adhere to GDPR, CCPA, and similar frameworks for data security.
- Update regularly: Integrate model updates to improve language comprehension and reduce hallucinations.
- Take advantage of new features: Use the improvements that future updates bring, such as better conversation management and enhanced model performance.

These practices ensure that private LLM deployments are not only powerful but also sustainable and trustworthy.

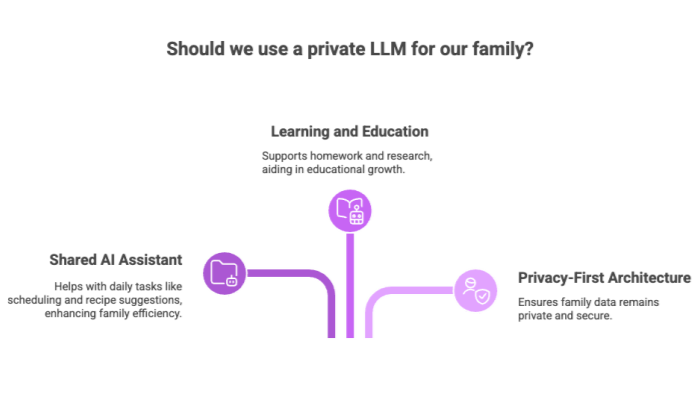

Family Sharing and Private LLMs

For home or shared device use, private LLMs also offer excellent flexibility through Apple Family Sharing. This allows up to six users to access the app without needing additional licenses. Each family member can start a new conversation, ensuring their chat histories remain separate and unaffected by others.

Benefits for families:

- Shared AI assistant for daily tasks: Scheduling, grammar help, or recipe suggestions
- Learning and education: Use AI to support homework, writing assignments, and research
- Privacy-first architecture: Ensures that family data isn’t exposed to external servers

For example, a family might use a private LLM to collaboratively plan a vacation itinerary, with each member suggesting activities and the AI organizing the schedule. This makes private LLMs one of the few AI-powered tools that are truly family-friendly, safe, and efficient for cross-device use.

Final Thoughts

Private LLMs are redefining how we interact with AI—offering speed, accuracy, privacy, and customization all in one powerful package. With models like Llama 3.2, Google Gemma 2, and Qwen 2.5, users now have access to high-performance AI experiences that are local, offline, and tailored to their workflows.

Whether you’re a solo creator, enterprise developer, or tech-savvy family, the private LLM ecosystem offers a one-time purchase solution to unlock the full potential of AI—without compromises.

The current generation of private LLM apps is a clear demonstration of the technology's potential, delivering secure, high-performance AI experiences that continue to improve with each update. Because those ongoing improvements keep adding value, a one-time purchase continues to pay off over time.