Open Source AI Models for Local Deployment

Open source AI models for local deployment represent the most significant shift in enterprise artificial intelligence strategy since cloud computing emerged two decades ago. Organizations can now run production-grade language models like LLaMA 3.2, Mistral 7B, and Qwen2.5 entirely on-premises, eliminating cloud dependencies while reducing operational costs by up to 70% compared to API-based solutions. This shift enables complete data sovereignty, simpler regulatory compliance, and a degree of customization that closed-source models cannot match.

The strategic implications extend beyond cost savings. Leading technology companies including Meta, Microsoft, Alibaba, and Google have released enterprise-ready open models that deliver performance comparable to commercial models while providing full control over deployment environments. These developments signal a fundamental restructuring of the AI landscape, where enterprises gain unprecedented autonomy over their intelligence infrastructure.

Key Strategic Takeaways:

• Cost Optimization with Self-Hosted LLMs: Local models eliminate per-token pricing, with successful enterprise implementations showing 3-6 month ROI through reduced API fees and predictable infrastructure expenses across different models and use cases.

• Data Sovereignty and Compliance: On-premises deployment keeps sensitive data within organizational boundaries, addressing GDPR, HIPAA, and emerging AI governance requirements while enabling fine-tuning on proprietary datasets.

• Strategic Independence & Customization: Local AI models provide protection against vendor pricing changes, service discontinuation, and geopolitical restrictions while enabling customization through domain-specific data integration and multi-step tool use.

Understanding Open Source AI Models, Open Source LLMs, and Local Models for Deployment

Open source AI models differ fundamentally from closed-source models in licensing, transparency, and deployment flexibility. While commercial models like GPT-4 operate through API endpoints with usage-based pricing, open models provide complete model files that organizations can deploy on their own infrastructure. This distinction lets enterprises keep full control over their AI applications without external dependencies.

The technical architecture supporting local deployment has matured significantly. Modern large language models use transformer architectures optimized for efficient inference on standard enterprise hardware, and many can run on a single GPU. Model sizes range from compact 1.5B parameter variants suitable for edge devices to massive 70B+ parameter systems requiring specialized processing power. On specific enterprise tasks, open models often match or exceed the performance of similarly sized commercial counterparts.

Leading open source models include Meta’s LLaMA series, which provides multilingual and multimodal capabilities across parameter ranges from 1B to 405B. Mistral AI has emerged as a European alternative with high performance on business applications and extensive multilingual support. Alibaba’s Qwen series offers specialized variants optimized for code generation, mathematical reasoning, and bilingual operations. Microsoft’s Phi-4 represents the latest generation of compact models designed specifically for enterprise deployment scenarios. Google AI Studio also contributes to the open source community with accessible tools for model training and deployment.

Market Landscape and Enterprise Adoption of Open Source LLMs and Local AI Models

Enterprise adoption of local AI models has accelerated dramatically, with current market analysis showing local deployments controlling approximately 55% of enterprise large language model implementations. This shift reflects growing concerns about data privacy, cost predictability, and strategic autonomy that cloud-based solutions cannot address.

| Deployment Model | 3-Year TCO (1000 users) | Data Control | Customization | Compliance |
|---|---|---|---|---|
| Cloud APIs (GPT-4) | $2.4M - $3.6M | Limited | Minimal | Dependent |
| Local Open Source LLMs | $800K - $1.2M | Complete | Full | Independent |
| Hybrid Approach | $1.5M - $2.1M | Partial | Moderate | Complex |

Fortune 500 case studies demonstrate tangible benefits. A major financial services firm reduced AI operational costs by 68% while achieving sub-100ms latency for customer service applications using fine-tuned LLMs deployed on local infrastructure. Healthcare organizations report significant compliance simplification through air-gapped deployment environments that eliminate external data transmission requirements.

Strategic Business Benefits of Running Open Source LLMs on Local Infrastructure

Cost optimization represents the most immediate advantage of local model deployment. Traditional cloud-based AI services charge per token or API call, creating unpredictable expenses that scale with usage. Local models require upfront infrastructure investment but eliminate ongoing usage fees. Organizations processing millions of tokens monthly typically achieve break-even within 3-6 months, with subsequent operations delivering pure cost savings.
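
The break-even reasoning above can be sketched as simple arithmetic. This is a minimal illustration, not a costing model: the function name and every dollar figure below are hypothetical, and a real analysis would also account for staffing, power, and hardware depreciation.

```python
from typing import Optional


def break_even_months(upfront_cost: float,
                      monthly_local_cost: float,
                      monthly_api_cost: float) -> Optional[float]:
    """Months until cumulative API spend exceeds cumulative local spend.

    Returns None when local operation is not cheaper per month,
    because the upfront investment is then never recovered.
    """
    monthly_savings = monthly_api_cost - monthly_local_cost
    if monthly_savings <= 0:
        return None
    return upfront_cost / monthly_savings


# Hypothetical figures, chosen only to illustrate the calculation.
months = break_even_months(
    upfront_cost=120_000,       # GPU servers and setup
    monthly_local_cost=10_000,  # power, hosting, maintenance
    monthly_api_cost=40_000,    # current per-token API bill
)
print(f"Break-even after {months:.1f} months")
```

With these assumed inputs the monthly saving is $30,000, so the upfront investment is recovered in four months. Lower usage shrinks the API bill and pushes the break-even point out.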

Data sovereignty concerns drive adoption across regulated industries. Local AI models enable processing of sensitive information without external transmission, addressing compliance requirements for financial services, healthcare, and government organizations. This capability supports retrieval-augmented generation (RAG) systems that can analyze proprietary documents and databases while maintaining complete data control.
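
To make the retrieval-augmented generation idea concrete, here is a minimal sketch that runs entirely in-process, so no document leaves the machine. It swaps in a simple bag-of-words cosine similarity for the embedding model a production system would normally use; the document snippets, function names, and prompt wording are all illustrative assumptions.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Return the top_k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents,
                    key=lambda d: cosine(q, Counter(tokenize(d))),
                    reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: List[str], top_k: int = 1) -> str:
    """Assemble a prompt that grounds the model in retrieved context."""
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical internal policy snippets standing in for proprietary documents.
DOCS = [
    "Refund requests are processed within 14 days of approval.",
    "The data retention policy requires deleting logs after 90 days.",
    "Employees accrue vacation days monthly.",
]

prompt = build_prompt("What is the log retention policy?", DOCS)
print(prompt)
```

The assembled prompt would then be passed to whichever locally hosted model the organization runs.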

Latency reduction provides competitive advantages for real-time applications. Local models eliminate network round-trips to external APIs, cutting network overhead from hundreds of milliseconds to single-digit milliseconds on a local network; total response time then depends mainly on model size and hardware. This improvement enables new use cases including real-time language understanding, interactive customer service, and high-frequency trading applications that require immediate responses.

Customization through fine-tuning represents a strategic differentiator. Organizations can adapt open source LLMs using their own domain data, creating specialized AI applications that understand industry terminology, company processes, and unique requirements. This level of customization is not possible with closed-source models that prohibit modification or training data integration.

Risk Mitigation and Competitive Advantages of Self Hosted LLMs

Vendor independence protects against strategic risks inherent in cloud-dependent AI strategies. Recent API pricing changes and service modifications by major providers demonstrate the volatility of external dependencies. Local models provide immunity from vendor decisions, ensuring business continuity regardless of external market changes.

Intellectual property protection becomes critical as AI applications handle increasingly sensitive business processes. Local deployment creates air-gapped environments where proprietary algorithms, training data, and model outputs remain completely internal. This protection extends to competitive intelligence, research data, and strategic planning documents that require absolute confidentiality.

| Risk Category | Cloud Dependency | Local Deployment |
|---|---|---|
| Vendor Lock-in | High | None |
| Data Exposure | Medium-High | Minimal |
| Cost Volatility | High | Low |
| Service Availability | External Dependency | Internal Control |
| Customization Limits | Significant | None |

Top Enterprise-Grade Open Source LLMs and Large Language Models for 2025

Meta’s LLaMA 3.2 series represents the current gold standard for enterprise open source models. The series spans 1B and 3B text models and 11B and 90B vision-capable models, released under a license that permits commercial use in business applications. The LLaMA architecture excels at natural language understanding, question answering, and text generation across multiple languages. Recent benchmarks show LLaMA 3.2 matching GPT-4 performance on business-relevant tasks while enabling complete on-premises deployment.

Mistral AI has established itself as the European leader in open source language models. The company’s 7B parameter model delivers exceptional performance per unit of compute, making it ideal for organizations with limited processing power. Its Mixtral 8x22B mixture-of-experts model provides enterprise-scale capabilities while maintaining efficiency through its specialized architecture. Both models perform strongly on multilingual tasks and are particularly capable at code generation and technical documentation.

Alibaba’s Qwen series addresses specific enterprise needs through specialized model variants. Qwen2.5-Coder excels at software development tasks, providing accurate code generation across multiple programming languages. Qwen2.5-Math demonstrates superior performance on quantitative analysis and financial modeling. These specialized models enable organizations to deploy task-specific AI applications without compromising on performance or requiring larger models.

Microsoft’s Phi-4 represents the latest advancement in efficient model design. Despite its compact 14B parameter count, Phi-4 delivers performance comparable to much larger models through advanced pretraining techniques and curated training data. This efficiency makes Phi-4 particularly suitable for edge devices and distributed deployment scenarios where computational resources are constrained.

Google AI Studio contributes to the ecosystem by enabling enterprises to build, fine-tune, and deploy open source LLMs with integrated tools for multi-step tool use and AI app development. It supports importing local files and managing model cards for transparency and governance.

Model Selection Criteria and Performance Benchmarks for Open Source LLMs

Enterprise model selection requires evaluation across multiple dimensions beyond raw performance metrics. Accuracy on business-relevant tasks, inference speed under production loads, memory requirements for target hardware, and licensing terms all influence deployment decisions. Organizations must also consider multilingual capabilities, function calling support, and integration complexity with existing enterprise systems.
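
One way to turn these criteria into a comparable number is a weighted scoring matrix. The sketch below is only an illustration: the candidate names, the 1-5 scores, and the weights are hypothetical placeholders, not measured benchmark results.

```python
from typing import Dict, List, Tuple


def rank_models(scores: Dict[str, Dict[str, float]],
                weights: Dict[str, float]) -> List[Tuple[str, float]]:
    """Rank candidates by the weighted average of their criterion scores."""
    total_weight = sum(weights.values())
    results = {
        name: sum(weights[c] * criteria[c] for c in weights) / total_weight
        for name, criteria in scores.items()
    }
    return sorted(results.items(), key=lambda item: item[1], reverse=True)


# Hypothetical weights for a latency-sensitive customer service workload.
WEIGHTS = {"accuracy": 0.4, "latency": 0.3, "memory": 0.2, "licensing": 0.1}

# Hypothetical 1-5 scores (5 is best); replace with your own evaluation data.
CANDIDATES = {
    "small-7b-model": {"accuracy": 3, "latency": 5, "memory": 5, "licensing": 5},
    "large-70b-model": {"accuracy": 5, "latency": 2, "memory": 1, "licensing": 4},
}

for name, score in rank_models(CANDIDATES, WEIGHTS):
    print(f"{name}: {score:.2f}")
```

Shifting weight from latency toward accuracy would favor the larger candidate, which is why the weights should reflect the specific workload rather than a generic default.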

Performance benchmarks reveal significant variations across different use cases. For customer service applications, smaller models like Mistral 7B often provide sufficient accuracy while delivering superior response times. Complex analytical tasks may require larger models like LLaMA 70B to achieve acceptable quality levels. Document processing workloads benefit from models with long-context support, enabling analysis of comprehensive reports and legal documents.

| Model | Parameters | Enterprise Use Cases | Hardware Requirements | Licensing |
|---|---|---|---|---|
| LLaMA 3.2 | 1B-90B | General purpose, multilingual | 4GB-80GB VRAM | Commercial |
| Mistral 7B | 7B | Business applications, coding | 8-16GB VRAM | Apache 2.0 |
| Qwen2.5 | 7B-72B | Specialized tasks, bilingual | 8GB-80GB VRAM | Apache 2.0 |
| Phi-4 | 14B | Efficient deployment, edge | 16-24GB VRAM | MIT |
| Stable Diffusion | N/A | Image generation and multimodal AI | 8-24GB VRAM | CreativeML License |

Infrastructure Requirements, Deployment Architecture, and Tools to Run LLMs

Hardware specifications vary dramatically based on model size and performance requirements. Smaller models (1B-7B parameters) can operate on high-end consumer GPUs with 16-24GB VRAM, such as the RTX 4090 (24GB). Medium-scale deployments (13B-30B parameters) typically require professional GPUs such as the A6000 or multiple RTX 4090s. Enterprise-scale implementations (70B+ parameters) demand specialized hardware including H100 clusters or distributed CPU deployments with substantial memory resources.
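
A rough way to sanity-check these hardware tiers is to estimate memory from parameter count and numeric precision. The sketch below is a back-of-envelope heuristic, not a sizing tool: the 1.2 overhead factor for activations and KV cache is an assumption, and real usage depends on context length, batch size, and the serving runtime.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def estimate_vram_gb(params_billion: float,
                     precision: str = "fp16",
                     overhead: float = 1.2) -> float:
    """Estimate GPU memory in GB needed to serve a model.

    One billion parameters at one byte each is roughly 1 GB of weights;
    `overhead` is an assumed multiplier for activations and KV cache.
    """
    weights_gb = params_billion * BYTES_PER_PARAM[precision]
    return weights_gb * overhead


for size in (7, 14, 70):
    for precision in ("fp16", "int4"):
        gb = estimate_vram_gb(size, precision)
        print(f"{size}B @ {precision}: ~{gb:.1f} GB")
```

Under these assumptions a 7B model in FP16 needs roughly 17 GB, while a 70B model in FP16 needs about 168 GB and has to be split across several GPUs or quantized.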

Software infrastructure centers on proven open source tools and frameworks. Ollama and LM Studio provide simplified deployment for many models, offering one-command installation and management. Hugging Face Transformers enables custom integration with existing enterprise applications through Python APIs. Containerization using Docker and Kubernetes supports scalable, production-ready deployments across distributed infrastructure.
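
As an illustration of how an application might talk to a locally served model, the sketch below builds a request for Ollama's local REST endpoint using only the standard library. It assumes an Ollama server on its default port (11434) and a model that has already been pulled; the model name used in the usage note is an example, not a recommendation.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed setup).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str,
                  url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generation request for the local server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

With the server running, a call such as `generate("mistral", "Summarize our refund policy in one sentence.")` returns the completion as a string. Because the endpoint is on localhost, the prompt never leaves the machine.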

Network architecture considerations include model serving endpoints, load balancing, and security controls. Many organizations implement reverse proxy configurations to manage access and monitor usage. Integration with existing enterprise systems occurs through REST APIs, enabling seamless connection with business applications, databases, and workflow management systems.

Storage requirements extend beyond model files to include fine-tuned models, training data, and inference logs. Model optimization through quantization and pruning can reduce storage needs by 50-75% while maintaining acceptable performance levels. These optimizations prove particularly valuable for edge deployment scenarios where storage capacity is limited.
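
The 50-75% range corresponds to moving from 16-bit weights to 8-bit or 4-bit ones. The following sketch shows that arithmetic along with a toy symmetric INT8 quantizer in pure Python. It is a simplified illustration with made-up weights: production toolchains quantize per channel or per block and store extra scale metadata, which this example ignores.

```python
from array import array
from typing import List, Tuple


def storage_reduction(original_bits: int, quantized_bits: int) -> float:
    """Fraction of weight storage saved by lowering bits per weight."""
    return 1 - quantized_bits / original_bits


def quantize_int8(weights: List[float]) -> Tuple[array, float]:
    """Symmetric quantization: map floats to int8 using a single scale."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    quantized = array("b", (round(w / scale) for w in weights))
    return quantized, scale


def dequantize(quantized: array, scale: float) -> List[float]:
    """Recover approximate float values from int8 codes."""
    return [v * scale for v in quantized]


# Made-up weights used only to demonstrate the round trip.
weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0, -0.88, 0.41]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(f"FP16 -> INT8 saves {storage_reduction(16, 8):.0%}")
print(f"FP16 -> INT4 saves {storage_reduction(16, 4):.0%}")
print(f"Largest round-trip error: {max_error:.4f}")
```

The round-trip error is bounded by half the scale, which is the accuracy cost traded for the smaller footprint.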

Implementation Frameworks, Complementary Tools, and Open Source Community Resources

Deployment platform selection depends on organizational requirements and technical expertise. Ollama excels in simplicity, providing pre-configured Ollama models with minimal setup requirements. LM Studio offers a user-friendly interface for managing local models, including importing local files and monitoring model cards. These packaged tools suit organizations seeking rapid deployment without extensive AI infrastructure experience. Custom implementations using tools like Hugging Face and direct integration with machine learning frameworks provide greater flexibility for complex enterprise requirements.

Integration patterns vary from standalone applications to comprehensive enterprise AI platforms. Microservices architectures enable modular deployment where different models serve specific business functions. This approach supports gradual rollout strategies and allows organizations to optimize different models for different use cases simultaneously.
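
The modular pattern described above can be sketched as a small router that maps each business function to its own model backend. The handlers here are stubs standing in for real model services, and the task names are hypothetical.

```python
from typing import Callable, Dict

ModelFn = Callable[[str], str]


class ModelRouter:
    """Dispatch prompts to the model registered for a given task."""

    def __init__(self, default: ModelFn) -> None:
        self._routes: Dict[str, ModelFn] = {}
        self._default = default

    def register(self, task: str, handler: ModelFn) -> None:
        """Attach a model backend to a task name."""
        self._routes[task] = handler

    def handle(self, task: str, prompt: str) -> str:
        """Route to the task's model, falling back to the default."""
        return self._routes.get(task, self._default)(prompt)


def make_stub(label: str) -> ModelFn:
    """Stand-in for a call to a real model service (illustrative only)."""
    return lambda prompt: f"[{label}] {prompt}"


router = ModelRouter(default=make_stub("general-model"))
router.register("code", make_stub("code-model"))
router.register("support", make_stub("support-model"))

print(router.handle("code", "Write a SQL query for monthly revenue."))
print(router.handle("legal", "Summarize this contract."))
```

Because each task is registered independently, a new model can be rolled out for one function without touching the others, which is what makes gradual rollout practical.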

Monitoring and observability tools become critical for production deployments. Organizations require visibility into inference latency, model accuracy, resource utilization, and user interaction patterns. These metrics support capacity planning, performance optimization, and compliance reporting requirements.
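
A minimal sketch of latency tracking follows, assuming nearest-rank percentiles are acceptable for reporting. The class and method names are illustrative; a production setup would typically export these values to an existing metrics system rather than hold them in memory.

```python
import math
import time
from typing import Any, Callable, List


class LatencyMonitor:
    """Collect per-request latencies and report percentiles."""

    def __init__(self) -> None:
        self.samples_ms: List[float] = []

    def record(self, latency_ms: float) -> None:
        """Store one latency observation in milliseconds."""
        self.samples_ms.append(latency_ms)

    def timed(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Run fn, record how long it took, and return its result."""
        start = time.perf_counter()
        result = fn(*args, **kwargs)
        self.record((time.perf_counter() - start) * 1000)
        return result

    def percentile(self, pct: float) -> float:
        """Nearest-rank percentile of the recorded latencies."""
        if not self.samples_ms:
            raise ValueError("no samples recorded")
        ordered = sorted(self.samples_ms)
        rank = max(1, math.ceil(pct * len(ordered) / 100))
        return ordered[rank - 1]


monitor = LatencyMonitor()
for latency in (10, 20, 30, 40, 50, 60, 70, 80, 90, 100):
    monitor.record(latency)

print("p50:", monitor.percentile(50), "ms")
print("p95:", monitor.percentile(95), "ms")
```

Tail percentiles such as p95 are usually more informative for capacity planning than the mean, because a few slow requests dominate the user experience.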

Compliance, Security, and Governance Considerations in Running Open Source LLMs

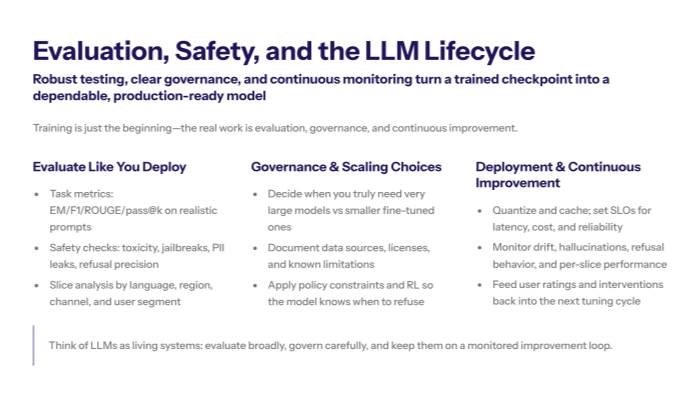

Data governance frameworks must address the complete AI lifecycle from model selection through inference and output management. Organizations require clear policies governing what data can be processed, how fine-tuned models are trained and validated, and how inference results are stored and shared. These frameworks become particularly complex when dealing with cross-border data residency requirements and industry-specific regulations.

Security implementation encompasses multiple layers including model encryption, access controls, network security, and audit trails. Model files themselves require protection as valuable intellectual property. Access controls must govern not only who can use AI applications but also who can modify, retrain, or deploy different models within the organization.
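
The split between using, retraining, and deploying models can be expressed as role-based permissions with an audit trail. The roles, actions, and user names below are hypothetical; a real deployment would integrate with the organization's identity provider and write the log to tamper-resistant storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-action mapping.
PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"infer"},
    "ml_engineer": {"infer", "fine_tune"},
    "admin": {"infer", "fine_tune", "deploy"},
}


@dataclass
class AccessController:
    """Check permissions and record every decision for later audit."""

    audit_log: List[dict] = field(default_factory=list)

    def authorize(self, user: str, role: str, action: str) -> bool:
        """Return whether the role may perform the action, logging the outcome."""
        allowed = action in PERMISSIONS.get(role, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        })
        return allowed


controller = AccessController()
print(controller.authorize("ana", "viewer", "infer"))
print(controller.authorize("ana", "viewer", "deploy"))
print(len(controller.audit_log), "audit entries recorded")
```

Denied attempts are logged as well as granted ones, since failed access attempts are often what an audit needs to surface.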

Compliance validation procedures ensure ongoing adherence to regulatory requirements. For healthcare organizations, this includes HIPAA compliance for any processing of text that contains protected health information. Financial services must address SOX requirements for AI applications involved in financial reporting. Government contractors face additional requirements around data classification and clearance levels for personnel managing AI systems.

Model versioning and change management processes become essential as organizations deploy multiple models and iterate on fine-tuned versions. These processes must track model lineage, training data sources, validation results, and deployment history to support audit requirements and rollback capabilities.
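
A minimal registry sketch shows how lineage, training data sources, validation results, and deployment history might be tracked together. The field names and version identifiers are illustrative assumptions; dedicated model registry tools offer far more than this in-memory example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ModelVersion:
    """Immutable record of how a model version was produced."""
    version: str
    base_model: str                 # lineage: which model it was tuned from
    training_data: Tuple[str, ...]  # identifiers of the datasets used
    eval_score: float               # validation result


class ModelRegistry:
    """Track registered versions and an append-only deployment history."""

    def __init__(self) -> None:
        self.versions: Dict[str, ModelVersion] = {}
        self.deploy_log: List[str] = []

    def register(self, model: ModelVersion) -> None:
        self.versions[model.version] = model

    def deploy(self, version: str) -> None:
        if version not in self.versions:
            raise KeyError(f"unknown version: {version}")
        self.deploy_log.append(version)

    def active(self) -> Optional[ModelVersion]:
        """Return the currently deployed version, if any."""
        return self.versions[self.deploy_log[-1]] if self.deploy_log else None

    def rollback(self) -> ModelVersion:
        """Redeploy the version that was active before the current one."""
        if not self.deploy_log:
            raise RuntimeError("nothing has been deployed")
        current = self.deploy_log[-1]
        previous = next(
            (v for v in reversed(self.deploy_log) if v != current), None)
        if previous is None:
            raise RuntimeError("no earlier version to roll back to")
        self.deploy(previous)
        return self.versions[previous]


registry = ModelRegistry()
registry.register(ModelVersion("v1", "base-7b", ("tickets-2023",), 0.81))
registry.register(ModelVersion("v2", "base-7b", ("tickets-2023", "tickets-2024"), 0.78))
registry.deploy("v1")
registry.deploy("v2")
restored = registry.rollback()
print("Active after rollback:", restored.version)
print("Deployment history:", registry.deploy_log)
```

The rollback is recorded as a new deployment instead of erasing the failed one, so the history stays complete for auditors.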

Risk Assessment and Mitigation Strategies for Enterprise AI Deployments

Technical risks include model bias, hallucinations, and performance degradation over time. Organizations must implement testing frameworks that continuously evaluate model outputs for accuracy, bias, and appropriateness. This includes adversarial testing to identify potential failure modes and monitoring systems to detect performance degradation in production environments.
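
Continuous evaluation can start as a small regression suite run on every model update. The sketch below checks that each response contains an expected phrase and reports a pass rate. The stub model, test cases, and 0.9 threshold are hypothetical, and substring matching is a deliberately simple stand-in for richer accuracy and bias checks.

```python
from typing import Callable, Dict, List, Tuple

TestCase = Tuple[str, str]  # (prompt, phrase expected in the response)


def evaluate(model: Callable[[str], str],
             cases: List[TestCase],
             threshold: float = 0.9) -> Dict[str, object]:
    """Run the model on each case and summarize the pass rate."""
    failures = [
        (prompt, expected)
        for prompt, expected in cases
        if expected.lower() not in model(prompt).lower()
    ]
    pass_rate = 1 - len(failures) / len(cases)
    return {
        "pass_rate": pass_rate,
        "passed": pass_rate >= threshold,
        "failures": failures,
    }


# Stub standing in for a deployed model (illustrative only).
CANNED = {
    "What is the refund window?": "Refunds are accepted within 30 days.",
    "Which plan includes SSO?": "SSO is part of the Enterprise plan.",
    "What is the support email?": "Please contact our team by phone.",
    "Is data encrypted at rest?": "Yes, all data is encrypted at rest.",
}


def stub_model(prompt: str) -> str:
    return CANNED.get(prompt, "")


CASES: List[TestCase] = [
    ("What is the refund window?", "30 days"),
    ("Which plan includes SSO?", "Enterprise"),
    ("What is the support email?", "support@"),
    ("Is data encrypted at rest?", "encrypted"),
]

report = evaluate(stub_model, CASES)
print(f"Pass rate: {report['pass_rate']:.0%}, passed: {report['passed']}")
for prompt, expected in report["failures"]:
    print(f"FAILED: {prompt!r} (expected {expected!r})")
```

Running the same suite on a schedule against the production model is one way to notice performance degradation before users report it.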

Operational risks encompass infrastructure failures, scaling challenges, and maintenance overhead. Local deployments require internal expertise for troubleshooting, updates, and capacity management. Organizations must plan for hardware failures, software updates, and scaling demands that cloud providers typically handle automatically.

| Risk Category | Impact Level | Mitigation Strategy | Implementation Priority |
|---|---|---|---|
| Model Bias | High | Continuous testing, diverse training data | Critical |
| Infrastructure Failure | Medium | Redundancy, backup systems | High |
| Security Breach | High | Encryption, access controls, monitoring | Critical |
| Performance Degradation | Medium | Performance monitoring, model updates | Medium |
| Compliance Violations | High | Regular audits, automated compliance checks | Critical |

Legal risks center around licensing compliance, intellectual property protection, and liability considerations. Organizations must ensure all deployed models operate within their license terms, particularly regarding commercial use restrictions. Some open source models include provisions limiting commercial applications or requiring attribution that may conflict with business requirements. Permissive licenses like Apache 2.0 and MIT provide greater flexibility for enterprise use.

Implementation Strategy, ROI Analysis, and Future-Proofing with Open Source LLMs

Phased deployment approaches minimize risk while building internal capabilities. Organizations typically begin with pilot projects using smaller models for specific use cases, gradually expanding to larger models and broader applications as expertise develops. This progression allows teams to understand infrastructure requirements, develop operational procedures, and demonstrate business value before major investments.

Total cost of ownership analysis must consider hardware acquisition, software licensing, personnel costs, and ongoing maintenance. While local deployments eliminate usage-based API fees, they require upfront capital investment and internal expertise. Most organizations achieve positive ROI within 6-12 months for moderate-to-high usage scenarios, with cost advantages increasing over time.

Performance metrics should align with business objectives rather than purely technical benchmarks. Customer service applications might prioritize response time and user satisfaction scores. Content generation use cases focus on output quality and user productivity improvements. Financial applications emphasize accuracy and compliance with regulatory requirements.

Change management strategies address organizational adoption challenges and user training requirements. Success requires executive sponsorship, clear communication of benefits, and comprehensive training programs. Organizations must also address concerns about job displacement and ensure employees understand how AI tools augment rather than replace human capabilities.

Future-Proofing and Strategic Roadmap for Large Language and Open Source Models

Emerging model architectures including mixture-of-experts designs and multimodal capabilities will reshape enterprise AI strategies through 2026. Organizations should plan infrastructure that can accommodate these developments while maintaining compatibility with current deployments. This includes ensuring hardware can support larger models and more complex workloads as they become available.

Integration with edge computing extends local AI capabilities to distributed locations and mobile devices. This convergence enables AI applications at retail locations, manufacturing facilities, and field operations where connectivity may be limited. Organizations should consider how local models can support edge deployment scenarios as part of their broader digital transformation strategies.

Vendor ecosystem development around open source models continues accelerating, with commercial support options becoming increasingly available. Organizations should monitor these developments to identify opportunities for professional support, managed services, and complementary tools that can reduce internal maintenance overhead while preserving the benefits of local deployment.

Why Open Source AI Models for Local Deployment Are the Best Open Source Models for Enterprise Strategy?

Open source AI models for local deployment represent a strategic inflection point for enterprise technology leadership. Organizations that successfully implement these capabilities gain substantial competitive advantages through cost optimization, data sovereignty, and customization capabilities impossible with cloud-dependent solutions. The combination of mature open source models, proven deployment tools, and demonstrated ROI creates compelling conditions for enterprise adoption.

The strategic implications extend beyond immediate operational benefits. Local AI deployment provides protection against vendor dependencies, enables compliance with evolving regulations, and creates opportunities for proprietary AI applications that differentiate businesses in their markets. As model capabilities continue advancing and deployment tools mature further, early adopters will establish sustainable advantages in their industries.

Enterprise leaders must act decisively to capture these opportunities. The technology maturity, cost advantages, and strategic benefits create optimal conditions for local AI deployment initiatives. Organizations that delay adoption risk falling behind competitors who successfully implement these capabilities and begin realizing compounding benefits from their AI investments.
