Why Do Some AI Models Struggle with Edge Cases? Insights and Solutions

Artificial intelligence (AI) has transformed industries, from healthcare to autonomous vehicles, but even the most advanced AI models show weaknesses when faced with edge cases. These are rare, unusual, or extreme scenarios that fall outside the typical training data distribution.

Edge cases are not just statistical outliers. They are the unexpected situations in which a model's limitations become apparent.

For example:

A self-driving car failing to interpret a temporary construction sign.

A diagnostic tool misclassifying a rare disease due to lack of representative data.

A fraud detection system blocking an atypical but valid transaction.

Failure to account for these scenarios exposes organizations to safety risks, reputational damage, and financial losses. This makes edge case handling a critical part of any AI project roadmap.

Key Takeaways

Edge cases are low-frequency but high-impact events that AI models must learn to manage.

Ignoring edge cases can lead to AI failure, particularly in high-stakes environments such as healthcare and autonomous vehicles.

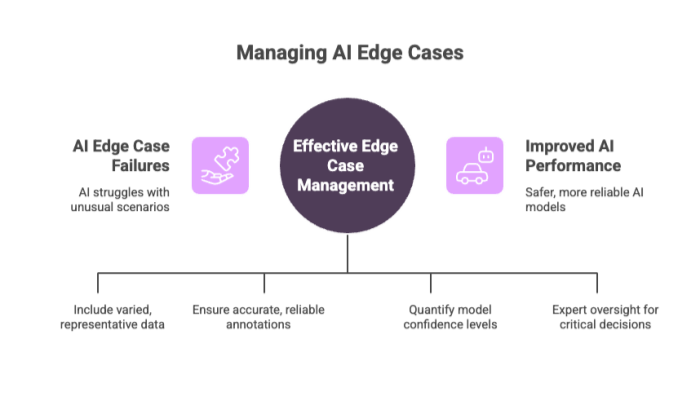

Effective management combines diverse training data, annotation quality, uncertainty estimation, and human-in-the-loop review.

Ethical implications arise when unaddressed edge cases disproportionately harm vulnerable groups.

The long tail problem means edge cases are effectively infinite; strategies must focus on adaptation, not elimination.

Companies that invest in edge case management improve model performance, safety, and trust.

The Impact of Edge Cases on AI Projects

Edge cases pose significant challenges to AI projects by exposing the limitations of models trained predominantly on typical data. These rare and unexpected instances can cause AI systems to fail in critical ways, affecting safety, performance, and trust. Understanding their impact is essential for developing more resilient and reliable AI solutions.

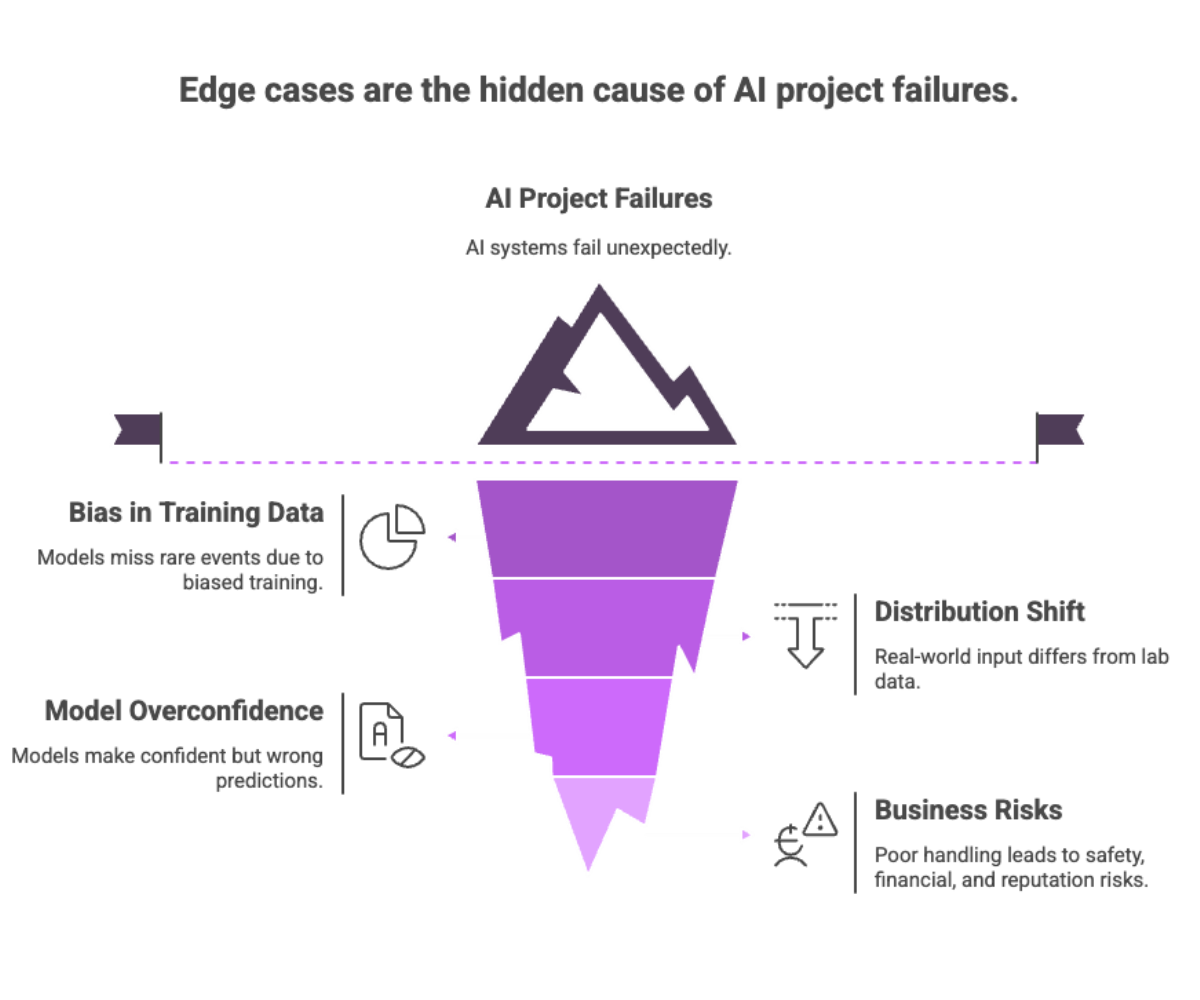

Why Do Edge Cases Break AI Models?

Bias in training data: Models trained only on typical patterns miss rare events.

Distribution shift: Real-world input diverges from lab-tested data.

Overconfidence: Models often produce confident but wrong predictions on unfamiliar cases.

When AI projects fail, edge cases are often the culprit.

Business Risks of Poor Edge Case Handling

| Risk Type | Example | Consequence |
|---|---|---|
| Safety | Misclassified pedestrian by autonomous vehicles | Accident, liability |
| Financial | Rare fraud pattern ignored by fraud detection | Monetary loss, regulatory fines |
| Reputation | AI chatbot mishandles sensitive query | Loss of customer trust |
| Compliance | Bias in decision systems (e.g., loan approvals) | Legal action, sanctions |

A company that underestimates edge cases risks both short-term losses and long-term erosion of trust.

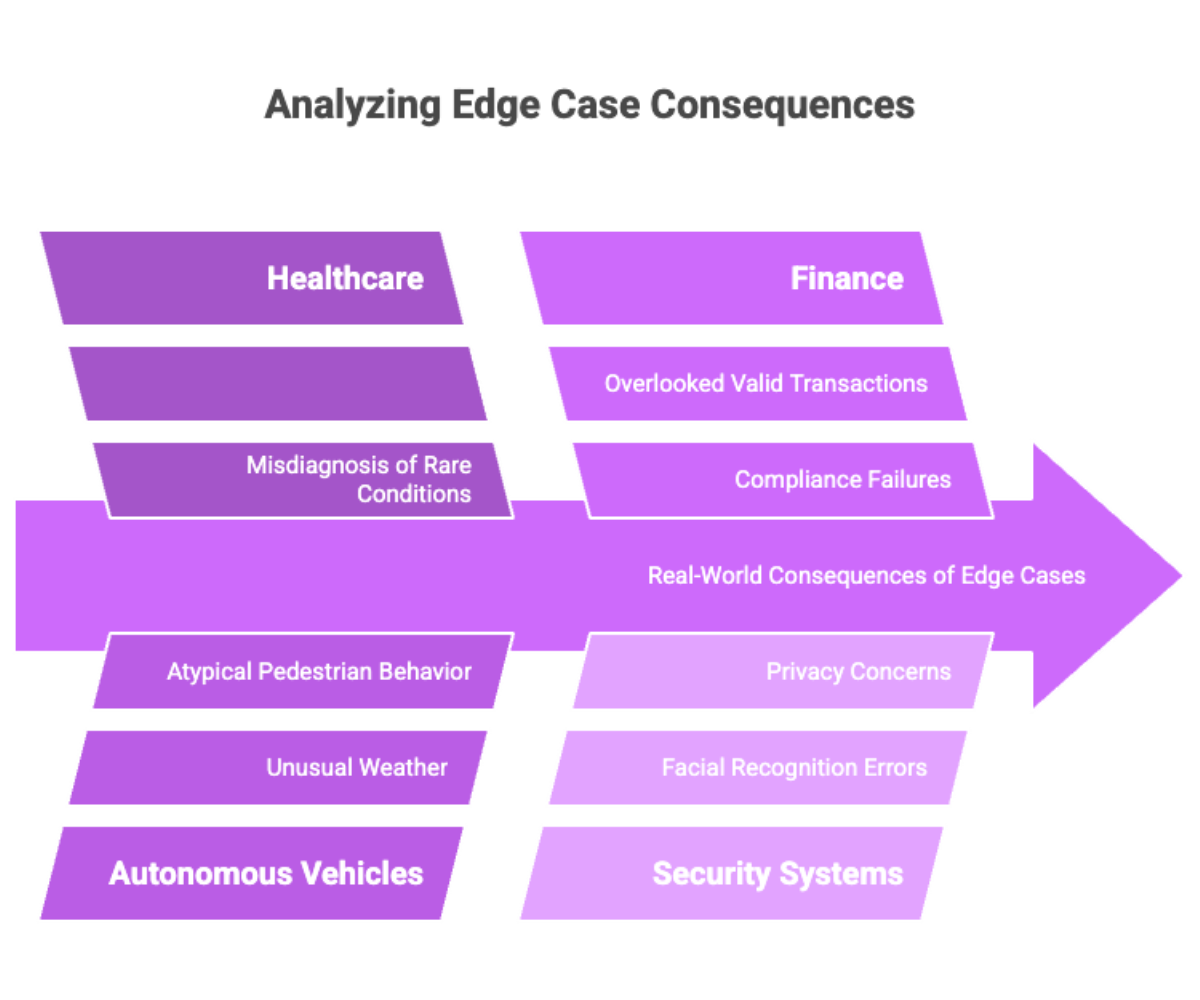

Real-World Consequences of Edge Cases

Healthcare: A misdiagnosed rare condition may delay treatment and endanger lives.

Autonomous vehicles: Edge cases like unusual weather or atypical pedestrian behavior have caused real accidents.

Finance: AI systems that mishandle unusual transactions, whether by blocking valid ones or by missing novel fraud patterns, have created compliance and fraud detection failures.

Security systems: Edge cases in facial recognition lead to misidentification, raising concerns over privacy and integrity.

These examples illustrate why ignoring edge cases is not just a technical oversight but a critical challenge with human, financial, and ethical consequences.

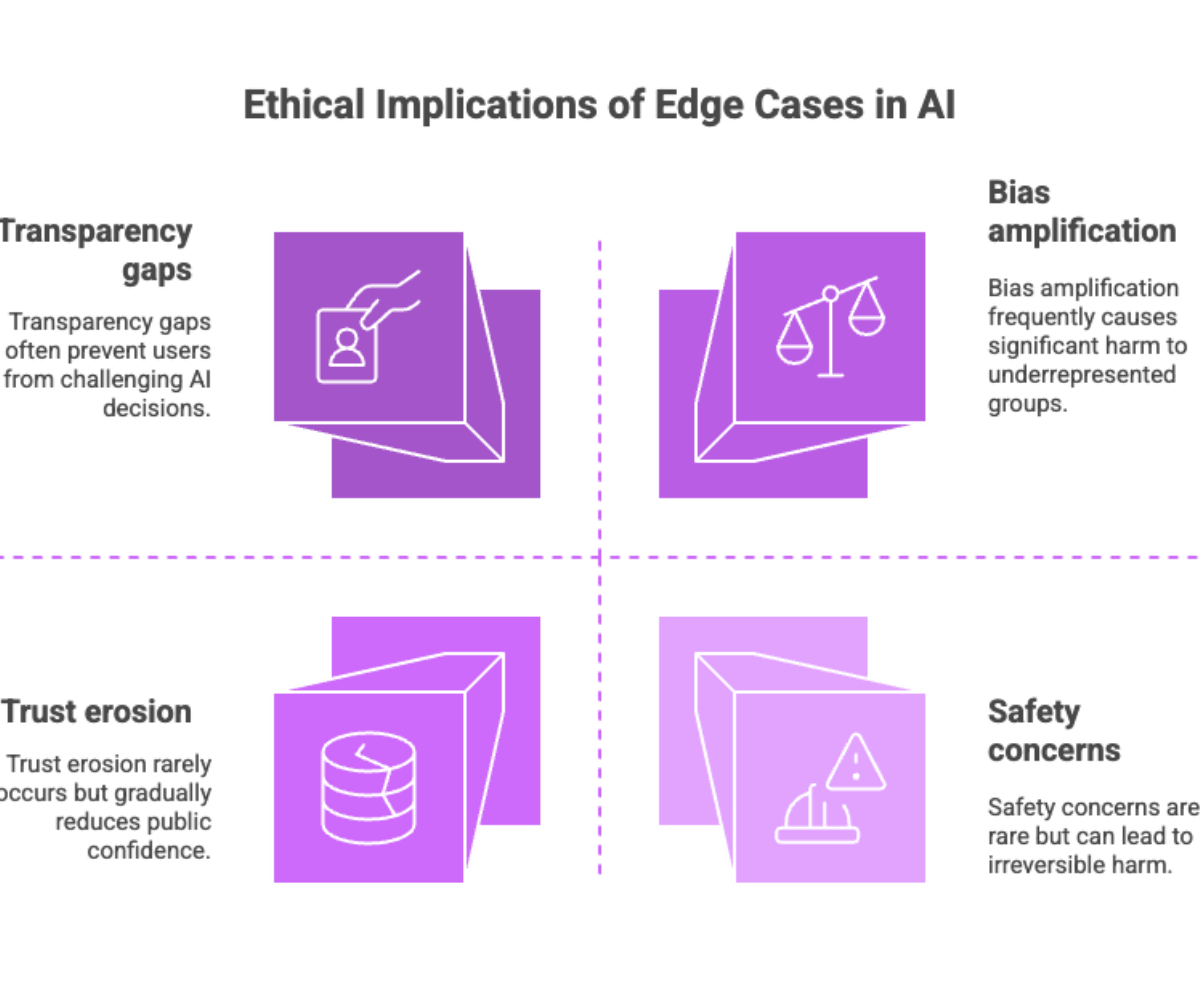

Ethical Implications of Edge Cases

Bias amplification: Underrepresented groups face disproportionate errors.

Transparency gaps: Users cannot challenge AI decisions if failures are hidden.

Safety concerns: A single edge case failure can cause irreversible harm.

Trust erosion: Repeated errors in rare cases reduce public confidence in AI.

Ethical implications demand robust data governance, diverse datasets, and continuous monitoring.

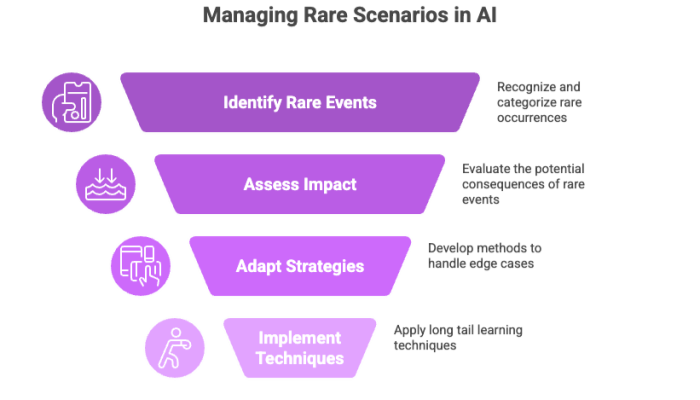

Understanding the Long Tail of Edge Cases

The long tail refers to the vast number of rare scenarios in the real world. While each event may occur infrequently, collectively they dominate the error space of AI models.

Why Does the Long Tail Matter?

It’s impossible to anticipate every scenario.

Rare events often carry critical impact (e.g., an airplane malfunction, not a routine flight).

Continuous adaptation is required to handle edge cases effectively.

Long tail learning techniques such as re-sampling, weighted loss functions, and data augmentation help balance datasets and improve robustness.
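As a rough illustration of the weighted loss idea, the sketch below assigns each class a weight inversely proportional to its frequency and uses those weights in a cross-entropy loss. The function names, the toy labels, and the fixed predicted probabilities are assumptions made for this example; the weighting formula is the common "balanced" heuristic, n_samples / (n_classes * class_count), not a method prescribed by this article.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class); each entry of probs is a dict."""
    total = sum(-weights[y] * math.log(p[y]) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)

# Toy dataset (hypothetical): nine common examples and one rare example.
labels = ["common"] * 9 + ["rare"]
weights = inverse_frequency_weights(labels)

# A model that always leans towards the common class.
probs = [{"common": 0.9, "rare": 0.1}] * len(labels)

uniform = {"common": 1.0, "rare": 1.0}
plain_loss = weighted_cross_entropy(probs, labels, uniform)
balanced_loss = weighted_cross_entropy(probs, labels, weights)
```

With the balanced weights, the single rare example contributes as much to the loss as the nine common ones combined, so a model that neglects the rare class is penalized more heavily than under an unweighted loss.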

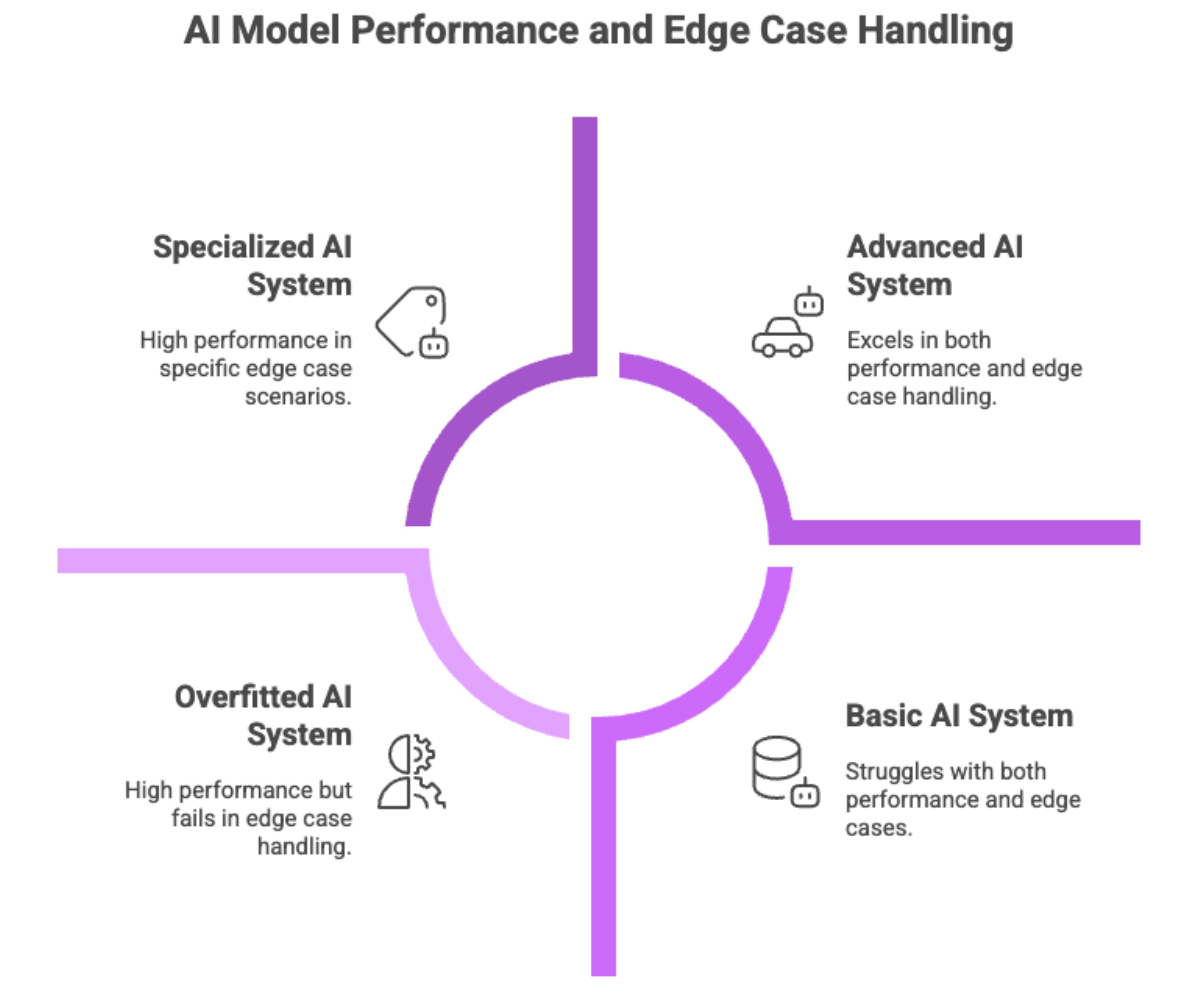

AI Failure and Edge Cases

AI models are not all created equal: their ability to handle complex tasks varies with the constraints of their training data and model design. Understanding why some models struggle with edge cases means examining the context and nuance that these rare but critical scenarios involve. Handling them well improves model performance, safety, and trust in AI-powered systems.

Common Edge Cases: Self-Driving Cars

Unusual weather (fog, snow, heavy rain).

Irregular road signs or temporary detours.

Pedestrians behaving unpredictably.

Emergency vehicles approaching at unusual angles.

Why Does It Matter?

Safety: A single edge case can lead to fatalities.

Scale: Billions of real-world miles mean edge cases are inevitable.

Public trust: Failures reduce acceptance of autonomous systems.

Companies working on autonomous vehicles invest heavily in simulation frameworks to generate rare edge scenarios, but the middle stage of development remains the most challenging, as it is where systems confront the unpredictable nature of the real world.

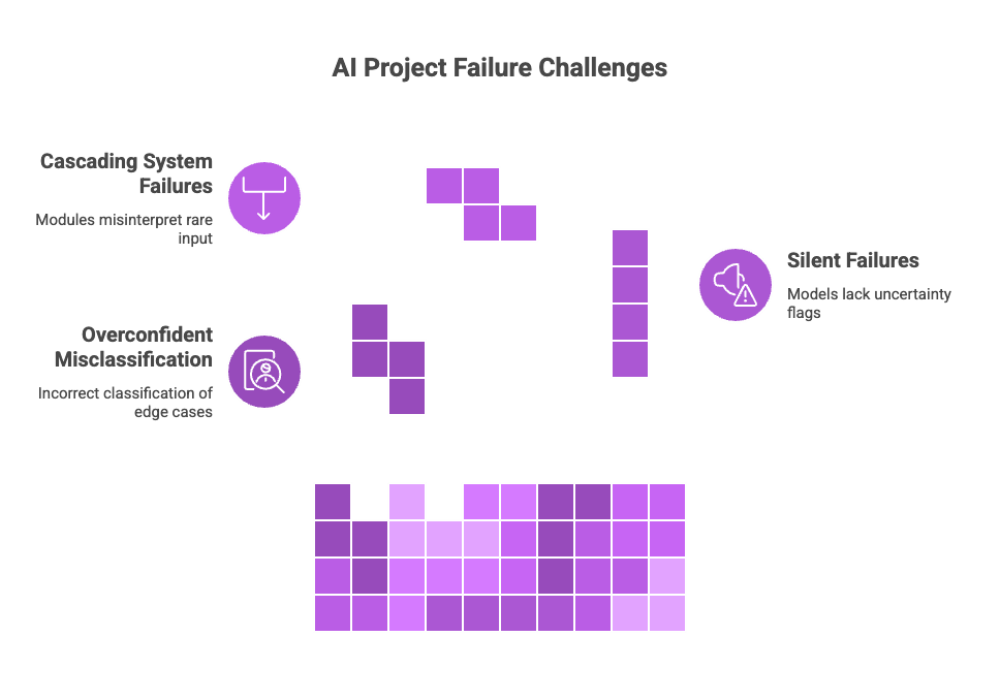

AI Failure and Edge Cases

When AI projects fail, a common root cause is an inability to handle edge cases. Patterns include:

Overconfident misclassification.

Silent failures where models do not raise uncertainty flags.

Cascading system failures when multiple modules misinterpret rare input.

Mitigation strategies include uncertainty estimation, fallback policies, and human intervention.
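One simple way to combine these three mitigations is to measure how spread out a model's predicted probabilities are and to hand the decision to a person when the spread is too high. The sketch below uses normalized Shannon entropy as the uncertainty score. The function names, the `DEFER` marker, and the 0.5 threshold are hypothetical choices for illustration, and the code assumes at least two classes. Because models can be confidently wrong on unfamiliar inputs, an entropy check like this is a first line of defense rather than a complete solution.

```python
import math

DEFER = "DEFER_TO_HUMAN"  # hypothetical marker for the fallback path

def normalized_entropy(probs):
    """Shannon entropy of a probability vector, scaled to the range [0, 1]."""
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    return entropy / math.log(len(probs))

def route(probs, labels, max_entropy=0.5):
    """Return the predicted label, or defer when the prediction is too uncertain."""
    if normalized_entropy(probs) > max_entropy:
        return DEFER
    best = max(range(len(probs)), key=lambda i: probs[i])
    return labels[best]

labels = ["pedestrian", "cyclist", "sign"]
confident = route([0.98, 0.01, 0.01], labels)  # low entropy: accept the prediction
uncertain = route([0.40, 0.35, 0.25], labels)  # high entropy: escalate to a person
```

The threshold controls the trade-off between automation and review workload; in practice it would be tuned on held-out data rather than fixed in advance.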

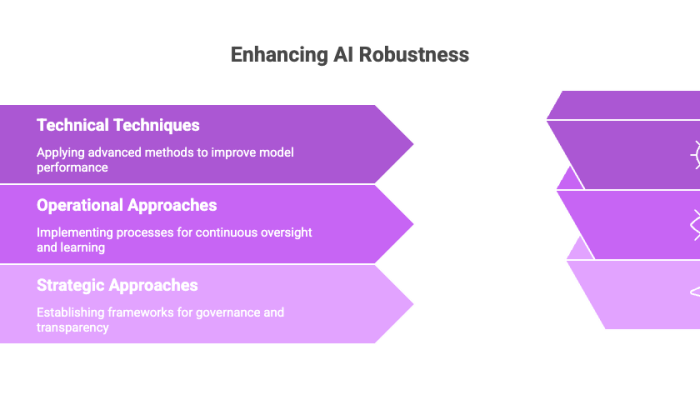

Solutions for Edge Case Handling

Effectively managing edge cases requires a combination of advanced techniques and thoughtful processes. By integrating diverse data sources, leveraging AI tools, and incorporating human judgment, organizations can improve model robustness and ensure AI systems perform reliably even in unexpected situations.

Technical Techniques

Data Augmentation: Generate synthetic variations of rare scenarios.

Transfer Learning: Use related datasets to improve generalization.

Ensemble Methods: Combine multiple models to diversify errors.

Uncertainty Estimation: Flag low-confidence predictions for review.

Robust Optimization: Train models to resist noisy or adversarial inputs.
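To make the ensemble idea concrete, here is a minimal sketch of majority voting in which disagreement between models doubles as an uncertainty signal. The function name, the 0.75 agreement threshold, and the example labels are assumptions for illustration only.

```python
from collections import Counter

def ensemble_vote(predictions, min_agreement=0.75):
    """Majority vote over model outputs.

    Returns (label, agreement, needs_review), where agreement is the share
    of models that voted for the winning label.
    """
    label, votes = Counter(predictions).most_common(1)[0]
    agreement = votes / len(predictions)
    return label, agreement, agreement < min_agreement

# Four hypothetical models agree on a familiar input...
familiar = ensemble_vote(["stop", "stop", "stop", "stop"])
# ...but split on an unusual one, which flags it for review.
unusual = ensemble_vote(["stop", "stop", "yield", "speed_limit"])
```

When the models agree, the vote is accepted; when they split, the low agreement score is a cheap hint that the input may be an edge case worth a closer look.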

Operational Approaches

Human-in-the-loop: Expert oversight in ambiguous scenarios.

Continuous monitoring: Detect drift and new edge cases post-deployment.

Edge case logging: Build structured libraries of failures for retraining.
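For continuous monitoring, one widely used drift check is the Population Stability Index (PSI), which compares how a feature is distributed in production against a reference sample. The sketch below is a plain-Python version under simple assumptions: equal-width bins taken from the reference data, a small `eps` floor to avoid division by zero, and synthetic values in place of real telemetry. A PSI above roughly 0.25 is often quoted as a sign of significant shift, but that cutoff is a rule of thumb, not a guarantee.

```python
import math

def psi(reference, production, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        return [max(c / len(values), eps) for c in counts]

    ref, prod = fractions(reference), fractions(production)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref, prod))

# Synthetic example: production values drift towards the upper half of the range.
reference = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

no_drift = psi(reference, reference)
drift = psi(reference, production)
```

Running a check like this on a schedule, and logging the inputs that fall into previously empty bins, gives the edge case library a steady supply of candidates for retraining.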

Strategic Approaches

Invest in AI tools for automated annotation and analytics.

Adopt governance frameworks to enforce accountability.

Foster transparency with users by communicating limits.

Edge Case Annotation and Analysis

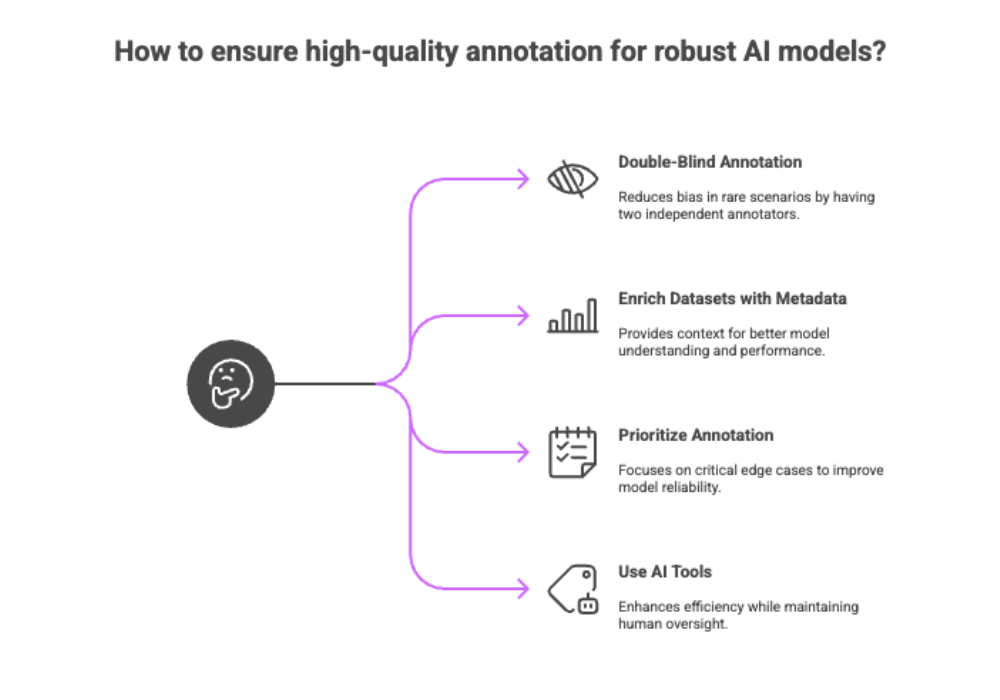

Annotation quality is critical for building robust AI models. Poor labeling amplifies edge case risks.

Best practices include:

Double-blind annotation for rare scenarios.

Enriching datasets with metadata (time, location, environmental conditions).

Prioritizing annotation based on frequency and severity of edge cases.

Using AI tools for semi-automated annotation while keeping humans in control.
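Prioritizing by frequency and severity can be as simple as sorting a backlog by a combined score. The sketch below multiplies the two; the `EdgeCase` fields, the scales, and the example entries are hypothetical, and a team handling safety-critical cases might well weight severity more heavily than a plain product does.

```python
from dataclasses import dataclass

@dataclass
class EdgeCase:
    name: str
    frequency: float  # estimated occurrences per 10,000 inputs (assumed scale)
    severity: int     # 1 (cosmetic) to 5 (safety-critical) (assumed scale)

def annotation_queue(cases):
    """Order edge cases so the highest frequency * severity score comes first."""
    return sorted(cases, key=lambda c: c.frequency * c.severity, reverse=True)

backlog = [
    EdgeCase("dense fog", frequency=12, severity=3),
    EdgeCase("emergency vehicle at odd angle", frequency=2, severity=5),
    EdgeCase("faded lane markings", frequency=30, severity=2),
]
queue = [case.name for case in annotation_queue(backlog)]
```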

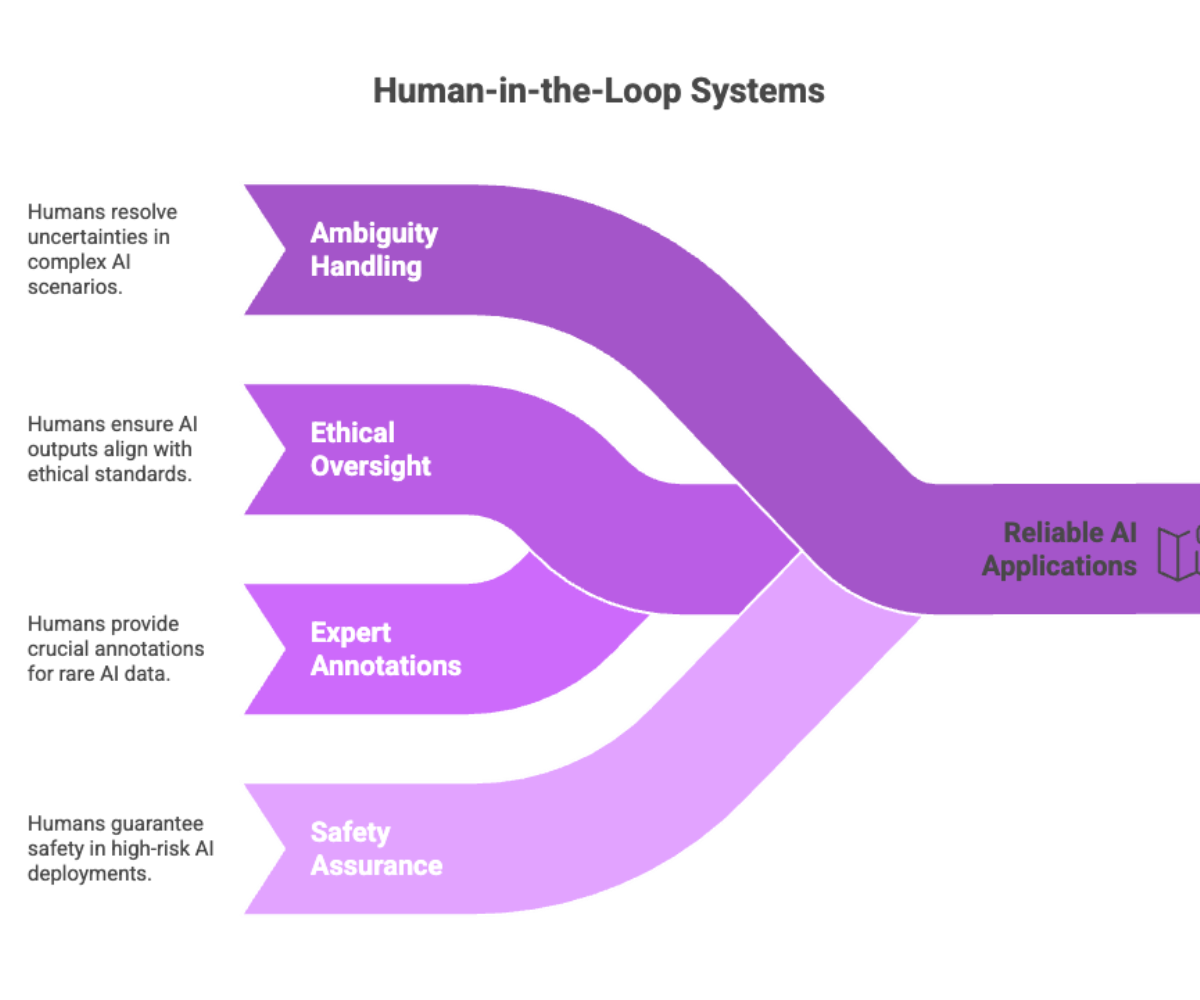

The Role of Human Judgment

No matter how advanced AI models become, humans remain indispensable in:

Handling ambiguity in complex scenarios.

Overriding unsafe or unethical model outputs.

Providing expert annotations for rare training data.

Ensuring safety and accountability in high-risk deployments.

Human-in-the-loop systems combine computational efficiency with human oversight, creating more reliable AI applications.

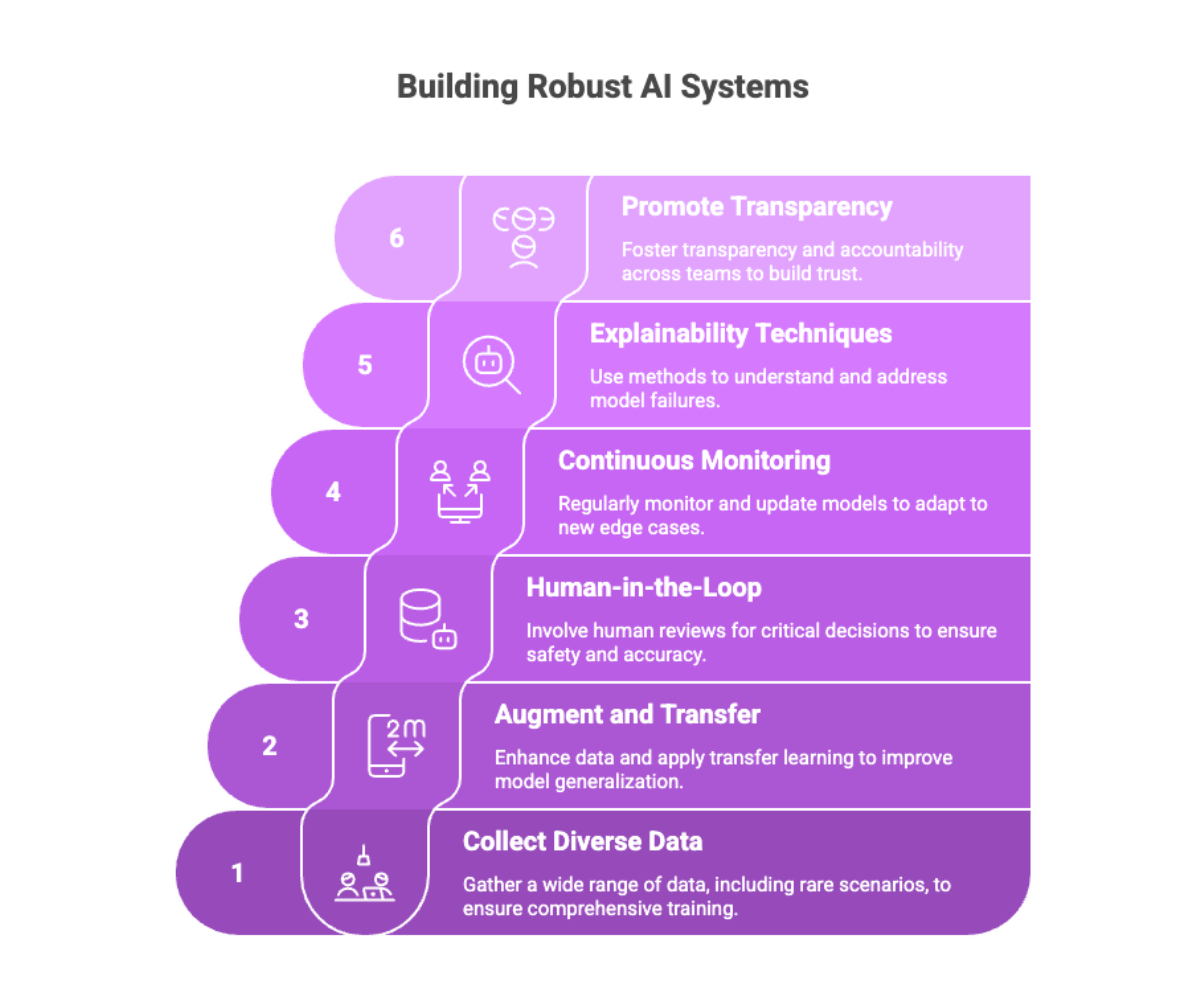

Best Practices for Edge Case Handling

Collect diverse training data that includes rare scenarios.

Apply data augmentation and transfer learning for better generalization.

Implement human-in-the-loop reviews for safety-critical decisions.

Monitor and update models continuously to capture new edge cases.

Use explainability techniques to verify why models fail.

Promote transparency and accountability across teams.

Conclusion: Preparing AI for the Real World

Edge cases represent the frontier of AI reliability. While common patterns define model competence, rare events define trust. Companies that ignore edge cases risk AI failure, ethical scrutiny, and safety breaches.

The future of AI depends on stronger solutions for edge case handling, including:

Smarter language models with better contextual reasoning.

Wider use of explainable AI for diagnosing failure.

Improved training data diversity to reduce bias.

Enhanced human-in-the-loop frameworks for accountability.

Addressing edge cases is not optional; it is the foundation of building AI that truly works in the real world.