Data Quality Audit Checklist for Effective Analysis

In today’s data-driven business environment, ensuring the accuracy, reliability, and completeness of organizational data is paramount. This article presents a comprehensive data quality audit checklist designed to help enterprises systematically evaluate and enhance their data assets. We explore the critical role of data quality audits in supporting sound business decisions, operational efficiency, and regulatory compliance. By integrating advanced data quality checks and strategic audit planning, organizations can confidently manage their data, mitigate risks, and unlock valuable insights that drive growth.

Organizations that prioritize data quality can unlock untapped potential, improve scalability, and drive measurable business outcomes. Ensuring data integrity not only supports current operations but also prepares the business for future challenges and growth.

Key Takeaways

A well-structured data quality audit checklist is essential to identify and remediate common data quality issues such as duplicate records, missing values, and inconsistent entries, thereby safeguarding data integrity.

Effective audit planning involves setting clear objectives, defining the audit scope, and aligning data quality metrics with organizational goals to ensure meaningful and actionable outcomes.

Combining basic and advanced data quality checks with continuous monitoring and governance frameworks enables organizations to maintain high-quality data that supports reliable business operations and compliance.

Regular audits, automated tools, and stakeholder engagement help to maintain high standards, reduce inefficiencies, and address issues proactively.

Introduction to Data Quality

Data quality forms the backbone of effective business operations, analytics, and decision-making. High-quality data ensures that every insight, report, and strategic move is grounded in accurate and reliable information. Conversely, poor data quality can lead to inefficiencies, compliance violations, and lost opportunities, undermining organizational performance.

A data quality audit is a systematic process to assess and improve the accuracy, completeness, consistency, and timeliness of data. Central to this process is the data quality audit checklist—a practical tool that guides data managers through essential validation steps to confirm data reliability across various systems and datasets.

Organizations such as IBM and Gartner emphasize that data accuracy and integrity are critical to digital transformation initiatives, especially as enterprises integrate AI/ML technologies and comply with regulations like GDPR and HIPAA. The following sections delve into the components and procedures necessary for an effective data quality audit.

Planning the Audit

Before launching a data quality audit, it is crucial to establish a clear framework that defines the audit’s objectives, scope, and resources. This strategic planning ensures that the audit delivers value aligned with business priorities.

Establish Clear Objectives

Setting precise goals for the audit helps focus efforts on critical data points and quality metrics. Objectives may include improving data accuracy to a specific threshold, eliminating duplicate entries, or ensuring compliance with industry standards such as PCI DSS for payment data security. Clear objectives also facilitate stakeholder buy-in and resource allocation.

Establishing measurable KPIs ensures data quality efforts are aligned with organizational goals, providing benchmarks such as minimum accuracy rates or reduction targets for duplicate records.
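As a rough illustration of such benchmarks, the sketch below encodes KPI targets and checks measured values against them. The class name `QualityKpi`, the metric names, and the target values are hypothetical placeholders, not recommended thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityKpi:
    name: str
    target: float
    higher_is_better: bool = True  # False for metrics such as duplicate rate

    def is_met(self, measured: float) -> bool:
        """Return True when the measured value satisfies the target."""
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Hypothetical targets; real values come from the audit objectives.
KPIS = [
    QualityKpi("accuracy_rate", target=0.98),
    QualityKpi("duplicate_rate", target=0.01, higher_is_better=False),
]


def evaluate_kpis(measured: dict[str, float]) -> dict[str, bool]:
    """Map each KPI name to whether its measured value meets the target."""
    return {kpi.name: kpi.is_met(measured[kpi.name]) for kpi in KPIS}


results = evaluate_kpis({"accuracy_rate": 0.991, "duplicate_rate": 0.03})
print(results)
```

Recording the direction of each KPI alongside its target keeps "minimum accuracy" and "maximum duplicate rate" goals in one structure.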

Define the Audit Scope

Determining which datasets, departments, or business units to audit is essential for managing complexity. For example, a financial institution might prioritize customer transaction data, while a healthcare provider focuses on patient records. Identifying the relevant systems and data sources, including databases, cloud platforms, and external feeds, ensures comprehensive coverage.

Identify Tools and Resources

Selecting appropriate tools for data collection, validation, and analysis is a key planning step. Data observability platforms such as Monte Carlo and data governance platforms such as Collibra provide automated monitoring and anomaly detection, streamlining the audit process. Automated data quality checks give data teams end-to-end coverage of the data pipeline and help them pinpoint the root causes of data issues quickly.

Audit trails also keep secure logs of data creation, modification, and deletion, providing accountability and traceability.
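One possible shape for such a log is sketched below: an append-only list of create, modify, and delete events. Chaining each entry to the hash of the previous one is an assumption added here to make tampering detectable; the function names and event fields are illustrative, not a standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(event: dict) -> str:
    """Hash every field of the event except the stored hash itself."""
    payload = {k: v for k, v in event.items() if k != "hash"}
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


def append_event(trail: list[dict], actor: str, action: str, record_id: str) -> dict:
    """Append a create/modify/delete event linked to the previous entry's hash."""
    if action not in {"create", "modify", "delete"}:
        raise ValueError(f"unsupported action: {action}")
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "record_id": record_id,
        "prev_hash": trail[-1]["hash"] if trail else "",
    }
    event["hash"] = _digest(event)
    trail.append(event)
    return event


def verify_trail(trail: list[dict]) -> bool:
    """Return False if any entry was altered or the chain was broken."""
    prev = ""
    for event in trail:
        if event["prev_hash"] != prev or event["hash"] != _digest(event):
            return False
        prev = event["hash"]
    return True


trail: list[dict] = []
append_event(trail, "alice", "create", "cust-001")
append_event(trail, "bob", "modify", "cust-001")
print(verify_trail(trail))
```

In practice the trail would live in write-restricted storage; the in-memory list only demonstrates the record structure.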

Assigning roles and responsibilities across IT, data governance, and business teams fosters accountability and effective governance, creating a culture of continuous data quality improvements aligned with organizational goals.

Develop a Remediation Plan

An effective audit plan includes strategies for addressing identified data quality issues. This may involve cleansing duplicate data, enriching incomplete records, or enhancing validation rules within data pipelines. The remediation plan should align with the organization’s broader data governance policies and compliance requirements.

“Data quality audits are not just about finding errors; they are about building trust in your data so that every decision is made with confidence.” — Data Governance Institute

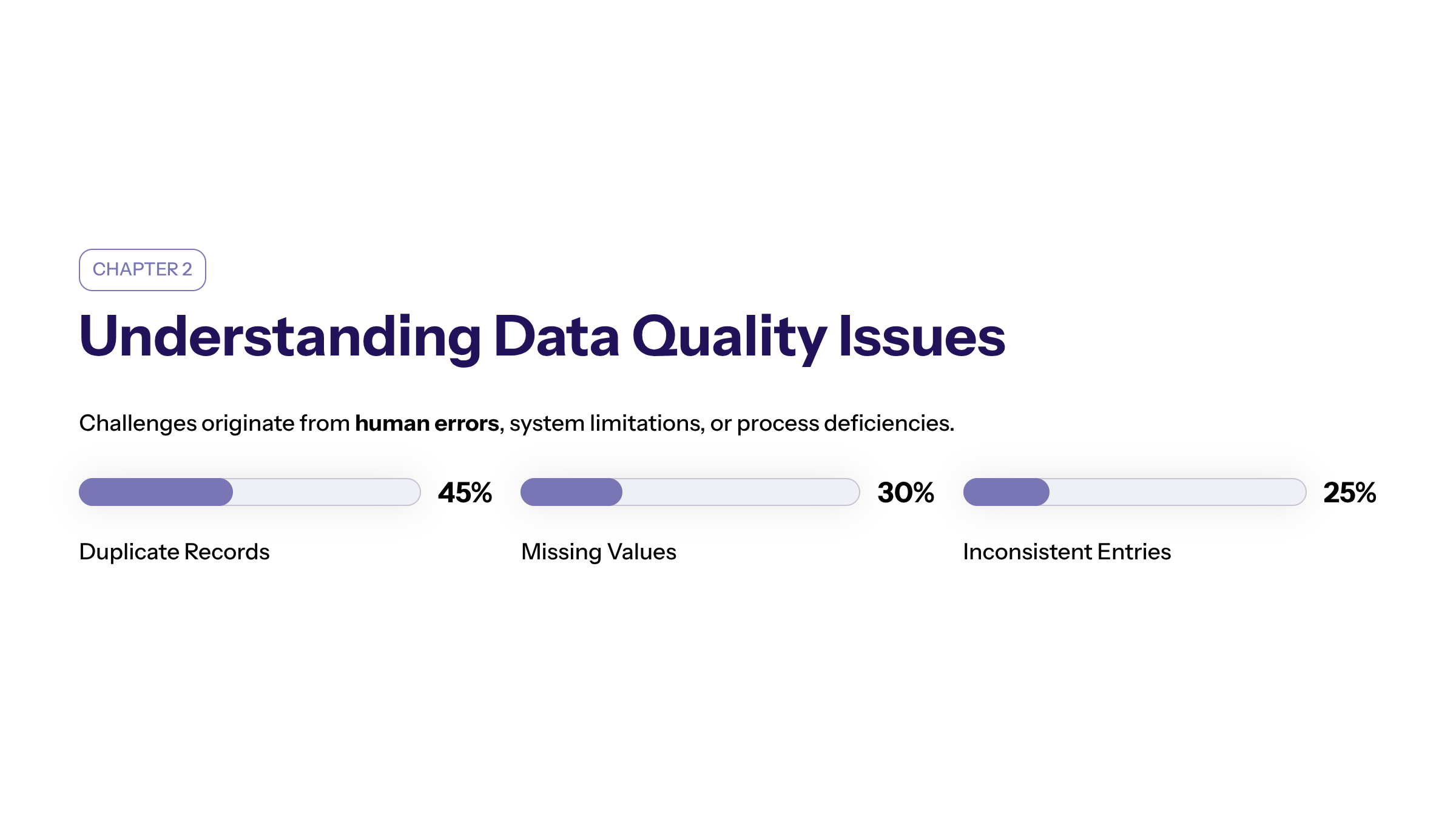

Understanding Data Quality Issues

Data quality challenges are multifaceted and can originate from human errors, system limitations, or process deficiencies. Recognizing these issues is critical to designing effective audit criteria.

Common Data Quality Issues

Duplicate Records: Repeated entries inflate storage costs and skew analytics, adversely affecting customer insights and operational reporting. Deduplication checks measure uniqueness and confirm that no duplicates remain.

Missing Values: Gaps in data fields compromise completeness, leading to inaccurate analyses and impaired compliance reporting.

Inconsistent Data Entry: Variations in formatting, naming conventions, or units across systems reduce data usability and complicate integration. Standardized formats create uniformity, improving data usability and reducing errors during integrations and analysis.

Invalid Data: Entries that do not conform to defined formats, ranges, or business rules undermine data validity and reliability.

Root Causes

Human factors such as manual data entry errors, lack of standardized procedures, and insufficient training contribute significantly to data quality problems. Additionally, system glitches, outdated validation rules, and inadequate data governance frameworks exacerbate these issues.

Data Quality Metrics

Key dimensions for data quality audits include Accuracy, Completeness, Consistency, Timeliness, Validity, Uniqueness, Relevance, and Compliance.

Accuracy: The degree to which data reflects real-world entities or events, typically verified by cross-referencing trusted sources.

Completeness: The extent to which all required data points are present, with no missing values or gaps in required fields.

Consistency: Uniformity of data across systems and datasets, including formatting, units, and naming conventions.

Timeliness: The currency of data relative to its intended use, assessed through refresh rates and the latency between an event occurring and its entry into the system.

Uniqueness: Absence of duplicate records.

Validity: Conformance to specified formats and business rules.

Relevance: Ensures datasets are aligned with current business needs and objectives.

Compliance: Adherence to regulatory requirements and standards.

Measuring these metrics enables organizations to prioritize audit efforts and track improvements over time.
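Several of these dimensions reduce to simple ratios. The sketch below computes completeness, uniqueness, and validity scores for a small list of records; the field names, sample data, and the 0 to 120 age range are assumptions chosen for illustration.

```python
def completeness(records, required_fields):
    """Share of required field slots that hold a non-empty value."""
    total = len(records) * len(required_fields)
    if total == 0:
        return 1.0
    filled = sum(
        1
        for rec in records
        for field in required_fields
        if rec.get(field) not in (None, "")
    )
    return filled / total


def uniqueness(records, key_fields):
    """Share of records with a distinct key (1.0 means no duplicates)."""
    if not records:
        return 1.0
    keys = {tuple(rec.get(f) for f in key_fields) for rec in records}
    return len(keys) / len(records)


def validity(records, field, is_valid):
    """Share of non-missing values in `field` that pass `is_valid`."""
    values = [rec[field] for rec in records if rec.get(field) not in (None, "")]
    if not values:
        return 1.0
    return sum(1 for v in values if is_valid(v)) / len(values)


records = [
    {"id": 1, "email": "a@example.com", "age": 34},
    {"id": 2, "email": "", "age": 51},
    {"id": 2, "email": "b@example.com", "age": -4},
    {"id": 3, "email": "c@example.com", "age": None},
]
scores = {
    "completeness": completeness(records, ["id", "email", "age"]),
    "uniqueness": uniqueness(records, ["id"]),
    "validity": validity(records, "age", lambda a: 0 <= a <= 120),
}
print(scores)
```

Tracking these ratios per dataset and per audit run gives a baseline against which later improvements can be compared.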

Data Collection

Data collection is a foundational step in the audit process, requiring meticulous planning to ensure comprehensive and reliable data acquisition.

Sources and Methods

Data may be sourced from internal databases, cloud storage, third-party systems, or data lakes. Leveraging APIs, ETL pipelines, and direct database queries facilitates efficient data extraction while maintaining integrity.

Handling and Storage

Proper handling protocols, including secure transfer methods and controlled access, prevent data corruption or unauthorized modifications. Ensuring data is stored in formats consistent with organizational standards supports subsequent validation.

Data security protects sensitive information and establishes compliance with regulatory standards, safeguarding data throughout its lifecycle.

Validation and Verification

Applying data quality checks during collection helps identify anomalies early. Techniques include format validation, range checks, and cross-referencing with trusted sources. Detecting duplicate data and missing values at this stage reduces remediation efforts downstream.
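A minimal sketch of collection-time validation follows, assuming incoming records are dictionaries with hypothetical `email`, `amount`, and `order_date` fields. Records that fail a format or range check are set aside with their reasons rather than loaded.

```python
import re
from datetime import date

# Deliberately loose pattern: something@something.something
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_record(rec: dict) -> list[str]:
    """Return a list of problems found in one incoming record."""
    problems = []
    if not EMAIL_RE.match(rec.get("email") or ""):
        problems.append("email: bad format")
    amount = rec.get("amount")
    # Assumed business range for illustration: 0 to 1,000,000
    if not isinstance(amount, (int, float)) or not 0 <= amount <= 1_000_000:
        problems.append("amount: missing or out of range")
    try:
        date.fromisoformat(rec.get("order_date") or "")
    except (TypeError, ValueError):
        problems.append("order_date: not an ISO 8601 date")
    return problems


def split_batch(batch):
    """Separate clean records from those that need review."""
    accepted, quarantined = [], []
    for rec in batch:
        problems = validate_record(rec)
        if problems:
            quarantined.append((rec, problems))
        else:
            accepted.append(rec)
    return accepted, quarantined


batch = [
    {"email": "a@example.com", "amount": 120.5, "order_date": "2024-03-01"},
    {"email": "not-an-email", "amount": -5, "order_date": "03/01/2024"},
]
accepted, quarantined = split_batch(batch)
print(len(accepted), len(quarantined))
```

Keeping the rejection reasons next to each quarantined record makes downstream remediation faster than a bare pass or fail flag.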

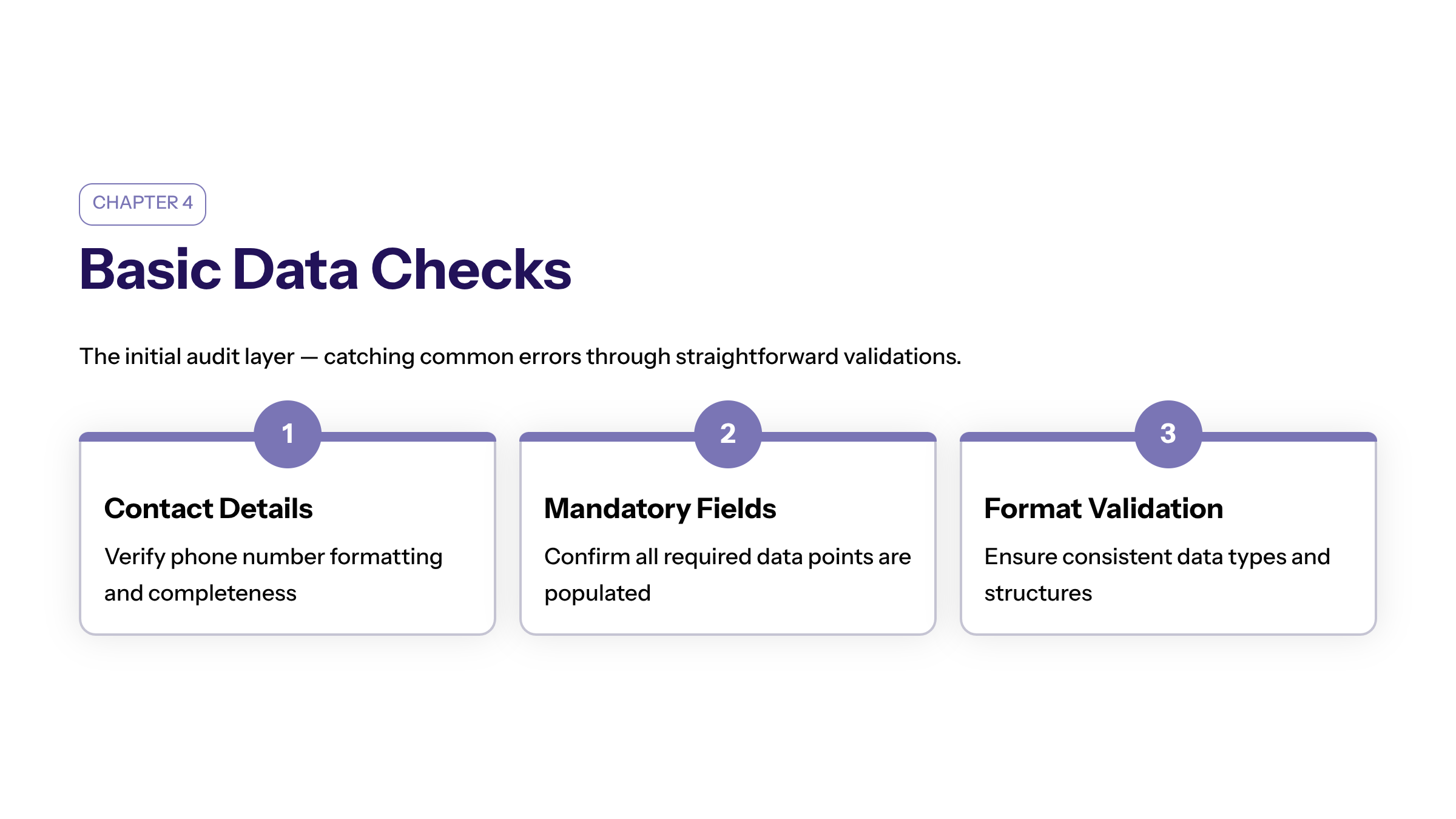

Conducting Basic Data Checks

Basic data checks form the initial layer of the audit, focusing on straightforward validations that catch common errors.

Key Basic Checks

Phone Numbers and Contact Details: Verify formatting and completeness to support customer engagement.

Mandatory Fields: Confirm all required data points are populated to avoid blank entries that compromise completeness.

Data Formats: Ensure consistency in date formats, currency symbols, and measurement units across datasets.

Blank and Invalid Entries: Identify and address missing or nonsensical values.

Duplicate Entries: Detect and remove redundant records to maintain uniqueness.

Implementation

These checks can be automated through scripts or data quality tools, enabling rapid assessment across large datasets. Basic checks establish a reliable foundation for more advanced validations.
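As one example of such a script, the sketch below profiles which date formats appear in a column and flags phone numbers that fail a format check. The two date patterns and the phone rule (a leading "+" followed by 8 to 15 digits) are assumptions; an organization would substitute its own standards.

```python
import re
from collections import Counter

DATE_PATTERNS = {
    "YYYY-MM-DD": re.compile(r"^\d{4}-\d{2}-\d{2}$"),
    "DD/MM/YYYY or MM/DD/YYYY": re.compile(r"^\d{2}/\d{2}/\d{4}$"),
}
# Assumed rule: "+" followed by 8 to 15 digits once separators are removed
PHONE_RE = re.compile(r"^\+\d{8,15}$")


def date_format_profile(values):
    """Count how many values match each known date pattern."""
    profile = Counter()
    for value in values:
        label = next(
            (name for name, rx in DATE_PATTERNS.items() if rx.match(value)),
            "unrecognized",
        )
        profile[label] += 1
    return profile


def invalid_phones(values):
    """Return phone values that fail the assumed format."""
    bad = []
    for value in values:
        compact = re.sub(r"[\s\-().]", "", value)  # drop common separators
        if not PHONE_RE.match(compact):
            bad.append(value)
    return bad


dates = ["2024-01-15", "15/01/2024", "2024-02-01", "Jan 5 2024"]
phones = ["+1 (415) 555-0100", "555-0100", "+44 20 7946 0958"]
profile = date_format_profile(dates)
bad = invalid_phones(phones)
print(dict(profile), bad)
```

A profile with more than one populated format is a quick signal that a column mixes conventions and needs standardizing.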

According to Gartner, “Basic data checks are essential but insufficient alone; they must be complemented by advanced data checks to ensure data is truly fit for purpose.”

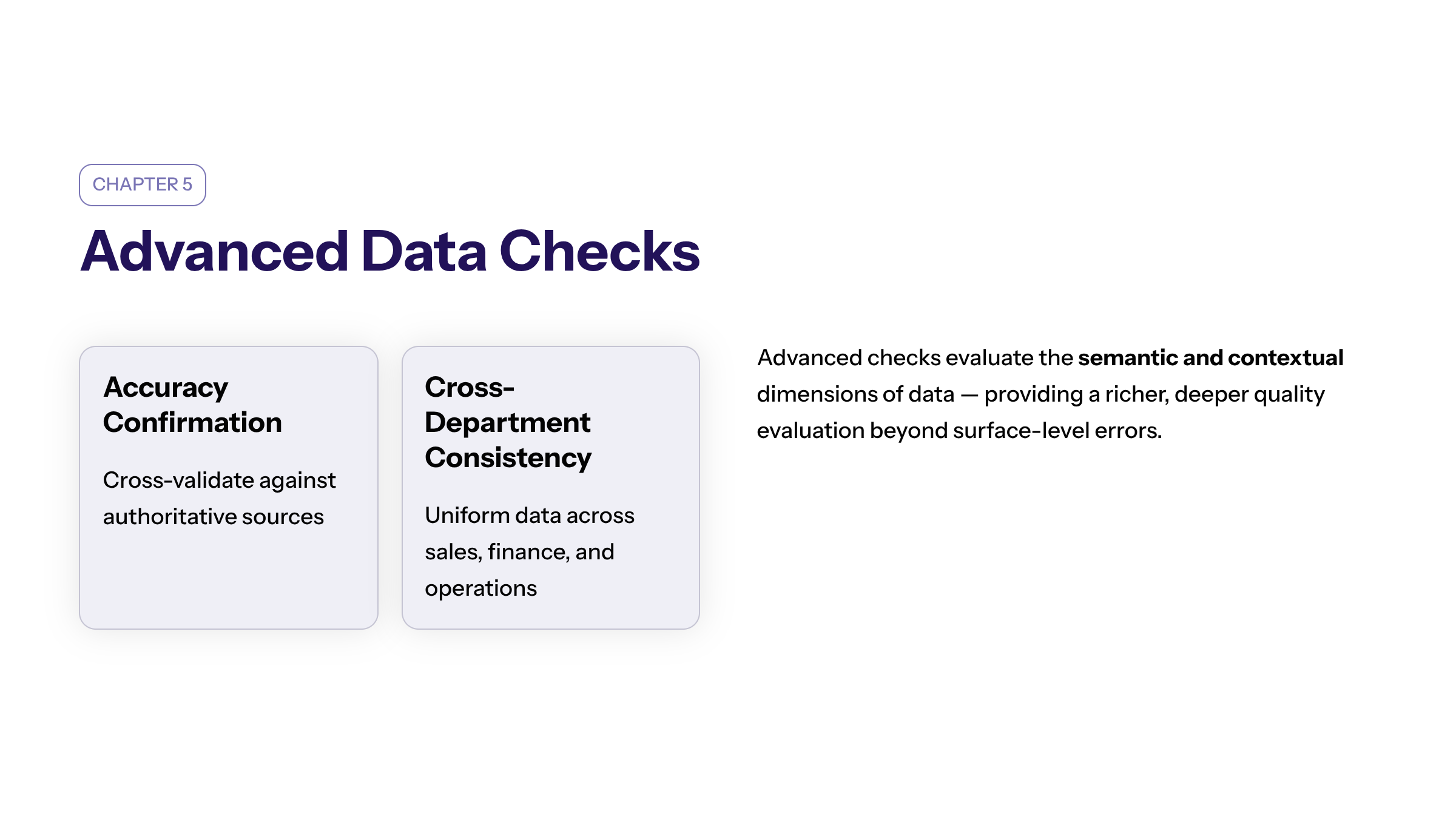

Conducting Advanced Data Checks

Advanced data checks delve deeper into the semantic and contextual aspects of data quality, providing a richer evaluation.

Types of Advanced Checks

Data Accuracy Confirmation: Cross-validate data points against authoritative sources to confirm correctness.

Consistency Across Departments: Ensure data uniformity in different business units, such as sales and finance, to support integrated reporting.

Relevance Assessment: Evaluate whether data remains pertinent to current business operations and objectives.

Timeliness Verification: Confirm that data reflects the most recent information, critical for operational responsiveness.

Compliance Validation: Check adherence to regulatory requirements, including data privacy and security standards.
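The cross-department consistency check can be sketched as a reconciliation between two systems. The example assumes each department exposes order amounts keyed by a shared order ID; the `reconcile` function, the sample IDs, and the tolerance are hypothetical.

```python
def reconcile(sales: dict[str, float], finance: dict[str, float], tolerance: float = 0.01):
    """Compare per-order amounts from two systems and report disagreements."""
    report = {"missing_in_finance": [], "missing_in_sales": [], "amount_mismatch": []}
    for order_id in sorted(sales.keys() | finance.keys()):
        if order_id not in finance:
            report["missing_in_finance"].append(order_id)
        elif order_id not in sales:
            report["missing_in_sales"].append(order_id)
        elif abs(sales[order_id] - finance[order_id]) > tolerance:
            report["amount_mismatch"].append(order_id)
    return report


sales = {"A-1": 100.00, "A-2": 250.00, "A-3": 75.50}
finance = {"A-1": 100.00, "A-2": 205.00, "A-4": 19.99}
report = reconcile(sales, finance)
print(report)
```

Separating missing records from mismatched amounts matters because the two usually have different root causes, such as a failed sync versus a data entry error.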

Tools and Techniques

Machine learning algorithms and AI-driven data profiling tools can identify complex anomalies and patterns indicative of quality issues. Data lineage traces the flow of data from its source to the reports that use it, documenting origins and transformations so that root causes are easier to identify.
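Production tools use far richer models, but the underlying idea of flagging anomalies can be illustrated with a simple statistical stand-in. The sketch below uses the modified z-score based on the median absolute deviation (MAD) rather than a machine learning model; the 3.5 threshold is a commonly cited default, and the sample row counts are made up.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds the threshold."""
    values = list(values)
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against; report nothing
    return [
        i
        for i, v in enumerate(values)
        if abs(0.6745 * (v - med) / mad) > threshold
    ]


# Hypothetical daily row counts for one table; day 5 looks like a failed load.
daily_row_counts = [1020, 998, 1005, 1011, 12, 1003, 995]
anomalies = mad_outliers(daily_row_counts)
print(anomalies)
```

Median-based statistics are used here because a single extreme value barely shifts them, whereas it would distort a mean and standard deviation.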

Case Study: Financial Services

A leading bank implemented advanced data checks integrating real-time monitoring of transaction data to detect anomalies, reduce fraud risk, and ensure compliance with anti-money laundering regulations. This proactive approach enhanced operational efficiency and customer trust.

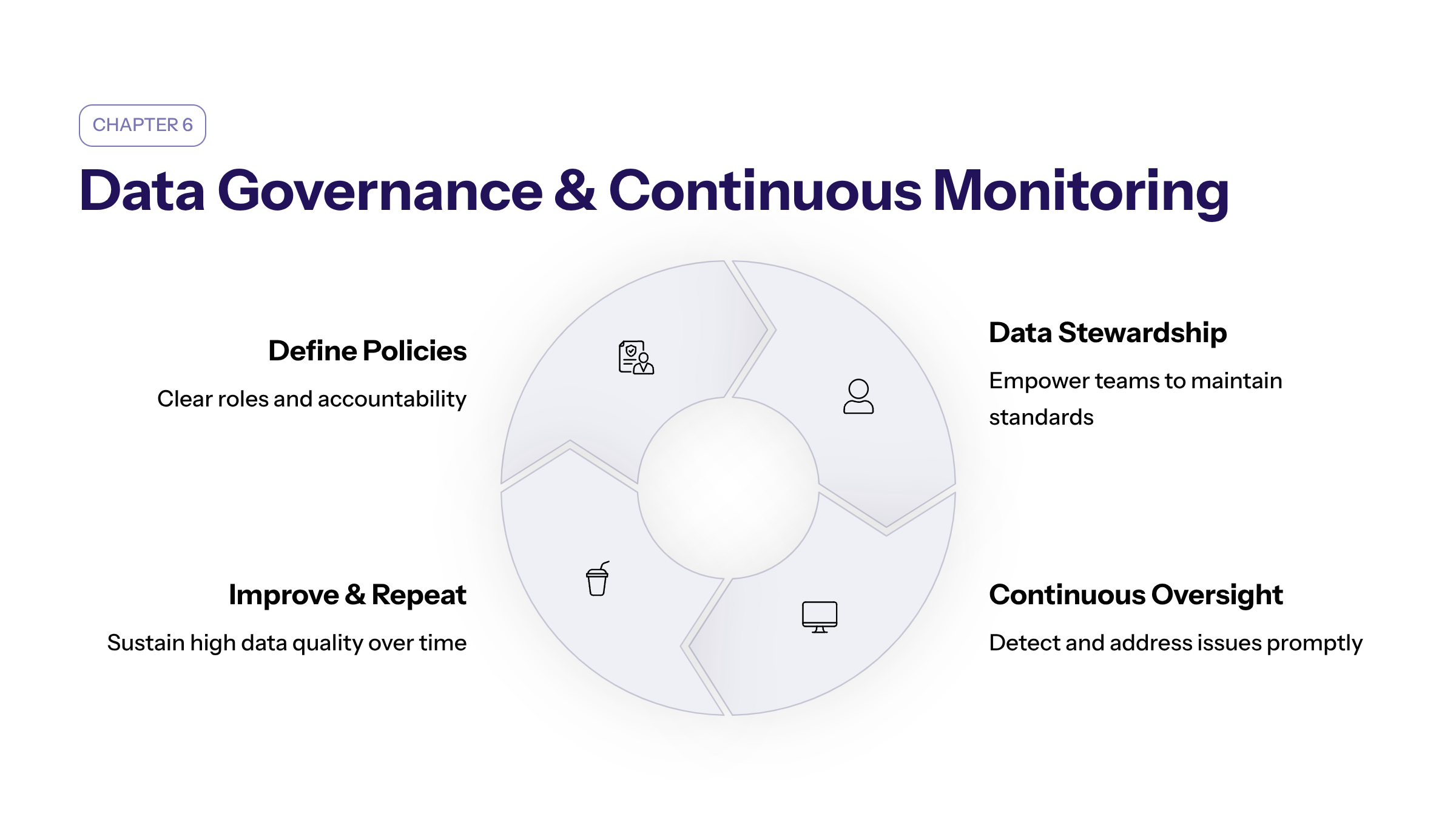

Establishing Data Governance and Continuous Monitoring

Sustaining high data quality requires embedding governance frameworks and continuous oversight.

Governance Protocols

Defining clear policies, roles, and accountability mechanisms ensures consistent data quality management. Data stewardship programs empower teams to maintain standards and address issues promptly.

Effective governance creates a culture of accountability, ensuring ongoing data quality improvements and alignment with organizational goals.

Continuous Monitoring

Automated monitoring tools provide real-time alerts on data quality deviations, enabling swift remediation. Dashboards track key performance indicators such as error rates and data completeness scores.
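A minimal sketch of such an alert, assuming each pipeline run records a dictionary of quality scores: the latest value of each metric is compared with the mean of the preceding runs, and a drop beyond a tolerance raises an alert. The window size, tolerance, and run identifiers are illustrative assumptions.

```python
from statistics import mean


def find_alerts(snapshots, window=3, max_drop=0.05):
    """Return (run_id, metric) pairs where a score fell below its recent baseline."""
    alerts = []
    for i, (run_id, metrics) in enumerate(snapshots):
        previous = snapshots[max(0, i - window):i]
        for name, value in metrics.items():
            history = [m[name] for _, m in previous if name in m]
            if not history:
                continue  # nothing to compare against yet
            if mean(history) - value > max_drop:
                alerts.append((run_id, name))
    return alerts


snapshots = [
    ("run-1", {"completeness": 0.97}),
    ("run-2", {"completeness": 0.98}),
    ("run-3", {"completeness": 0.96}),
    ("run-4", {"completeness": 0.82}),
]
alerts = find_alerts(snapshots)
print(alerts)
```

Comparing against a rolling baseline rather than a fixed threshold lets the same check apply to datasets whose normal quality levels differ.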

Continuous monitoring also shows when the data quality checklist itself needs to change, so that it keeps pace with organizational needs and remains effective against new challenges.

Benefits

Continuous governance and monitoring reduce data downtime, improve engineer productivity, and support scalable data operations aligned with business growth.

“Continuous data quality monitoring is the cornerstone of a resilient data strategy.” — Forrester Research

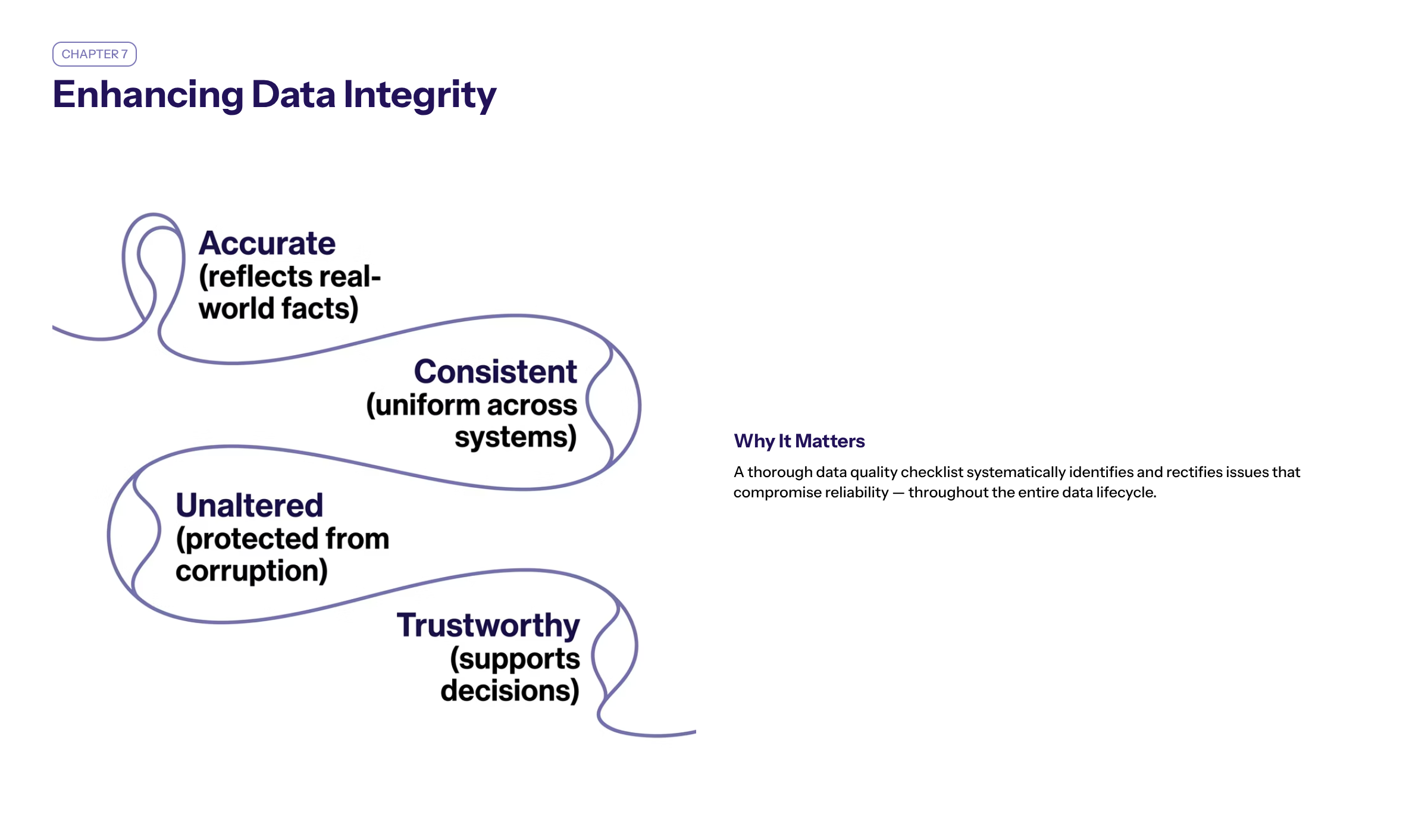

Enhancing Data Integrity Through a Comprehensive Data Quality Checklist

Maintaining data integrity is fundamental to the trustworthiness and usability of organizational data. A thorough data quality checklist serves as an effective tool to uphold this integrity by systematically identifying and rectifying issues that compromise data reliability.

Importance of Data Integrity

Data integrity ensures that information remains accurate, consistent, and unaltered throughout its lifecycle. Breaches in integrity, such as unauthorized changes or data corruption, can lead to flawed analyses and misguided business decisions.

Integrating Data Quality Checks for Integrity

Incorporating both basic and advanced data quality checks into the audit process helps detect anomalies like duplicate entries, inconsistent formats, and invalid values. Regularly validating data against established business rules and standards reinforces integrity across all systems.

Role of Duplicate Entries in Compromising Integrity

Duplicate entries not only inflate storage costs but also distort reporting and analytics. Identifying and eliminating these duplicates through deduplication techniques is vital to preserving data integrity and ensuring accurate insights.
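One simple deduplication technique is to compare records on a normalized key. The sketch below assumes customer records with hypothetical `name`, `email`, and `updated_at` fields, treats records as duplicates when name and email match after case and whitespace normalization, and keeps the most recently updated copy.

```python
def normalize_key(record):
    """Build a comparison key that ignores case and extra whitespace."""
    name = " ".join(record["name"].lower().split())
    email = record["email"].strip().lower()
    return (name, email)


def deduplicate(records):
    """Keep the most recently updated record per normalized key."""
    best = {}
    for rec in records:
        key = normalize_key(rec)
        # ISO 8601 date strings sort chronologically when compared as text
        if key not in best or rec["updated_at"] > best[key]["updated_at"]:
            best[key] = rec
    removed = len(records) - len(best)
    return list(best.values()), removed


records = [
    {"name": "Ada Lovelace", "email": "ada@example.com", "updated_at": "2024-01-10"},
    {"name": "ada  lovelace ", "email": "ADA@example.com ", "updated_at": "2024-03-02"},
    {"name": "Alan Turing", "email": "alan@example.com", "updated_at": "2024-02-14"},
]
unique, removed = deduplicate(records)
print(len(unique), removed)
```

Exact matching on normalized keys catches formatting variants only; near-duplicates such as misspelled names would need fuzzy matching, which is outside this sketch.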

Improving Data Accuracy with Targeted Data Quality Checks

Data accuracy reflects how well data represents real-world facts. Enhancing accuracy is a primary objective of any data quality audit and is achieved through targeted data quality checks.

Methods to Ensure Data Accuracy

Cross-referencing data points with trusted external sources, performing range and format validations, and conducting consistency checks across datasets are key practices to verify accuracy.
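Cross-referencing can be sketched as a field-by-field comparison against a trusted reference. The example assumes the reference is available as a dictionary keyed by a hypothetical `customer_id`; records with no reference entry are skipped rather than counted as wrong.

```python
def accuracy_against_reference(records, reference, key, fields):
    """Compare audited records with a trusted reference; return (rate, mismatches)."""
    checked = 0
    mismatches = []
    for rec in records:
        trusted = reference.get(rec[key])
        if trusted is None:
            continue  # no reference entry, so accuracy cannot be judged
        for field in fields:
            checked += 1
            if rec.get(field) != trusted.get(field):
                mismatches.append((rec[key], field, rec.get(field), trusted.get(field)))
    rate = 1.0 if checked == 0 else (checked - len(mismatches)) / checked
    return rate, mismatches


reference = {
    "C1": {"postcode": "10115", "country": "DE"},
    "C2": {"postcode": "75001", "country": "FR"},
}
records = [
    {"customer_id": "C1", "postcode": "10115", "country": "DE"},
    {"customer_id": "C2", "postcode": "75010", "country": "FR"},
    {"customer_id": "C9", "postcode": "00000", "country": "XX"},
]
rate, mismatches = accuracy_against_reference(
    records, reference, "customer_id", ["postcode", "country"]
)
print(rate, mismatches)
```

The mismatch list records both the audited and the trusted value, which gives reviewers what they need to decide which side to correct.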

Impact of Accurate Data on Business Operations

Accurate data supports precise reporting, informed decision-making, and regulatory compliance. It reduces risks associated with errors and builds confidence among stakeholders in the organization's data-driven initiatives.

Addressing Duplicate Entries to Boost Accuracy

Duplicate entries can lead to conflicting information and skewed analytics. Implementing automated duplicate detection and removal processes is essential to maintain high data accuracy levels.

Improving data quality can also affect the bottom line, both through improved sales and through fewer wasted resources.

Conclusion

Implementing a comprehensive data quality audit checklist is a strategic imperative for organizations seeking to harness the full value of their data assets. By combining meticulous planning, thorough data collection, and layered quality checks—from basic validations to advanced analytics—enterprises can achieve reliable, accurate data that underpins confident decision-making and operational excellence. Embedding governance and leveraging automation further ensure sustained data integrity in an evolving digital landscape.

Organizations investing in robust data quality audits position themselves to unlock actionable insights, enhance compliance, and drive measurable business outcomes in an increasingly competitive environment.

Regular reviews and updates keep the checklist aligned with business needs, ensuring data continues to support measurable outcomes and organizational success. Training and role assignments support effective implementation by fostering team alignment and accountability. Developing action plans to improve data management and quality is a critical part of the data quality audit process.

A data quality audit toolkit can help users assess the quality of reported data, making the audit process more efficient.

A well-implemented data quality checklist provides a reliable framework for sustained business success, enabling organizations to confidently navigate the complexities of modern data management.

Automated data quality checks and monitoring tools streamline the process, allow organizations to address issues before they affect decision-making, and improve the accuracy and reliability of the data used in business operations.