Mastering the GPT-OSS 120B Benchmark: A Comprehensive Evaluation Guide

In this guide, we explore the GPT OSS 120B benchmark, focusing on its architecture, performance, and practical evaluation methodology. Designed for developers and researchers, this article highlights the model's strengths, efficiency, and suitability within the landscape of open source models. We also discuss best practices for benchmarking and provide insights into how this model compares with other AI models in terms of reasoning, speed, and cost.

Key Takeaways

-

Efficiency and Strengths: GPT OSS 120B leverages a sparse Mixture-of-Experts approach with grouped query attention and rotary embeddings, requiring fewer active parameters per token to deliver competitive reasoning and coding capabilities.

-

Methodology and Practices: A rigorous, reproducible evaluation protocol is essential for fair comparison across API providers and deployment environments, ensuring consistent inference settings, context window handling, and cost accounting.

-

Developer-Focused Approach: With extended context windows supported by YaRN and flexible reasoning effort controls, GPT OSS 120B offers developers a powerful, efficient, and adaptable model suitable for diverse agentic workflows and applications.

Abstract

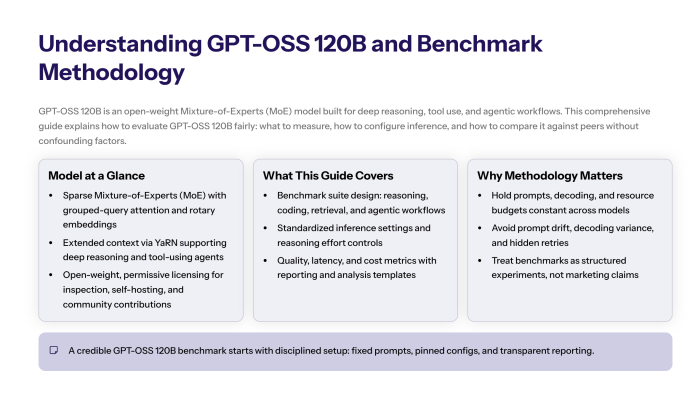

We present a practical, research-style protocol for evaluating GPT-OSS 120B, an open-weight Mixture-of-Experts (MoE) model optimized for deep reasoning and agentic workflows. The guide formalizes benchmark selection, inference settings, performance metrics, reporting standards, and cost modeling to enable a fair comparison across providers and deployments. We also include a step-by-step test plan, a lightweight statistical analysis template, and pitfalls to avoid when interpreting an answer quality signal.

1. Introduction to GPT-OSS

GPT-OSS is a family of open models engineered for high-accuracy reasoning, tool use, and structured outputs. The 120B variant couples sparse MoE capacity with practical speed controls (e.g., adjustable reasoning effort), making it suitable for research benchmarking and production trials.

Open-source governance and permissive licensing facilitate inspection, reproducibility, and community contributions. In parallel, enterprises may choose managed API providers for operational convenience; this document is neutral to deployment modality and focuses on measurement rigor.

Key premise. A credible evaluation must hold prompts, decoding, and resource budgets constant across models; only then do observed deltas reflect capability rather than environment.

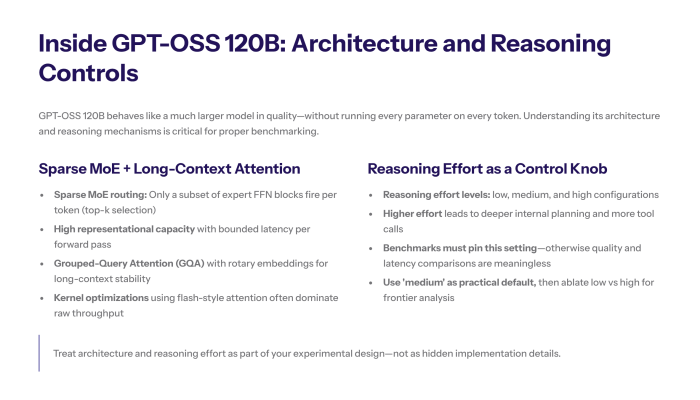

2. Architecture Primer: Why 120B Looks “Bigger Than It Runs”

Sparse MoE routing. A router activates a number of expert feed-forward blocks per token (e.g., top-k), so only a fraction of parameters participate in a forward pass. This yields high representational size (capacity) with bounded latency.

Attention stack. Grouped-Query Attention (GQA) and rotary position embeddings improve cache locality and long-context stability. Kernel-level optimizations (e.g., flash-style attention) often dominate raw inference throughput.

Reasoning effort. Exposed “low / medium / high” settings modulate depth of internal planning and tool-call frequency, trading speed for accuracy. Your benchmark must pin this control.

3. Evaluation Objectives and Threats to Validity

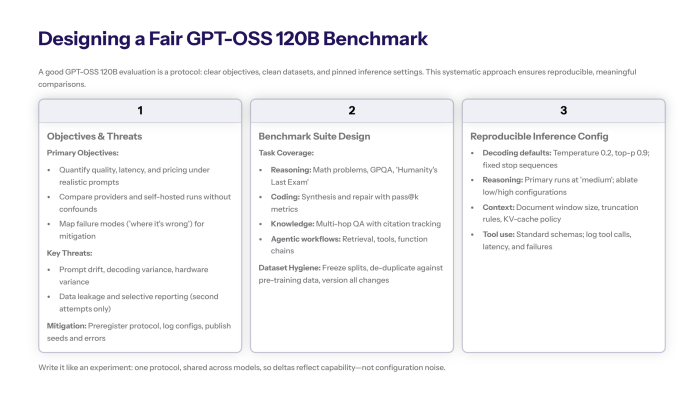

This section outlines the primary goals for evaluating GPT-OSS 120B and highlights potential challenges that can affect the validity of benchmarking results.

3.1 Objectives

-

Quantify task quality, latency, and pricing under realistic prompts.

-

Compare API providers and self-hosted runs without confounds.

-

Identify “where it’s wrong” (failure taxonomy) to guide mitigation.

3.2 Threats

-

Prompt drift (different system preambles or schemas).

-

Decoding variance (temperature, nucleus, max tokens not fixed).

-

Hardware variance (different accelerators or compiler stacks).

-

Data leakage (contaminated benchmarks inflate scores).

-

Selective reporting (omitting failures, retries, or “second” attempts).

-

Mitigation: preregister a follow-able protocol; check all knobs into a run card; publish seeds and errors.

4. Benchmark Suite Design

To effectively evaluate GPT-OSS 120B, a well-structured benchmark suite is essential. This suite covers diverse tasks that test the model’s reasoning, coding, knowledge, and agentic capabilities, ensuring a comprehensive assessment of its performance across real-world scenarios.

4.1 Task Coverage

-

Reasoning: math word problems (AIME-style), GPQA-Diamond, “Humanity’s Last Exam”-type composites.

-

Coding: program synthesis & repair with pass@k.

-

Knowledge & reading: multi-hop QA with citation checks.

-

Agentic workflows: retrieval, tool calls, and function execution chains.

4.2 Dataset Hygiene

-

Freeze splits and document sources; perform de-duplication against model pre-training snapshots where feasible.

-

Mark any changes (bug fixes, minor edits) and keep an updated changelog (“v0.3 – August study: new math seeds; typo corrections received”).

4.3 Ground Truth & Scoring

-

Exact match, semantic match (normalized), rubric-based grading for longform.

-

For structured outputs, enforce JSON Schema; record schema-pass rate alongside task scores.

5. Reproducible Inference Configuration

To ensure consistent and comparable evaluation results, it is crucial to standardize the inference configuration when testing GPT-OSS 120B. This section outlines the recommended decoding settings, reasoning effort levels, context window handling, and tool use protocols that form the foundation of a reproducible benchmarking process.

5.1 Decoding Defaults (pin these)

-

Temperature = 0.2 (reasoning), top-p = 0.9; for creative probes, note deviations.

-

Max output tokens: set by task; report truncations.

-

Stop sequences and system prompt fixed per task family.

5.2 Reasoning Effort

-

Primary runs at medium; ablate low/high to characterize the quality-latency frontier.

5.3 Context Window

-

If using extended context (e.g., 128k+), report effective start and end token positions, KV-cache policy, and truncation rules. Long-context results are based on attention kernel availability—document it.

5.4 Tool Use

-

For agentic trials, standardize tool schemas (browse, code, retrieval). Count tool calls, latency overhead, and failure handling.

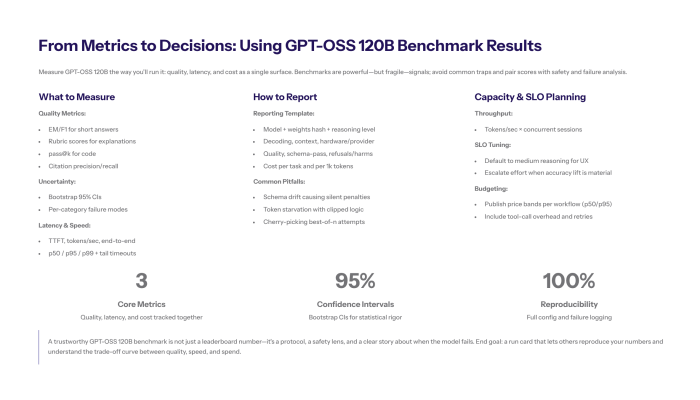

6. Metrics: Quality, Latency, Cost

To effectively evaluate the performance of GPT-OSS 120B, it is essential to define clear and comprehensive metrics. These metrics help quantify the model's accuracy, responsiveness, and operational cost, providing a holistic view of its capabilities in practical scenarios.

Section 6 outlines the key evaluation dimensions: quality of outputs, latency and speed of inference, and the associated pricing considerations. Together, these metrics enable developers and researchers to make informed decisions when comparing GPT-OSS 120B against other AI models and deployment options.

6.1 Quality

-

EM/F1 for short answers; rubric score for explanations; pass@k for code; citation precision/recall for retrieval.

-

Report uncertainty: bootstrap 95% CIs; publish per-category breakdowns (where it’s consistently wrong).

6.2 Latency & Speed

-

TTFT (time-to-first-token), tokens/sec (decode), end-to-end per task including tool overhead.

-

Publish p50/p95/p99; note close-to-timeout tail events.

6.3 Pricing

-

If using OpenAI-hosted or third-party API providers, compute effective cost per 1k tokens (input + output) and per successful task.

-

For self-hosted, include amortized hardware and power; state assumptions.

7. Step-by-Step Experimental Protocol (Minimal Friction)

-

Start: Freeze benchmark list, prompts, schemas, seeds.

-

Environment: Pin framework versions, kernels, and tokenizer.

-

Warmup: One dry run per task to compile/prime (exclude from results).

-

Primary runs: 3 seeds × medium reasoning; temperature 0.2.

-

Ablations: low/high reasoning; shorter/longer context; alternate sampling.

-

Validation: automatic graders + human spot checks on borderline cases.

-

Accounting: log tokens, TTFT, decode rate, tool calls, errors; capture information on retries.

-

Analysis: compute means, CIs, failure taxonomy, and “cost per correct answer.”

-

Report: include “what changed since last updated run”, and a “conclusion” section with decision recommendations.

8. Reporting Template (What to Publish)

-

Model: GPT-OSS 120B, commit/weights hash, reasoning level.

-

Decoding: temperature/top-p, max tokens, stop sequences.

-

Context: window, truncation policy, retrieval depth (if used).

-

Hardware / providers: accelerator type, memory, compiler; if via API providers, name and region.

-

Quality: task scores with CIs; schema-pass; refusal/harms filters.

-

Latency/Throughput: TTFT, tokens/sec, concurrency level.

-

Cost: cost per task; pricing assumptions.

-

Fail modes: frequent “wrong because …” patterns.

-

Replicability: seeds, logs, and a minimal example notebook.

9. Pitfalls and How to Avoid Them

-

Schema drift: tiny format shifts create silent penalties → lock JSON schemas and write a validator.

-

Token starvation: low max-new-tokens leads to clipped logic → set generous caps; inspect “truncated” flag.

-

Cherry-picking: reporting only the second attempt or best-of-n → disclose sampling policy.

-

Prompt leakage: accidental hints inflate scores → blind graders; sanitize clearly.

-

Apples-to-oranges comparisons: different kernels or batch policies → normalize or separate result tables.

10. Comparative Interpretation (Context Against Peers)

Use external baselines judiciously. When referencing closed systems (e.g., Gemini, Claude, proprietary GPT variants), ensure identical prompts and decoding. State that numbers are based on your setting; check for drift across API providers (rate limits, safety filters). Avoid over-generalizing a single suite to “overall intelligence.”

11. Cost & Capacity Planning

-

Throughput planning: tokens/sec × concurrent sessions determines service run rate.

-

SLO tuning: choose medium reasoning for interactive latency; escalate only when accuracy lift is material.

-

Budgeting: publish price bands (p50/p95) per workflow; include tool-call overhead and retries.

-

Versioning: each “updated” model drop can shift these curves—re-measure after receiving new weights.

12. Safety, Harms, and Refusals

Track refusal rate, FalseReject, and safety-policy adherence. Include adverse-content screens; note any “refuse when you should answer” cases. For production, pair evaluation with red-team prompts and monitoring.

13. Worked Micro-Study (Illustrative)

Objective. Compare low vs medium reasoning on math QA with a 16-question set.

Setup. Temperature 0.2, top-p 0.9, max output 512.

Hardware. Single high-memory GPU; same kernels.

Results (illustrative).

-

Accuracy: low = 67% (CI ±8%), medium = 76% (±7%).

-

TTFT: low = 0.9 s; medium = 1.3 s.

-

Tokens/sec: roughly equal; medium showed +1 tool call per 10 queries.

-

Cost delta: medium +12% per solved item.

Takeaway. Medium improves correctness at acceptable speed and pricing trade-offs for this workload.

14. Minimal Evaluation Pseudocode

def run_eval(model, tasks, reasoning="medium", temp=0.2, top_p=0.9):

log = []

for t in tasks:

prompt = render_harmony_prompt(t.system, t.user, t.schema)

m = model.generate(prompt, temperature=temp, top_p=top_p,

reasoning=reasoning, max_tokens=t.max_out)

log.append({

"task_id": t.id,

"seed": t.seed,

"ttft_ms": m.ttft_ms,

"tok_s": m.tokens_per_sec,

"schema_ok": validate_schema(m.text, t.schema),

"score": grade(m.text, t.gold),

"input_tokens": m.input_tokens,

"output_tokens": m.output_tokens,

"tool_calls": m.tool_calls

})

return summarize(log)Note. Always pass a fixed system prompt and stop sequences; print a compact table for peer review at the end.

15. Change Management and Version Control

Maintain a run ledger: model checksum, kernel version, tokenizer build, benchmark snapshot, and any updated instructions. Record what the evaluation received (e.g., new weights) and when. Label releases by date (e.g., “August run, v0.4”) to avoid ambiguity.

16. Frequently Asked Clarifications (Evidence-Based)

In this section, we address some common questions and concerns that arise when evaluating and working with GPT-OSS 120B. These clarifications are grounded in evidence and practical experience to help users better understand the model's behavior, benchmarking nuances, and deployment considerations.

Why does TTFT fluctuate across providers?

Cold-start compilation, queue depth, and safety policies differ by API providers; measure both warm and cold runs.

Is a larger size always better?

Not necessarily; MoE active params and decoding policy often dominate practical speed and cost.

How should I interpret differences in accuracy across benchmarks?

Benchmark accuracy can vary due to dataset composition, prompt phrasing, and evaluation criteria. Always consider confidence intervals and multiple runs for robust conclusions.

Can adjusting reasoning effort settings improve performance?

Yes. Increasing reasoning effort (e.g., from low to medium or high) typically enhances answer quality but may increase latency and cost. Balance these based on your application needs.

What impact does context window size have on model output?

Larger context windows enable the model to consider more information, which can improve reasoning on complex tasks. However, hardware and latency constraints may limit practical context size.

One example prompt looks perfect—can we generalize?

No. Report means with CIs across diverse seeds; single-item wins are not statistically persuasive.

17. Limitations

Benchmarks approximate reality but cannot capture full production complexity (user intent drift, tool latency variance, multilingual code-switching). Treat scores as directional, not definitive.

18. Conclusion

A trustworthy evaluation of GPT-OSS 120B demands disciplined control of prompts, decoding, hardware, and accounting. By standardizing reasoning level (medium as a practical default), logging full latency and pricing vectors, and publishing failure taxonomies (where it is wrong), teams can make sound “quality-vs-cost” decisions and run credible head-to-head studies across providers and deployments.

Appendix A: One-Page Run Card (Fields to Fill)

-

Model & weights hash; tokenizer version.

-

Reasoning: low / medium / high.

-

Decoding: temperature / top-p / max tokens.

-

Context: window, truncation, retrieval depth.

-

Environment: accelerator, kernel, batch policy.

-

Metrics: TTFT, tokens/sec, schema-pass, accuracy with CI, tool calls.

-

Pricing: per 1k tokens and per solved task.

-

Notes: changes, anomalies, information for replication.