The Role of Governance in Ethical Artificial Intelligence: A Guide for Organizations

Introduction to Artificial Intelligence and AI Governance

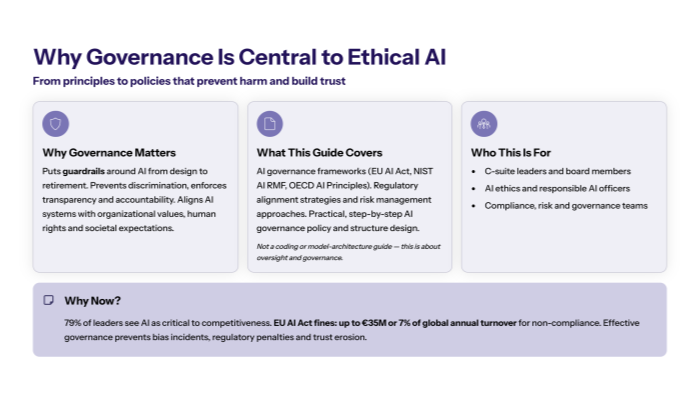

Governance plays a critical role in ensuring ethical AI implementation across organizations by establishing systematic frameworks that guide responsible AI development and deployment from design through operation. As artificial intelligence becomes central to business operations, AI governance frameworks prevent discrimination, ensure transparency, maintain accountability, and build stakeholder trust throughout the AI lifecycle.

Modern AI governance refers to comprehensive policies, procedures, and oversight mechanisms that ensure AI systems align with ethical AI principles, respect human rights, and uphold organizational and societal values while enabling innovation within ethical boundaries.

What This Guide Covers

This guide provides an in-depth look at the role of governance in ethical AI implementation. It covers AI governance frameworks such as the EU AI Act and the NIST AI Risk Management Framework, regulatory alignment strategies, and practical, step-by-step AI governance policy development. It also explores governance structures, risk management approaches, and challenge mitigation strategies for organizations deploying AI systems, including generative AI technologies.

What This Guide Isn’t

This guide does not delve into technical AI development details, programming languages, specific AI model architectures, or hands-on coding for AI algorithms.

Who This Is For

This guide is designed for C-suite executives, AI ethics officers, compliance managers, and governance teams responsible for AI oversight. Whether you’re establishing new AI governance frameworks or strengthening existing responsible AI governance structures, you’ll find actionable strategies for building AI governance programs that monitor AI systems and improve oversight over time.

Why AI Governance Is Important

Research shows 79% of leaders see AI as critical to competitiveness, yet AI adopted without governance can lead to costly incidents such as biased recruitment algorithms, discriminatory lending systems, and regulatory penalties. Under the EU AI Act, fines for the most serious violations reach up to €35 million or 7% of global annual turnover, whichever is higher. Effective AI governance aims to prevent these unintended consequences while maintaining innovation momentum and building stakeholder confidence.

What You’ll Learn:

How governance shapes ethical AI development and deployment throughout the AI lifecycle

Implementation of AI governance frameworks including OECD AI Principles and EU AI Act requirements

Step-by-step approaches for establishing responsible AI governance structures and policies

Solutions for common governance challenges including skills gaps, ethical dilemmas, and balancing innovation with oversight

Understanding the Essential Role of Governance in Ethical AI Implementation

Governance ensures that AI systems align with ethical AI principles, respect human dignity, and uphold societal values throughout the entire AI development and deployment process. It acts as a set of essential guardrails from initial AI project conception through model retirement, embedding ethical considerations into decision-making processes rather than treating ethics as an afterthought.

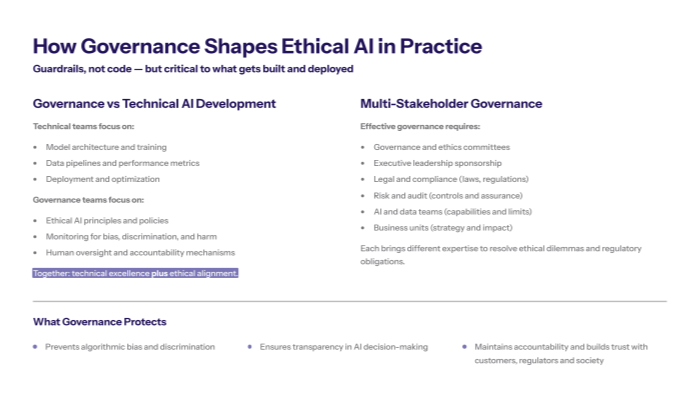

Governance serves multiple critical functions: preventing algorithmic bias and discrimination, ensuring transparency in AI decision-making, maintaining accountability for AI outcomes, and building trust among stakeholders including customers, regulators, and society. Without robust governance practices, organizations risk deploying AI technologies that cause unintended harm, resulting in regulatory penalties, reputational damage, and loss of public trust.

Governance vs. Technical AI Development and Deployment

Governance oversight differs fundamentally from technical AI engineering and development processes. While AI developers focus on model architecture, training data optimization, and performance metrics, governance teams establish ethical AI principles, monitor AI systems for compliance, and ensure human oversight mechanisms function effectively.

This distinction highlights how governance provides the ethical foundation and oversight framework upon which technical teams build and deploy AI systems, ensuring technical excellence aligns with ethical standards and respects human values.

Multi-Stakeholder Governance Approach in the Private Sector

Effective AI governance requires cross-functional collaboration involving governance committees, executive leadership, legal teams, audit functions, AI ethics committees, and business unit representatives. Each stakeholder brings essential expertise: legal teams ensure adherence to legal and regulatory requirements, risk teams assess potential risks and ethical concerns, technical teams understand AI capabilities and limitations, and business leaders align governance with strategic objectives and societal values.

Building on the distinction above: AI developers build the systems themselves, while governance draws on diverse expertise to address ethical dilemmas, regulatory compliance, and societal impact across different domains and use cases.

Understanding these governance roles provides the foundation for implementing specific AI governance frameworks that operationalize ethical principles into actionable requirements.

Key AI Governance Frameworks Driving Ethical AI Implementation

Organizations implementing ethical AI governance rely on established AI governance frameworks that translate broad ethical principles into specific requirements and actionable guidance for responsible AI development and deployment.

EU AI Act's Risk-Based Governance Approach and AI Regulations

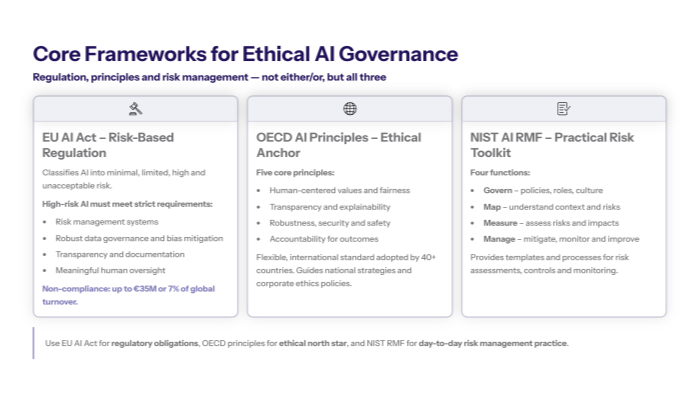

The 2024 EU AI Act establishes a comprehensive risk-based classification system categorizing AI applications into four risk levels: minimal risk (like AI-enabled video games), limited risk (chatbots requiring transparency), high-risk AI systems (affecting safety or fundamental rights), and unacceptable risk (social scoring systems that are prohibited entirely).

High-risk AI systems face stringent governance requirements including mandatory risk management systems, robust data governance ensuring training data quality and bias mitigation, transparency obligations with clear documentation, and human oversight requirements ensuring meaningful human control over AI decision-making. Organizations must implement these governance measures before deploying high-risk AI applications.

Non-compliance carries severe penalties: fines for the most serious violations reach €35 million or 7% of global annual turnover, whichever is higher. This makes adherence to AI regulations and governance policies a critical business imperative for organizations operating in EU markets.
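To make the scale of that exposure concrete, the following is a minimal sketch of the penalty ceiling arithmetic. It assumes the higher of the two amounts applies, as it does for undertakings under the Act, and it ignores the separate treatment of SMEs. The function and constant names are hypothetical, and the result is only an upper bound, not a prediction of an actual fine.

```python
from decimal import Decimal

# Figures quoted in this guide: EUR 35 million or 7% of global annual turnover.
FIXED_CAP_EUR = Decimal("35000000")
TURNOVER_RATE = Decimal("0.07")


def max_fine_exposure(global_annual_turnover_eur: Decimal) -> Decimal:
    """Return the upper bound of the fine for the most serious violations.

    Assumption: the 'whichever is higher' rule applies (no SME adjustment).
    """
    return max(FIXED_CAP_EUR, TURNOVER_RATE * global_annual_turnover_eur)


if __name__ == "__main__":
    # Illustrative turnover figures only, not data from any real company.
    for turnover in (Decimal("100000000"), Decimal("1000000000")):
        print(f"turnover EUR {turnover:,} -> ceiling EUR {max_fine_exposure(turnover):,.0f}")
```

For a company with €100 million in turnover the fixed €35 million cap dominates; at €1 billion the 7% rule takes over and the ceiling doubles to €70 million.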

OECD AI Principles for Trustworthy AI and Ethical Development

The OECD AI Principles provide broader ethical guidance adopted by 46 countries, establishing five core principles: AI should benefit people and the planet through inclusive growth, sustainable development, and well-being; AI systems should respect human-centered values and fairness; AI systems should be transparent and explainable; AI systems should be robust, secure, and safe; and there should be accountability for AI outcomes throughout the AI lifecycle.

These principles guide national AI strategies and provide organizations with internationally recognized standards for responsible AI governance. Unlike the EU’s prescriptive, risk-based regulatory approach, the OECD principles offer flexible, foundational guidance that organizations can adapt to their industry, scale, and risk profile while maintaining ethical integrity, respecting human rights, and staying aligned with international best practices and societal values.

NIST AI Risk Management Framework: Practical Tools for AI Governance Practices

The US NIST AI Risk Management Framework offers structured guidance through four core functions: Govern (establishing policies and oversight), Map (understanding AI risks and context), Measure (assessing and tracking AI risks with quantitative and qualitative methods), and Manage (mitigating identified risks through controls and monitoring).

This framework provides practical tools for American organizations and others seeking a structured risk management approach, with detailed guidance on conducting AI risk assessments, establishing governance structures, and implementing monitoring that keeps AI systems in compliance with AI governance policies.

Key Points:

EU AI Act mandates specific governance for high-risk AI with substantial financial penalties

OECD principles provide flexible international standards for responsible AI governance aligned with ethical AI principles

NIST framework offers practical risk management tools for systematic AI oversight and continuous monitoring of AI systems

These AI governance frameworks provide the foundation for practical implementation steps that organizations can follow to establish effective governance structures and policies.

Step-by-Step Implementation of Ethical AI Governance and AI Governance Policy Development

Moving from framework selection to operational governance requires systematic implementation that embeds ethical considerations into daily AI development and deployment workflows while maintaining innovation momentum and regulatory compliance.

Step-by-Step: Establishing AI Ethics Governance Structure and Policy

When to use this: Organizations beginning AI adoption or strengthening existing governance for more comprehensive oversight of AI systems and technologies, including generative AI initiatives.

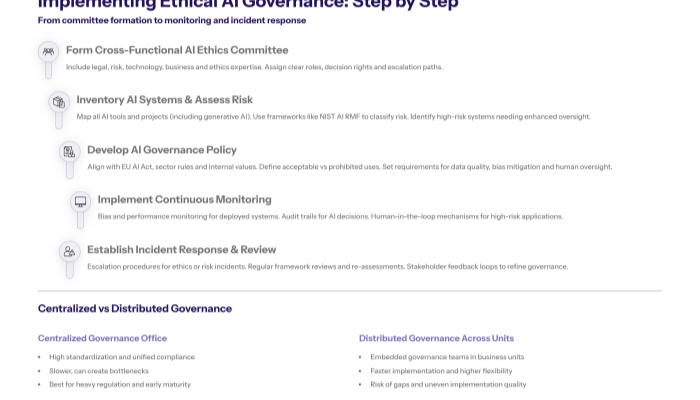

1. Form a Cross-Functional AI Ethics Committee: Establish a governance committee that includes legal counsel (regulatory frameworks expertise), risk management (AI risk assessments and ethical concerns), technology leadership (AI development and deployment understanding), business representatives (operational impact), and ethics expertise (responsible AI principles). Assign clear roles and decision-making authority.

2. Conduct a Comprehensive AI Inventory and Risk Assessment: Map existing AI applications, AI tools, and planned AI projects using frameworks like the NIST AI Risk Management Framework. Identify high-risk AI systems requiring enhanced governance, assess potential bias in training data, and evaluate human oversight requirements.

3. Develop an AI Ethics Governance Policy Aligned with Regulations: Create a governance policy incorporating EU AI Act requirements (if applicable), industry-specific ethical guidelines, responsible AI principles, and clear procedures for AI development and deployment. Define acceptable AI applications and prohibited uses, emphasizing respect for human dignity and human values.

4. Implement Continuous Monitoring of AI Systems: Deploy technical controls for bias detection in AI algorithms, establish performance monitoring for deployed AI systems, create audit trails for AI decision-making processes, and implement human oversight mechanisms for high-risk applications.

5. Establish Incident Response and Review Procedures: Create escalation procedures for AI ethics concerns, implement regular governance framework reviews, establish stakeholder feedback mechanisms, and schedule periodic AI risk reassessments as AI technologies evolve.
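Governance teams do not need to build monitoring themselves, but it helps to know what a bias detection control actually computes. The sketch below shows one common check, the disparate impact ratio: the lowest group selection rate divided by the highest. The 0.8 threshold is an assumption borrowed from the "four-fifths" heuristic used in US employment selection guidance; it is not prescribed by this guide or by any framework discussed here, and all function names are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of positive outcomes per group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome, so rates are equal
    return min(rates.values()) / highest


def needs_human_review(records, threshold=0.8):
    """Flag the system for human oversight when the ratio falls below threshold.

    Assumption: 0.8 is an illustrative default, to be set by the committee.
    """
    return disparate_impact_ratio(records) < threshold


if __name__ == "__main__":
    # Synthetic example data, not results from any real system.
    decisions = (
        [("group_a", True)] * 8 + [("group_a", False)] * 2
        + [("group_b", True)] * 4 + [("group_b", False)] * 6
    )
    print(selection_rates(decisions))
    print(needs_human_review(decisions))
```

A single ratio is a screening signal rather than a verdict: a flagged system would go to the escalation path from the incident response step, where people with context decide what the disparity means.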

Comparison: Centralized vs. Distributed Governance Models in the Private Sector

| Feature | Centralized Governance Office | Distributed Governance Across Units |
|---|---|---|
| Oversight Consistency | High standardization across all AI projects | Variable implementation quality |
| Implementation Speed | Slower due to centralized bottlenecks | Faster with embedded governance teams |
| Resource Requirements | Higher initial investment, specialized staff | Lower per-unit cost, existing resources |
| Regulatory Compliance | Strong unified compliance approach | Risk of compliance gaps across units |
| Innovation Flexibility | Structured but potentially restrictive | Higher flexibility, innovation-focused |

Organizations should choose centralized governance for heavily regulated environments (for example, where EU AI Act compliance is required) and distributed models for innovation-focused environments with lighter regulatory requirements, taking into account their AI maturity level and available governance expertise.

Even well-designed AI governance frameworks face common implementation challenges that organizations must proactively address to improve AI governance.

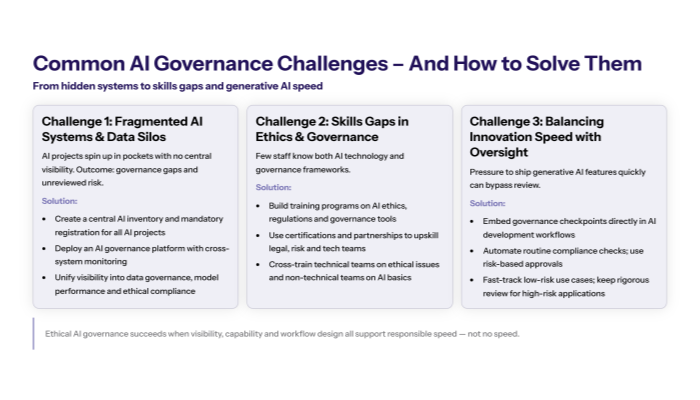

Common AI Governance Implementation Challenges and Solutions

Organizations implementing ethical AI governance consistently encounter obstacles that can undermine even well-designed frameworks, requiring targeted solutions that maintain both ethical integrity and operational effectiveness.

Challenge 1: Fragmented AI Systems and Data Silos

Organizations often discover AI applications deployed across departments without centralized visibility, creating governance gaps where AI systems operate without proper oversight or ethical review.

Solution: Implement a centralized AI governance platform that provides unified oversight across departments and AI applications. This includes creating a comprehensive AI inventory, establishing a mandatory registration process for all AI projects, and implementing cross-system monitoring that provides visibility into data governance, model performance, and ethical compliance across the organization.
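As an illustration of what a central inventory with mandatory registration might track, here is a minimal in-memory sketch. The record fields and the class and method names are hypothetical; a real governance platform would persist records, enforce access control, and integrate with deployment tooling.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AISystemRecord:
    """One entry in the inventory (fields are illustrative assumptions)."""
    name: str
    owner_department: str
    purpose: str
    last_ethics_review: Optional[date] = None


class AIInventory:
    """Single registry giving cross-department visibility into AI systems."""

    def __init__(self):
        self._records = {}

    def register(self, record):
        # Registration is mandatory and names must be unique.
        if record.name in self._records:
            raise ValueError(f"{record.name!r} is already registered")
        self._records[record.name] = record

    def is_registered(self, name):
        # A deployment gate could call this before allowing a release.
        return name in self._records

    def unreviewed(self):
        """Names of systems that have never had an ethical review."""
        return sorted(
            r.name for r in self._records.values() if r.last_ethics_review is None
        )

    def by_department(self):
        """Group system names by owning department to expose silos."""
        grouped = {}
        for record in self._records.values():
            grouped.setdefault(record.owner_department, []).append(record.name)
        return {dept: sorted(names) for dept, names in grouped.items()}
```

Even a simple registry like this answers the two questions that fragmented deployments leave open: which AI systems exist, and which of them have never been reviewed.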

Challenge 2: Skills Gaps in AI Ethics and Governance

Most organizations lack sufficient expertise in AI ethics, responsible AI development, and governance framework implementation, limiting their ability to establish effective oversight mechanisms.

Solution: Develop comprehensive training programs combining AI ethics education with hands-on governance tool usage. Focus on upskilling existing teams through partnerships with ethics organizations, certification programs in responsible AI governance, and cross-training initiatives that help legal, risk, and business teams understand AI implications while teaching technical teams about ethical concerns and ethical development practices.

Challenge 3: Balancing Innovation Speed with Governance Oversight in Generative AI Initiatives

Organizations struggle to maintain innovation momentum while ensuring thorough ethical review, especially in competitive environments where rapid AI deployment provides business advantages.

Solution: Embed governance checkpoints into AI development workflows using automated monitoring and risk-based approval processes. Implement governance-by-design approaches that integrate ethical AI principles from project inception, automate routine compliance checks, and establish fast-track approval procedures for low-risk AI applications while maintaining rigorous review for high-risk systems, including generative AI models.
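A risk-based approval process can be sketched as a simple routing table. The example below reuses the four EU AI Act risk tiers described earlier in this guide; the review path names, the tier-to-path mapping, and the rule that generative AI projects never take the fast track are all assumptions made for illustration, not requirements of any regulation.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


class ReviewPath(Enum):
    FAST_TRACK = "fast-track"          # automated checks only
    STANDARD = "standard"              # single reviewer sign-off
    FULL_COMMITTEE = "full-committee"  # AI ethics committee review
    REJECT = "reject"                  # prohibited use, do not proceed


# Hypothetical routing policy; each organization would define its own.
ROUTING = {
    RiskTier.MINIMAL: ReviewPath.FAST_TRACK,
    RiskTier.LIMITED: ReviewPath.STANDARD,
    RiskTier.HIGH: ReviewPath.FULL_COMMITTEE,
    RiskTier.UNACCEPTABLE: ReviewPath.REJECT,
}


def route_for_review(tier, is_generative=False):
    """Pick the governance checkpoint for a proposed AI project."""
    path = ROUTING[tier]
    # Assumption: generative AI projects always get at least a human sign-off.
    if is_generative and path is ReviewPath.FAST_TRACK:
        return ReviewPath.STANDARD
    return path


if __name__ == "__main__":
    print(route_for_review(RiskTier.MINIMAL).value)
    print(route_for_review(RiskTier.MINIMAL, is_generative=True).value)
    print(route_for_review(RiskTier.HIGH).value)
```

Keeping the policy in one table makes it easy for the governance committee to review and change the rules without touching the workflow that applies them.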

Successfully addressing these challenges enables organizations to realize the full benefits of ethical AI governance while maintaining competitive advantages and respecting human rights.

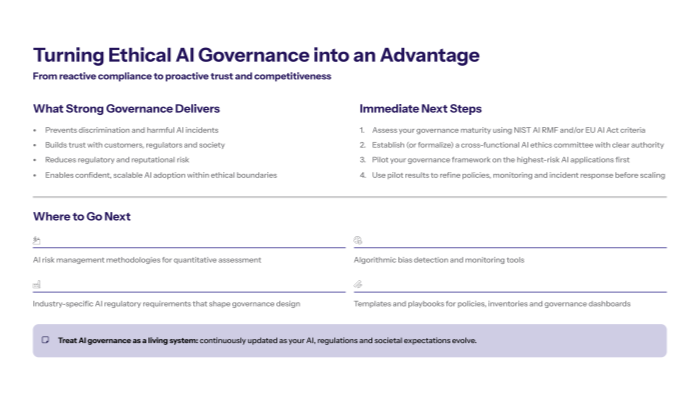

Conclusion and Next Steps: Strengthening AI Governance Policy and Practices

Governance plays an indispensable role in ethical AI implementation, providing the systematic frameworks that enable responsible AI development while preventing discrimination, ensuring transparency, and building stakeholder trust. Effective AI governance turns a compliance burden into a competitive advantage by enabling confident AI adoption within ethical boundaries.

To get started:

Assess your current AI governance maturity against established frameworks such as the NIST AI Risk Management Framework or the EU AI Act requirements to identify gaps in your existing oversight capabilities.

Establish a cross-functional AI ethics committee with clear roles spanning legal, risk, technology, and business expertise to provide comprehensive governance oversight aligned with regulatory frameworks and ethical AI principles.

Pilot governance on your highest-risk AI applications before scaling organization-wide, so you can refine processes and demonstrate value in monitoring AI systems and managing potential risks.

Related Topics: Consider exploring AI risk management methodologies for quantitative assessment approaches, algorithmic bias detection tools for technical implementation, and industry-specific AI regulatory compliance requirements that may affect your governance framework design.