AI Governance Frameworks for Real-Time Risk and Compliance

Introduction to Responsible AI Governance and AI Systems

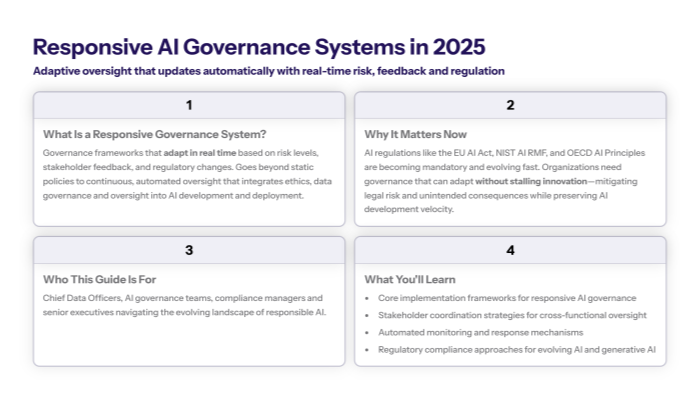

A responsive AI governance system is an adaptive framework that automatically adjusts AI oversight based on real-time risk assessment, stakeholder feedback, and evolving regulatory requirements. Unlike static AI governance practices, responsive systems let organizations maintain continuous compliance with regulations such as the EU AI Act while adapting to emerging risks as AI systems operate and evolve.

This comprehensive approach addresses the critical need for organizations to govern AI systems that can evolve and respond to changing regulatory landscapes without compromising innovation velocity. It emphasizes responsible AI governance by integrating ethical considerations, data governance, and oversight mechanisms into AI development and deployment processes.

What This Guide Covers

This guide provides complete implementation frameworks for responsive AI governance programs, including stakeholder coordination protocols, automated continuous monitoring systems, and regulatory compliance strategies aligned with key principles such as the OECD AI Principles. It covers technical architecture requirements, policy automation frameworks, and proven deployment methodologies for organizations managing diverse AI portfolios across multiple jurisdictions.

Who This Is For

This guide is designed for Chief Data Officers, AI governance teams, compliance managers, and senior executives responsible for implementing adaptive AI oversight systems in enterprise organizations. Whether you’re establishing your first comprehensive AI governance framework or upgrading existing governance practices to responsive models, you’ll find practical strategies for effective AI governance implementation that ensure trustworthy AI outcomes.

Why This Matters

Responsive governance systems enable real-time AI risk management and regulatory adaptation while maintaining stakeholder trust and innovation velocity. As regulations like the EU AI Act impose binding obligations, and as voluntary frameworks such as the NIST AI Risk Management Framework become standard reference points, organizations need governance structures that can adapt to new requirements without disrupting AI development and deployment. This approach reduces the legal risks and unintended consequences associated with AI and ML model use, and supports alignment with organizational values and applicable data protection laws.

What You’ll Learn:

Core implementation frameworks for responsive AI governance systems

Stakeholder coordination strategies for cross-functional governance structures

Automated continuous monitoring and response mechanisms for AI oversight

Regulatory compliance approaches for evolving AI governance requirements, including generative AI considerations

Understanding Responsive AI Governance Systems and Their Role in AI Development

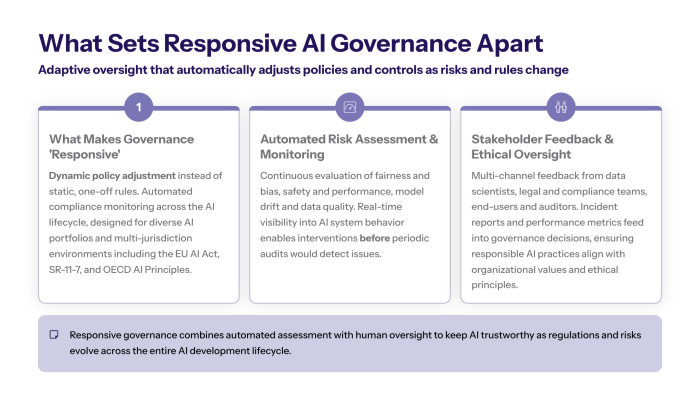

Responsive AI governance systems represent adaptive governance frameworks that automatically adjust AI oversight based on real-time risk assessment, stakeholder feedback, and regulatory changes. These systems differ fundamentally from traditional static governance models through dynamic policy adjustment capabilities and automated compliance monitoring that responds to changing conditions across the AI lifecycle.

AI governance encompasses not only risk management frameworks but also ethical guidelines and data governance policies that ensure AI systems operate transparently and ethically. Responsible AI governance is important for fostering trustworthiness and fairness in AI technologies, addressing ethical concerns such as bias mitigation and privacy preservation.

These frameworks enable organizations to maintain effective AI governance while accommodating the rapidly evolving AI landscape. Rather than requiring manual policy updates when regulations change or new AI risks emerge, responsive systems automatically adapt governance practices to ensure continued compliance and risk management.

Responsive systems matter most for organizations managing diverse AI portfolios across multiple jurisdictions, particularly given evolving regulations like the EU AI Act, sector-specific requirements such as the US Federal Reserve's SR 11-7 guidance on model risk management for financial services, and international standards reflected in the OECD AI Principles.

Core Components of Responsive AI Governance Systems

Automated risk assessment engines continuously evaluate AI model development and AI system performance against fairness, bias, and safety metrics, providing real-time visibility into how AI systems operate and the risks they pose. These engines monitor training data quality, model drift, and outcome disparities across demographic groups to identify governance issues before they affect AI outcomes.

This connects to responsive governance because automated assessment enables real-time governance adjustments without manual oversight delays, allowing organizations to address AI risks immediately rather than waiting for periodic reviews or audit cycles. Continuous monitoring is essential for managing high risk AI systems and ensuring explainable AI processes are upheld.
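As a rough illustration of what such an engine might compute, the sketch below checks two of the signals mentioned above: outcome disparity across demographic groups (as a demographic parity gap) and drift (as a population stability index over binned score distributions). The function names, the choice of these two metrics, and the thresholds `max_gap=0.2` and `max_psi=0.25` are assumptions made for the example, not values prescribed by any regulation or by this guide.

```python
import math
from collections import defaultdict


def selection_rates(outcomes, groups):
    """Return the share of positive outcomes (1) for each demographic group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(outcomes, groups):
    """Largest difference in positive-outcome rate between any two groups."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


def population_stability_index(baseline, current, eps=1e-6):
    """PSI between two binned distributions given as lists of proportions."""
    return sum(
        (cur - base) * math.log((cur + eps) / (base + eps))
        for base, cur in zip(baseline, current)
    )


def assess_model(outcomes, groups, baseline_bins, current_bins,
                 max_gap=0.2, max_psi=0.25):
    """Flag fairness and drift findings; the thresholds are illustrative assumptions."""
    gap = demographic_parity_gap(outcomes, groups)
    psi = population_stability_index(baseline_bins, current_bins)
    findings = []
    if gap > max_gap:
        findings.append("outcome_disparity")
    if psi > max_psi:
        findings.append("model_drift")
    return {"parity_gap": gap, "psi": psi, "findings": findings}


# Hypothetical data: group "a" is approved far more often than group "b",
# and the current score distribution has shifted away from the baseline.
report = assess_model(
    outcomes=[1, 1, 1, 0, 1, 0, 0, 0],
    groups=["a", "a", "a", "a", "b", "b", "b", "b"],
    baseline_bins=[0.5, 0.5],
    current_bins=[0.9, 0.1],
)
print(report["findings"])
```

In practice the findings list would feed a governance workflow, so that a flagged model is reviewed when the finding appears instead of at the next audit cycle.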

Stakeholder Feedback Integration and Ethical Development

Multi-channel feedback systems incorporate input from data scientists, legal teams, end-users, and external auditors to ensure comprehensive oversight of AI systems development and deployment. These systems collect structured feedback through automated surveys, incident reports, and performance metrics to inform governance decisions.

Building on automated assessment capabilities, stakeholder integration provides human oversight validation for governance decisions while ensuring that responsible AI practices incorporate diverse perspectives on AI ethics and risk management. This approach supports ethical AI systems development aligned with organizational values and ethical principles.

Understanding these foundational concepts enables organizations to design the technical architecture required for responsive governance implementation.

Implementation Architecture and Technical Foundation for Trustworthy AI

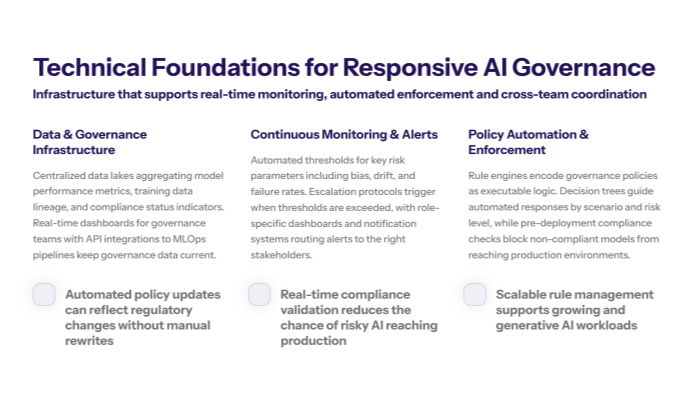

Implementing responsive governance systems requires robust technical infrastructure that supports real-time monitoring, automated policy enforcement, and dynamic stakeholder coordination across distributed AI environments.

Data Infrastructure Requirements for AI Systems

Centralized data lakes aggregate governance data from across AI systems, including model performance metrics, training data lineage, and compliance status indicators. Real-time monitoring dashboards give governance teams continuous visibility into risk indicators and compliance with AI governance policies. API integration enables data to flow between MLOps pipelines and governance systems, so that governance data remains current and actionable.

These infrastructure components support responsive governance by enabling automated data collection and analysis that would be impossible through manual processes. Effective data governance is critical for maintaining data quality, data privacy, and compliance with applicable data protection laws.
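One way to picture the data flowing from MLOps pipelines into a governance store is a small record type plus a freshness check. The sketch below is a minimal in-memory version; the `GovernanceRecord` fields, the function names, and the 24-hour freshness window are all hypothetical choices for illustration, and a real deployment would read from the data lake instead of a Python list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class GovernanceRecord:
    """One governance snapshot pushed by an MLOps pipeline (hypothetical schema)."""
    model_id: str
    recorded_at: datetime
    metrics: dict            # e.g. {"accuracy": 0.91}
    lineage: tuple           # identifiers of the training datasets used
    compliance_status: str   # e.g. "compliant", "under_review"


def latest_per_model(records):
    """Keep only the most recent record for each model."""
    latest = {}
    for record in records:
        current = latest.get(record.model_id)
        if current is None or record.recorded_at > current.recorded_at:
            latest[record.model_id] = record
    return latest


def stale_models(records, now, max_age=timedelta(hours=24)):
    """Return the models whose newest record is older than max_age."""
    return sorted(
        model_id
        for model_id, record in latest_per_model(records).items()
        if now - record.recorded_at > max_age
    )


utc = timezone.utc
records = [
    GovernanceRecord("m1", datetime(2023, 12, 30, tzinfo=utc), {}, ("ds-1",), "under_review"),
    GovernanceRecord("m1", datetime(2024, 1, 2, 6, tzinfo=utc), {}, ("ds-1",), "compliant"),
    GovernanceRecord("m2", datetime(2023, 12, 31, tzinfo=utc), {}, ("ds-2",), "compliant"),
]
print(stale_models(records, now=datetime(2024, 1, 2, 12, tzinfo=utc)))
```

Passing `now` explicitly keeps the check deterministic and easy to test; a scheduler would supply the current UTC time.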

Monitoring and Alert Systems for Continuous Oversight

Automated threshold monitoring continuously evaluates AI or ML models against predefined risk parameters, triggering escalation protocols when thresholds are exceeded. Dashboard visualizations present complex governance data in accessible formats for different stakeholder groups, from technical teams to executive leadership. Notification systems automatically alert relevant stakeholders when governance interventions are required.

Unlike static monitoring approaches, responsive systems trigger automatic policy adjustments based on predefined risk thresholds rather than simply reporting issues for manual resolution. This ensures that AI systems evolve safely and remain aligned with ethical guidelines and legal requirements.
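The difference between reporting and responding can be sketched as a threshold check that returns an action, not just a message. The example below assumes two levels per metric (a warning level that notifies the owner and a critical level that triggers an automatic action); the `Threshold` type, the action names, and the sample values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    warn: float       # at or above this value, notify stakeholders
    critical: float   # at or above this value, trigger an automatic policy action


def evaluate(model_id, metrics, thresholds):
    """Compare reported metrics with thresholds and return the resulting actions."""
    actions = []
    for threshold in thresholds:
        value = metrics.get(threshold.metric)
        if value is None:
            continue  # metric not reported in this monitoring cycle
        if value >= threshold.critical:
            level, action = "critical", "pause_and_escalate"
        elif value >= threshold.warn:
            level, action = "warning", "notify_owner"
        else:
            continue  # within tolerance, nothing to do
        actions.append({"model": model_id, "metric": threshold.metric,
                        "level": level, "action": action})
    return actions


# Hypothetical thresholds and readings for one model.
thresholds = [
    Threshold("parity_gap", warn=0.10, critical=0.20),
    Threshold("drift_psi", warn=0.10, critical=0.25),
]
actions = evaluate("credit-scoring-v3", {"parity_gap": 0.22, "drift_psi": 0.12}, thresholds)
for item in actions:
    print(item)
```

Keeping the thresholds as data means a governance team can tighten or relax them without changing the monitoring code.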

Policy Automation Framework Supporting AI Deployment

Rule engines codify governance policies as executable logic that can automatically enforce compliance requirements across AI systems. Decision trees guide automated responses to different risk scenarios, ensuring consistent governance practices. Automated compliance checking validates AI systems against applicable regulations and organizational policies before deployment.

Key Points:

Automated policy updates respond to regulatory changes without manual intervention

Real-time compliance validation prevents non-compliant AI systems from reaching production

Integration with existing enterprise systems leverages established IT infrastructure

Scalable rule management accommodates growing AI portfolios and generative AI technologies
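A rule engine in this sense can be as small as a list of rules, each with a condition for when it applies and a check it must pass. The sketch below shows a pre-deployment gate built that way. The two rules, the metadata keys (`risk_tier`, `oversight_owner`, `data_lineage`), and the function name `deployment_gate` are hypothetical and stand in for whatever an organization's own policies require.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    applies: Callable[[dict], bool]   # does this rule cover the model?
    check: Callable[[dict], bool]     # does the model satisfy the rule?


# Illustrative policies expressed as executable logic.
RULES = [
    Rule(
        "R1",
        "High-risk systems need a named human oversight owner",
        applies=lambda model: model.get("risk_tier") == "high",
        check=lambda model: bool(model.get("oversight_owner")),
    ),
    Rule(
        "R2",
        "Every model must record its training data lineage",
        applies=lambda model: True,
        check=lambda model: bool(model.get("data_lineage")),
    ),
]


def deployment_gate(model, rules=RULES):
    """Return (approved, violated_rule_ids) for a model's metadata."""
    violations = [
        rule.rule_id for rule in rules
        if rule.applies(model) and not rule.check(model)
    ]
    return (not violations, violations)


candidate = {"name": "resume-screener", "risk_tier": "high", "data_lineage": ["ds-7"]}
approved, violations = deployment_gate(candidate)
print(approved, violations)
```

Because rules are plain data, responding to a regulatory change amounts to adding or editing entries in `RULES`; the gate itself does not change.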

With technical foundations established, organizations can follow structured implementation processes to deploy responsive governance capabilities.

Step-by-Step Implementation Process for a Responsive AI Governance Program

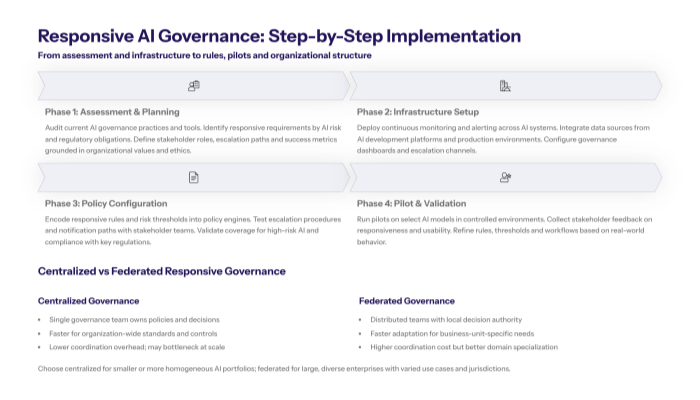

Building on the technical foundation requirements, organizations need systematic deployment processes that establish responsive governance capabilities while maintaining existing AI operations and ensuring stakeholder alignment throughout implementation.

Step-by-Step: Responsive Governance Deployment for AI Systems

When to use this: Organizations with mature AI portfolios requiring dynamic oversight capabilities across multiple AI applications and regulatory jurisdictions.

Phase 1 - Assessment and Planning: Conduct a comprehensive audit of current AI governance practices, identify responsive requirements based on AI risks and regulatory obligations, and define stakeholder roles and success metrics for governance effectiveness. Integrate ethical considerations and ensure AI initiatives align with organizational values and ethical principles.

Phase 2 - Infrastructure Setup: Deploy automated continuous monitoring systems for AI oversight, integrate data sources across AI development platforms, and establish alert mechanisms for governance escalation and stakeholder notification.

Phase 3 - Policy Configuration: Configure responsive rules for automatic governance adjustments, test escalation procedures with stakeholder teams, and validate notification systems for different risk scenarios and compliance requirements, including those for high-risk AI systems.

Phase 4 - Pilot and Validation: Run a controlled pilot program with select AI models to test responsiveness, gather stakeholder feedback on governance effectiveness, and refine response mechanisms based on real-world performance data and ethical guidelines.

Comparison: Centralized vs Federated Responsive Governance Structures

| Feature | Centralized Governance | Federated Governance |
|---|---|---|
| Decision Authority | Single governance team controls all AI policies | Distributed teams with local decision-making authority |
| Response Time | Faster for organization-wide policies | Faster for business-specific requirements |
| Resource Requirements | Lower coordination overhead | Higher coordination but specialized expertise |
| Scalability | Limited by central team capacity | Scales with organizational structure |

Centralized models work effectively for smaller organizations or those with similar AI applications across business units, while federated approaches better serve large enterprises with diverse AI portfolios requiring specialized governance expertise.

Successfully implementing responsive governance requires addressing common organizational and technical challenges.

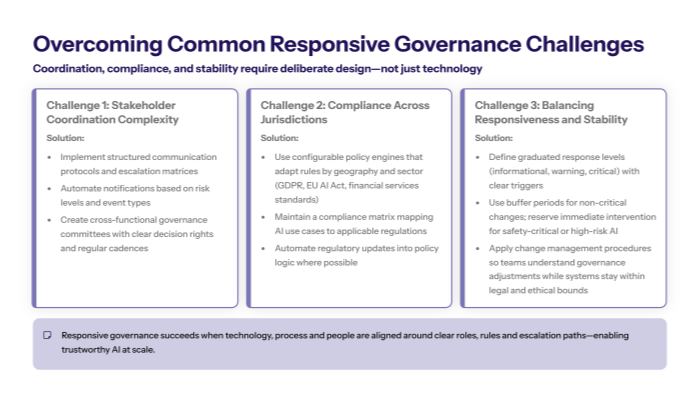

Common Challenges and Solutions in AI Governance

Organizations implementing responsive governance systems frequently encounter coordination complexities, regulatory compliance challenges, and stability concerns that can undermine governance effectiveness if not properly addressed.

Challenge 1: Stakeholder Coordination Complexity in AI Processes

Solution: Implement structured communication protocols with defined escalation matrices and automated stakeholder notifications based on risk levels and governance requirements. Establish cross-functional governance committees with clear decision-making authority and regular coordination meetings.

These protocols ensure that internal and external stakeholders receive timely information about governance decisions while maintaining clear accountability for AI governance outcomes across different organizational functions.
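An escalation matrix of this kind can be kept as a simple lookup from risk level to the roles to notify and the expected response time. The sketch below is one possible shape; the role names, the three risk levels, and the response windows are assumptions for illustration only.

```python
# Hypothetical escalation matrix: who is notified, and how quickly they must respond.
ESCALATION_MATRIX = {
    "low": {
        "notify": ["model_owner"],
        "respond_within_hours": 72,
    },
    "medium": {
        "notify": ["model_owner", "governance_committee"],
        "respond_within_hours": 24,
    },
    "high": {
        "notify": ["model_owner", "governance_committee", "legal", "executive_sponsor"],
        "respond_within_hours": 4,
    },
}


def build_notifications(incident):
    """Expand one incident into one notification per role in the matrix."""
    entry = ESCALATION_MATRIX[incident["risk_level"]]
    return [
        {
            "to": role,
            "incident_id": incident["id"],
            "respond_within_hours": entry["respond_within_hours"],
        }
        for role in entry["notify"]
    ]


incident = {"id": "INC-001", "risk_level": "high", "summary": "Bias threshold exceeded"}
for notification in build_notifications(incident):
    print(notification)
```

An unknown risk level raises a `KeyError` here, which is deliberate in this sketch: an incident that cannot be classified should fail loudly instead of being silently dropped.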

Challenge 2: Regulatory Compliance Across Jurisdictions and Data Protection Laws

Solution: Deploy configurable policy engines that automatically adjust compliance requirements based on geographic deployment and applicable regulations, such as GDPR, the EU AI Act, and sector-specific standards for financial services.

Maintain compliance matrices that map AI applications to relevant regulatory frameworks and implement automated regulatory update mechanisms that adjust governance policies when new requirements emerge. This reduces legal risks and ensures adherence to applicable data protection laws.
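A compliance matrix can be generated from application metadata instead of being maintained by hand. The sketch below maps each application to a set of frameworks based on deployment region, sector, and whether personal data is processed. The trigger conditions are deliberately simplified assumptions for illustration (real applicability depends on legal analysis), and the application names and metadata keys are hypothetical.

```python
def applicable_frameworks(app):
    """Return the frameworks that apply to one application (simplified rules)."""
    frameworks = set()
    if "EU" in app["regions"]:
        frameworks.add("EU AI Act")
        if app.get("personal_data"):
            frameworks.add("GDPR")
    if "US" in app["regions"] and app.get("sector") == "financial_services":
        frameworks.add("SR 11-7")
    return sorted(frameworks)


def compliance_matrix(apps):
    """Map each application name to its applicable frameworks."""
    return {app["name"]: applicable_frameworks(app) for app in apps}


# Hypothetical AI portfolio.
portfolio = [
    {"name": "credit-scoring", "regions": ["EU", "US"],
     "sector": "financial_services", "personal_data": True},
    {"name": "warehouse-routing", "regions": ["US"],
     "sector": "logistics", "personal_data": False},
]

for name, frameworks in compliance_matrix(portfolio).items():
    print(name, frameworks)
```

When a new requirement emerges, adding one condition to `applicable_frameworks` and regenerating the matrix shows which applications are newly in scope.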

Challenge 3: Balancing Responsiveness with Stability in AI Deployment

Solution: Establish graduated response protocols with buffer periods for non-critical adjustments and immediate action triggers for high risk AI applications or safety concerns.

Implement change management procedures that notify stakeholders of governance adjustments while ensuring that AI systems continue operating within legal and ethical boundaries during transitions.
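A graduated response protocol can be expressed as a severity-to-buffer lookup: critical changes take effect immediately, while less urgent ones are scheduled after a notice period. In the sketch below the severity names and the buffer lengths (0, 2, and 14 days) are illustrative assumptions, as is the `schedule_adjustment` function.

```python
from datetime import datetime, timedelta

# Hypothetical buffer periods before a governance adjustment takes effect.
BUFFER_PERIODS = {
    "critical": timedelta(0),        # safety or high-risk issue: act immediately
    "major": timedelta(days=2),
    "minor": timedelta(days=14),
}


def schedule_adjustment(change_id, severity, raised_at):
    """Return when a policy change takes effect and whether it is immediate."""
    buffer = BUFFER_PERIODS[severity]
    return {
        "change_id": change_id,
        "severity": severity,
        "immediate": buffer == timedelta(0),
        "effective_at": raised_at + buffer,
        "notify_stakeholders": True,  # every adjustment is announced, whatever its severity
    }


raised = datetime(2024, 3, 1, 9, 0)
for severity in ("critical", "major", "minor"):
    plan = schedule_adjustment(f"CHG-{severity}", severity, raised)
    print(plan["severity"], plan["effective_at"].isoformat(), plan["immediate"])
```

The buffer gives teams time to prepare for non-critical changes, while the zero-length buffer preserves an immediate path for safety concerns.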

With these implementation strategies and challenge solutions, organizations can establish effective responsive governance systems.

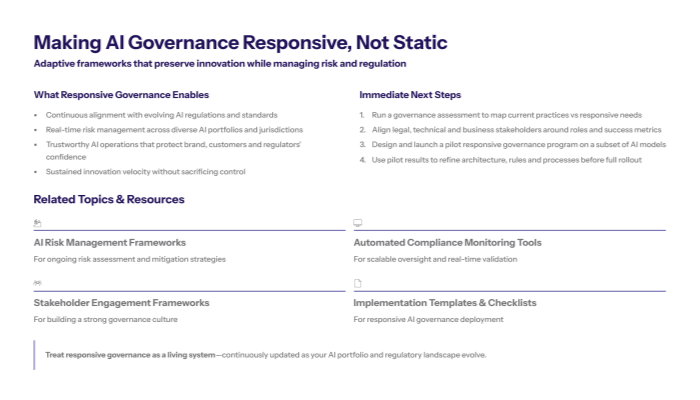

Conclusion and Next Steps in AI Governance Frameworks

Responsive governance systems enable organizations to maintain AI innovation velocity while ensuring adaptive risk management and regulatory compliance across the rapidly evolving AI landscape. These systems provide the foundation for trustworthy AI operations that can adapt to changing requirements without compromising effectiveness or stakeholder trust.

To get started:

Conduct a governance assessment to evaluate current AI governance practices and identify responsive requirements based on your AI portfolio and regulatory obligations.

Align stakeholders across legal, technical, and business teams to establish governance roles, responsibilities, and success metrics for responsive implementation.

Plan a pilot deployment with select AI models to validate responsive governance capabilities before enterprise-wide rollout, then build in continuous improvement.

Related Topics: Consider exploring AI risk management frameworks for comprehensive risk assessment, automated compliance monitoring for regulatory adherence, and stakeholder engagement strategies for expanded governance capabilities across your AI initiatives.

Additional Resources for Responsible AI Governance

Useful supporting materials include implementation templates for responsive governance deployment, compliance checklists for regulatory framework alignment, and stakeholder communication frameworks that support adaptive governance operations. These reference materials give governance teams and technical implementers practical tools for establishing responsive AI governance systems that uphold responsible AI practices while maintaining innovation capabilities.