Essential GPT OSS 120B Hardware Requirements for Optimal Performance

OpenAI's GPT OSS 120B hardware requirements are critical to understand for anyone looking to run this powerful open-source language model efficiently. GPT OSS offers state-of-the-art chain of thought reasoning and tool use capabilities, built on a sophisticated mixture of experts architecture. Designed to be compatible with both data center GPUs and high-end consumer cards, this model can be deployed locally or on multi GPU setups ideal for scaling.

By leveraging the transformers library and tools like transformers serve CLI command, developers can run GPT OSS models with more control, integrating other tools and customizing prompts using python code and chat templates. Whether you want to automatically download model weights or fine-tune the smaller model on consumer hardware, understanding these hardware requirements and setup steps is essential for optimal performance.

Key Takeaways

-

Mixture of Experts Architecture and Hardware Compatibility

The GPT OSS 120B model utilizes a mixture of experts architecture that activates only a subset of parameters per input token, making it efficient yet demanding in terms of hardware. It requires compatible hardware such as data center GPUs (e.g., NVIDIA H100) or powerful consumer cards from later architectures to run smoothly. -

Local Deployment and Multi GPU Setup Ideal for Performance

Running GPT OSS 120B locally provides full control and privacy, with tools like LM Studio simplifying installation and usage. A multi GPU setup is ideal for handling the model's high memory consumption and long document processing capabilities, although single powerful GPUs with sufficient VRAM are also supported. -

Integration with Transformers Library and Developer Tools

Developers can use the transformers library to run GPT OSS models, employing the transformers serve CLI command and built-in chat templates to structure prompts and responses. Installing dependencies such as openai harmony and using python code allows for seamless interaction, enabling GPT OSS to respond as a helpful assistant while integrating other tools for enhanced functionality.

Introduction to GPT OSS Models

OpenAI's GPT OSS models mark a significant milestone as the company's first fully open-source language models since GPT-2. These include the powerful gpt oss 120b and the more accessible openai gpt oss 20b models. Designed to empower developers and researchers, these models enable building advanced AI applications without the constraints of API fees or usage restrictions.

The gpt oss models are engineered for versatility and high performance, making them suitable for diverse AI tasks such as data analysis, text generation, and complex reasoning. OpenAI has tailored these models to support advanced features like chain-of-thought reasoning and tool use, enhancing their practical utility.

Licensed under the Apache 2.0 license, the models offer freedom to use, modify, fine-tune, and commercialize, fostering innovation and customization in AI development.

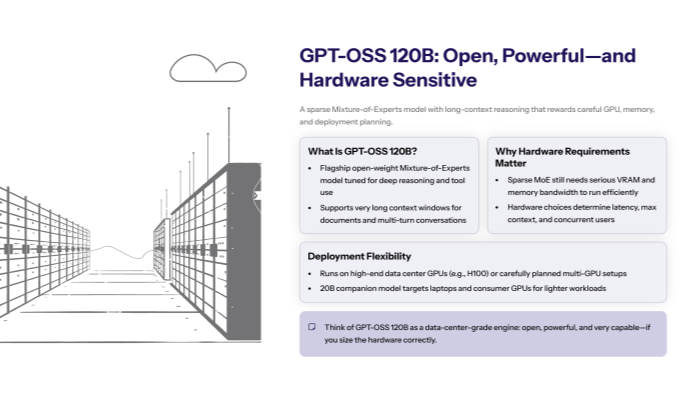

GPT OSS 120B Overview

The gpt oss 120b model is a flagship large language model featuring a sophisticated Mixture-of-Experts (MoE) architecture, enabling it to deliver high computational efficiency while maintaining exceptional reasoning capabilities.

This large model supports extremely long context windows, allowing it to process long documents and multi-turn conversations with ease. Fine-tuned on a single H100 data center GPU node, the model excels in high-performance applications including advanced text generation, data analysis, and complex problem-solving.

Complementing it is the smaller model, openai gpt oss 20b, designed to be fine-tuned and run on consumer hardware such as laptops and desktops, making it accessible for a broader range of users.

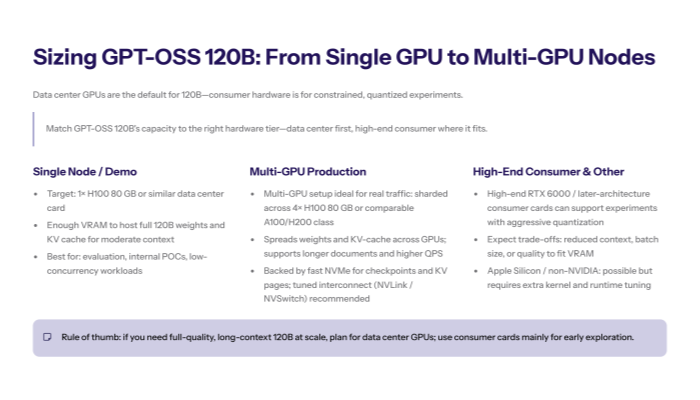

Hardware Requirements

Running the gpt oss 120b model demands compatible hardware with substantial computational power and memory. Recommended setups include data center GPUs like the NVIDIA H100 or high-end consumer GPUs such as the RTX 6000 series.

A multi GPU setup is ideal for optimal performance and scalability, although the model can operate on a single powerful GPU with sufficient VRAM. Specifically, the memory consumption for smooth operation is around 80 GB of RAM, ensuring efficient handling of large input tokens and completion tokens during inference.

While Apple Silicon and other specialized hardware can support local deployment, additional configuration and optimization, such as installing triton kernels, may be necessary to maximize performance.

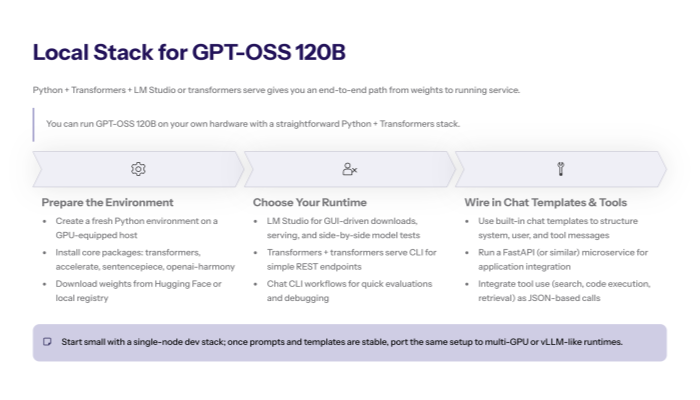

Local Deployment

Deploying the gpt oss 120b model locally on your own hardware offers unparalleled control, privacy, and customization. This approach is highly suitable for users prioritizing data security or specialized setups.

Tools like LM Studio simplify local deployment by managing dependencies and providing user-friendly interfaces. To set up the environment, installing the Transformers library via install transformers commands is essential, alongside downloading the model weights from repositories such as Hugging Face.

A fresh Python environment is recommended for compatibility, with packages like openai-harmony installed using pip install openai-harmony to facilitate interaction with the model.

Running the Model

The gpt oss 120b model can be executed using the Transformers library, leveraging the transformers serve CLI command for straightforward server deployment. The built-in chat template supports structuring messages, tool calls, and managing the system prompt efficiently.

For more advanced use cases, integrating Transformers serve with tools like Cursor enables extended functionality. The model's reasoning and tool-use capabilities empower applications across domains such as data analysis, automated content creation, and interactive AI assistants.

Developers benefit from more control by utilizing the chat CLI Transformers and libraries that help construct prompts, prepare prompts, and parse responses in the harmony maps format, which enhances multi-turn dialogue management.

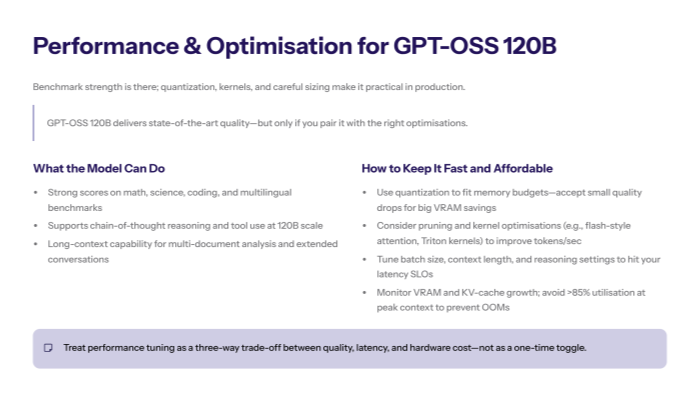

Performance Benchmarks

The gpt oss 120b model consistently outperforms on standard benchmarks covering mathematics, scientific reasoning, coding tasks, and multilingual understanding. Its advanced architecture supports chain of thought reasoning and tool use, often matching or surpassing previously state-of-the-art closed-source models.

Performance optimization techniques such as quantization and pruning further enhance efficiency without compromising accuracy, enabling practical deployment even on resource-intensive tasks.

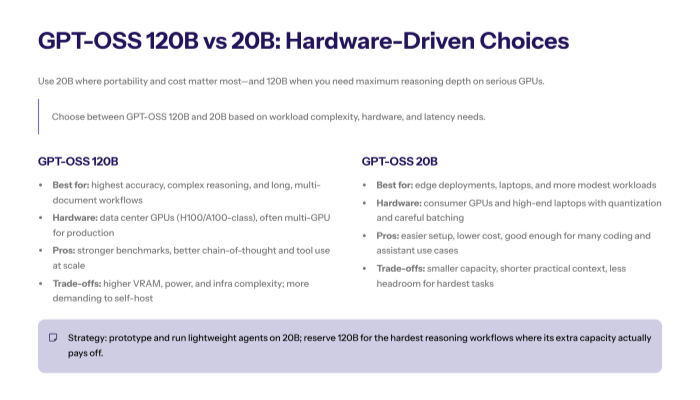

Model Comparison

Comparing the gpt oss 120b and the openai gpt oss 20b models reveals a tradeoff between computational requirements and capability. The large model excels in accuracy, complex reasoning, and handling extensive outputs, but demands higher hardware resources.

Conversely, the smaller model offers accessibility on consumer gpus and laptops, with lower memory needs and easier setup, making it ideal for less resource-intensive applications.

Both models are available on Hugging Face with comprehensive model weights and documentation, allowing users to load and fine-tune them according to their project needs.

Conclusion

The gpt oss 120b model represents a powerful, flexible, and open-source solution for advanced AI tasks, including data analysis, text generation, and complex reasoning. Its requirements for data center gpus and significant memory reflect its high-performance capabilities.

Local deployment on own hardware using tools like LM Studio provides full control and privacy, while the model's excellence on performance benchmarks makes it ideal for demanding applications.

With the ability to handle long documents, advanced reasoning, and tool integrations, the gpt oss 120b is a top-tier choice for developers seeking state-of-the-art open-source AI models.