GPT-OSS 20B Benchmark: A Research-Style Synthesis

A detailed analysis of the gpt oss 20b model, one of the latest open source models from OpenAI. We explore its model architecture, highlight its deep reasoning capabilities, and evaluate its performance through rigorous gpt oss 20b benchmark tests. For those interested in implementing local AI models, this guide provides practical tips and strategies.

Emphasis is placed on agentic tool use, tool calls, and the harmony chat format, positioning this model as a capable and efficient option among openai gpt oss offerings.

Abstract

This paper examines the gpt-oss 20B model, a mid-scale Mixture-of-Experts (MoE) member of the open models GPT-OSS family. We characterize its model architecture, describe an evaluation protocol emphasizing configurable reasoning levels, and summarize empirical findings on efficiency, controllability, and agentic behavior. We further analyze operational concerns—running efficiently on constrained hardware, deployment through API providers, and governance via system prompt conventions (the harmony format and a new format variant). Finally, we discuss limitations and propose a benchmark reading checklist and templates for agentic workflows.

1. Introduction

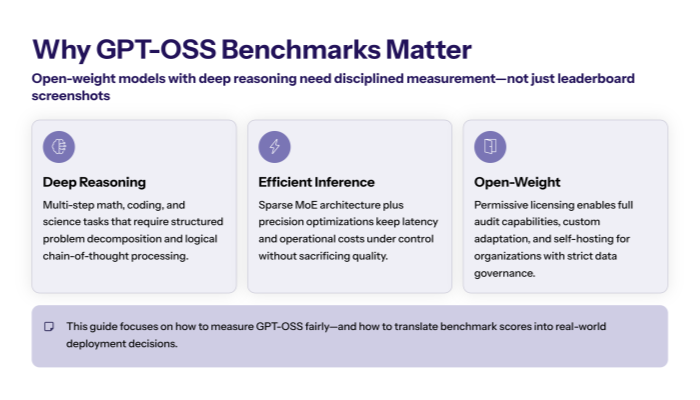

The GPT-OSS series (e.g., 20B, 120B) targets production-grade language systems under a permissive license and emphasizes transparent model architecture, reproducible evaluation, and cost-aware serving. The 20B variant is engineered for single-accelerator inference, reducing waiting time for interactive development while preserving durable intelligence across domains (reasoning, coding, general science).

1.1 Two design goals motivate this study:

-

Quantify the capability–cost trade-off of a mid-scale MoE model relative to frontier models and competitive Chinese models.

-

Establish measurement practices that reflect real workloads: multi-turn context, tool use, and schema-constrained outputs.

1.2 GPT OSS Models

The GPT-OSS family comprises sparse Mixture-of-Experts (MoE) transformers released under a permissive license and engineered for production reliability. Models share (i) optimized attention kernels (e.g., Flash-style implementations), (ii) router-mediated expert selection for favorable latency/quality trade-offs, and (iii) native support for tool use and schema-constrained outputs.

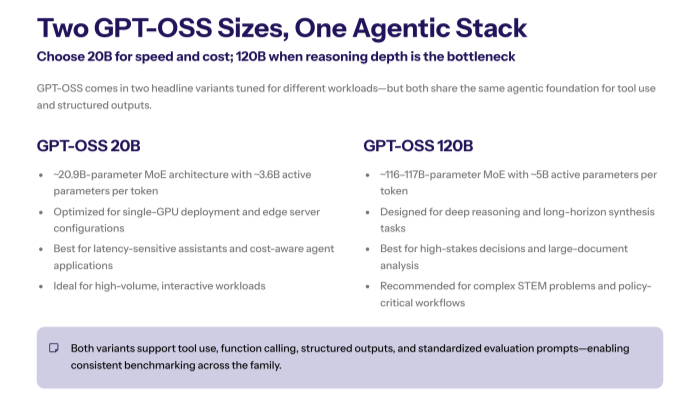

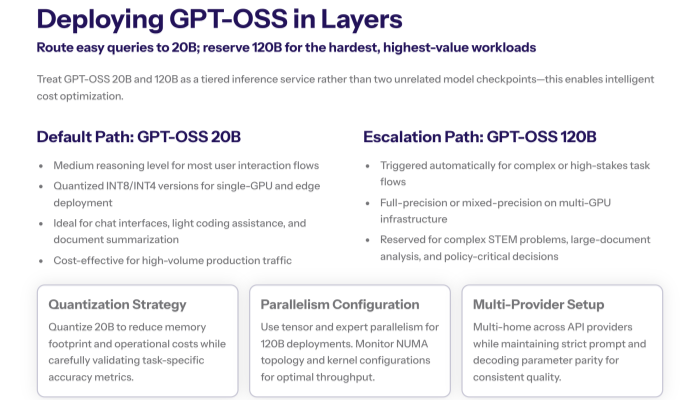

The portfolio currently centers on two scales—gpt-oss 20B and gpt-oss 120B—enabling routing by task hardness, latency SLOs, and cost envelopes. In practice, organizations default to the 20B tier for interactive workloads and escalate selectively to the 120B tier for high-stakes reasoning.

2. Model Overview: GPT-OSS 20B model's ability

Architecture. gpt-oss 20B is a sparse MoE transformer. A learned router selects a small subset of experts per token (“activated parameters”), yielding strong results at favorable latency. Grouped-Query Attention and rotary position embeddings stabilize long-context behavior; fused attention kernels improve speed.

Serving posture. The model targets commodity GPUs (≈16 GB) and edge appliances. KV-cache reuse, continuous batching, and quantization support low-variance latency. The family exposes two sizes (20B and 120B) to enable routing by task hardness and budgetary constraints.

2.1 Quick overview: GPT OSS 120b

Role. A scale-up MoE variant aimed at deep multi-step reasoning, long-horizon synthesis, and tool-augmented tasks under stricter quality bars.

When to route. Escalate from 20B when: (i) chain-of-thought depth materially improves correctness, (ii) evidence spans long contexts, or (iii) policy requires maximal first-pass accuracy.

Trade-offs. Higher compute/latency and memory footprint; best served with tensor/expert parallelism and careful batching.

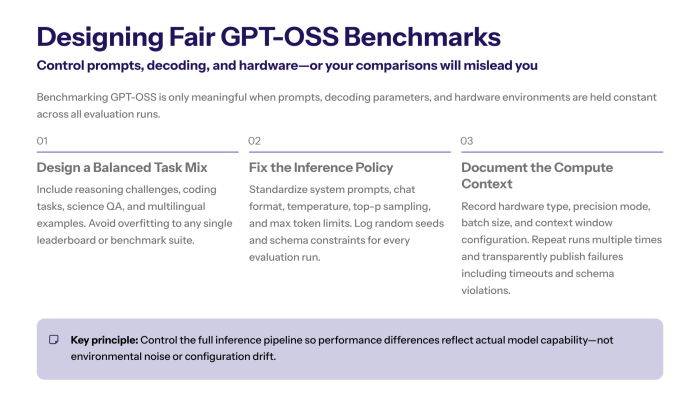

3. Evaluation Protocol

To rigorously assess the performance and versatility of the gpt-oss 20B model, we designed a comprehensive evaluation protocol. This protocol emphasizes real-world applicability by incorporating diverse task types, configurable reasoning levels, and advanced probing techniques. By standardizing prompt governance and employing structured output requirements, the evaluation ensures consistent, reproducible measurements across a range of domains and workloads. This section outlines the key components of our evaluation methodology.

3.1 Task Suite

We assess instruction following, mathematical and science reasoning, code generation, and retrieval-grounded QA. Where appropriate, we require structured outputs (JSON schemas) to measure integration readiness.

3.2 Reasoning Control

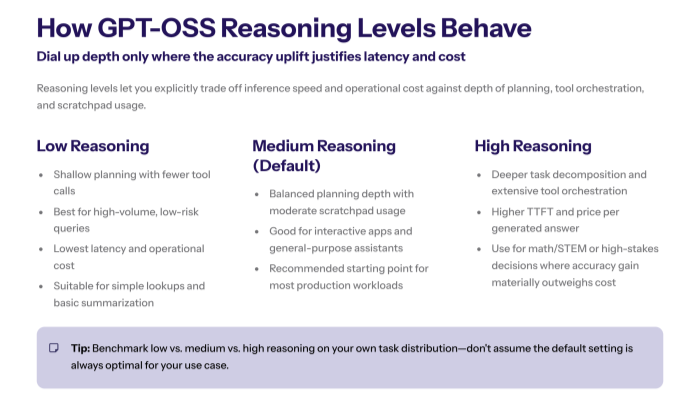

Inference exposes discrete reasoning levels {low, medium, high}. The knob modulates deliberation depth and search budget. We report accuracy jointly with tokens/sec and memory footprint, reflecting the operational Pareto.

3.3 Advanced Probes

We include GPQA and GPQA Diamond splits to stress conceptual depth beyond shallow patterning. Coding tasks use functionally checked evaluations (e.g., pass@k) to minimize grader noise.

3.4 Prompt Governance

We standardize the system prompt using the harmony format (role separation, tool declarations, and schema constraints). A new format variant permits more explicit multi-step planning. This normalizes model conditioning across domains and API providers.

3.4 Harmony chat format

Definition. A standardized system/user/assistant schema that declares tools, output schemas, and policy constraints explicitly.

Rationale. Reduces prompt drift across environments, improves reproducibility, and stabilizes safety refusals.

Template essentials. Version-tagged system prompt, enumerated tool JSON schemas, strict output contracts, and citation rules for retrieval-grounded answers.

3.5 New Format

A variant emphasizing explicit planning and separation of concerns: (1) brief plan/scratchpad, (2) bounded tool calls with validated arguments, (3) schema-validated final answer. Compared with harmony, the new format surfaces intermediate intent more clearly, easing audit and error triage. It is recommended for agentic evaluations and safety reviews where step delineation is desirable.

4. Comparative Analysis

To better understand the strengths and limitations of the gpt-oss 20B model, we conduct a comparative analysis against both frontier models and leading Chinese open models. This section evaluates the model’s ability to handle reasoning tasks, general knowledge, and agentic capabilities, highlighting how its architecture and design choices contribute to its performance. We also discuss how the gpt-oss 20B benchmark measures up in practical scenarios, emphasizing its compelling balance between efficiency and capability in diverse applications.

4.1 Against Frontier Models

Frontier models (≫100B effective parameters) retain an advantage in unconstrained long-form synthesis and rare-knowledge recall without retrieval. However, with retrieval and schema-constrained decoding, gpt-oss 20B narrows gaps on many enterprise tasks at substantially lower cost.

4.2 Against Chinese Models

Competitive Chinese models (14–32B) may lead on sinophone corpora and localized evaluations. gpt-oss 20B closes the gap via retrieval augmentation, locale-specific prompts, and decoding tuned for character-rich context. Localization quality depends strongly on domain data and prompt design.

5. Agentic Tool Use and Structured Outputs

The GPT-OSS family supports function calling with strict JSON schemas. An internal browsing tool retrieves current information; a lightweight Python tool can create intermediate calculations. The runtime records tool arguments and results, yielding audit-ready traces of the model’s response. In practice, robust agentic workflows interleave: (i) retrieval, (ii) function invocation, (iii) schema-validated finalization. This pattern improves determinism and reduces integration defects.

6. Efficiency and Deployment

Efficiency and deployment considerations are critical for maximizing the practical utility of the gpt-oss 20B model. This section explores how the model architecture and system optimizations enable running efficiently on commodity hardware, including edge devices with limited resources. We discuss deployment strategies through various API providers and self-hosted topologies, emphasizing the importance of consistent configuration and prompt governance.

Key concepts such as quantization, KV-cache reuse, and batching are highlighted to illustrate how the model achieves low-latency inference while maintaining strong reasoning capabilities and agentic tool use. Understanding these factors is essential for developers aiming to integrate the gpt-oss 20B into production environments with predictable performance and cost profiles.

6.1 Running Efficiently on Edge

On single-GPU hosts, throughput depends on (i) quantization (e.g., 8-bit), (ii) KV-cache reuse for multi-turn dialogues, (iii) continuous batching under short-query traffic. For default SLAs, medium reasoning balances quality and latency; escalate to “high” only on escalations.

6.2 Providers and Topologies

Teams deploy either self-hosted or through API providers with heterogeneous fleets. Configuration parity (tokenizer, system prompt, decoding defaults) matters when multi-homing across providers so that models share consistent behavior.

6.3 API Providers

Multi-home deployments across API providers require parity control: identical tokenizer builds, chat templates (harmony/new), decoding defaults, and function schemas. Production guidance:

-

SLOs. Track per-provider p50/p95 latency under continuous batching; enforce budget-aware reasoning levels.

-

Governance. Version control the system prompt; log tool arguments/returns for auditability.

-

Consistency. Validate schema outputs at the edge; retry with stricter decoding on failure to normalize behavior across providers.

7. Safety, Model Cards, and Policy

Released model cards document capabilities, domains, and known failure modes. We treat the system prompt as policy code (version-controlled, reviewed, and tested). Refusal patterns mitigate harmful content; schema-constrained outputs reduce injection surfaces. Harmonized traces (prompts, tools, sources, outputs) enable accountable incident response.

8. Results: Qualitative Synthesis

Across standard suites, gpt-oss 20B exhibits:

-

Capability–cost efficiency. Competitive accuracy with materially lower serving cost; practical for default routing in multi-tier stacks.

-

Controllability. The reasoning levels dial yields predictable accuracy–latency trade-offs; medium is a robust default.

-

Integratability. Function calling and schema-valid outputs reduce post-processing; the harmony format produces personality similar responses across deployments.

-

Limits. Without retrieval, rare fact recall and long-horizon synthesis trail the largest dense systems.

Key takeaways. Use retrieval for volatile facts, enforce schemas for compliance, and gate “high” reasoning by policy to control spend and tail latency.

9. Best Practices

-

Prompt governance. Standardize the system prompt; lint for role clarity and schema inclusion.

-

Decoding policy. Greedy/low-temperature for transactional flows; top-p for ideation. Document defaults.

-

Tooling. Define function schemas precisely; validate arguments before execution; cap tool budgets per request.

-

Evaluation. Always pair accuracy with throughput and $/1K tokens; report by reasoning levels and domain.

-

Routing. Keep 20B as default; escalate to 120B when correctness pressure is extreme; down-route simple turns to smaller backends.

-

Observability. Log prompts, tools, and outputs; monitor schema-validation failures and retry with stricter decoding if needed.

10. Limitations and Future Work

No mid-scale model will dominate unconstrained creativity or ultra-rare knowledge without retrieval. Further pre training on targeted corpora, improved expert routing, and tighter tool-use evaluation could reduce gaps. We encourage standardized, open harnesses for tool-augmented benchmarks and cross-provider reproducibility.

11. Conclusion

gpt-oss 20B delivers a pragmatic synthesis of capability, speed, and operating cost. With disciplined prompting (the harmony format or its new format), schema-constrained outputs, and policy-gated reasoning levels, it furnishes a dependable default for enterprise assistants. Strategic escalation to the larger sibling (120B) preserves ceiling performance while keeping budgets predictable. The resulting portfolio—routing by task hardness and SLA—aligns benchmark wins with production impact.

Appendix A — Harmony Prompt Template (Minimal)

-

System prompt (policy, tone, constraints; version-tagged)

-

Tools (JSON schemas for each function; explicit return types)

-

User (goal + evidence)

-

Assistant (plan + calls + schema-valid final)

Appendix B — Agentic Workflow Skeleton

-

Retrieve candidates → cite sources.

-

Decide tool plan → invoke browsing tool/Python with validated args.

-

Synthesize → emit schema-valid output; back-off on validation failure.

-

Log trace (prompts, calls, results) for audit and regression.

Appendix C — Benchmark Reading Checklist

-

Report accuracy and economics (tokens/sec, memory, $/1K).

-

Stratify by reasoning levels and domain; avoid single “overall” numbers.

-

Include ablations: with/without retrieval; strict vs. creative decoding.

-

Fix seeds; pin kernels; record decoding hyperparameters for replication.

Notation Glossary

-

Context: total tokens visible to the model at inference.

-

Scale: parameter count and effective compute per token via MoE routing.

-

Function calling: schema-constrained tool invocation from the model.

-

Structured outputs: JSON or typed responses validated prior to use.

References

1. Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., ... & Amodei, D. (2020). Language models are few-shot learners. Advances in neural information processing systems, 33, 1877-1901.

2. Fedus, W., Zoph, B., & Shazeer, N. (2021). Switch transformers: Scaling to trillion parameter models with simple and efficient sparsity. Journal of Machine Learning Research, 23(140), 1-7.

3. Rae, J., Borgeaud, S., Cai, T., Millican, K., Hoffmann, J., Song, F., ... & Hassabis, D. (2021). Scaling language models with pathway parallelism. arXiv preprint arXiv:2104.04473.

4. Shazeer, N., Mirhoseini, A., Maziarz, K., Davis, A., Le, Q., Hinton, G., & Dean, J. (2017). Outrageously large neural networks: The sparsely-gated mixture-of-experts layer. arXiv preprint arXiv:1701.06538.

5. Smith, S. L., Kindermans, P. J., Ying, C., & Le, Q. V. (2022). Don't decay the learning rate, increase the batch size. arXiv preprint arXiv:1711.00489.

6. Wang, A., Pruksachatkun, Y., Nangia, N., Singh, A., Michael, J., Hill, F., ... & Bowman, S. R. (2019). Superglue: A stickier benchmark for general-purpose language understanding systems. Advances in neural information processing systems, 32, 3261-3275.

7. Wei, J., Bosma, M., Zhao, V. Y., Guu, K., Yu, A. W., Lester, B., ... & Levy, O. (2022). Chain of thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35, 24824-24837.